Is it possible to design a processor with very high performance and low power consumption? To answer that, embedded illuminati are now focusing on designs tuned to specific workloads – creating a tailored processor that does a few things very efficiently, with nothing extra.

One application benefiting from this workload-focused approach is embedded vision, which is about more than just cameras transmitting images. It means making intelligent decisions about the information in a frame: remotely sensing the pulse and respiration of a fitness enthusiast working out in front of a game console, detecting a pedestrian crossing into traffic on a crowded street, determining the speed, location, and trajectory of a pitched ball, and other similarly complex scenarios. Such tasks call for processors that can handle three demands.

High bandwidth – 1080p video at 60 frames per second presents roughly 3 Gbps of raw pixel data. Rates climb further with larger-format image sensors, and the objective is not transmission but pre-processing the images, looking for specific details at different scales. Stopping the incoming pipeline to inspect frames isn't an option; data not only has to be received, but processed in real time.
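
The 3 Gbps figure follows from simple arithmetic. The Python sketch below assumes uncompressed 8-bit RGB (24 bits per pixel) and ignores blanking intervals and link overhead; the function names are illustrative, and 4K UHD (3840 × 2160) is used only as an example of a larger sensor format.

```python
def raw_video_bitrate_bps(width: int, height: int, fps: float, bits_per_pixel: int = 24) -> float:
    """Uncompressed pixel data rate in bits/s, ignoring blanking intervals and link overhead."""
    return float(width * height * bits_per_pixel) * fps


def frame_time_budget_ms(fps: float) -> float:
    """Time available to finish processing one frame before the next one arrives."""
    return 1000.0 / fps


if __name__ == "__main__":
    # 24 bits/pixel assumes 8-bit RGB; 4K UHD stands in for a larger sensor format.
    for name, (w, h) in {"1080p": (1920, 1080), "4K UHD": (3840, 2160)}.items():
        gbps = raw_video_bitrate_bps(w, h, 60) / 1e9
        print(f"{name} @ 60 fps: {gbps:.2f} Gbps, {frame_time_budget_ms(60):.1f} ms per frame")
```

Run as a script, it reports about 2.99 Gbps for 1080p60, in line with the roughly 3 Gbps quoted above, and about 11.94 Gbps for 4K UHD at the same frame rate. Either way, each frame must be fully processed in about 16.7 ms before the next one arrives.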

Intense processing – Vision applications commonly rely on sophisticated algorithms built on advanced image processing techniques. Much of the emphasis is on extraction and tracking: determining the shape of an object, then following its path through a changing background from frame to frame, with calibration and time correlation enabling derived analytics such as speed and trajectory.
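
To make the extract-then-track idea concrete, here is a deliberately simplified Python sketch. It assumes a high-contrast object against a darker background, "extracts" the object with a fixed brightness threshold, and tracks only its centroid; production vision pipelines use far more robust segmentation and tracking that can cope with a changing background. The names `centroid`, `track`, and `make_frame` are hypothetical.

```python
from typing import List, Optional, Tuple

Frame = List[List[int]]  # grayscale frame, row-major, values 0-255


def centroid(frame: Frame, threshold: int = 128) -> Optional[Tuple[float, float]]:
    """Treat pixels brighter than `threshold` as the object; return its (x, y) centroid."""
    sum_x = sum_y = count = 0
    for y, row in enumerate(frame):
        for x, value in enumerate(row):
            if value > threshold:
                sum_x += x
                sum_y += y
                count += 1
    if count == 0:
        return None  # object not found in this frame
    return sum_x / count, sum_y / count


def track(frames: List[Frame], fps: float) -> List[Tuple[float, float]]:
    """Follow the centroid from frame to frame; return (vx, vy) in pixels per second."""
    velocities: List[Tuple[float, float]] = []
    prev: Optional[Tuple[float, float]] = None
    for frame in frames:
        cur = centroid(frame)
        if cur is not None and prev is not None:
            velocities.append(((cur[0] - prev[0]) * fps, (cur[1] - prev[1]) * fps))
        prev = cur  # a frame without the object resets the track
    return velocities


def make_frame(x0: int, y0: int, size: int = 8) -> Frame:
    """Synthetic test frame: dark background with a bright 2x2 square at (x0, y0)."""
    frame = [[10] * size for _ in range(size)]
    for y in range(y0, y0 + 2):
        for x in range(x0, x0 + 2):
            frame[y][x] = 255
    return frame


if __name__ == "__main__":
    # Square moves one pixel to the right per frame at 60 fps.
    frames = [make_frame(x, 3) for x in range(4)]
    print(track(frames, fps=60.0))
```

For a 2 × 2 square moving one pixel per frame at 60 fps, `track` reports a velocity of (60.0, 0.0) pixels per second for every step. Turning pixels per second into a physical speed, as in the pitched-ball example, is where calibration comes in.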

Low power – These applications, increasingly embedded in mobile and automotive devices, do not have 35W to play with. The OpenCV library was designed to take advantage of vector instruction sets such as Intel SSE on high-performance desktop processors. OpenCV does run on a 32-bit ARM core with NEON extensions, but the result isn't optimal.

“OpenCV is a starting point for functional implementation, and lets us look at some very cool embedded vision applications,” says Markus Willems, senior product marketing manager for Synopsys. “What we’re trying to do is map those applications to truly embedded devices, and optimize them for performance and power consumption.”

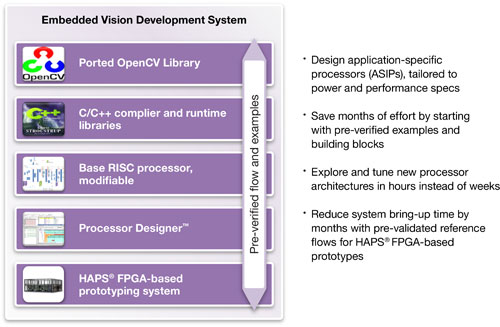

Processor Designer is the Synopsys tool to do just that. Don't mistake this for merely a way to sell ARC processor cores or an FPGA-based prototyping system. Those are part of the mix, but the ARC-based reference designs target what Willems termed the low to mid range of computational complexity. What Synopsys is after is a fully programmable, high-performance, power-efficient core tailored to a vision system; in many applications, one or more such dedicated cores will likely sit alongside some type of general-purpose ARM core.

The flow Willems described harks back to the days of bit-slice multiplier design, but updated for RTL blocks and C programmability. A typical core design effort using Processor Designer looks like this:

- Compile the OpenCV code for the initial RTL design

- Profile the data paths and instructions during execution

- Look for bottlenecks and power-hungry hotspots

- Recode around those with C or hardware-enabled intrinsics

- Recompile both the RTL and application with the optimizations
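
The profile-and-recode loop can be illustrated in software. The Python sketch below is an analogy, not Processor Designer's interface: a hypothetical `OpCounter` tallies each primitive operation a 3 × 3 box-blur kernel executes, standing in for an instruction-level profile. The profile shows one integer divide per output pixel; the recoded variant replaces it with a multiply and shift, `(s * 7282) >> 16`, an illustrative reciprocal constant that equals `s // 9` for every sum a 3 × 3 window of 8-bit pixels can produce.

```python
from collections import Counter
from typing import List

Frame = List[List[int]]  # 8-bit grayscale, row-major


class OpCounter:
    """Hypothetical instruction-level profile: counts each primitive operation executed."""

    def __init__(self) -> None:
        self.counts: Counter = Counter()

    def add(self, a: int, b: int) -> int:
        self.counts["add"] += 1
        return a + b

    def mul(self, a: int, b: int) -> int:
        self.counts["mul"] += 1
        return a * b

    def div(self, a: int, b: int) -> int:
        self.counts["div"] += 1
        return a // b

    def shr(self, a: int, n: int) -> int:
        self.counts["shr"] += 1
        return a >> n


def box_blur(frame: Frame, ops: OpCounter, recoded: bool = False) -> Frame:
    """3x3 box blur of the interior pixels (border pixels are copied unchanged)."""
    h, w = len(frame), len(frame[0])
    out = [row[:] for row in frame]
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            s = 0
            for dy in (-1, 0, 1):
                for dx in (-1, 0, 1):
                    s = ops.add(s, frame[y + dy][x + dx])
            if recoded:
                # floor(s / 9) via multiply-and-shift; exact for 0 <= s <= 9 * 255
                out[y][x] = ops.shr(ops.mul(s, 7282), 16)
            else:
                out[y][x] = ops.div(s, 9)
    return out


if __name__ == "__main__":
    frame = [[(x * 37 + y * 91) % 256 for x in range(16)] for y in range(16)]
    baseline, tuned = OpCounter(), OpCounter()
    assert box_blur(frame, baseline) == box_blur(frame, tuned, recoded=True)
    print("baseline:", dict(baseline.counts))
    print("recoded: ", dict(tuned.counts))
```

Both variants produce identical pixels; only the operation mix changes. Raw operation counts ignore real cycle and energy costs, but the pattern carries over: once the recoded kernel never issues a divide, a tailored core running only that kernel would have no use for a hardware divider.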

Optimizing the instruction set and RTL of a design implements exactly the resources necessary, and minimizes power consumption by omitting unnecessary functional blocks from the core. Processor Designer brings several toolsets together into a single environment to help designers understand the underlying workload in data- and compute-intensive algorithms such as vision, and tune a core appropriately to get the job done.
