The success of modern battery-powered products depends as much on useful operating time between charges as on functionality. FinFET process technologies overtook earlier planar CMOS in part because they significantly reduce leakage power. However, they worsen dynamic power consumption because of their higher pin capacitance. SoC designers must invest considerable effort in sophisticated strategies to reduce dynamic power across expected use cases, and the complexity of these strategies makes early power analysis essential in such advanced SoCs.
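
For reference, the standard first-order model of CMOS switching power shows why higher pin capacitance matters. Here α is the activity factor (the average number of 0→1 transitions per node per clock cycle), C is the switched capacitance, V_DD is the supply voltage and f is the clock frequency. Short-circuit current and leakage are ignored.

```latex
P_{\text{dyn}} = \alpha \, C \, V_{DD}^{2} \, f
```

With α, V_DD and f held fixed, dynamic power grows linearly with C. The activity factor α is the term set by the workload, which is why use-case modeling matters so much for estimation.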

Two challenges in power estimation

Power estimation on a gate-level model, the traditional method of choice, is quite accurate but comes far too late in the design cycle to guide meaningful optimization. Designers need estimates during RTL design. RTL-level estimation is less accurate than gate-level analysis, but it allows much faster iteration on power improvements, earlier in the development cycle.

A second challenge is modeling use cases accurately. Dynamic power management strategies rely on turning off pieces of functionality whenever possible, sometimes at quite a fine granularity. How much this reduces overall power depends heavily on how the application exercises the SoC. You can only assess meaningful options against realistic use cases, such as a video game or an object recognition task running on a video stream. Simulation-based estimation cannot handle workloads of this complexity; they are only practical with emulation-based modeling.
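
To see why the payoff depends on the workload, here is a minimal sketch. It assumes a single block with three power states (active, idle but still powered, and power-gated off), and all power figures and activity fractions are hypothetical placeholders, not measured data. It computes time-weighted average power and the fraction saved by power gating relative to never gating the block.

```python
"""Illustrative only: how the payoff of power gating depends on the workload.

All power figures and activity fractions are hypothetical placeholders.
"""
from dataclasses import dataclass


@dataclass(frozen=True)
class BlockPower:
    active_mw: float  # power while the block is doing useful work
    idle_mw: float    # power while idle but still powered (clock-gated, leaking)
    off_mw: float     # residual power while power-gated off


def average_power_mw(p: BlockPower, busy: float, gateable: float) -> float:
    """Time-weighted average power of one block.

    busy     -- fraction of total time the block is active (0..1)
    gateable -- fraction of its idle time spent in gaps long enough to power-gate (0..1)
    """
    if not (0.0 <= busy <= 1.0 and 0.0 <= gateable <= 1.0):
        raise ValueError("fractions must lie in [0, 1]")
    idle = 1.0 - busy
    return busy * p.active_mw + idle * (gateable * p.off_mw + (1.0 - gateable) * p.idle_mw)


def gating_savings(p: BlockPower, busy: float, gateable: float) -> float:
    """Fraction of power saved versus never power-gating the block."""
    baseline = average_power_mw(p, busy, 0.0)
    return 1.0 - average_power_mw(p, busy, gateable) / baseline


if __name__ == "__main__":
    accel = BlockPower(active_mw=200.0, idle_mw=40.0, off_mw=2.0)  # hypothetical block
    # Two hypothetical use cases exercising the same block differently.
    for name, busy, gateable in [("sustained", 0.9, 0.5), ("bursty", 0.2, 0.9)]:
        print(f"{name}: {average_power_mw(accel, busy, gateable):.2f} mW, "
              f"saves {gating_savings(accel, busy, gateable):.1%}")
```

With these placeholder numbers, the same gating capability saves only about 1% in the sustained case but about 38% in the bursty one. Only a realistic workload reveals which regime a design actually operates in.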

Must the whole circuit be emulated?

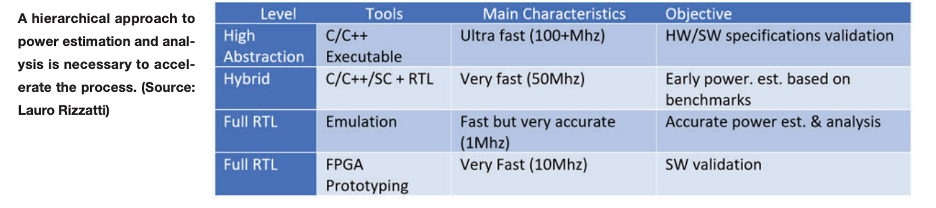

Not at all. It is very practical to run in a hybrid mode, where some parts of the circuit run as C/C++ or Fast models on a host and others run on the emulator, with tightly managed coupling between the two. I see several reasons to use this approach. First, it can be valuable in early modeling, when much of the circuitry may only be available as architectural models.

Second, some phases of bring-up, such as booting Linux, may not be very relevant to power estimation. These can run on the host at near real-time speed, without the detailed visibility that power estimation requires. The critical part of the use case for power estimation begins when the game or object recognition starts running. This is where designers are most likely to find power bugs: places where power spikes above an expected level, or where you expect it to drop but it doesn't. This part of the use case runs on dedicated functionality in the design, such as GPUs, AI accelerators, and related hardware. You can model these in the emulator, gaining fine-grained visibility into signal toggles.
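
As a rough illustration of how toggle visibility turns into power-bug hunting, here is a minimal sketch under hypothetical simplifications: every toggle in a block is charged one average net capacitance, each toggle dissipates C·V²/2, and the designer supplies the expected power ceiling and idle floor. The `Window` record and both function names are invented for this example. It flags the two kinds of power bug described above: spikes above the expected level, and windows where power should drop but doesn't.

```python
"""Sketch: turn per-window toggle counts into dynamic power estimates and
flag candidate power bugs.

Hypothetical simplifications: one average net capacitance for all toggles,
C * V^2 / 2 dissipated per toggle, designer-supplied power envelope.
"""
from dataclasses import dataclass


@dataclass(frozen=True)
class Window:
    index: int          # position of the window in the trace
    toggles: int        # signal transitions counted in this window for one block
    expect_idle: bool   # True if the use case says the block should be quiet here


def window_power_w(toggles: int, avg_net_cap_f: float, vdd_v: float,
                   window_s: float) -> float:
    """Average dynamic power over one window: toggles * (C * V^2 / 2) / window length."""
    return toggles * 0.5 * avg_net_cap_f * vdd_v ** 2 / window_s


def flag_power_bugs(windows, avg_net_cap_f, vdd_v, window_s,
                    ceiling_w, idle_floor_w):
    """Return (index, power_w, reason) for each suspicious window.

    "spike":   power exceeds the expected ceiling.
    "no-drop": the block should be idle, yet power stays above the idle floor.
    """
    findings = []
    for w in windows:
        p = window_power_w(w.toggles, avg_net_cap_f, vdd_v, window_s)
        if p > ceiling_w:
            findings.append((w.index, p, "spike"))
        elif w.expect_idle and p > idle_floor_w:
            findings.append((w.index, p, "no-drop"))
    return findings


if __name__ == "__main__":
    # Hypothetical trace: 1 us windows, 2 fF average net capacitance, 0.75 V supply.
    trace = [
        Window(0, 400_000_000, expect_idle=False),
        Window(1, 1_200_000_000, expect_idle=False),
        Window(2, 200_000_000, expect_idle=True),
        Window(3, 1_000_000, expect_idle=True),
    ]
    for idx, p, reason in flag_power_bugs(trace, 2e-15, 0.75, 1e-6,
                                          ceiling_w=0.5, idle_floor_w=0.01):
        print(f"window {idx}: {p:.3f} W ({reason})")
```

With the placeholder trace, window 1 is reported as a spike and window 2 as a failure to drop. A production flow would weight each net by its own capacitance rather than a single average; the single-average shortcut here only keeps the sketch short.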

Getting more ambitious

A third reason I sometimes see for hybrid modeling is to model very large designs efficiently. If an SoC contains a cluster of cores, you may need to model only one of them in RTL for early analysis and handle the others as software models on the host. This can speed up finding and fixing many power bugs, leaving signoff cleanup to full-circuit emulation.
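
A hybrid partition of this kind can be described very simply. The sketch below is hypothetical: the component names, the two target labels, and the dictionary format are illustrative and do not correspond to any real emulator's configuration. It places one core of a cluster, plus the accelerators where the power-critical workload runs, on the emulator, and leaves the remaining cores on the host.

```python
"""Hypothetical hybrid partition for early power analysis of a core cluster.

Component names and target labels are illustrative only; they are not the
configuration format of any real emulation tool.
"""

EMULATOR = "emulator"  # RTL mapped to the emulator: signal-toggle visibility
HOST = "host"          # C/C++ or fast model on the host: fast, no toggle data


def hybrid_partition(num_cores: int, rtl_cores: int = 1,
                     accelerators: tuple = ("gpu", "ai_accel")) -> dict:
    """Map each component name to EMULATOR or HOST.

    The first `rtl_cores` cores are emulated in RTL; the remaining cores run as
    software models on the host. Accelerators, where the power-critical part of
    the use case runs, stay on the emulator.
    """
    if not 1 <= rtl_cores <= num_cores:
        raise ValueError("rtl_cores must be between 1 and num_cores")
    plan = {f"core{i}": (EMULATOR if i < rtl_cores else HOST)
            for i in range(num_cores)}
    plan.update({name: EMULATOR for name in accelerators})
    return plan


def power_visible(plan: dict) -> list:
    """Names of components whose signal toggles the emulator can observe."""
    return sorted(name for name, target in plan.items() if target == EMULATOR)


if __name__ == "__main__":
    plan = hybrid_partition(8)
    print("emulated:", power_visible(plan))
    print("on host:", sorted(n for n, t in plan.items() if t == HOST))
```

Calling `hybrid_partition(8)` puts `core0`, `gpu` and `ai_accel` on the emulator and `core1` through `core7` on the host. Setting `rtl_cores` equal to `num_cores` corresponds to the full-circuit emulation reserved for signoff.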

This approach becomes especially interesting for emerging chiplet-based designs. In one sense this is simply a scaled-up version of the third reason, although in practice it adds complexity: coherent link interfaces between chiplets, and added latency in those interfaces.

Clearly, the direction of pre-silicon power estimation for modern SoCs points to emulation. Learn more about how Siemens EDA sees this domain.
