Last week Synopsys announced their next step in generative AI (GenAI) with Synopsys.ai Copilot, based on a collaboration with Microsoft. This integrates Azure OpenAI with existing Synopsys.ai GenAI capabilities to extend Copilot concepts to the EDA world. For those of you unfamiliar with Copilot, it is a development by GitHub/Microsoft and OpenAI to aid software developers in writing code.

… GitHub Copilot includes assistive features for programmers, such as the conversion of code comments to runnable code, and autocomplete for chunks of code, repetitive sections of code, and entire methods and/or functions. … GitHub states that Copilot’s features allow programmers to navigate unfamiliar coding frameworks and languages by reducing the amount of time users spend reading documentation. (source Wikipedia)

Microsoft has since significantly expanded the scope of Copilot to help across the capabilities provided by Microsoft 365 (Word, etc.) and has introduced solutions to support sales, service, and security along with assistance for software developers. Copilot is technology with broad reach, so it is not surprising that Synopsys has jumped on the potential to extend that technology to EDA. I should add that regular Copilot already supports RTL (thanks Matt Genovese for the demo!), though in my view only as a proof of concept. Synopsys is aiming for a more robust version. 😊

Why the Continued Emphasis on AI?

OK, so AI is hot, a new technology with a lot of potential applications, but some might suspect it is becoming a solution in search of a problem. In fact, new design challenges abound, and this continued emphasis on AI is an important direction that the design and EDA communities are exploring to help product designers jump ahead.

Trends toward domain-specific architectures and multi-die systems are ways that product teams are overcoming Moore’s Law limitations in performance and power scaling for advanced processes. However, both approaches greatly amplify complexity in design, verification and implementation, yet teams must deliver products today on even more compressed schedules. All this while the engineering workforce across the industry is expected to fall short of staffing needs by tens of thousands of engineers by 2030.

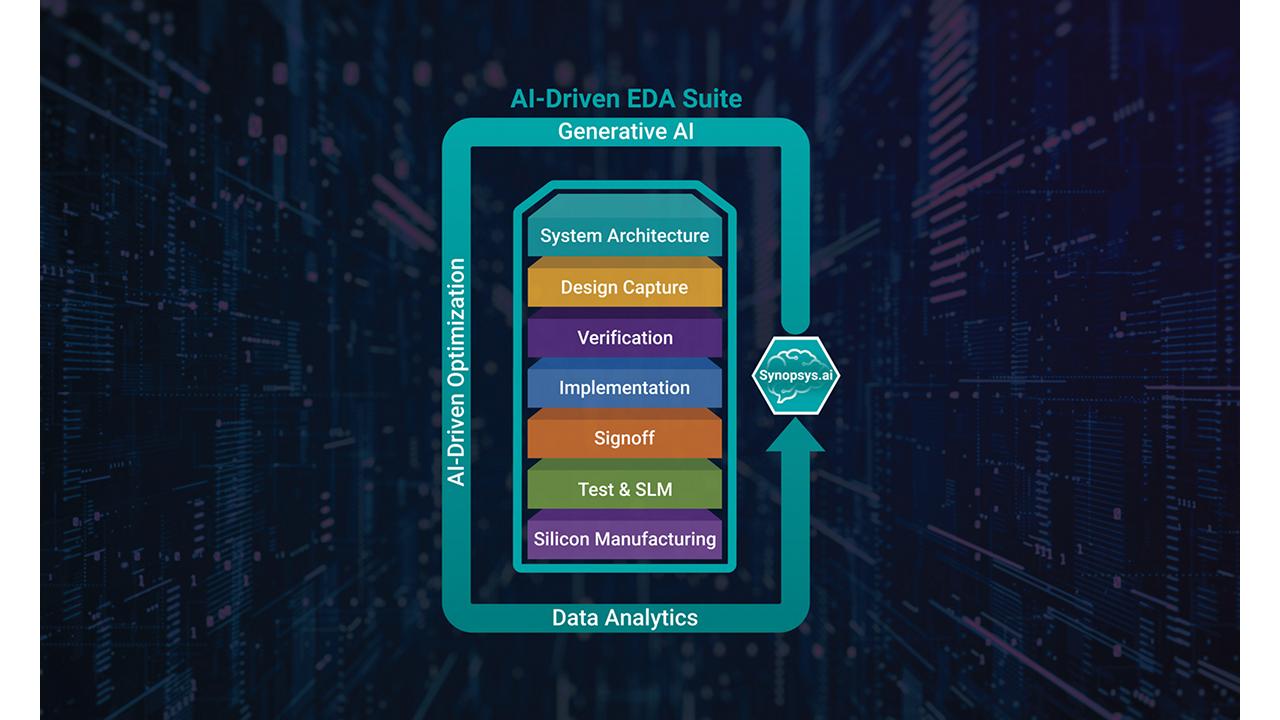

Incremental improvements in tools and methodologies will continue to be important, but clearly we need a turbo-charge to overcome problems at this scale. We need ways for engineers to deliver better results in a shorter time without the need for additional staffing. That’s where AI comes in: in part through artificial intelligence capabilities in development flow tools, as in DSO.ai, VSO.ai and TSO.ai, and in part through assistive (copilot) methods to guide designers more quickly to optimal solutions, the subject of Synopsys’ Copilot announcement.

Synopsys.ai Copilot Working with Designers

Shankar Krishnamoorthy, general manager of the Synopsys EDA Group, told me that the first rollout to early customers (AMD, Intel, Microsoft and other leading companies) is testing capabilities including:

- Collaborative capabilities to provide engineers with guidance on tool knowledge, analysis of results, and enhanced EDA workflows, and

- Generative capabilities to expedite development of RTL, formal verification assertion creation, and UVM testbenches.

In addition to these first new developments, Shankar said his team plans to develop autonomous capabilities across the Synopsys.ai suite, which will enable end-to-end workflow creation from natural language, spanning architecture to design and manufacturing.

I’ll expand on a couple of points, starting with formal verification assertions, where I claim at least informed novice status thanks to working with the Synopsys VC Formal group. Formal verification is a very powerful technology but historically has been limited by the high level of expertise demanded of practitioners, especially when it comes to developing assertions and constraints. Packaging standard tests as apps has greatly simplified many applications and has increased adoption, but it hasn’t done anything to simplify non-standard/specialized property checks.

Part of the problem is the complexity of the property language (SVA). Copilot should be able to help by converting natural language requirements written by a verification engineer into the correct formal syntax and by providing recommendations for the RTL.
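To make the gap between a requirement and its SVA form concrete, here is a toy sketch of my own. It is not how Synopsys.ai Copilot works: it uses a fixed regular-expression template rather than a language model, it handles exactly one sentence shape, and the function name, the signal names and the `clk`/`rst_n` defaults are all assumptions made for illustration.

```python
import re

# Hypothetical requirement template (an assumption for this sketch):
#   "when <trigger> is high, <response> must be high within <n> cycles"
PATTERN = re.compile(
    r"when (\w+) is high, (\w+) must be high within (\d+) cycles?",
    re.IGNORECASE,
)


def requirement_to_sva(text: str, clock: str = "clk", reset: str = "rst_n") -> str:
    """Turn one templated English requirement into an SVA property and assertion."""
    match = PATTERN.fullmatch(text.strip().rstrip("."))
    if match is None:
        raise ValueError(f"unsupported requirement: {text!r}")

    trigger, response = match.group(1), match.group(2)
    cycles = int(match.group(3))
    if cycles < 1:
        raise ValueError("cycle bound must be at least 1")

    name = f"p_{trigger}_to_{response}"
    # "within n cycles" is read here as a bounded delay of 1..n clocks
    # after the trigger, using an overlapped implication.
    return (
        f"property {name};\n"
        f"  @(posedge {clock}) disable iff (!{reset})\n"
        f"    {trigger} |-> ##[1:{cycles}] {response};\n"
        f"endproperty\n"
        f"a_{name[2:]}: assert property ({name});"
    )


if __name__ == "__main__":
    print(requirement_to_sva("When req is high, gnt must be high within 4 cycles."))
```

Even in this tiny case the requirement leaves choices open (does "within 4 cycles" include the current cycle, and which reset disables the check?), which is where a reviewing engineer still has to confirm that the generated property means what was intended.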

Next, RTL generation. This for me is a more complex picture with different potential use-cases. One is to autocomplete a chunk of code given sufficient context, or to create a code snippet from a natural language description, while at the same time creating assertions to check the correctness of the generated code. Both could be valuable accelerators for a junior developer. Another use-case might extend generation to small blocks from a natural language description (remember the 4096 token limit on GPT-3.5).

Shankar said the distinction here between proofs of concept and the Synopsys.ai implementation is that Synopsys.ai puts guardrails around generated code and checks it using the many technologies they have at their disposal (even PPA analyses) and, of course, many years of learning and expertise.

In all cases, I expect experts will code-review generated code to guide further training. No one wants to see hallucinations creep in as added challenges for late-stage verification.

Availability

Shankar tells me that this technology is currently in early customer evaluation and refinement, which especially in this case makes complete sense. Which use-cases will make a difference in practice, and how big a difference (lines of code/day, bugs created or found per day, …), will emerge from that evaluation. Add in the extensive scope of the goal and I expect more general releases to come a feature or two at a time as these are refined and proven robust in a wide range of use-cases.

Looks like Synopsys is following the Sundar Pichai maxim of “You will see us be bold and ship things, but we are going to be very responsible in how we do it.” Good for them for taking the first step! You can read the press release HERE.
