It’s all about bandwidth these days – fueling hyperscale data centers that support high-performance and cloud computing applications. It’s what enables you to stream a movie on your smart TV while your roommate plays an online game with friends located in different parts of the country. It’s what makes big data analytics run swiftly and allows artificial intelligence (AI) algorithms to perform their magic and provide valuable insights for everyday gadgets and beyond.

As the data connectivity backbone for the internet, the Ethernet protocol is answering rising bandwidth demands by supporting speeds of 200G, 400G and, now, 800G. Before long, 1.6T will not be out of the question. Going hand in hand with higher bandwidth is the need for efficient data connectivity over longer distances.

While networking equipment companies have historically influenced Ethernet speeds, hyperscalers are now disrupting the market and driving up speeds, while also influencing the Ethernet roadmap. In this post, which was originally published on the “From Silicon to Software” blog, we’ll take a closer look at the bandwidth needs of hyperscale data centers and how Ethernet IP supports scalable, high-data-rate connectivity requirements for data-fueled applications.

Exponential Data Growth

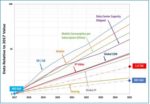

Even before the COVID-19 pandemic hit and pushed many of our daily activities online, bandwidth was growing at rates faster than previously forecasted. The graph below shows the demand over time driven by a variety of data-intensive applications. With the expanding ubiquity of internet of things (IoT), cloud storage, virtual and augmented reality, video streaming, and online collaboration applications, it’s no wonder that this volume of data is expected to grow exponentially.

Source: IEEE 802.3 Industry Connections Bandwidth Assessment, Part II

Last April, the Ethernet Technology Consortium (previously known as the 25 Gigabit Ethernet Consortium) announced the 800GBASE-R specification for 800G Ethernet. This specification introduces a new media access control (MAC) and physical coding sublayer (PCS) that reuse two instances of the existing 400G Ethernet logic from the IEEE 802.3bs standard, with some modifications, to distribute data across eight 106-Gbps physical lanes. The Consortium comprises networking and data center industry leaders, including Synopsys, that want to move more aggressively than the IEEE in driving the standards for faster Ethernet networks. The IEEE, meanwhile, formed a group last fall to consider the next transmission rate for Ethernet (both 800G and 1.6T are under consideration).
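The 106-Gbps lane figure follows from the coding overheads that each 400G flow carries. The minimal sketch below assumes that 800GBASE-R keeps the 400GBASE-R encoding from IEEE 802.3bs, namely 256b/257b transcoding and RS(544,514) forward error correction, and treats alignment markers as absorbed into the MAC-rate budget; the function names are hypothetical. Under those assumptions, the nominal lane rate works out to 106.25 Gbps.

```python
from fractions import Fraction

# Assumed per-flow overheads, carried over from IEEE 802.3bs 400GBASE-R.
TRANSCODE = Fraction(257, 256)  # 256b/257b transcoding (256 data bits -> 257 line bits)
RS_N, RS_K = 544, 514           # RS(544,514) FEC: 514 data symbols in a 544-symbol codeword
FEC = Fraction(RS_N, RS_K)


def line_rate_gbps(mac_rate_gbps: int) -> Fraction:
    """Aggregate serial line rate after transcoding and FEC overhead."""
    return mac_rate_gbps * TRANSCODE * FEC


def per_lane_gbps(mac_rate_gbps: int, lanes: int) -> Fraction:
    """Line rate split evenly across the physical lanes."""
    return line_rate_gbps(mac_rate_gbps) / lanes


if __name__ == "__main__":
    print(float(line_rate_gbps(400)))    # one 400G flow: 425.0
    print(float(line_rate_gbps(800)))    # two 400G flows combined: 850.0
    print(float(per_lane_gbps(800, 8)))  # each of eight lanes: 106.25
```

Exact fractions keep the combined overhead factor, 257/256 × 544/514 = 17/16, exact instead of accumulating floating-point error.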

Scaling Up for a Data-Driven World

Hyperscale data centers, so named because they’re designed to scale up quickly and in a big way to meet increasing demands for compute, memory, storage, and networking resources, consist of thousands of servers managing petabytes (or more) of information. These massive workhorses are central to our online lives, so it’s no surprise that hyperscalers are dominating the conversation around bandwidth.

One way hyperscale data centers scale is by adding racks that are networked through Ethernet. Some hyperscalers are now also developing application-specific systems-on-chip (SoCs) to accelerate machine-learning applications, connecting multiple processors and AI accelerators for faster, more power-efficient high-performance computing.

Ethernet has become the networking technology of choice because of its flexibility: the same software keeps working even if the hardware in the system is replaced. The standard also supports speed negotiation and backward compatibility with the software stack, so a 20-year-old device with an Ethernet port and a software driver can plug into a newer Ethernet network and still work. Ethernet can also run over different kinds and classes of media, such as optical fiber, copper cables, and PCB backplanes.
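To illustrate the idea behind speed negotiation, here is a deliberately simplified model in which each end of a link advertises the speeds it supports and the link settles on the fastest one they share. Real Ethernet auto-negotiation (for example, IEEE 802.3 Clauses 28 and 73) exchanges structured code words and resolves duplex, FEC, and other abilities through a defined priority scheme; this sketch models only the highest-common-speed outcome, and the function and port lists are hypothetical.

```python
from typing import Iterable, Optional


def resolve_speed(local: Iterable[int], partner: Iterable[int]) -> Optional[int]:
    """Return the fastest speed (in Mb/s) advertised by both ends, or None."""
    common = set(local) & set(partner)
    return max(common) if common else None


if __name__ == "__main__":
    legacy_nic = [10, 100, 1_000]                  # hypothetical older port
    modern_switch = [100, 1_000, 10_000, 25_000]   # hypothetical newer port
    print(resolve_speed(legacy_nic, modern_switch))  # 1000
    print(resolve_speed([10], [25_000]))             # None: no shared speed
```

The older port never needs to know about the newer speeds; it simply ends up at the best rate both sides understand, which is the backward-compatibility property described above.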

What Makes Ethernet-Based Designs a Challenge?

One of the challenges of designing with the multi-layer Ethernet protocol is ensuring that the MAC, PCS, and physical medium attachment (PMA) sublayers deliver optimal performance and latency once integrated. If the pieces come from different vendors, establishing interoperability can be a tough task, particularly since 800G Ethernet has not yet been standardized by the IEEE.

Electrical difficulties are another issue, as is the need for digital logic that can handle the throughput (e.g., hardware with parallel processing and very fast clocks). Additionally, moving from 400G up to 800G entails using a 100G electrical PHY, and achieving the required performance across channel reaches with that PHY is a challenge.
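The throughput problem for the digital logic comes down to simple arithmetic: bits per clock cycle times clock frequency must at least equal the data rate. The sketch below computes the minimum datapath width for a given rate and clock; the clock targets are hypothetical values chosen for illustration, not figures from this post or any product, and a real design would also round up to a convenient bus width and account for coding overhead.

```python
def min_datapath_bits(rate_gbps: int, clock_mhz: int) -> int:
    """Smallest number of bits per clock cycle that sustains rate_gbps at
    clock_mhz, ignoring encoding overhead and idle cycles."""
    rate_mbps = rate_gbps * 1_000
    # Mb/s divided by MHz gives bits per cycle; integer ceiling division
    # rounds up so the datapath never falls short of the line rate.
    return -(-rate_mbps // clock_mhz)


if __name__ == "__main__":
    clocks_mhz = [800, 1_000, 1_250]  # hypothetical clock targets
    for rate in (400, 800):
        widths = [min_datapath_bits(rate, f) for f in clocks_mhz]
        print(f"{rate}G:", widths)
    # 400G: [500, 400, 320]
    # 800G: [1000, 800, 640]
```

Doubling the rate from 400G to 800G at a fixed clock doubles the required width, which is why designers must trade off wider parallel datapaths against faster clocks.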

PHY aside, 800G Ethernet can be regarded as 400G with faster inputs. At higher data rates, it becomes even more important to engineer the datapath as efficiently as possible to achieve the lowest latency. Forward error correction (FEC) adds latency to the network, and longer reaches need more powerful FEC.
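FEC latency has a simple floor: a Reed-Solomon decoder cannot finish correcting a codeword until all of it has arrived. The minimal sketch below assumes the RS(544,514) code with 10-bit symbols used in 400G Ethernet and a 425-Gbps per-flow line rate (assumptions for illustration, not figures stated in this post), and compares its correction capability with the weaker RS(528,514) code. The function names are hypothetical, and codeword interleaving and decoder processing time are ignored.

```python
def correctable_symbols(n: int, k: int) -> int:
    """An RS(n, k) code corrects up to (n - k) // 2 symbol errors per codeword."""
    return (n - k) // 2


def codeword_accumulation_ns(n: int, bits_per_symbol: int, rate_gbps: float) -> float:
    """Time to receive one whole codeword at rate_gbps.

    Bits divided by Gb/s gives nanoseconds. Standard RS decoding needs the
    full codeword, so this is a lower bound on the latency FEC adds.
    """
    return n * bits_per_symbol / rate_gbps


if __name__ == "__main__":
    # Both codes protect 514 data symbols; the 544-symbol code spends more
    # parity on them and therefore corrects more errors.
    print(correctable_symbols(528, 514))  # 7
    print(correctable_symbols(544, 514))  # 15
    # One RS(544,514) codeword at the assumed 425 Gb/s per-flow line rate.
    print(f"{codeword_accumulation_ns(544, 10, 425.0):.1f} ns")  # 12.8 ns
```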

How Ethernet IP Facilitates High-Performance Compute

IP is an important part of the Ethernet connectivity equation and helps ensure first-pass silicon success. An integrated MAC+PCS+PHY Ethernet IP solution can support increasing bandwidth and data rate requirements, low-latency needs, and interoperability expectations.

To address these challenges, Synopsys provides the industry’s only complete 200G/400G/800G Ethernet IP solution. The Synopsys DesignWare® Ethernet Controller IP portfolio for 200G/400G and 800G Ethernet complements our 112G/56G Ethernet PHY IP solutions in advanced FinFET processes, enabling Ethernet interconnects up to 800G. The resulting low-latency, high-performance Ethernet IP solution is ideal for networking, AI, and high-performance computing SoCs.

DesignWare Ethernet IP Solutions are IEEE-compliant and have undergone extensive third-party interoperability testing and certification, reducing integration risks, accelerating time-to-market, and enabling you to focus on product differentiation. The portfolio includes:

- Configurable controllers and silicon-proven PHYs for speeds up to 400G/800G

- Verification IP

- Software Development Kits

- Interface IP Subsystems

Once an Ethernet-based design has been developed, of course, it must be verified. This is where Synopsys VC Verification IP (VIP) for Ethernet 800G can help accelerate verification closure, providing a comprehensive set of protocol, methodology, verification, and productivity features. Implemented in native SystemVerilog and UVM, VC VIP runs natively on all simulators and can be integrated, configured, and customized with minimal effort. In addition, UNH-IOL test suites are available in source code for key Ethernet features and clauses, allowing teams to quickly jumpstart their own custom testing.

Summary

The amount of data being processed, shared, and consumed to fuel our Smart Everything world is rather mind-blowing. It is certainly changing the Ethernet landscape as hyperscalers drive up speeds to support the video conference calls, virtual reality games, and swift financial transactions that have come to define modern life. Ethernet IP and Verification IP solutions are proving to be up to the challenge in supporting the high performance and low latency required by an array of data-driven applications.

By Priyank Shukla and John Swanson, Staff Product Marketing Managers, Synopsys Solutions Group, and Anika Malhotra, Senior Product Marketing Manager, Synopsys Verification Group
