Hyperscale data centers are evolving rapidly to meet the demands of high-bandwidth, low-latency applications, ranging from AI and high-performance computing (HPC) to telecommunications and 4K video streaming. The increasing need for faster data transfer rates has prompted a scaling of Ethernet from 51Tb/s to 100Tb/s. Numerous suppliers offer IP and components such as switches, backplane connectors, pluggables, cable assemblies, and other networking infrastructure elements. Extensive ecosystem interoperability is a must for building robust systems.

Synopsys 224G SerDes IP

The Synopsys 224G SerDes IP is designed to provide exceptional performance, power efficiency, and configurability, making it a versatile solution for a wide range of applications, including high-speed networking, data centers, and artificial intelligence. The IP is engineered to support multiple industry-standard protocols, including PCIe (Peripheral Component Interconnect Express), Ethernet, and Common Electrical I/O (CEI), so it can interface with a variety of communication standards. It also offers a high degree of configurability, allowing designers to tailor its parameters to specific system requirements. Functional demonstrations highlight how the IP can adapt to different data rates, channel lengths, and signal integrity conditions.

What might not be as widely known is Synopsys SerDes IP’s remarkable interoperability within an extensive ecosystem.

Attention to Ecosystem Interoperability

Synopsys recognizes the diversity of the modern semiconductor ecosystem, with various vendors providing critical components such as switches, pluggables, and other networking infrastructure elements. Synopsys collaborates with industry partners in the development and validation of its silicon proof points. This collaborative approach ensures that the technology is not developed in isolation but is tested and refined in conjunction with the broader semiconductor ecosystem. Synopsys invests heavily in rigorous testing and validation procedures to ensure that its solutions work seamlessly in real-world scenarios. This involves comprehensive testing with components from various vendors to simulate diverse networking environments. It includes stress testing under challenging conditions, demonstrating the SerDes IP’s reliability and stability in real-world applications.

Extensive Ecosystem Interoperability Demonstrations

Synopsys 224G SerDes IP continues to showcase extensive ecosystem interoperability at multiple trade shows

Synopsys has actively demonstrated the interoperability of its 224G and 112G SerDes solutions in various settings, establishing its commitment to creating technology that seamlessly integrates into diverse environments. Some notable demonstrations include those at the TSMC Symposium 2023, OIF & OFC 2023, ECOC 2022, DesignCon 2023 and other industry events.

ECOC 2023:

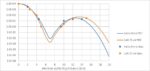

Synopsys showcased the performance of its 224G TX and RX using Keysight test equipment and Keysight's latest software for key 224G TX and RX characterization parameters. The OIF interop demonstrations included the Synopsys 224G RX equalizing a 224G C2M channel driven by a third-party 224G SerDes TX, with a BER orders of magnitude better than the IEEE or OIF 224G specifications indicate.

TSMC Symposium 2023:

The demo highlighted interoperability between Synopsys 224G hardware, connectivity, mechanicals, signal integrity, and power integrity, demonstrating superior performance in real time.

OIF & OFC 2023:

Synopsys demonstrated the interoperability of its 224G and 112G Ethernet PHY IP solutions. The demonstrations featured wide-open PAM4 eyes, very low jitter, and excellent linearity, underscoring the robustness of Synopsys’ SerDes technology.

DesignCon 2023:

A video clip from the event shows seven demonstrations of the Synopsys 224G and 112G Ethernet PHY IP and the Synopsys PCIe 6.0 IP interoperating with third-party channels and SerDes.

ECOC 2022:

Synopsys showcased the performance and interoperability of its 224G and 112G Ethernet PHY IP solutions at ECOC 2022. Demonstrations included the world’s first 224G Ethernet PHY IP interop with Keysight AWG and ISI channel.

Synopsys Commitment to Furthering SerDes Technology

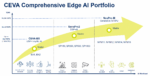

Synopsys’ commitment to pushing the boundaries of high-speed serial interface technology is evident in its multiple silicon proof points across various data rates, including 56G, 112G, and 224G, which are used to implement 400G and 800G data connectivity. By successfully implementing and validating its SerDes IP across these data rates, Synopsys has showcased the robustness and adaptability of its core technology. This not only instills confidence in the current implementations but also suggests that the technology is well prepared for the challenges posed by the upcoming 1.6Tbps speeds.

For details about Synopsys 224G Ethernet IP, visit the product page.

Summary

Synopsys 224G Ethernet PHY IP showcasing 224Gbps TX PAM-4 eyes in TSMC N3E

Synopsys 224G Ethernet PHY IP is available to help streamline the transition to 1.6T Ethernet data transfer rates. In addition to doubling the 112G data rate, the Synopsys 224G Ethernet PHY IP consumes one-third less power per bit than its predecessor while optimizing network efficiency by reducing cable and switch counts in high-density data centers. Synopsys is the first company to demonstrate 224G Ethernet PHY IP.
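
The arithmetic behind these claims can be sketched in a few lines of Python. This is an illustration only, not Synopsys data: it assumes the common nominal mapping of 50G, 100G, and 200G of Ethernet payload per lane for 56G, 112G, and 224G SerDes, and it takes the "one-third less power per bit" figure at face value as an energy-per-bit ratio of 2/3. The function names `lanes_needed` and `relative_power` are hypothetical helpers introduced here.

```python
import math

# Nominal Ethernet payload per lane, in Gb/s, for each SerDes generation.
# Assumption (not from the article): the common 50G/100G/200G-per-lane mapping.
PAYLOAD_PER_LANE_GBPS = {"56G": 50, "112G": 100, "224G": 200}


def lanes_needed(link_gbps: int, serdes: str) -> int:
    """Return how many lanes of the given SerDes generation carry link_gbps."""
    return math.ceil(link_gbps / PAYLOAD_PER_LANE_GBPS[serdes])


def relative_power(rate_ratio: float, energy_per_bit_ratio: float) -> float:
    """Power relative to the previous generation: (bit-rate ratio) * (energy-per-bit ratio)."""
    return rate_ratio * energy_per_bit_ratio


if __name__ == "__main__":
    # Lane counts for 400G, 800G, and 1.6T links at each SerDes generation.
    for link in (400, 800, 1600):
        counts = {s: lanes_needed(link, s) for s in PAYLOAD_PER_LANE_GBPS}
        print(f"{link}G link: {counts}")

    # "One-third less power per bit" read as an energy-per-bit ratio of 2/3.
    energy_ratio = 2 / 3
    print(f"same aggregate bandwidth: {relative_power(1, energy_ratio):.2f}x power")
    print(f"one lane at 2x the rate:  {relative_power(2, energy_ratio):.2f}x power")
```

Under these assumptions, a 1.6T link needs 16 lanes at 112G but only 8 at 224G, which is the lane-count halving behind the reduced cable count. A single 224G lane would draw somewhat more power than a single 112G lane, but a link of fixed aggregate bandwidth would draw about two-thirds as much.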

By emphasizing multivendor interoperability at 224G, Synopsys has positioned itself as a key enabler of the global data center ecosystem. Its solutions seamlessly integrate with a multitude of components, contributing to the efficiency and reliability of high-speed networking infrastructure.
