As semiconductor technology advances and process nodes continue to shrink, designers face growing challenges in device complexity, power consumption, and reliability. Balancing high performance, low power, and long-term reliability is more critical than ever, and it calls for solutions that adapt dynamically to real-time operating conditions so that devices meet performance targets without wasting power.

In conventional chip design, operating voltages are typically set higher than the minimum required to account for variables such as temperature changes, signal noise, process variation, and aging. This safety margin helps prevent performance issues, but because it is a one-size-fits-all allowance sized for the worst case, it over-provisions most chips and wastes power.

Demo at the TSMC OIP Ecosystem Forum

At the recent TSMC OIP Ecosystem Forum, proteanTecs showcased its AVS Pro solution in a live demo that highlighted how adaptive voltage scaling (AVS) can change power management in semiconductor chips. According to proteanTecs, the solution can achieve up to 14% power savings.

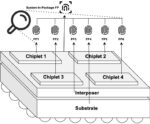

The demo showed how AVS Pro minimizes power consumption by dynamically adjusting the chip’s operating voltage using embedded margin agents and dedicated algorithms. In this case, hundreds of agents were spread across the chip’s logic paths to continuously monitor timing margins, which indicate how close a path is to a timing failure. This real-time data was fed into the AVS Pro application, which adjusted the voltage to the chip’s current needs, maintaining performance without excess power. Initially, the chip’s supply voltage was set at 650 millivolts, above the minimum operating voltage (VDD Min) of 580 millivolts. The extra 70 millivolts are a guard band against potential issues such as aging, environmental noise, and workload variations, but this guard band over-provisions the chip and wastes power.
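To put the guard band in perspective, the short Python sketch below computes its size from the two voltages quoted in the demo. It also estimates an idealized ceiling on savings under the textbook assumption that dynamic power scales with the square of the supply voltage at fixed frequency. That scaling rule, the function name `guard_band_summary`, and the resulting ceiling are illustrative assumptions, not figures reported by proteanTecs. A real chip cannot run with zero margin and also has leakage power, so actual savings will differ.

```python
def guard_band_summary(v_supply_mv: float, v_min_mv: float) -> dict:
    """Summarize a voltage guard band.

    Assumption (not from the article): dynamic power is proportional to V^2
    at a fixed clock frequency and switched capacitance; leakage is ignored.
    """
    if v_min_mv <= 0 or v_supply_mv < v_min_mv:
        raise ValueError("expected 0 < v_min_mv <= v_supply_mv")

    guard_band_mv = v_supply_mv - v_min_mv
    # Guard band as a fraction of the applied supply voltage.
    guard_band_pct = 100.0 * guard_band_mv / v_supply_mv
    # Idealized ceiling: savings if the whole guard band could be removed.
    max_dynamic_saving_pct = 100.0 * (1.0 - (v_min_mv / v_supply_mv) ** 2)

    return {
        "guard_band_mv": guard_band_mv,
        "guard_band_pct": guard_band_pct,
        "max_dynamic_saving_pct": max_dynamic_saving_pct,
    }


# Voltages quoted in the demo: 650 mV supply, 580 mV VDD Min.
summary = guard_band_summary(650.0, 580.0)
for name, value in summary.items():
    print(f"{name}: {value:.2f}")
```

With the demo’s values, the guard band is 70 mV, or roughly 10.8% of the supply, and the idealized V-squared ceiling comes out at about 20.4%. An adaptive scheme that keeps a safe margin would be expected to recover only part of that ceiling.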

When AVS Pro was enabled, the system reduced the voltage based on real-time feedback from the agent measurements. This careful scaling yielded power savings of up to 12.51% in the demo without sacrificing performance or stability. AVS Pro keeps lowering the voltage until the timing margins reach a safe minimum. If a sudden workload spike or voltage drop threatens to push the timing margins below a critical threshold, the system immediately raises the voltage to maintain stability and avoid failures. Once conditions stabilize, AVS Pro resumes voltage reduction, keeping the chip at its most efficient power level.
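The behavior described above can be sketched as a simple control step: lower the voltage in small increments while the worst-case margin is comfortable, hold when it reaches the safe minimum, and jump back up when it falls below a critical threshold. This is a minimal illustration, not the AVS Pro algorithm. The step sizes, the margin thresholds and their units, and the names `AvsConfig` and `next_voltage` are all hypothetical. Only the 650 mV and 580 mV bounds come from the demo.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class AvsConfig:
    v_max_mv: float = 650.0        # starting supply voltage from the demo
    v_floor_mv: float = 580.0      # VDD Min from the demo
    step_down_mv: float = 5.0      # hypothetical: small, cautious decrements
    step_up_mv: float = 20.0       # hypothetical: larger step for fast recovery
    target_margin: float = 10.0    # hypothetical safe minimum (arbitrary units)
    critical_margin: float = 4.0   # hypothetical emergency threshold


def next_voltage(v_now_mv: float, agent_margins: Sequence[float],
                 cfg: AvsConfig) -> float:
    """Return the next supply voltage given the latest agent readings."""
    if not agent_margins:
        raise ValueError("at least one margin reading is required")

    # The path closest to failure determines how far the voltage can drop.
    worst = min(agent_margins)

    if worst < cfg.critical_margin:
        # Fast path: raise the voltage right away, capped at the maximum.
        return min(cfg.v_max_mv, v_now_mv + cfg.step_up_mv)
    if worst > cfg.target_margin:
        # Headroom available: step down, but never below the floor.
        return max(cfg.v_floor_mv, v_now_mv - cfg.step_down_mv)
    # Margin sits between the two thresholds: hold the voltage.
    return v_now_mv


# Simulated readings: two calm intervals, a workload spike, then recovery.
cfg = AvsConfig()
readings = [[15, 18, 14], [13, 16, 12], [3, 9, 11], [12, 14, 13]]
voltage = cfg.v_max_mv
trace = []
for margins in readings:
    voltage = next_voltage(voltage, margins, cfg)
    trace.append(voltage)
print(trace)
```

Making the upward step larger than the downward step is a deliberate choice in this sketch: recovering from a margin violation matters more than the small amount of power lost by overshooting, so the loop descends slowly and recovers quickly.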

This kind of solution is essential for industries such as AI, high-performance computing (HPC), data centers, mobile telecom, and automotive electronics.

How AVS Pro Works: Real-Time Monitoring and Adaptation

At the core of AVS Pro is its ability to monitor millions of logic paths in a chip in real time, providing a highly granular picture of each path’s proximity to a timing failure. The system continuously analyzes these margins and dynamically adjusts voltage levels to prevent failures caused by environmental factors, process variation, latent defects, noise, application stress, and aging effects. In contrast to traditional methods, which apply broad voltage guard bands for worst-case scenarios, AVS Pro tailors its response to the chip’s real-time conditions. This optimizes power usage while keeping performance reliable even under challenging conditions such as high temperatures or heavy workloads. When conditions are favorable, AVS Pro safely lowers the voltage, which reduces power consumption and extends the device’s lifespan by delaying wearout.

The system also accounts for process variation by calibrating each chip individually to operate at its optimal voltage. In addition, it monitors the aging effects that slow transistors down over time and continuously adjusts the voltage to compensate, preventing performance loss or premature failure.

A Holistic, Closed-Loop Solution

The power of AVS Pro lies in its closed-loop integration of hardware and firmware. This tightly coupled system continuously monitors, analyzes, and adjusts voltage levels in real time, ensuring the chip remains within its optimal operating parameters. The system not only responds to current conditions but also learns from historical data, enabling it to predict future trends and make proactive voltage adjustments.
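The article does not say how AVS Pro predicts trends from historical data. As a stand-in, the sketch below fits a straight line to a short history of worst-case margin readings and extrapolates when the margin would reach a threshold. The least-squares fit, the function names, and the sample numbers are all assumptions chosen for illustration.

```python
from typing import Optional, Sequence, Tuple


def fit_line(xs: Sequence[float], ys: Sequence[float]) -> Tuple[float, float]:
    """Ordinary least-squares fit; returns (slope, intercept)."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need at least two (x, y) samples of equal length")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("x values must not all be identical")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def time_until_threshold(times: Sequence[float], margins: Sequence[float],
                         threshold: float) -> Optional[float]:
    """Estimate the time from the last sample until the margin hits threshold.

    Returns None when the fitted trend is flat or improving.
    """
    slope, intercept = fit_line(times, margins)
    if slope >= 0:
        return None
    t_cross = (threshold - intercept) / slope
    # Clamp at zero: the trend line may already be below the threshold.
    return max(0.0, t_cross - times[-1])


# Hypothetical history: worst-case margin shrinking by 2 units per interval.
times = [0, 1, 2, 3, 4]
margins = [20, 18, 16, 14, 12]
remaining = time_until_threshold(times, margins, threshold=6)
print(remaining)
```

A controller could use an estimate like this to raise the voltage before the margin actually reaches the threshold, rather than reacting only after it does. A production system would likely need a more robust model than a single straight line, since margins respond to workload and temperature in non-linear ways.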

Fast-Response Protection and Adaptation

Another key feature of AVS Pro is its fast-response safety net. In dynamic environments where conditions can change rapidly, it is crucial for the system to make quick adjustments to avoid timing failures. AVS Pro’s closed-loop architecture provides real-time feedback between the hardware and firmware, allowing the system to instantly react to voltage fluctuations or workload spikes. By detecting potential failures early and taking corrective action immediately, AVS Pro ensures that even minor performance fluctuations are addressed before they escalate into more serious problems. This type of capability is essential for applications that demand high reliability, such as cloud computing, AI/HPC, and critical infrastructure.

Summary

The combination of real-time monitoring, adaptive voltage scaling, and a closed-loop architecture makes AVS Pro an ideal solution for designers and manufacturers looking to optimize their products for the next generation of computing technologies, where performance, power efficiency, and reliability are paramount.

The proteanTecs AVS Pro solution pushes the boundaries of adaptive voltage scaling and power optimization, delivering tangible benefits across a wide range of applications, from data centers to consumer devices. By ensuring each chip operates at the most efficient voltage level, AVS Pro maximizes performance while minimizing power consumption, paving the way for the future of high-performance semiconductor design.

Try the Power Reduction ROI Calculator (scroll down a bit on the page to find it).

Learn more about chip power reduction and data center economics.

Access a whitepaper on Power Performance Optimizer.

Visit proteanTecs.com to learn more about their various technology offerings.
