In a former life I was the GM of a business where we built specialized structures used for semiconductor process bring-up, characterization and monitoring. These monitoring structures were placed in wafer scribe-lines and were used to monitor key parameters during wafer processing. The structures provided feedback to automated process control (APC) loops that dynamically tuned the manufacturing equipment to keep the semiconductor process within specific tolerances.

Later, the industry played with the idea of using algorithms to identify key transistors of a design that could be monitored by APC loops to center the design for the best performing chip. Standard product chip companies routinely did this, but the idea never caught on in the COT / pure play foundry space due to fears that SoC companies could lose valuable IP by identifying critical parts of their designs.

Now, as designers continue to push the envelope in terms of process technology, performance and power they are finding that driving their designs to the edge of the process and design windows makes their designs more susceptible to process variability. And, with the advent of the internet-of-things, mobile applications, autonomous vehicles and robots, more SoCs are finding themselves in physically challenging environments (e.g. SoC’s in the wild). Combining a SoC on the edge of the design window with a widely varying physical environment has people worried about design failures at the worst possible moment.

Considering this, a new approach seems to be catching on and that is the idea of embedding process, voltage and temperature (PVT) monitors directly in a design so that the SoC can re-center itself within the design ‘window-of-the-moment’ by using on-chip monitoring data to intelligently alter its own voltage rails, clock speeds, work loads etc. Enter Moortec Semiconductor Ltd.

Moortec provides embedded on-chip subsystem IP solutions for Process, Voltage & Temperature (PVT) sensing/monitoring, targeting advanced node CMOS technologies from 40nm down to 7nm. They’ve recently published a white paper that is worth a read as it details an idea that seems to now be coming of age (see links below).

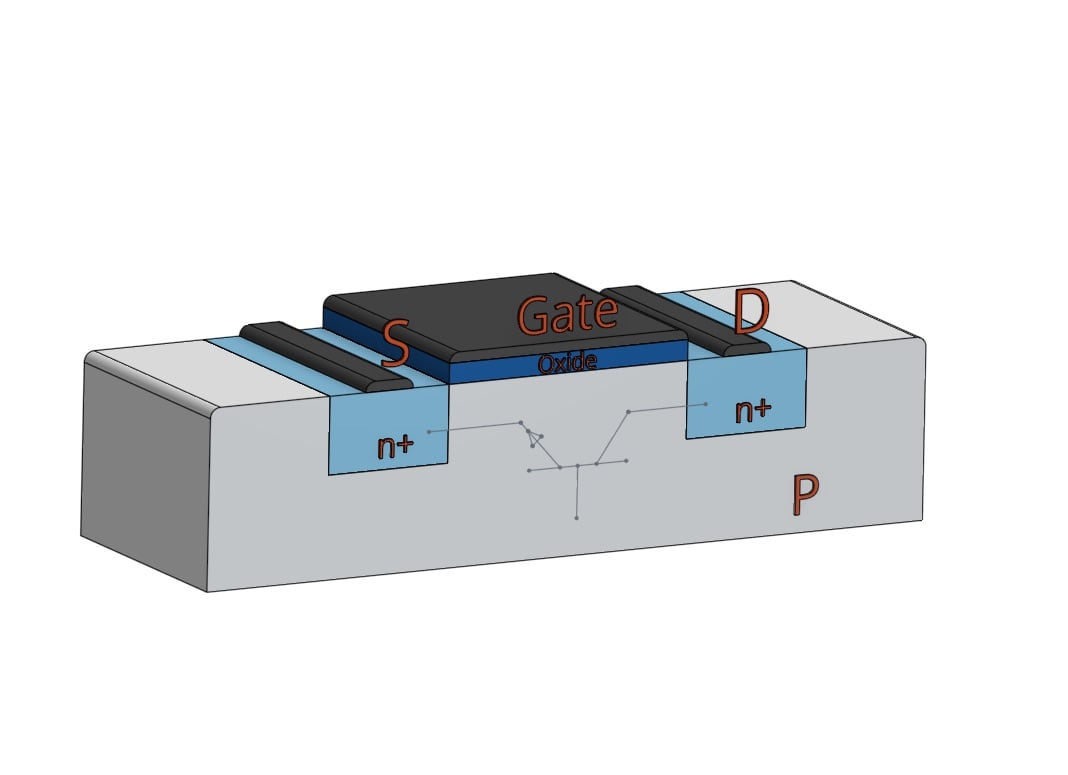

The white paper highlights some of the keys reasons for why now, might be the right time for on-chip monitoring to take off. The current challenges manifest themselves through process variability, timing violations, excessive power consumption and early aging effects. These can lead to ICs failing to perform as expected. As an example, the move to finFET technology proved to be a boon for dealing with short-channel effects and leakage for sub-30nm transistors. However, finFETs tend to have much higher current density and are therefore more prone to localized heating than conventional planar transistors. This can lead to problems in local-interconnect around the transistors. Self-heating and high current densities can lead to longevity problems, contributing to aging effects such as negative bias temperature instability (NBTI), hot carrier injection (HCI) and electromigration within the metal interconnects.

An interesting phenomenon called “temperature inversion” has been observed for process nodes below 40nm where transistors are seen switching more slowly than expected at cooler temperatures. This has caused designers to have to consider more “corners” when performing circuit-timing analysis. They find themselves now having to include simulations for conditions like ‘best hot’ and ‘worst cold’. The use of guard-banding to protect against various PVT corners is becoming problematic, especially for designs requiring very low energy consumption. As supply voltages are pushed ever closer to the switching thresholds of the transistors to reduce power consumption, small differences in the supply voltage can make for big differences in the switching delays of the circuit.

Another big contributor of variability is the use of multiple cores and die within a system-in-a-package (SiP). This type of packaging is becoming quite prevalent, especially for complex IoT designs with heterogeneous architectures, multiple cores, embedded memories, sensors and in-device transceivers. Often die are stacked on top each other making for hard to predict, temperature relationships that are dependent upon system loads and operating conditions.

Moortec proposes the use of process, voltage and temperature (PVT) monitors that are embedded into the SoC. The simplest use case is a one-time optimization of the SoC during production test. In this case the on-chip monitors can be used to facilitate actions such as speed binning. They can also be used to indicate how well a device will perform for a given power budget. A more complex scenario would be to use the monitors for real-time understanding of the SoC in its operating environment. An example might be having the monitors checking on-chip temperatures and then having intelligent circuitry request a minimum supply voltage that it knows will allow the device to meet timing.

Moortec has architected their solution to split the IP into two parts. The first part being the sensor or monitor and the second part being a controller that is used to interpret the data being provided by the monitor(s). This split architecture allows designers to decide how many sensors/monitors are needed for their specific SoC and where the sensors should be located. A simple solution might be to put sensors at each of the four corners of the die along with a sensor in the middle. A more complex solution may associate a sensor with each critical block and within individual processor cores or groups of cores. In these cases, data would be collected from the various sensing monitors over an interconnect fabric such as the ARM Advanced Peripheral Bus (APB).

All in all, I find this technology very compelling as it enables designers to truly squeeze the most out of their technologies, but in a way where risk can be mitigated and even modified depending on the application. At some point in the future I can see this being very applicable for integrated electronic-photonic designs where the photonics is especially susceptible to temperature shifts. It seems that analog and mixed-signal designs would also benefit from this technology.

For more information pull this white paper down and take a read. You can also check out the rest of Moortec Semiconductor’s offerings on their web page listed below:

Whitepaper: The Implementation of Embedded PVT Monitoring Subsystems in Today’s Cutting Edge Technologies

Moortec Semiconductors web site