David Zipper of Harvard’s Kennedy School writes in Slate that, in the interest of the general public, the incoming Biden Administration should “bring the hammer down” on Tesla Motors for its mislabeled and therefore misleading Autopilot application and its recently updated Full Self-Driving software beta. Zipper’s plan, apparently, is to “stop” Tesla and somehow put Federal regulators in charge of “guiding” the electric car company in its development and deployment of self-driving technology.

Slate: “The Biden Administration Needs to Do Something about Tesla”

Zipper is correct in highlighting the limitations of Tesla’s FSD software, but his hysteria is misguided. FSD – launched this past fall as a beta for customers with suitably equipped vehicles and with an array of consumer caveats – is a potential menace. But a blunt-force regulatory response of the sort Zipper is advocating is hardly in order and certainly nothing the Biden Administration should sign up for – especially given the fact that Tesla has become the poster child of global American automotive technological achievement.

Nevertheless, Zipper trots out fellow travelers supporting his cause, including the National Transportation Safety Board, the National Highway Traffic Safety Administration (NHTSA), Partners for Automated Vehicle Education (PAVE), the AAA, the Owner-Operator Independent Drivers Association (OOIDA), the Government Accountability Office, and a somewhat ambivalent Alliance for Automotive Innovation.

What’s the real problem? How did we arrive at this moment where an innovative EV startup has disrupted industry norms and traditions with a customer-pleasing driving automation solution that simultaneously promises life-saving technological advances and the potential for sudden death? Why has Tesla stirred up such passionate opposition?

We got here because A) the NHTSA ran out of passive safety regulatory solutions such as seat belts, airbags, stability control, and anti-lock braking to reduce highway fatalities; and B) the agency has been sidelined, de-emphasized and defunded at the very moment when it needs more attention and funding to take on the challenge of regulating active safety systems such as blind spot detection, lane departure warning, automatic emergency braking, cross-traffic warning, and adaptive cruise control.

The last major NHTSA safety initiative was a voluntary effort agreed to by the automotive industry to implement automatic emergency braking. Before that came the decade-long effort to mandate backup camera technology.

If it weren’t for the COVID-19 pandemic killing thousands of Americans on a daily basis, consumers might be more troubled by the 100 Americans dying every day on U.S. roadways. Tesla’s CEO Elon Musk argues that his vehicles and his technology are part of the solution, not the problem.

The solution to the Tesla FSD beta software problem is quite simple, and Zipper touches on it but fails to focus on it. The problem is the driver monitor built into Tesla vehicles. Zipper notes that it lacks an eye-tracker, thereby allowing it to be easily subverted by reckless or incautious users.

In reality, Tesla’s vehicles are already equipped with in-cabin driver and passenger monitors that may well be capable – with an over-the-air software update – of fulfilling the need for a more robust solution. Should a more robust monitor be required, Tesla could likely respond by flipping a switch.

So, the solution appears to be simple. NHTSA ought to initiate an investigation of the efficacy of driver monitoring systems and develop a recommendation. Given the resources and time normally required by such an investigation, though, NHTSA and the public might be better served by the pursuit of the same voluntary path taken for encouraging the adoption of automatic emergency braking.

Zipper notes the advantages of Europe’s so-called “type approval” process for reviewing and approving systems to be introduced for European automobiles. He fails to mention that the separate European New Car Assessment Program likely has overriding relevance here due to the popularity of its five-star safety ratings based on rigorous and ongoing research.

Euro-NCAP will require driver monitoring as standard equipment on all new vehicles beginning with model year 2022. All indications are that this requirement – already evolving – will eventually integrate eye tracking solutions.

As noted by Strategy Analytics in a recent report on the subject: “However, members of the UNECE safety committee believe that, by 2022, the test protocols from Euro-NCAP will be tightened to include direct monitoring of the driver’s eyes and face movements – and thus could be beneficial for interior camera-based driver monitoring systems.”

Strategy Analytics: “European Mandate Boosts Interior Camera-Based Driver Monitoring, Winners Now Emerging”

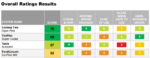

In other words, nothing less than eye-tracking will be required as standard equipment on European vehicles in order for them to achieve a five-star safety rating – comparable in the U.S. to NHTSA’s five-star safety ratings or the Insurance Institute for Highway Safety’s Top Safety Pick awards. It’s worth noting that Consumer Reports recently gave Comma Two’s Open Pilot aftermarket driver assistance system a top rating in part due to its integration of eye-tracking based driver monitoring.

SOURCE: Consumer Reports

Consumer Reports: “Advanced Driver Assistance Systems – Test Results and Recommendations”

General Motors was a leader in integrating eye-tracking technology from Seeing Machines as part of its Super Cruise semi-automated driving system. Super Cruise took second place behind Comma Two in the Consumer Reports ranking. Tesla was third.

The greater significance behind the entire debate is the recognition of the efficacy of human-based driving. In its own literature, Euro-NCAP blames 90% of all crashes on human frailties. The reality is that if machines were doing all the driving today our transportation systems would fail miserably. Human beings are actually pretty good at driving cars – even if more than a million humans die every year in vehicle crashes.

The 100/day fatality rate in the U.S. actually represents great progress – but U.S. regulators are aware that they have reached an impasse. Transformative advances such as the adoption of seat belts and airbags are in the rearview mirror and active safety represents terra incognita. The path forward is literally and figuratively unclear.

The first step down this path, though, likely lies through driver monitoring, to better understand driver behavior and how to assist drivers. Rather than seeking to remove human beings from the driving task, automakers like Tesla are seeking out ways to assist drivers.

The Consumer Reports report on advanced driver assist systems (ADAS) highlights the challenges of developing and refining effective and appropriate user interfaces that are helpful without being distracting, confusing, or annoying. Strategy Analytics conducts user experience research in this area as well and is on record criticizing Tesla’s FSD beta software.

Strategy Analytics: “Tesla Full Self Driving HMI – Not Useful, Not Usable, Not Safe”

We will not make progress by standing in the path of innovation. Developers, like drivers, need help and, maybe, some guidance. It may be time to appoint a proper Congressionally approved director of NHTSA and properly fund this essential organization so that it can take on its greatest challenge yet – helping machines to better assist humans in the task of safe driving.

At the very moment that the industry is poised to start removing steering wheels from cars, regulators are calling for driver monitors to make sure drivers are paying attention to the driving task. Suffice it to say there will be some very confusing messages for drivers to digest in the coming years. Let’s hope we get the messaging, the branding, and the regulations right in the interest of saving lives.