Speaking of having the right tools, FPGA-based prototyping has become as much about the synthesis software as about the FPGA hardware, if not more. This is a follow-up to my post earlier this month on FPGA-based prototyping, but with a different perspective from another vendor. Instead of thinking about what else can be done beyond just prototyping, Synopsys has taken on three big myths surrounding the concept in a new white paper.

A very interesting point to me is the first one taken on by product manager Troy Scott: FPGA-based prototype capacity is often perceived as limited to less than 100 million ASIC gates. If we believe other sources, the magic numbers in this equation are 5 million gates or less, and 80 million gates or more. Stunningly, the 2014 Wilson Research Verification Study shows that people are actually less successful on the smaller designs. The overall first and second spin success rate, according to that study, is lower for designs at 5M gates than it is for designs at 80M gates.

That may be because people are spending more time and effort in verification and validation of larger designs. For that job, they are using better tools such as emulation and FPGA-based prototyping platforms – which are about even in terms of industry adoption, both around 33-35%.

However, prevailing wisdom says that as designs get larger, the reluctance to use FPGA-based prototyping seems to increase and the adoption rate drops. We’ve all seen news that the raw capacity of platforms like HAPS-70 has increased substantially with the introduction of Xilinx UltraScale VU440 parts, and Synopsys is now shipping these platforms to early adopters.

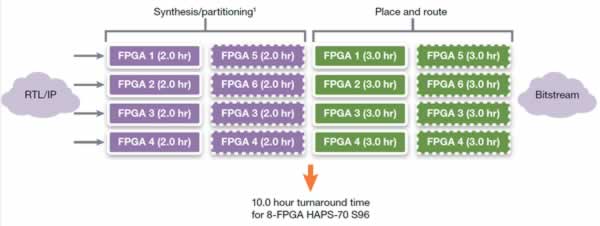

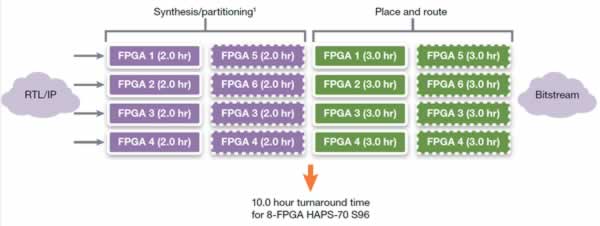

So, what’s the holdup? Myth #1 is the 100M gate barrier. There is no doubt one can now get 100M gates poured into an FPGA-based platform. The first concern is whether one can build and rebuild a 100M gate design on that platform in a reasonable amount of time. Troy goes through an analysis using Synopsys ProtoCompiler, which runs up to four concurrent synthesis processes in parallel. The result is a 10-hour turnaround: 4 hours in synthesis and partitioning, 6 hours in place & route.

In a more advanced situation, ProtoCompiler supports any number of compile points, even allowing nesting. This facilitates incremental builds, where only part of the design is rebuilt. The four concurrent processes can be applied to four compile points, so rebuilds of changed areas can go faster than the entire build. Multiple licenses can also be ganged to increase parallelism.
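To make the incremental-build argument concrete, here is a rough back-of-the-envelope sketch in Python. It is not how ProtoCompiler schedules work internally. The compile point names, the per-block synthesis hours, and the greedy longest-first assignment are all assumptions made up for illustration. The only figure taken from the text is the limit of four concurrent processes.

```python
import heapq

def estimate_makespan(durations, workers=4):
    """Greedy longest-first assignment of jobs to workers; returns the finish time."""
    if not durations:
        return 0.0
    loads = [0.0] * workers          # current busy time of each worker
    heapq.heapify(loads)
    for d in sorted(durations, reverse=True):
        lightest = heapq.heappop(loads)      # give the job to the least-loaded worker
        heapq.heappush(loads, lightest + d)
    return max(loads)

# Hypothetical compile points: name -> (synthesis hours, changed since last build?)
compile_points = {
    "cpu_cluster": (1.5, False),
    "gpu":         (2.0, False),
    "ddr_ctrl":    (0.5, False),
    "video":       (1.0, True),
    "periph":      (0.5, True),
    "noc":         (1.0, False),
}

# Full build: every compile point is resynthesized.
full = estimate_makespan([hours for hours, _ in compile_points.values()])

# Incremental build: only the compile points that changed are resynthesized.
incremental = estimate_makespan(
    [hours for hours, changed in compile_points.values() if changed]
)

print(f"full build:        {full} h")
print(f"incremental build: {incremental} h")
```

With these made-up numbers the full synthesis pass is bounded by the largest block, while a rebuild touching only two small blocks finishes in half the time. Ganging licenses would correspond to raising `workers` above four.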

Myth #2 is the partitioning effort. Troy shares some data from the R&D team at Synopsys – recall they are also in the IP business, and they eat their own dog food so to speak – showing benchmarks on partitioning time across 13 programs. (They aren’t triskaidekaphobic, apparently.) These benchmarks include the use of high-speed interconnect TDM schemes, automatically generated by ProtoCompiler. The results may be surprising, showing a realistic view of days, not months, to get to a working design.

Myth #3 is debug. That does get tricky on multi-FPGA prototypes. Troy explains how ProtoCompiler handles instrumentation, coordinates with the deep trace debug capability in HAPS, and deals with external DDR3 memory for debug resources. One critical point Troy makes is that hundreds of signals can be captured for full seconds of clock time. Or, things can be stretched to grab more signals for shorter periods.
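The trade-off between the number of signals and the capture window is simple arithmetic, sketched below in Python. The buffer size and sample clock are hypothetical values chosen for round numbers, not HAPS specifications. The model also assumes one bit per signal per sample and ignores any overhead for timestamps, triggers, or compression.

```python
def capture_seconds(buffer_bytes, num_signals, sample_hz):
    """Seconds of trace that fit in the buffer at one bit per signal per sample."""
    bits_available = buffer_bytes * 8
    bits_per_second = num_signals * sample_hz
    return bits_available / bits_per_second

BUFFER_BYTES = 10**9        # hypothetical 1 GB of external trace memory
SAMPLE_HZ = 10_000_000      # hypothetical 10 MHz sample clock

few_signals = capture_seconds(BUFFER_BYTES, 256, SAMPLE_HZ)
many_signals = capture_seconds(BUFFER_BYTES, 1024, SAMPLE_HZ)

print(f"256 signals:  {few_signals} s")
print(f"1024 signals: {many_signals} s")
```

Under these assumptions, quadrupling the signal count cuts the capture window to a quarter, which is the "more signals for shorter periods" stretch described above.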

The full paper is here (registration required):

Busting the 3 Big Common Myths About Physical Prototyping

The upshot of all this is we’re not just talking about gate capacity any more. Maybe we should be talking more about the low end, which is why Synopsys introduced HAPS-DX for smaller environments. Being able to synthesize designs faster, while inserting effective partitioning and debug, is what makes an FPGA-based prototyping platform really useful. In both the small and large cases, it is the ProtoCompiler technology where Synopsys is making progress.
