Dr. Stanley Hyduke, founder and CEO of Aldec, talks about how keeping pace with the evolution of FPGAs and listening to customers underpin the company’s success.

Tell us about Aldec

Aldec is a team of bright, self-driven individuals, all striving to contribute and be a part of the technological evolution. I formed the company in 1984 and today we remain a privately-owned EDA company with a user community of more than 35,000 engineers, more than 50 global partners and a portfolio of software- and hardware-based products.

We are seen by many in the industry as the FPGA verification company. While that certainly is a core capability, we cover the entire FPGA spectrum: from design entry, code linting, simulation and emulation, through to helping engineers create the reports needed for full lifecycle traceability and certification, and ending with FPGA prototyping.

We have very much grown up with the FPGA industry. For instance, Xilinx was also established in 1984. However, our ability to provide high-performance verification tools has always been a strength, as has our commitment to delivering greater efficiency. Coincidentally, both strengths were reflected in the name of our very first EDA tool, Standard Universal Simulator for Improved Engineering or SUSIE, in 1985.

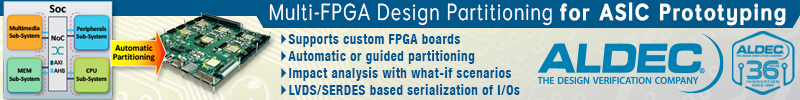

Over the years we have certainly kept pace with how different industry sectors have been utilizing FPGAs. For instance, the use of FPGAs for ASIC prototyping presented a great opportunity for us and led to us launching our first HES hardware emulation and prototyping solution. Combined with our software-based products for planning, requirements, linting and simulation, our HES products enable us to span the entire ASIC verification spectrum. Moreover, as FPGA complexity has increased – to the extent where we now have FPGA-based SoCs – the verification flow has much in common with that of an ASIC.

Yes, we’re still mainly seen as the FPGA verification company. But I don’t mind that. Just look at what FPGAs are doing today and will be doing tomorrow.

How has the role of the FPGA changed during recent years?

FPGAs are pushing hard into several new markets and for a number of different reasons. A large gate-count FPGA with an embedded processor core can accommodate some of the most ambitious SoC designs around. Its reconfigurability, and the fact that its architecture is ideal for deep pipelining and for massively parallel, compute-intensive tasks, make a modern FPGA a very attractive proposition.

Granted, SoC ASICs and ASSPs still have their places for lots of emerging applications and FPGAs will most likely continue to play a hugely significant role in their development. But for some applications, targeting a large, high-speed reconfigurable device makes a lot of sense because of what an FPGA can do.

High Performance Computing, or HPC, is a prime example. For many process-intensive tasks, traditional processors running software can no longer cope. Accordingly, there is a shift to move much of the processor’s workload onto one or more FPGAs that provide hardware acceleration.

Interestingly, companies in the HPC sector are hiring lots of FPGA designers, and that’s good news for us because of the complexity of some of the mapped functions. We have, for example, sold several seats of our functional verification tool Riviera-PRO into the HPC sector. In conjunction with the emergence of HPC, we’re seeing increasing use of FPGAs in cloud-based applications, and in this respect our hardware emulation products have great potential.

On another note, FPGAs are becoming far more involved in ‘edge processing’, by which I mean they’re doing more at the interface with the real world. For example, image conditioning, recognition and processing, as part of an Embedded Vision (EV) system, requires lots of rapid Single Instruction Multiple Data (SIMD) work to be done before software algorithms – possibly running on an embedded core within the FPGA – make decisions based on what’s being ‘seen’ at the ‘edge’. EV is an essential part of ‘Deep Learning’, which is itself part of emerging AI technologies.

But it’s not all about large gate-count devices. Smaller gate-count devices are being embedded into sub-systems (some of which are safety-critical) within modern avionics architectures – and it’s easy to imagine the automotive sector following suit. In addition, rad-hard FPGAs are making their way into space launch vehicles and payloads (for deep space exploration) where, in the past, ASICs and ASSPs would have been employed. And in the consumer sector, within which I include Infotainment, several IoT projects are likely to be served by small to medium gate-count FPGAs.

How are Aldec’s products keeping pace with the evolving and new applications?

In many instances we’re seeing tools that we have had in our product portfolio for years being pulled naturally into these new markets. For instance, at SC16 (the International Conference for High Performance Computing, Networking, Storage and Analysis), we worked with ReneLife (part of the Indian Institute for Scientific Research) to showcase an accelerator platform for genome alignment. The platform includes an Aldec HES-7 board – as traditionally used for ASIC prototyping – and, at the time of the show, it was already possible to align 650GB of human genome short read data sets in a matter of minutes.

Another HPC application is High Frequency Trading, or HFT. High Frequency Traders and Quantitative Analysts (Quants) strive to reach close-to-zero latency to employ a range of mathematical algorithms to take advantage of fleeting variations in stock price. Even if it’s a fraction of a cent return, if they can receive the market information from stock exchanges several nanoseconds faster than their competition and trade in sufficiently large quantities, big profits can be made. At The Trading Show 2017 in May we unveiled our HES-HPC-DSP-KU115 prototyping board. While it’s a great addition to our HES family of products for SoC and large ASIC designs, this board makes possible the accelerated execution of many types of HFT algorithms.

As for our software-based tools, they’ve moved with the times too. For instance, the discipline of RTL linting – which we cater to with our ALINT-PRO tool – struggled for acceptance in the early years. But, with designs becoming increasingly complex and heavily reliant on third-party IP running at various asynchronous clock speeds, it has never been more important to go into simulation and synthesis with the cleanest HDL possible. In addition, companies want standardization, not just against industry best practices but also against their in-house practices for when they use subcontractors. Plus, DO-254 is effectively a best-practice guide and, with this in mind, we recently launched a DO-254 plug-in for ALINT-PRO.

Our high-performance mixed-language RTL simulator tool, Riviera-PRO, has also kept pace with user requirements. As a traditional FPGA verification tool it ticks all the boxes, as it is fast, efficient and supports languages that include Verilog, VHDL, SystemVerilog (UVM) and SystemC. We have many users in the HPC sector who are using Riviera-PRO with Python and Cocotb to craft extremely complex testbenches.

But new products are also needed. At the Embedded Vision Summit earlier this year we launched the latest addition to our family of Xilinx Zynq-based prototyping boards: the TySOM-2A-7Z030. One of the reference designs available for the board is an ADAS multi-camera surround view, and the way it works is reflective of what is happening within embedded vision and deep learning applications. The reference design processes and displays four video streams simultaneously in real time, a feat which has been achieved by offloading some of the computationally intensive tasks from the processor (an ARM Cortex-A9) onto the FPGA. With the launch of TySOM we’re catering for software engineers as much as hardware engineers, and in some respects we’re mirroring how the FPGA vendors are behaving. It’s no secret that they see software engineers as future users.

I also mentioned that FPGAs are being increasingly employed in avionics applications, and we’re proud of the fact we’ve been supporting this part of the aerospace sector for several years. In fact, 2017 marks the tenth anniversary of our first engagement with a customer needing to certify to DO-254. Also, it was later in that same year, 2007, that we launched our DO-254 Compliance Tool Set (CTS) for the at-speed, in-target verification of an FPGA. Since then many companies have adopted our CTS as their de facto means of demonstrating design assurance levels A and B under DO-254.

As for space applications, radiation-tolerant FPGAs are being used. However, these tend to be one-time-programmable (OTP) anti-fuse space-qualified devices. Clearly, before committing to launch you must have complete confidence in the design, so it’s likely that several expensive devices will be consumed as part of the verification process. We therefore worked with Microsemi to jointly develop an adapter for prototyping purposes. It uses Microsemi’s ProASIC 3E flash-based FPGA technology and is footprint-compatible with Microsemi’s RTAX devices.

Earlier this year Siemens completed its acquisition of Mentor Graphics, one of your competitors. Any views on that?

The EDA user community as a whole is still undecided on whether the acquisition will ultimately be a good or a bad thing for the industry. The fact that Mentor was acquired by a company not traditionally associated with EDA – and in some respects one of its customers – still has many people puzzled. From our point of view, the acquisition happening to one of ‘the big three’ sent a great message out to the industry. Company size is not an indication of stability. Anything can happen. And there must be cases where those competing in the same sectors as Siemens now find they’re using their competitor’s EDA and CAD tools.

The acquisition has leveled the playing field and people are looking – more than ever before – beyond the big three. This is great because it’s all about the tools now – performance, cost-effectiveness, suitability for use in specific industry sectors – and the vendor’s ability to listen to what the end-users want rather than telling them what they should be using.
