Understanding Intel’s future means understanding Intel’s past

Yes, there are two paths you can go by, but in the long run there’s still time to change the road you’re on.

Intel is at a crossroads. The road they have been on since inception, the road that differentiated them from the rest of the pack and made them great, was their manufacturing prowess. They are the last “real man” standing that owns their own fabs.

Now we are at a point where Intel has to decide whether to continue to try to be the last CPU IDM, patch up their mistakes and stumbles in manufacturing and recover their greatness (although maybe not Moore’s Law leadership), or throw in the towel and follow AMD and everyone else into TSMC’s warm embrace. Or perhaps settle on some half-baked compromise between the two extremes.

There is no easy way out nor clear decision to be had. And you may ask yourself, “Well, how did I get here?”

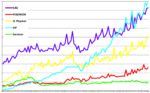

AMD was taking its last dying gasps, and Apple gave up on PowerPC and went all in with Intel. Intel was flying high, even more so than its partner Microsoft.

Then a few years ago, it seemed as if something started happening and it all started unraveling.

It was not a single point in time, nor was there a single inflection-point event that signaled a change in Intel; it came about much more slowly, much more insidiously.

Let’s get rid of our most experienced people

A few years ago, back in 2016, Intel did a “RIF” (reduction in force) of about 11%. Intel had previously done a significant reduction, of about 10%, way back in 2006. At the time we noted that Intel seemed to be trying to soften the blow by offering early retirement and other “packages” to older employees with the most seniority (and experience). It seemed to us, as casual observers with some friends at Intel, that the RIF had gone well beyond its original intent and that Intel was losing real, experienced talent, people who were taking the attractive “package” and leaving prematurely without transition.

In an industry that runs on “tribal knowledge”, “copy exact” and the experience of how to run a very, very complex multibillion-dollar fab, much of the most experienced, best talent walked out the doors at Intel’s behest, with years of knowledge in their collective heads.

Let’s go buy some stuff with the money burning a hole in our pockets

Intel over the past years has also been on a bit of a shopping spree, buying all manner of companies at very high valuations. Though we won’t go through all the acquisitions, they all seemed to have some sort of legitimate justification or logic, even though they may not have been anywhere near Intel’s wheelhouse. In the end, when we add up the price of the acquisitions and try to calculate the value added to Intel, we come up very short.

We would never criticize M&A as a method to grow, as we think that properly applied acquisitions can propel a company well beyond its peers and into new, faster markets. But badly done M&A, done with weak logic, can sink a company.

Mobile Phones are toys that will never amount to anything

Intel famously balked at making CPUs for Apple’s iPhone and essentially completely “whiffed” on the smartphone and tablet markets while TSMC embraced them. (This somehow reminds me of a software analyst I knew when we were both at Morgan Stanley, telling Bill Gates that microcomputers wouldn’t amount to anything when Morgan was pitching for Microsoft’s IPO business, which Goldman got, putting it on the tech map.) Though this was a single, key mistake, there was never a significant recovery effort until the game was already over.

Forgetting your roots / Taking your eye off the ball

Perhaps the peak of my concerns about Intel came a number of years ago at an Intel event. The CEO of Intel at the time (name withheld to protect the innocent…) was doing a presentation about the myriad new markets that Intel was getting into and looking at.

It was a litany of multibillion-dollar opportunities and amazing technologies. He spoke for an hour on a host of topics and I never heard him use the word “semiconductor” (or chip) once. You would not have known from the speech that Intel was even in the semiconductor business in any way, let alone that it was the “leader” from which all its profits came. When I walked out of the room I had the urge to short Intel on the spot… but didn’t.

Deserting a sinking ship

When we heard that the legendary Jim Keller was leaving Intel in the beginning of 2020, it was clearly an ominous sign. Jim is the semiconductor design genius/guru who has had stints at Tesla, Apple, AMD and finally Intel. He has been a bit of a turn-around SEAL team that parachutes in for a few years, pulls off a miracle and then hops on to the next lily pad to come to the next company’s rescue. He has since moved on to an AI chip startup that he will lead.

His departure around the time that Apple also abandoned the Intel ship was a very clear indication that things were already sinking. Here we are, almost a year later, still without a rescue plan.

A plane crash is never “just one thing” that went wrong

My point in all this is that Intel’s problem is not just bad yields and delays of 10nm and 7nm, caused by one or several esoteric technology issues, that made them fall behind TSMC in Moore’s Law.

Sure, those are the manifestation of other underlying issues that, taken together, have caused the symptoms to come to a head. It’s a bit like an airline trying to decide whether to outsource its airplane maintenance after a plane crashed due to a loose screw after years of neglect, mistakes and laying off the most experienced mechanics. Maybe it’s not the mechanics’ fault.

Maybe it’s a managerial fault that needs fixing first.

For inspiration look at what the semiconductor winners are doing

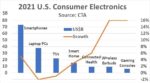

It is interesting to note that Intel used to be the biggest spender on capex in the industry and was passed by both TSMC and Samsung around the time its issues started to manifest.

We are now looking at Samsung potentially spending a record $30B a year and TSMC spending a record $20B, both more than Intel.

While randomly throwing money at an issue is not a solution, a focused spend on both the machinery and the people that are key to your leadership position is well warranted and should not be subject to cuts to come in on budget or make a quarterly earnings number. It is clear that TSMC, Samsung and now the Chinese view long-term spend and commitment in semiconductors as crucial to long-term success despite the short-term impact. Focusing on the stock price, buybacks and acquisitions while using capex and R&D to balance the budget is the quintessential case of sacrificing the long term for the short term.

The problem is much bigger than just Intel

While we love Intel as an American technology pioneer and former leader, we are also worried about Intel being the last standing US semiconductor maker (not counting Micron… which doesn’t quite count). As we have written about extensively for many years, the risk to the greater US, its national defense and its economy from losing semiconductor leadership is well beyond what most people can even begin to understand.

The numbers are many times Intel’s revenues and go well beyond just dollars and cents to national security.

While Intel and its shareholders are not responsible for the US national economy and security, the alignment between Intel’s long term success and the US’s best interest is clear and synergistic.

AMD’s example is not a good one

Many investors and analysts would take the easy position that following AMD’s lead in splitting the company in two between the fabs and the rest of the company worked for AMD and will work for Intel. We think that this is not a good comparison.

AMD did not have the minimum critical mass needed to support a fab and all the R&D that goes along with it. Intel has the size, scope and market needed to support the associated spend. The basis of the problem is not economic, as it was with AMD; it is an execution/technical problem that Intel has encountered. The divorce between AMD and its fab also did not work out too well for the fab (now Global Foundries).

AMD did find a buyer (sucker) in Abu Dhabi, who thought they were going to buy their way into high tech but didn’t anticipate the years and billions of endless spending to keep up in semiconductors, especially without the requisite revenue/profitability to support it. The math simply didn’t work and they wound up bailing out of the Moore’s Law race. GloFo is now off hiding in a “specialized” corner of the semiconductor industry hoping to avoid being trampled by the bigger players before they can IPO or otherwise unload the company.

Investors will point to AMD’s recent success, but we would point out that that success is more an example of TSMC’s success than AMD’s, much as the success of Apple, Nvidia and others is directly linked to TSMC. While we take nothing away from Lisa Su’s management of AMD, she did have the luck of being in charge when the supply deal with the millstone that was GloFo expired and AMD was free to use TSMC as a fab, which was somewhat of a “no-brainer”. Intel has neither the luxury of the same luck nor an easy decision to make.

Intel’s situation is much more complicated. In addition, AMD honestly didn’t have much of a choice at the time.

Putting toothpaste back in the tube is not easy

The other end of the decision spectrum is doubling down and fixing the existing issues with Intel’s manufacturing. Regaining leadership in Moore’s Law is likely a lost cause, as we haven’t seen TSMC ever stumble, which is likely what Intel would need to happen.

We are also at a bit of a transition, as Moore’s Law is indeed slowing and becoming more difficult, and multiple cores, multiple packaged die, 3D stacking and other alternatives attempt to make up for the geometric slowing.

This means that it isn’t just catching up to TSMC on Moore’s Law by leapfrogging a generation or miraculously fixing the yield issues; it also means doing a lot on the many alternative technology fronts. Intel does have the resources to fight a multi-front war. It would likely mean an increase in spend and duplicate costs, as Intel would have to outsource to TSMC while at the same time spending to fix and improve its existing process and technologies.

This larger incremental cost would likely not sit well with investors, as the additional costs would squeeze profitability in the short run (likely a number of years).

Outsourcing only works if you have multiple potential manufacturers…

We would point out that outsourcing only works in the long run if you have multiple, equally competent outsource partners to play off one another to keep them honest and pricing reasonable. If both Intel and AMD outsource to TSMC without Samsung as a viable alternative, they will both lose, as TSMC will be in the driver’s seat and will be able to determine winners, losers and pricing. AMD being at TSMC has worked because the real competition has been TSMC versus Intel.

We would also point out that Apple is in an even more vulnerable position than Intel with TSMC now that it has decided to move all its laptop/desktop CPU business away from Intel and put all its eggs in TSMC’s basket. In Apple’s case there are even fewer alternatives, as going to Samsung foundry as a backup or stalking horse against TSMC is not very viable. Apple is perhaps even more vulnerable than Intel would be.

Even though Samsung is pouring oodles of money into its hugely profitable chip business, that doesn’t mean it will be competitive in foundry, and where it is making money is memory anyway.

Outsourcing is a “burn the boats”/“roach motel”, one-way decision from which there is no recovery if it doesn’t work, and it will likely put Intel at the mercy of others. Both Intel and AMD will be in the exact same boat.

Thinking outside the box

We would imagine that there should be alternative, unique solutions to Intel’s manufacturing issue.

Could Intel buy/rent TSMC’s process/know-how? Could TSMC help fix/run Intel’s advanced fabs? Could Intel become TSMC’s presence in Arizona?

What alternative arrangements can get Intel’s manufacturing back on track more quickly while using TSMC in the interim to fill the gap in manufacturing?

Could the US government get involved with the “CHIPS for America” Act? The threat of losing Intel’s manufacturing would be a clear case for that legislation.

Maybe Apple would be willing to chip some money in to get onshore manufacturing that is not dependent on an isolated island off of mainland China.

There should be a way to help Intel out in the near term with manufacturing and help TSMC out with presence and diversity outside of its island.

Maybe Samsung could step in as a white knight and team up with Intel such that both get a synergistic effect with their logic manufacturing efforts.

We think that this complex situation requires a complex, out-of-the-box solution, which will require some compromise.

The Stocks

Unfortunately, we don’t see an easy way out for Intel that doesn’t hurt either the short-term or long-term valuation. If Intel increases outsourcing and abandons being a fab leader, they will sacrifice long-term value for short-term profits and a quick/easy fix.

If they choose to stay in the game, short-term profits and the stock will be hurt by the extra expense of outsourcing while at the same time continuing to invest even more to fix the problem as an independent manufacturer.

If Intel chooses the outsource approach, we would likely look to get out of the stock after it had run up on the news of the cost cutting and on investors thinking that what happened to AMD will happen to Intel.

If Intel chooses to fight on independently, we might be tempted to wait for a lower entry point as the impact on profitability would increase.

The wild card is some sort of in between solution that is a unique hybrid that is difficult to game out, but that may be the best hope for a good outcome.

From the perspective of semiconductor equipment makers, Intel giving up the ghost would be very bad, as they would not only lose Intel’s spend but would also have to deal with an ever more dominant TSMC, taking industry spending from three players down to two oppressive giants, Samsung and TSMC. More customers are always better for equipment makers.

Tokyo Electron and Hitachi do a lot of Intel business, as do KLA and, to a slightly lesser extent, Applied. Lam is more exposed to Samsung. ASML already does the vast majority of its business with TSMC and less with Intel, so it would see less impact.

About Semiconductor Advisors LLC

Semiconductor Advisors is an RIA (a Registered Investment Advisor), specializing in technology companies with particular emphasis on semiconductor and semiconductor equipment companies. We have been covering the space longer and been involved with more transactions than any other financial professional in the space. We provide research, consulting and advisory services on strategic and financial matters to both industry participants as well as investors. We offer expert, intelligent, balanced research and advice. Our opinions are very direct and honest and offer an unbiased view as compared to other sources.
