One of the many consequences of shrinking process nodes is that traditional OPC can no longer achieve good pattern fidelity with reasonable turn-around-time. But there is a solution; we made it ourselves and call it matrix OPC.

First, let’s explore the problems with traditional optical proximity correction (OPC) when applied to advance node layouts. During OPC, edges in a layout are broken into fragments and each fragment is iteratively adjusted by multiplying its edge placement error (EPE) with a carefully selected or calculated feedback. The traditional OPC algorithms assume a purely one-to-one correspondence between each individual polygon fragment on a mask and its associated EPE on the wafer. That means that moving one fragment on the mask only impacts the associated EPE, and conversely, that the incremental displacement of a fragment on a mask can be estimated by its associated EPE during the OPC iterations. This approach of tuning the layout based on single fragment EPE can no longer handle the stronger fragment-to-fragment interactions that start to be seen at the 28nm technology node.

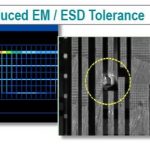

An example of such An example of such problem is shown in Figure 1. Traditional OPC is clueless when it comes to converging on a solution when there is strong cross-coupling between neighboring fragments. In the figure, the grey boxes are the wafer target, the rectangles are the mask shape, and the circles are simulations of the target shapes as they’ll actually be manufactured. The red lines are the contour shapes and also the mask shapes computed from a traditional OPC recipe, and green lines are also contour shapes, but with a perturbation applied to one edge on the mask for a test. The left picture shows that changing the upper edge of the lower rectangle on the mask (red line) only impacts the lower contact’s contour, but the impact is on all four sides. The right picture shows that a small change in bottom edge of the upper rectangle impacts the image of both contacts, but mostly the bottom one. A perfect solution would match the simulated contours to the grey target squares. In this case, conventional OPC is not capable of finding such a mask shape.

So how do you get OPC to recognize the effect neighbor fragments have on each other? Glad you asked, because we think the answer is matrix OPC. Matrix OPC incorporates the influence of neighboring fragments into the feedback control of fragment movements for full-chip OPC. We’ve been working on this technology for roughly 14 years, with the first publication at SPIE in 2002–“Model-based OPC using the MEEF matrix,” by Nick Cobb and Yuri Granik. Another paper to read on the topic is “A Feasible Model-Based OPC Algorithm Using Jacobian Matrix of Intensity Distribution Functions,” by Ye Chen, Kechih Wu, Zheng Shi and Xiaolang Yan. We’ve been perfecting the technique ever since, and it is now available in the Calibre[SUP]®[/SUP] tools. My colleague, Junjiang Lei, and I put this blog together as an introduction to the fruits of our long labor.

Calibre Matrix OPC is targeted to the 28nm, 20nm, 14nm, 10nm technology nodes. It is edge-based, full-chip level, enhanced OPC, and scales to large numbers of CPUs in the same way as the traditional OPC does and with comparable runtime. Its simulation and computation are use the compact form of the Calibre 3D mask models and resist models. It is compatible with and augments the existing Calibre OPC/RET techniques, including tagging, retargeting, process-window OPC and multi-patterning OPC. We also made sure that Matrix OPC can be combined with traditional OPC in the same recipe.

Matrix OPC works because it changes each EPE on the wafer by calculating the movements of all polygon fragments on the mask collectively. The correlations between fragments are captured in a mask error enhancement factor (MEEF) matrix. [Unfortunately, theMEEF matrix is almost always ill-posed, large in size, and sparsely populated. The condition of such a matrix demands extra care in applications, which we discussed in a SPIE paper this year, “Model-based OPC using the MEEF matrix II” Junjiang Lei, Le Hong, George Lippincott, James Word, Proc. of SPIE Vol. 9052 (2014).]

We demonstrated the quality and performance of matrix OPC in benchmark tests of 14nm-28nm designs with known difficult hotspots. Figure 2 shows a few of the cases, which are either industrial full-chip designs or clips from full-chip layouts that were large enough to project the full chip quality confidently. On real customer designs, matrix OPC improves the OPC results in terms of the verification standards defined by customers’ OPC verification recipes. In each case, the matrix OPC recipe results in a much lower relative error number than the baseline OPC.

In summary, the Calibre team is pleased to offer matrix OPC to reduce the severity of the errors in the OPC verification results. This new functionality is fully compatible with the existing Calibre OPC techniques and solutions, including, but not limited to, the 3D mask model, the CM1 resist model, multi-patterning OPC, tagging functions, retargeting techniques, and process window OPC functions. In a given recipe, the matrix OPC iteration and the traditional OPC iteration can be used in a mixed fashion. We’ve also recently reduced the runtime overhead required for Matrix OPC to nearly 0.

Le Hong (product engineer) and Junjiang Lei (technical program manager), Mentor Graphics