Kush Gulati is the CEO of Omni Design Technologies, a company he co-founded in 2015 to transform how high-performance analog IP is developed and integrated into SoCs in advanced process nodes. He holds a PhD from MIT, is a renowned expert in data converters, and is a serial entrepreneur. His first startup was a detective agency he started in middle school; Omni Design is his fifth business. Kush’s previous startup, Cambridge Analog Technologies, was acquired by Maxim Integrated in 2011, and he led the Advanced IP Solutions group at Maxim for a few years before founding Omni Design. Speaking with Kush, I am immediately struck by his innovative thought process and his clear vision of both the future of the semiconductor industry and how Omni Design is enabling highly differentiated SoCs across a wide range of emerging applications, including 5G, wireline and optical communications, automotive/ADAS, AI, and IoT.

Please tell us about Omni Design.

Omni Design was founded to meet an industry need. The world we live in is analog, and virtually all the data processing is digital. So… you need to translate the analog data into digital, process it, and then transform it back to analog to operate in the real world. Technologies such as 5G, autonomous vehicles, optical computing, and image processing are driving exponential growth in the requirements to receive and transmit data at ever-increasing speeds and dynamic range using ever lower energy.

Traditional suppliers of analog-to-digital converters (ADCs) and digital-to-analog converters (DACs) have been selling discrete devices that are incorporated onto a printed circuit board with the rest of the system. As systems have become both more integrated and more powerful, there is a tremendous and growing industry demand for these ADCs and DACs in the form of embedded IP cores.

Omni Design is helping meet this demand by offering a portfolio of ADCs and DACs at various resolutions (from 6 bits to 14 bits) and sampling rates (from 5 megasamples per second to more than 20 gigasamples per second) in 28nm and advanced FinFET process technologies.
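To put those resolution and sampling-rate ranges in perspective, here is a minimal sketch (an editorial illustration, not Omni Design data) that applies the textbook formula for the ideal quantization-limited signal-to-noise ratio of an N-bit converter, SNR ≈ 6.02·N + 1.76 dB for a full-scale sine input, and computes the raw output data rate as bits per sample times samples per second. The function names are hypothetical, and the sketch combines every quoted resolution endpoint with every quoted rate endpoint purely for illustration; it does not describe any actual product.

```python
def ideal_snr_db(bits: int) -> float:
    """Ideal quantization-limited SNR (dB) of an N-bit converter, full-scale sine input."""
    return 6.02 * bits + 1.76


def raw_data_rate_bps(bits: int, samples_per_second: float) -> float:
    """Raw converter output data rate in bits per second (no framing or coding overhead)."""
    return bits * samples_per_second


if __name__ == "__main__":
    # Endpoints quoted in the interview; how they are paired here is an assumption.
    for bits in (6, 14):
        for rate in (5e6, 20e9):
            print(
                f"{bits:2d} bits at {rate:.0e} S/s: "
                f"ideal SNR {ideal_snr_db(bits):.2f} dB, "
                f"raw rate {raw_data_rate_bps(bits, rate) / 1e9:.2f} Gb/s"
            )
```

Real converters fall short of the ideal figure because of thermal noise, clock jitter, and nonlinearity, which is why published specifications are usually given as an effective number of bits rather than nominal resolution alone.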

Omni Design has a tremendously creative team – this is at the very heart of our ability to build complex circuitry that expands the state of the art while simultaneously achieving a very high rate of first silicon success.

What makes Omni Design unique?

First of all, Omni Design has invented techniques that improve the power efficiency and performance of data converters and analog circuits, as well as techniques that make analog designs highly compatible with FinFET design. This makes our products easier to integrate with digital circuits in advanced FinFET processes, which is of course key to enabling complex SoCs for applications such as 5G and LiDAR.

Second, we take a systems approach to analog design – focusing not only on the specific IP we are developing, but also on how it will fit into the customer’s overall system specification. Our optimization process enables customers to get the maximum value from the IP we deliver to them. In LiDAR applications, for example, we focus not just on one block of the signal chain, but on the entire solution from the optical sensor interface to the digital interface.

Third, we are developing our analog IP using a platform-based approach. From each of these platforms we can deliver data converters that are closer in spec to the customers’ requirements without needing to develop them as custom IP from the ground up. The benefit to the customer, of course, is that once the architecture of one of our platforms is silicon validated, the derivatives of that platform can be deployed quickly and with high confidence in their products, without requiring additional test chips and silicon measurements.

What keeps system designers up at night?

The real-world demands of emerging technologies such as self-driving cars, 5G, and IoT are clearly pushing the envelope when it comes to analog design and the integration of high-speed analog IP into an SoC. Discrete components are simply not able to provide the required performance, especially at a cost and with the power efficiency necessary to move these technologies into the mainstream. Consequently, system designers must look for novel techniques to integrate complex analog functionality into their SoCs to get their next-generation products to market.

How can Omni Design help?

Omni Design works in close partnership with its customers to ensure that they get highly complex analog IP that, when incorporated into their SoCs, works the first time with performance that meets or exceeds the original design specifications. We use our proprietary SWIFT™ technology, so customers can be confident that the data converter IP will meet or exceed their power and performance requirements – at a competitive cost.

Which markets is Omni Design targeting?

Omni Design is focused on the leading edge of the market – delivering state-of-the-art analog IP in process nodes from 28nm to advanced FinFET nodes. Our customers are razor-focused on product differentiation in their end markets and come to us with challenging requirements in sampling rates, power, resolution, and many other specifications tied to the quality of the data converter operation. We work closely with them – as a consultative partner – to design the final data converters and analog front-end modules so that these customers can optimize their system and derive the maximum benefit from our IP.

Final thoughts on the semiconductor IP business?

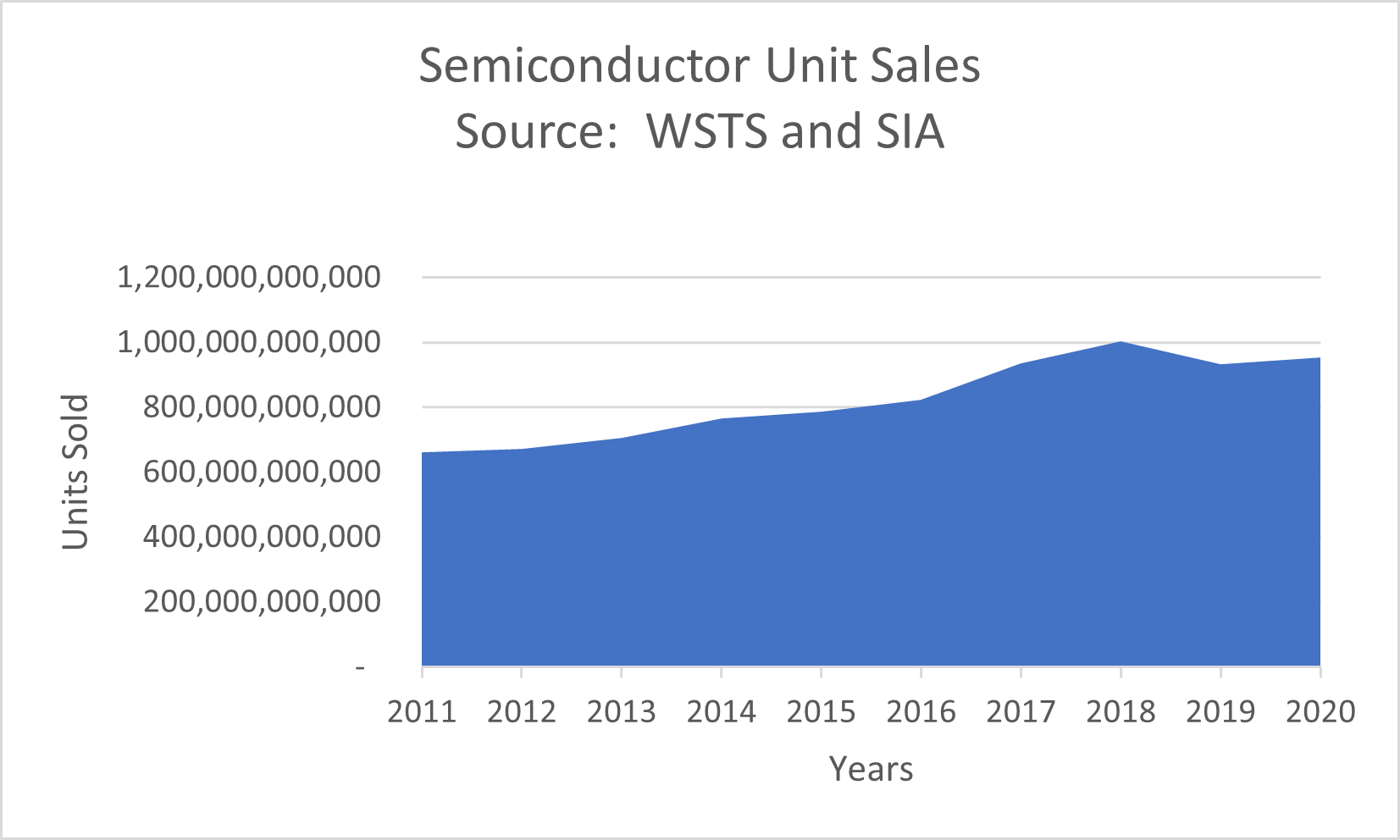

We are in the midst of another major transformation of the semiconductor industry. The move to the fabless model that dominated the 1990s was the first transformation. The emergence of foundries eliminated the need for companies to pour huge amounts of capital into increasingly expensive manufacturing facilities. Although that hurdle was eliminated, another gradually emerged as those foundries advanced to increasingly sophisticated process nodes. In FinFET process nodes, the concept of a complex system on a chip has become a reality. When tens of billions of transistors are available, it is possible to create extraordinarily powerful and differentiated solutions. The design challenge, however, is daunting. It would require hundreds of man-years to take advantage of those transistors using a full-custom design methodology.

This challenge will be solved by IP and design reuse. Omni Design and other IP companies are creating complex building-block circuits that were once discrete chips but are now being integrated by skilled systems developers using sophisticated design tools to quickly and efficiently create highly capable SoCs. By enabling the integration of high-performance, reusable analog IP in complex SoCs designed in FinFET processes, we will see the same sort of semiconductor industry explosion in innovation that occurred when the fabless model initially emerged.
