In recent years, the volume and velocity of discussion about chiplets have intensified. A major reason is the projected market opportunity. According to research firm Omdia, the chiplet-driven market is expected to reach $6B by 2024, up from just $645M in 2018. That is a projected increase of roughly nine-fold over a six-year period.

Chiplets are neither chips nor packages. They are what we end up with after architecturally disaggregating a large integrated circuit into multiple smaller dies. The smaller dies are referred to as chiplets. Conceptually, one could say that a chiplet-based design resembles a System-in-Package (SiP) design, but the similarity stops at the concept level at best. Historically, the SiP approach was adopted primarily for faster time to market by mixing and matching dies of pre-existing chips. Often these pre-existing dies were built in different process technologies. The package-related cost savings, combined with the time-to-market benefit, made certain SiP-based products a better business decision than a system-on-chip (SoC) approach.

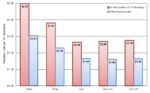

Chiplet-based design starts to look attractive at sub-10nm process nodes, for several reasons. For one, lithography equipment imposes reticle size limits to ensure distortion-free mask exposures, which caps the size of large chips. Another reason is the exorbitant cost of developing an SoC-based product. Yet another is the number of good dies per wafer that can be expected from large chips at these advanced process nodes: the yield rate is better for smaller dies. Multiple smaller dies can avoid the high development cost associated with very advanced process nodes and still deliver an overall product cost advantage as a chiplet-based implementation. Additionally, at sub-5nm process nodes, transistor cost is projected to rise. Refer to Figure 1 below.

Figure 1: Cost of transistor at different process technologies

Source: International Business Strategies 2020
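
The yield argument above can be made concrete with a small calculation. The sketch below uses the textbook Poisson yield model, which is an assumption here and not something taken from the whitepaper. The defect density and die areas are illustrative placeholders, and the model ignores wafer edge loss, assembly yield and packaging cost.

```python
import math


def poisson_yield(die_area_mm2: float, defects_per_cm2: float) -> float:
    """Expected fraction of defect-free dies under a simple Poisson model."""
    area_cm2 = die_area_mm2 / 100.0  # 1 cm^2 = 100 mm^2
    return math.exp(-area_cm2 * defects_per_cm2)


def silicon_per_good_product(die_area_mm2: float, dies_per_product: int,
                             defects_per_cm2: float) -> float:
    """Wafer area (mm^2) consumed per good product.

    Assumes every die is tested before assembly (known good dies), so a
    defective chiplet costs only its own area, not the whole product.
    """
    y = poisson_yield(die_area_mm2, defects_per_cm2)
    return dies_per_product * die_area_mm2 / y


D0 = 0.2  # assumed defect density in defects per cm^2 (illustrative only)

# One large 800 mm^2 die versus the same logic split into four 200 mm^2 chiplets.
monolithic = silicon_per_good_product(800.0, 1, D0)
chiplets = silicon_per_good_product(200.0, 4, D0)

print(f"yield, 800 mm^2 die: {poisson_yield(800.0, D0):.1%}")
print(f"yield, 200 mm^2 die: {poisson_yield(200.0, D0):.1%}")
print(f"silicon per good product, monolithic: {monolithic:.0f} mm^2")
print(f"silicon per good product, chiplets:   {chiplets:.0f} mm^2")
```

Under these assumptions the smaller dies yield better and consume less wafer area per good product. The size of the gap depends entirely on the assumed defect density and die sizes.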

Currently, a lot of attention and effort is directed at chiplet interfaces. A number of companies have developed excellent IP products supporting different types of chiplet interfaces. Equally important is a well-defined design and integration methodology that will deliver successful chiplet-based products. A recently published whitepaper from Siemens EDA addresses exactly this. It identifies the technical challenges to anticipate and presents ways to overcome them. The whitepaper was authored by Keith Felton, Anthony Mastroianni, Kevin Rinebold and Per Viklund. This blog summarizes the salient points from that whitepaper.

Early planning and predictive analysis

Chiplet integration introduces a number of challenges. Apart from power delivery and thermal management, developing and co-optimizing the interposer and package becomes difficult because different teams will be working on different chiplets. Investigating the interposer connectivity structures and evaluating the available material options early on helps prevent late-stage design changes. Approximating metal coverage per layer with a Monte Carlo-type sweep analysis helps identify the parts of a circuit whose performance would be impacted the most. These steps help accelerate the design and integration process.
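
As a rough illustration of a Monte Carlo-type sweep over metal coverage, the sketch below samples a coverage fraction per layer from an assumed range and ranks circuit blocks by how much a toy performance metric varies. The layer names, coverage ranges, block names and the linear sensitivity model are all hypothetical. A real flow would take these from extraction and analysis tools, and the whitepaper does not prescribe this model.

```python
import random
import statistics

# Hypothetical early estimates: (low, high) metal coverage fraction per layer.
COVERAGE_RANGES = {"M1": (0.30, 0.60), "M2": (0.20, 0.50), "RDL": (0.40, 0.80)}

# Hypothetical sensitivities: delay shift in ps per unit of coverage, per layer.
BLOCK_SENSITIVITY = {
    "serdes_lane": {"M1": 5.0, "M2": 2.0, "RDL": 40.0},
    "clock_spine": {"M1": 12.0, "M2": 15.0, "RDL": 5.0},
    "gpio_bank": {"M1": 1.0, "M2": 1.0, "RDL": 2.0},
}


def sweep(trials: int = 2000, seed: int = 0):
    """Return (block, spread_ps) pairs, most coverage-sensitive block first."""
    rng = random.Random(seed)  # seeded so the sweep is repeatable
    samples = {block: [] for block in BLOCK_SENSITIVITY}
    for _ in range(trials):
        # Draw one plausible coverage value for every layer.
        coverage = {layer: rng.uniform(lo, hi)
                    for layer, (lo, hi) in COVERAGE_RANGES.items()}
        # Toy linear model: delay shift is a weighted sum of layer coverage.
        for block, sens in BLOCK_SENSITIVITY.items():
            shift = sum(sens[layer] * coverage[layer] for layer in coverage)
            samples[block].append(shift)
    spread = {block: statistics.pstdev(vals) for block, vals in samples.items()}
    return sorted(spread.items(), key=lambda kv: kv[1], reverse=True)


ranking = sweep()
for block, sigma in ranking:
    print(f"{block}: delay spread {sigma:.2f} ps")
```

Blocks at the top of the ranking are the ones whose performance moves most as coverage changes, which makes them candidates for closer analysis early in planning.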

Hierarchical chiplet co-optimization

Even though any one chiplet implements only a subset of the functions of an SoC, planning the chiplets hierarchically provides a lot of benefits. When this is combined with a robust engineering change order (ECO) mechanism, as one would use with an SoC approach, asynchronous changes from different chiplet teams do not fall through the cracks. Each chiplet's bump array and signal assignments can be created at the interposer level and passed back to the individual chiplet design teams.
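
The idea of defining a bump array at the interposer level and handing it back to a chiplet team can be sketched as a small data structure. The class, field and signal names below are hypothetical and do not reflect any Siemens EDA data format. The sketch only shows that assignments are owned in one place and exported per chiplet.

```python
from dataclasses import dataclass, field


@dataclass
class BumpArray:
    """Bump grid for one chiplet, planned at the interposer level."""
    chiplet: str
    rows: int
    cols: int
    pitch_um: float
    assignments: dict = field(default_factory=dict)  # (row, col) -> signal name

    def assign(self, row: int, col: int, signal: str) -> None:
        if not (0 <= row < self.rows and 0 <= col < self.cols):
            raise ValueError(f"bump ({row}, {col}) is outside the array")
        if (row, col) in self.assignments:
            raise ValueError(f"bump ({row}, {col}) is already assigned")
        self.assignments[(row, col)] = signal

    def export(self) -> list:
        """(signal, x_um, y_um) rows relative to the chiplet origin."""
        return [(signal, col * self.pitch_um, row * self.pitch_um)
                for (row, col), signal in sorted(self.assignments.items())]


# The interposer team plans the array, then hands the result to the chiplet team.
interposer_plan = BumpArray(chiplet="compute_die", rows=2, cols=2, pitch_um=55.0)
interposer_plan.assign(0, 0, "VDD")
interposer_plan.assign(0, 1, "VSS")
interposer_plan.assign(1, 0, "d2d_tx0")

handoff = interposer_plan.export()
for signal, x_um, y_um in handoff:
    print(f"{signal}: x={x_um} um, y={y_um} um")
```

Rejecting out-of-range and duplicate assignments at this level is one way to keep conflicting changes from separate teams from going unnoticed.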

Chiplet interface management and design

Even with standardized chiplet interfaces, graphical schematics are frequently used to specify the interfaces. The designer looks up the interface definition for each chiplet and manually creates the required connectivity. This is a lot of manual work and can introduce mistakes that are hard to catch early in the design process. Siemens EDA introduces a novel concept for eliminating this risk.

A novel concept: interface-based design

The interface description becomes part of the chiplet model as an interface-based design (IBD) object. This approach ensures correct-by-design chiplet connectivity. It lets the designer focus on chiplet floorplanning and on chiplet-to-package or chiplet-to-interposer signal assignments. The designer can explore routing scenarios without having to transition to a substrate place-and-route tool.
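
To illustrate why carrying the interface as an object helps, here is a minimal sketch in which two chiplet ports are connected as a unit and the individual nets are derived from the shared interface definition. It is not the Siemens EDA implementation. All class names, signal names and the same-name pairing rule are assumptions, and a real die-to-die interface would also need to handle transmit/receive crossover.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Interface:
    """Reusable interface definition: a protocol name plus ordered signals."""
    protocol: str
    signals: tuple


@dataclass(frozen=True)
class Port:
    """An instance of an interface on a specific chiplet."""
    chiplet: str
    name: str
    interface: Interface


def connect(a: Port, b: Port) -> list:
    """Expand a port-to-port connection into signal-level nets.

    Ports with different interface definitions are rejected, so a mismatch
    is caught when the connection is made, not during later verification.
    """
    if a.interface != b.interface:
        raise ValueError(
            f"{a.chiplet}.{a.name} and {b.chiplet}.{b.name} use different interfaces")
    return [(f"{a.chiplet}.{a.name}.{s}", f"{b.chiplet}.{b.name}.{s}")
            for s in a.interface.signals]


d2d = Interface(protocol="example_d2d", signals=("clk", "data0", "data1"))
cpu_port = Port(chiplet="cpu", name="d2d_0", interface=d2d)
io_port = Port(chiplet="io", name="d2d_0", interface=d2d)

nets = connect(cpu_port, io_port)
for src, dst in nets:
    print(f"{src} -> {dst}")
```

The designer makes one connection per interface, and the signal-level nets follow from the definition instead of being drawn by hand.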

Electrical, thermal and mechanical stress management

The close proximity of chiplets may introduce chip-package interactions (CPI) that result in electrical, thermal and/or mechanical stress issues. It is very important that predictive analysis is started as early in the planning phase as possible. This will help identify the right materials for certain connectivity structures.

Testing and testability

Testing and testability are always challenging aspects of chip design. Although the chiplets will be wafer sorted and delivered as known good dies, the assembled product still needs to be tested. IEEE test standards are being developed to accommodate 2.5D test methods. Tool vendors may take different approaches to implementing these standards, which can cause test compatibility issues when chiplets from different vendors are used. A number of advances are being made in this area as well to ensure interoperability.

Verification and signoff

With so many aspects involved, it is imperative that verification and in-design validation happen throughout the process, from the initial planning stages through layout. This ensures that any layout enhancement techniques used to improve yield and reliability have not affected the design in unintended ways. If they have, the issues are caught early, before the design is sent to manufacturing.

Summary

In essence, Siemens has laid out a methodology with clear steps for succeeding with heterogeneous chiplet design and integration. It has also highlighted the need for the ecosystem to come together to support testing of chiplet-based designs. For detailed insight, please refer to the whitepaper, “Heterogeneous Chiplets Design and Integration”, and have exploratory discussions with Siemens EDA.
