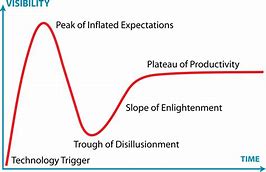

There are few tech promises these days as prominent as those surrounding driverless cars (trucks, buses, …). But thanks to always-on media amplifiers, it’s not always easy to separate potential from reality. I recently talked to Kurt Shuler, VP Marketing at Arteris, who shared his view after returning from this year’s CES. Kurt is as much an enthusiast as anyone but pointed immediately to the Gartner hype cycle, saying that pitches were more muted this year, particularly in moving away from live demos, perhaps thanks to last year’s less-than-stellar performances. On that curve, Kurt feels we’ve moved past peak hype and are now into the long slog of delivery.

He’s not alone in this view. Others are also digging into the details, looking more closely at what it takes to get to different levels of autonomy and are more skeptical that wide-scale autonomy is right around the corner. No-one is saying it’s not going to happen, but reality is setting in on how long it’s going to take. In Kurt’s view, we’re 80% of the way there, but the last 20% is going to take years, maybe even decades.

That obviously contrasts with the marketing message, since no-one wants to signal that they’re intentionally stretching out plans. Intel is making a big push with its acquisition of Mobileye; however, a lot of what they are doing is immediately relevant to ADAS, whether or not autonomy takes longer. Tier1 companies are following a variety of strategies, some building their own systems (HW+SW), others using commercial branded systems under the hood. Baidu and Google’s Waymo are pushing their big-data mining/management advantage, though it’s still unclear to me how far Google will get, given their spotty record on Other Bets.

Among OEMs, there’s a wide spectrum, from Tesla who always market to the hilt and seem to want to boil the ocean as fast as possible (witness now autonomous trucks), to perhaps Volvo who initially said they would offer autonomy in 2020 and now have pulled back, focusing more on driver assistance.

Obviously that last 20% represents the difference between what is possible and what is functionally safe, reliable and cost-effective, as in we’re prepared to let these things on the road in the real world and we can afford them. In our neck of the woods in semiconductor design, solution providers are still working hard to push more functionality into products with the “right” HW/SW and PPA balance. What makes for right depends on perspective. The Tier1s are pushing for more to be done in hardware, especially when integrating their IP, and less in software, since that means fewer software problems to manage in the field, a less complex software BOM and generally a reduced safety/security problem around that software.

This naturally requires jamming more functions into a system on chip. Mobileye is adding more hardware accelerators to the bus and Kurt said that NVIDIA is now adding fixed-point accelerators in their latest architecture. The NVIDIA move shouldn’t be surprising. While they dominate in neural net (NN) training, that’s running in the cloud where power isn’t such a big issue and the MAC instructions central to NNs can use floating-point accelerators. In a car, power is very much an issue, which is why NNs on edge applications (inferencing rather than training) have moved to more power-efficient fixed-point accelerators.
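To make the fixed-point versus floating-point distinction concrete, here is a minimal sketch of a multiply-accumulate (MAC) done in 8-bit integers. It is an illustration only, not how any Mobileye or NVIDIA accelerator is actually built: the function names, the 8-bit width and the 1/64 scale factor are all assumptions chosen for the example.

```python
# Illustrative sketch only: names, the int8 range and the scale factor are
# assumptions for this example, not any vendor's actual design.

def quantize(values, scale):
    """Map floats to signed 8-bit integers using a fixed scale (value ~ q * scale)."""
    return [max(-128, min(127, round(v / scale))) for v in values]

def mac_int(a_q, b_q):
    """Integer multiply-accumulate: the core operation of NN inference."""
    acc = 0  # hardware would typically use a wider accumulator than the 8-bit inputs
    for a, b in zip(a_q, b_q):
        acc += a * b
    return acc

def dot_fixed(a, b, scale_a, scale_b):
    """Dot product computed in integers, then rescaled back to a float once."""
    return mac_int(quantize(a, scale_a), quantize(b, scale_b)) * scale_a * scale_b

weights = [0.5, -0.25, 0.75]
inputs = [1.0, 0.5, 0.5]
result = dot_fixed(weights, inputs, 1 / 64, 1 / 64)
```

All the arithmetic in the inner loop is on small integers; the only floating-point work is the single rescale at the end. That is the kind of trade that lets inference hardware save power relative to floating-point MACs, at the cost of some precision when values do not land exactly on the quantization grid.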

Still on the subject of power, functional safety tends to increase power since it requires levels of redundancy. A common method to mitigate the impact of hardware failures in a CPU (through soft errors for example) is to have two (or more) CPUs doing the same calculations, then compare the results. In other areas, duplication or triplication of logic is common in safety-critical functions. Good for safety, not so good for power.
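The two redundancy schemes mentioned above can be sketched in software terms. This is a simplified model under stated assumptions: real lockstep CPUs compare results in hardware, cycle by cycle, and the function names here (`lockstep`, `tmr_vote`) are hypothetical.

```python
# Simplified software model of hardware redundancy; names are hypothetical.
from collections import Counter

def lockstep(compute_a, compute_b, x):
    """Dual redundancy: run the same calculation twice and compare.

    A mismatch can be detected but not corrected, since there is no
    way to tell which of the two results is the faulty one.
    """
    a, b = compute_a(x), compute_b(x)
    if a != b:
        raise RuntimeError("lockstep mismatch: possible hardware fault")
    return a

def tmr_vote(results):
    """Triple redundancy: majority vote masks a single faulty result."""
    value, count = Counter(results).most_common(1)[0]
    if count < 2:
        raise RuntimeError("no majority: more than one unit disagrees")
    return value

ok = lockstep(lambda v: v * 2, lambda v: v * 2, 21)
voted = tmr_vote([7, 7, 9])  # one unit returned a corrupted value
```

Either way the same work is done two or three times, which is exactly why the safety gain comes with a power penalty.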

And while on safety, naturally this demands a very high quality of service, even though there are all these units hanging off the bus, along with traffic from the growing number of sensors around the car. Kurt made the point that when you’re traveling at 70 mph and someone cuts in front of you, those clever electronics have milliseconds to respond; cars don’t stop on a dime. Rolling that back into the system, functions on the bus have to be responding at picosecond levels, and reliably, not “most of the time unless bus traffic gets heavy”. This takes very careful optimization and a bus architecture which can support that optimization; I’m pretty sure everyone would agree this has to be a NoC.
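A quick back-of-the-envelope calculation shows why milliseconds matter at 70 mph. The sketch below only converts speed and response latency into distance covered before the system reacts; the latency values are hypothetical, picked to show the scale, and braking distance itself is not modeled.

```python
# Back-of-the-envelope sketch; the latency values below are hypothetical.
MPH_TO_MPS = 1609.344 / 3600.0  # metres per second in one mile per hour

def distance_during_latency(speed_mph: float, latency_s: float) -> float:
    """Distance in metres travelled at constant speed before any response begins."""
    return speed_mph * MPH_TO_MPS * latency_s

for latency_ms in (1, 10, 100):
    d = distance_during_latency(70, latency_ms / 1000)
    print(f"{latency_ms:>3} ms -> {d:.2f} m")
```

At 70 mph the car covers roughly 3 cm for every millisecond of delay, so a 100 ms end-to-end response already costs about 3 m before the brakes even begin to act.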

One more point on safety. When functionality is divided up between multiple components provided by multiple suppliers and assembled by a solution builder, how does the system builder ensure overall system safety? Through redundancy certainly, but how do they deal with varying or differing levels of safety management between these functions? More responsibility for safety management probably has to fall on or at least be mediated by the interconnect.

Looks like there’s still a lot of hard work to be done to turn the promise of autonomy into a scalable reality, but that shouldn’t be a big surprise. In the meantime, on-chip interconnect, particularly NoC interconnect, is likely to play a significant role in those solutions. You can learn more about Arteris solutions HERE.
