RISC-V tends to generate excitement over the possibilities for the processor core, any custom instruction extensions, and its attached memory subsystem. Those are all necessary steps toward system-level performance, but is that attention sufficient? Architects who have ventured into larger system-on-chip (SoC) designs know how complicated it gets to interconnect many IP blocks that all vie for data paths at once. Arteris suggests a ‘system-in’ RISC-V design approach for solving system-level challenges instead of a ‘RISC-V out’ perspective.

De facto specs can cross up designers quickly

“The typical cut point for starting an SoC design is a standard protocol like AXI,” says Frank Schirrmeister, VP of Solutions and Business Development for Arteris. “In that respect, RISC-V design is fundamentally the same as designing with other processor cores supporting AXI for their system interface.” Many peripheral IP blocks also support AXI. Broad support usually makes for a clear choice, but flexibility and optimization soon become factors at scale.

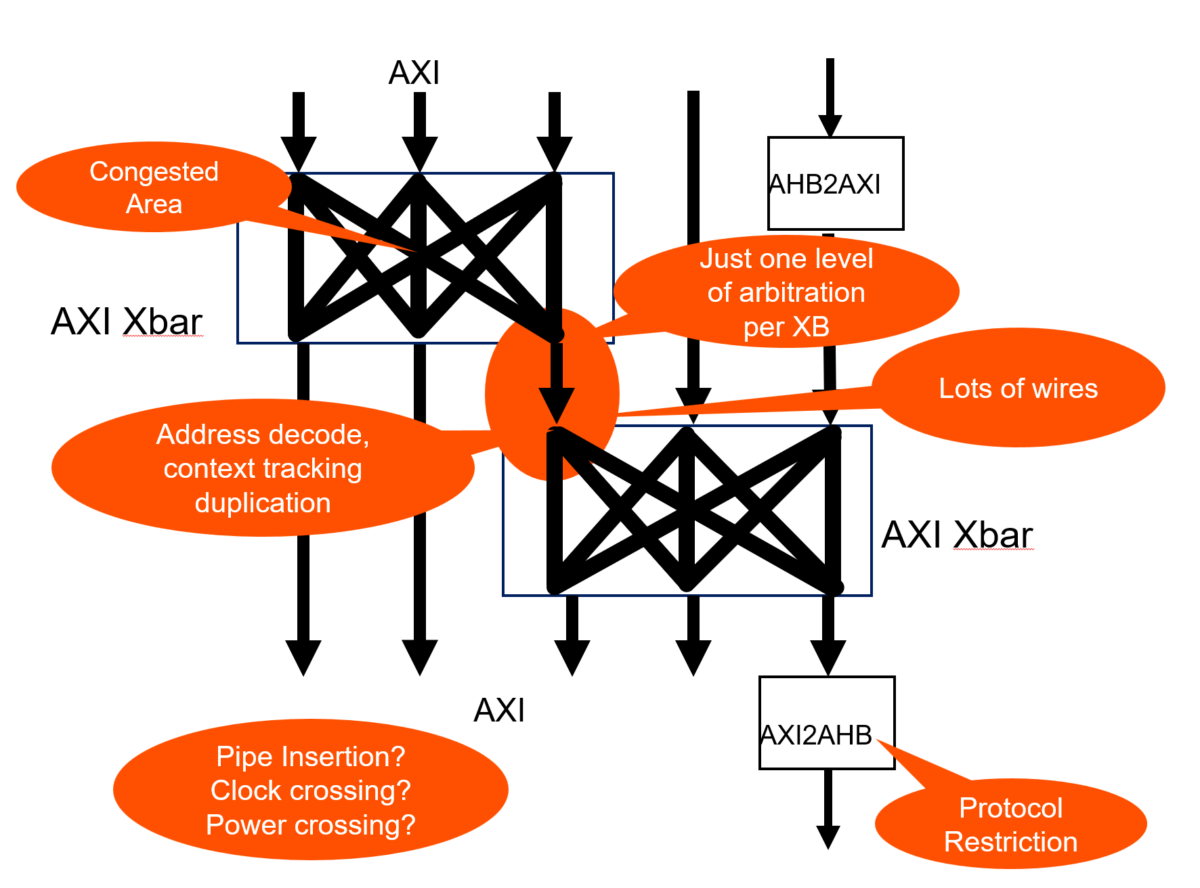

An AXI interface carries about 300 wires, all of which need routing to every IP block it connects across the chip. It doesn’t take many IP blocks in the mix to create raw wiring congestion. Architects frequently turn to crossbars and bridges as IP block counts scale. Another problem develops quickly – how does one arbitrate between high-speed and high-priority data paths when several must be active simultaneously? AXI crossbars typically offer only one level of arbitration per switch. Protocol restrictions throttle performance further.

A simplified view of AXI crossbar routing, courtesy of Arteris

Establishing AXI routes between the RISC-V core and various IP blocks may work logically but not physically without extra care and feeding. “Every IP block could be on a different power or clock domain, leading to bigger problems,” continues Schirrmeister. Clock domain crossings can lead to instabilities and timing closure problems. Power domain crossings add level-shifting complexity and make it harder to manage power consumption by shutting down unused blocks and sequencing their reawakening.

Adding a NoC embraces AXI in a co-optimized solution

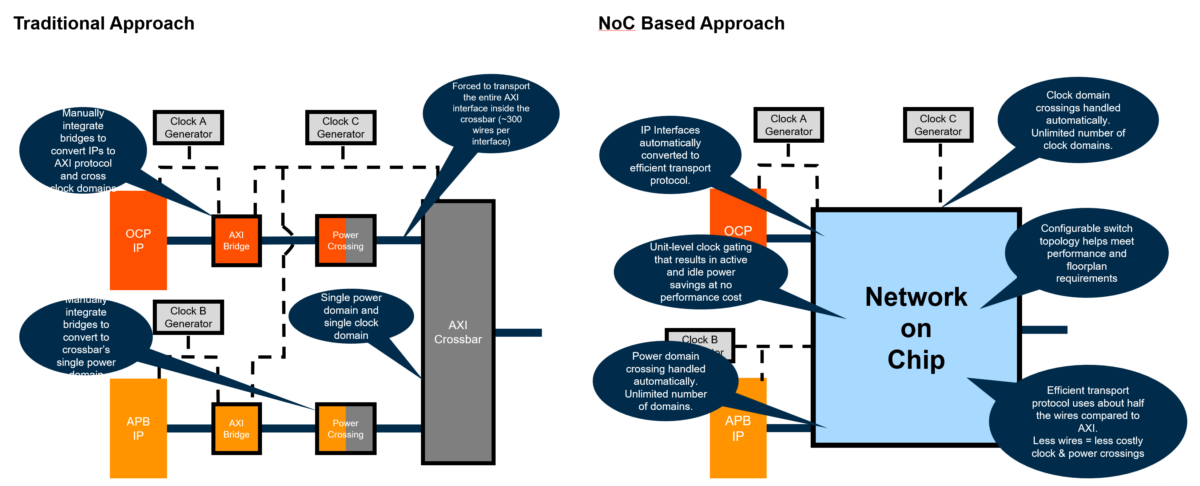

IP blocks may support different protocols. AXI is widely adopted, but there are others, such as OCP and CHI, each with its own versions and specific supported features. In simpler designs, users may prefer to start with an AXI crossbar, but there is a more efficient alternative for the SoC-wide interconnect. “Without a network-on-chip (NoC), any attempts at co-optimizing an SoC are undone by having to deal with crossbars and clock and power domain complexity,” observes Schirrmeister. “With a NoC, clock and power domain issues are localized and dealt with at the IP block boundary instead of in the network.”

A NoC simplifies transport and the handling of domain crossings, courtesy of Arteris

The transport protocol inside an Arteris NoC uses about half the wiring of an AXI interface, cutting wiring congestion. Separating clock and power domain crossings from the network helps guarantee NoC timing closure and improves the ability to manage power on a block-by-block basis. Both AXI and non-AXI blocks integrate more easily with localized protocol conversion.
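The wiring figures above lend themselves to a quick back-of-the-envelope comparison. The sketch below is only illustrative arithmetic: it takes the article's "about 300 wires" per AXI interface and "about half" for the NoC transport at face value, and it assumes (hypothetically) that both approaches route the same number of equal-width links. Real topologies will differ, so treat the function names and link counts as placeholders.

```python
# Rough wire-count comparison using the article's approximate figures.
AXI_WIRES_PER_LINK = 300                      # "about 300 wires" per AXI interface
NOC_WIRES_PER_LINK = AXI_WIRES_PER_LINK // 2  # "about half" for the NoC transport


def total_wires(num_links: int, wires_per_link: int) -> int:
    """Raw wire count for num_links links of equal width."""
    if num_links < 0 or wires_per_link < 0:
        raise ValueError("counts must be non-negative")
    return num_links * wires_per_link


def compare(num_links: int) -> dict:
    """Compare AXI and NoC transport wiring for the same number of links.

    Assumption: the link count is identical in both cases, which is a
    simplification made only for this illustration.
    """
    axi = total_wires(num_links, AXI_WIRES_PER_LINK)
    noc = total_wires(num_links, NOC_WIRES_PER_LINK)
    return {"axi": axi, "noc": noc, "saved": axi - noc}


if __name__ == "__main__":
    for links in (4, 8, 16):  # hypothetical link counts
        print(links, compare(links))
```

Under these assumptions the savings grow linearly with the number of routed links, which is why the difference matters more as IP block counts scale.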

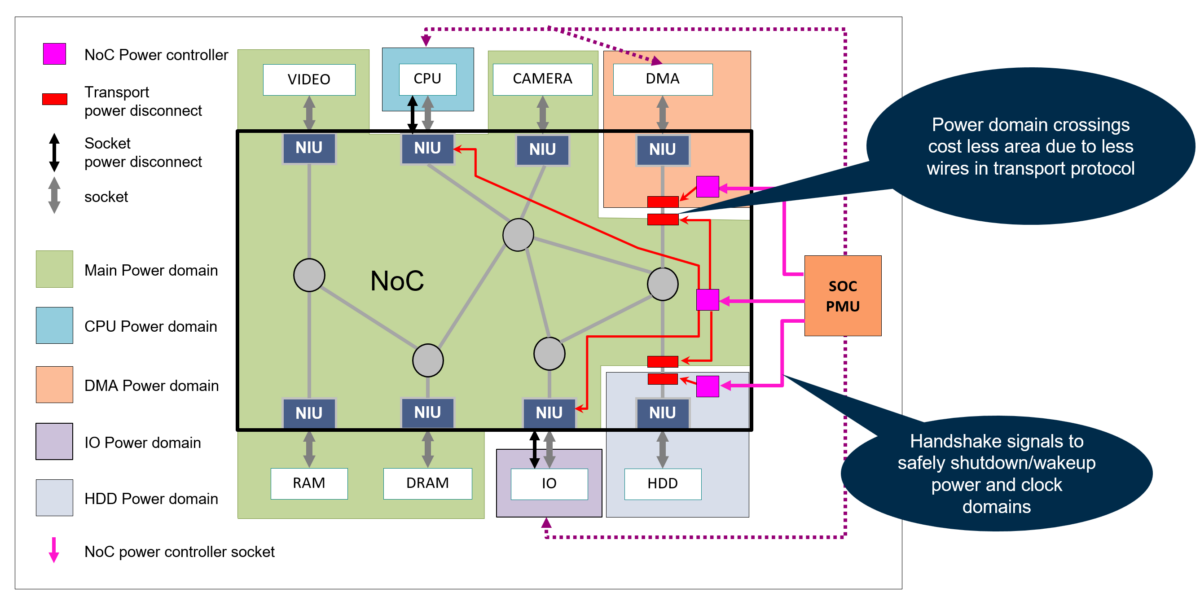

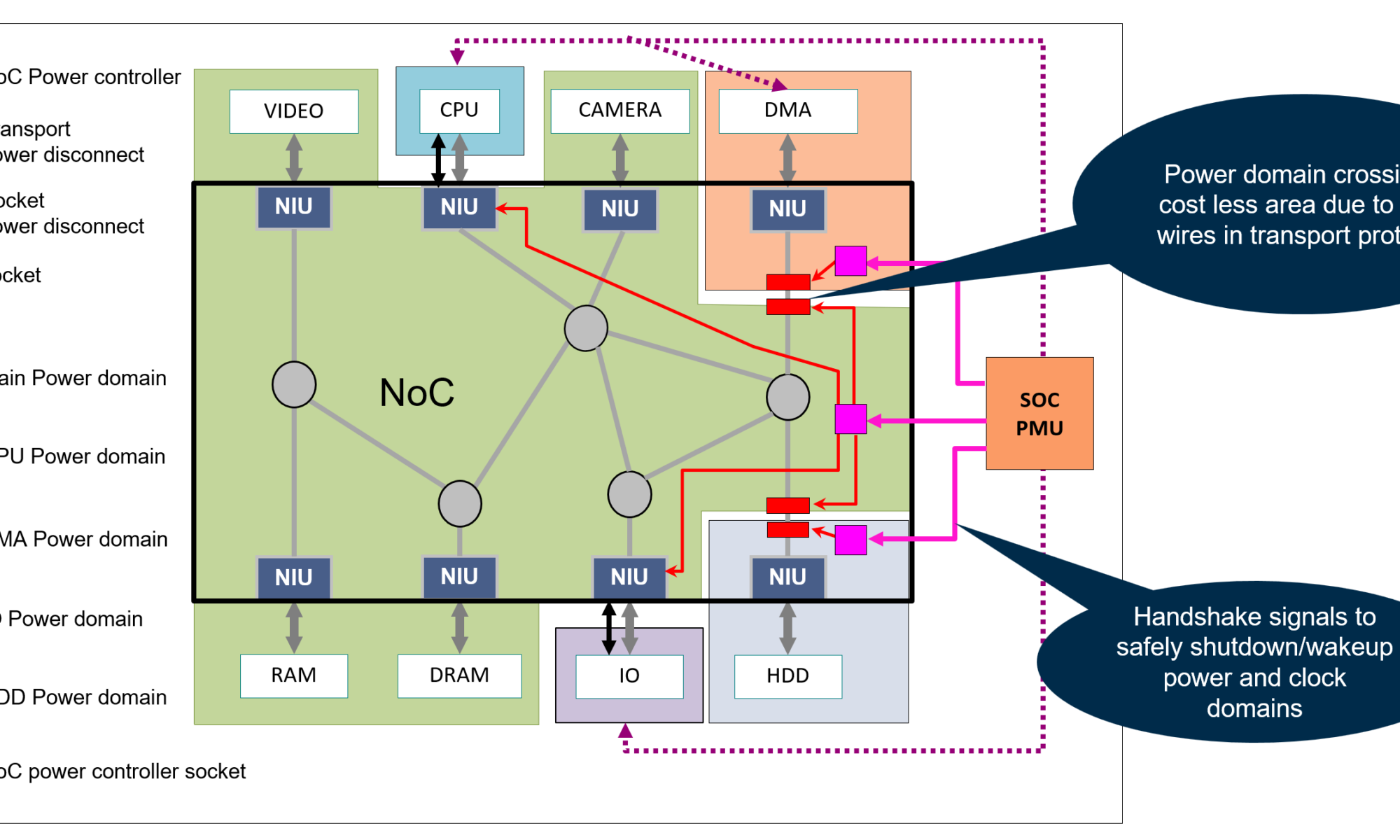

A closer look at how power domain crossings work illustrates the advantages. Fewer wires in the transport protocol lead directly to area savings. Isolating power and clock crossings to the IP block boundary simplifies the configuration of handshaking, which is essential when using a power management unit (PMU) external to the NoC to coordinate supervision.

Power domains isolated to the IP block boundary where required, courtesy of Arteris

System-in RISC-V design approach fundamentals

With a system-in RISC-V design approach, the architectural context becomes the NoC, not the processor. IP blocks – including the RISC-V core – become more interchangeable, with reduced concerns over network routing and ripple effects from clock and power domain crossings.

“Architects can concentrate on their performance criteria around a RISC-V core,” says Schirrmeister. “I need this bandwidth on this lane to my selected IP block, so this has to flow, and that has to stall – and the NoC sorts that out once the block is connected.” Knowing how many video sources or data streams enter the SoC helps size the RISC-V core and memory subsystem accordingly, preventing snarled traffic from limiting RISC-V core performance. As an added benefit, protocols that vary with the IP used (OCP, CHI, AXI, AHB, APB, and others) can be translated at the border of the NoC for unified handling inside the NoC topology.
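The "this has to flow, and that has to stall" idea can be pictured with a small bandwidth-budgeting sketch. This is not how an Arteris NoC is configured. It is a plain strict-priority allocation over a single shared link, written only to show the kind of trade-off an architect specifies. The `Flow` type, the priority convention (lower number is more urgent), the flow names, and the bandwidth figures are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Flow:
    name: str
    priority: int        # assumption: lower number means more urgent
    demand_gbps: float   # bandwidth the flow would like on the shared link


def allocate(flows, link_capacity_gbps: float) -> dict:
    """Grant bandwidth in strict priority order on one shared link.

    Returns {flow name: granted Gbps}. A flow granted less than its
    demand is the one that "has to stall" in this simplified picture.
    """
    remaining = link_capacity_gbps
    grants = {}
    for flow in sorted(flows, key=lambda f: f.priority):
        granted = min(flow.demand_gbps, remaining)
        grants[flow.name] = granted
        remaining -= granted
    return grants


if __name__ == "__main__":
    # Hypothetical traffic mix on an 8 Gbps link.
    flows = [
        Flow("debug_trace", priority=2, demand_gbps=2.0),
        Flow("video_in", priority=0, demand_gbps=6.0),
        Flow("dma_copy", priority=1, demand_gbps=3.0),
    ]
    for name, granted in allocate(flows, 8.0).items():
        print(f"{name}: {granted} Gbps")
```

In this example the video stream gets its full demand, the DMA copy is throttled, and the debug trace stalls entirely. A budget like this, drawn up per link before choosing IP, is one way to check that expected traffic will not starve the RISC-V core.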

A system-in RISC-V design approach may yield even more flexibility in the second design project, where a team enhances its first SoC design with faster RISC-V processing and memory and swaps out targeted IP blocks for more throughput. With video frame rates, resolution, and wired and wireless networking speeds constantly rising, a solid architecture built around a NoC can respond to changes without ripping up the entire design.

Visit the Arteris website for more information on NoCs and other products.
