The rapid rise of artificial intelligence is fundamentally reshaping computing architectures. As AI models scale toward trillions of parameters, traditional approaches to performance improvement are no longer sufficient. Instead, the industry is entering a new era where system-level innovation, advanced packaging, and 3D integration are becoming the primary drivers of progress. This shift reflects a broader transition in computing, where performance gains increasingly depend on how well entire systems are designed and integrated, rather than how small individual transistors can become.

The End of One-Dimensional Scaling

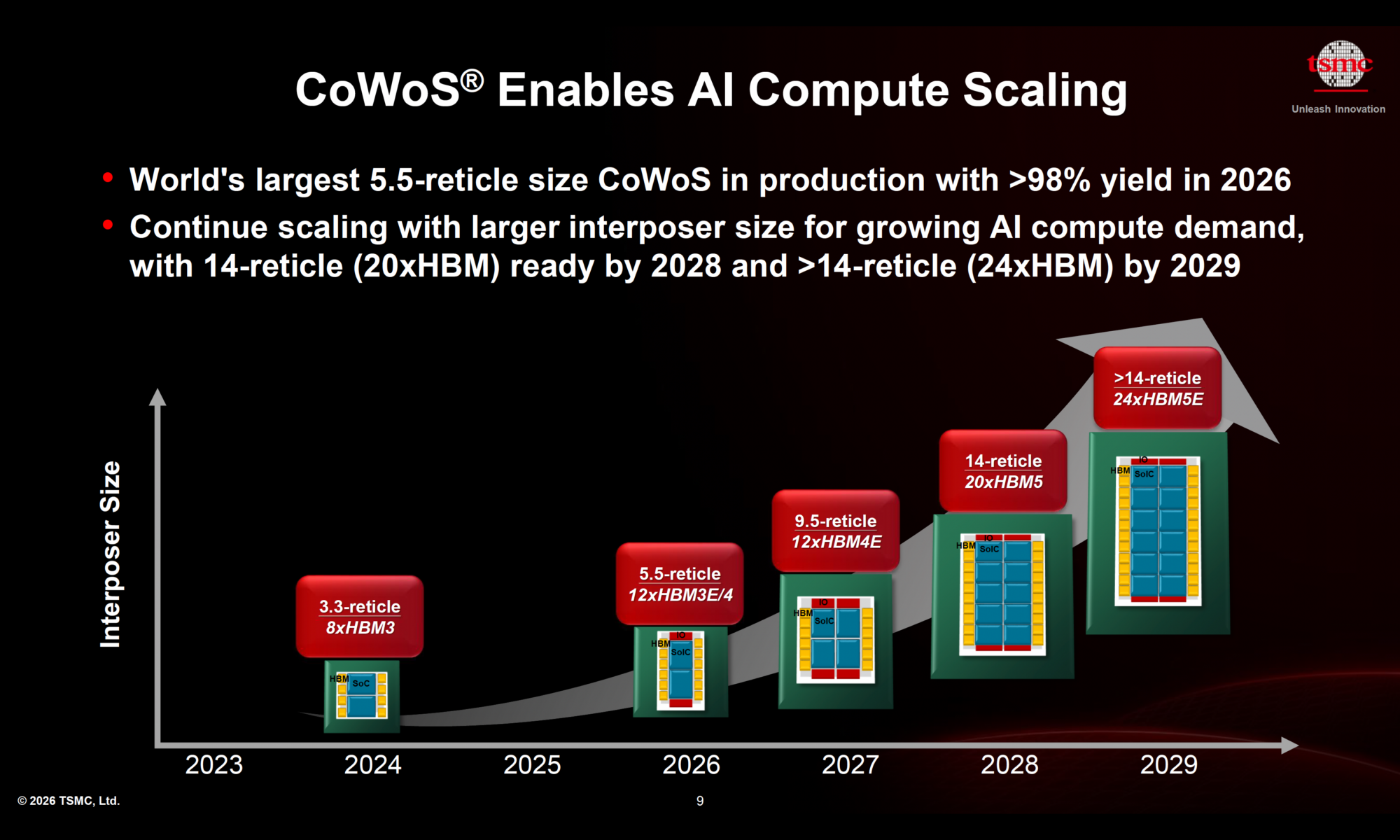

AI compute demand is growing at an exponential rate, creating a widening gap between required performance and what conventional silicon scaling can deliver. Bridging this gap requires innovation beyond the chip itself. The most important shift is that AI performance is now determined at the system level rather than purely at the silicon level. Future gains will depend on how effectively compute, memory, interconnect, and power systems are integrated into a cohesive whole. This marks a transition from device-centric optimization to full-stack co-design, extending from transistor technology all the way to data center architecture.

Data Movement Is the New Bottleneck

A critical constraint in modern AI systems is no longer computation but data movement. Moving data between chips can consume up to 50 times more energy than moving it within a single chip. Data transfer can also account for the majority of system activity, and the resulting communication delays leave accelerators idle and reduce their utilization. This makes interconnect efficiency a central design priority: improving bandwidth, reducing latency, and minimizing energy per bit are now essential to unlocking overall system performance.
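
To make the energy gap concrete, the sketch below compares the cost of moving the same payload within a chip and across chips. Only the 50x ratio comes from the text, where it is an upper bound. The on-chip energy per bit (`ON_CHIP_PJ_PER_BIT`) and the payload size are hypothetical placeholders, not measured figures. The `utilization` helper assumes communication is never overlapped with compute, which is a simplification.

```python
# Illustrative only: ON_CHIP_PJ_PER_BIT is a hypothetical placeholder,
# not a measured figure. The 50x ratio is the upper bound quoted in the text.
ON_CHIP_PJ_PER_BIT = 0.1
CROSS_CHIP_RATIO = 50


def transfer_energy_joules(num_bytes: int, pj_per_bit: float) -> float:
    """Energy in joules to move num_bytes at a given cost per bit."""
    return num_bytes * 8 * pj_per_bit * 1e-12


def utilization(compute_s: float, comm_s: float) -> float:
    """Fraction of time spent computing, assuming communication is not
    overlapped with compute (a simplifying assumption)."""
    return compute_s / (compute_s + comm_s)


payload = 1_000_000_000  # 1 GB, hypothetical
on_chip = transfer_energy_joules(payload, ON_CHIP_PJ_PER_BIT)
cross_chip = transfer_energy_joules(payload, ON_CHIP_PJ_PER_BIT * CROSS_CHIP_RATIO)

print(f"on-chip:    {on_chip:.4f} J")
print(f"cross-chip: {cross_chip:.4f} J")
print(f"utilization with equal compute and comm time: {utilization(1.0, 1.0):.0%}")
```

In this simple model, an accelerator that spends as long waiting on data as it does computing is busy only half the time, however fast its arithmetic units are.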

The Memory Wall Is Getting Worse

As AI models continue to scale, memory demands are increasing even faster than compute capabilities. Emerging workloads, such as long-context processing and multimodal AI, are driving exponential growth in both memory capacity and bandwidth requirements. Systems are transitioning from gigabyte-scale memory to terabyte-scale configurations, while also demanding lower latency. However, memory technology is not advancing at the same pace as compute, creating a widening imbalance. Overcoming this “memory wall” is therefore essential for sustaining AI progress, and it is driving rapid innovation in high-bandwidth memory and memory integration strategies.
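
One common way to reason about this imbalance is the roofline model. The article does not name it, but it captures the same idea: attainable throughput is capped either by peak compute or by memory bandwidth multiplied by arithmetic intensity (operations per byte moved). The peak and bandwidth values below are hypothetical and chosen only for illustration.

```python
def attainable_flops(peak_flops: float, mem_bw_bytes_per_s: float,
                     flops_per_byte: float) -> float:
    """Roofline bound: the lower of the compute ceiling and the
    memory ceiling (bandwidth x arithmetic intensity)."""
    return min(peak_flops, mem_bw_bytes_per_s * flops_per_byte)


def is_memory_bound(peak_flops: float, mem_bw_bytes_per_s: float,
                    flops_per_byte: float) -> bool:
    """True when the memory ceiling sits below the compute ceiling."""
    return mem_bw_bytes_per_s * flops_per_byte < peak_flops


# Hypothetical accelerator, for illustration only.
PEAK = 1e15        # 1 PFLOP/s
BANDWIDTH = 4e12   # 4 TB/s

for intensity in (10, 100, 1000):  # FLOPs per byte
    bound = attainable_flops(PEAK, BANDWIDTH, intensity)
    label = ("memory-bound" if is_memory_bound(PEAK, BANDWIDTH, intensity)
             else "compute-bound")
    print(f"{intensity:>5} FLOP/B -> {bound:.2e} FLOP/s ({label})")
```

Under these assumed numbers, any workload below 250 FLOPs per byte is limited by memory bandwidth rather than compute, so adding more compute alone would not make it faster.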

Power and Thermal Constraints Are Critical

The increase in compute density, particularly with the adoption of 3D stacking technologies, has led to a corresponding rise in power density and heat generation. These factors are quickly becoming limiting constraints for AI system scaling. Without significant advancements in power delivery, energy efficiency, and thermal management, performance gains cannot be sustained. As a result, power and cooling are no longer secondary considerations but have become central to system design and overall performance.
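
The link between stacking and power density is simple arithmetic: dies stacked on one footprint add their power but not their area. The sketch below assumes every die in the stack shares the same footprint. The per-die power and area values are hypothetical.

```python
def power_density_w_per_mm2(die_powers_w: list[float], footprint_mm2: float) -> float:
    """Total power of all stacked dies divided by the shared footprint."""
    return sum(die_powers_w) / footprint_mm2


# Hypothetical values, for illustration only.
FOOTPRINT_MM2 = 100.0
single = power_density_w_per_mm2([50.0], FOOTPRINT_MM2)
stacked = power_density_w_per_mm2([50.0, 50.0, 50.0], FOOTPRINT_MM2)

print(f"single die:  {single:.2f} W/mm^2")
print(f"3-die stack: {stacked:.2f} W/mm^2")
```

The heat that must leave through the same area grows with every layer, which is why thermal management becomes a first-order design constraint for stacked parts.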

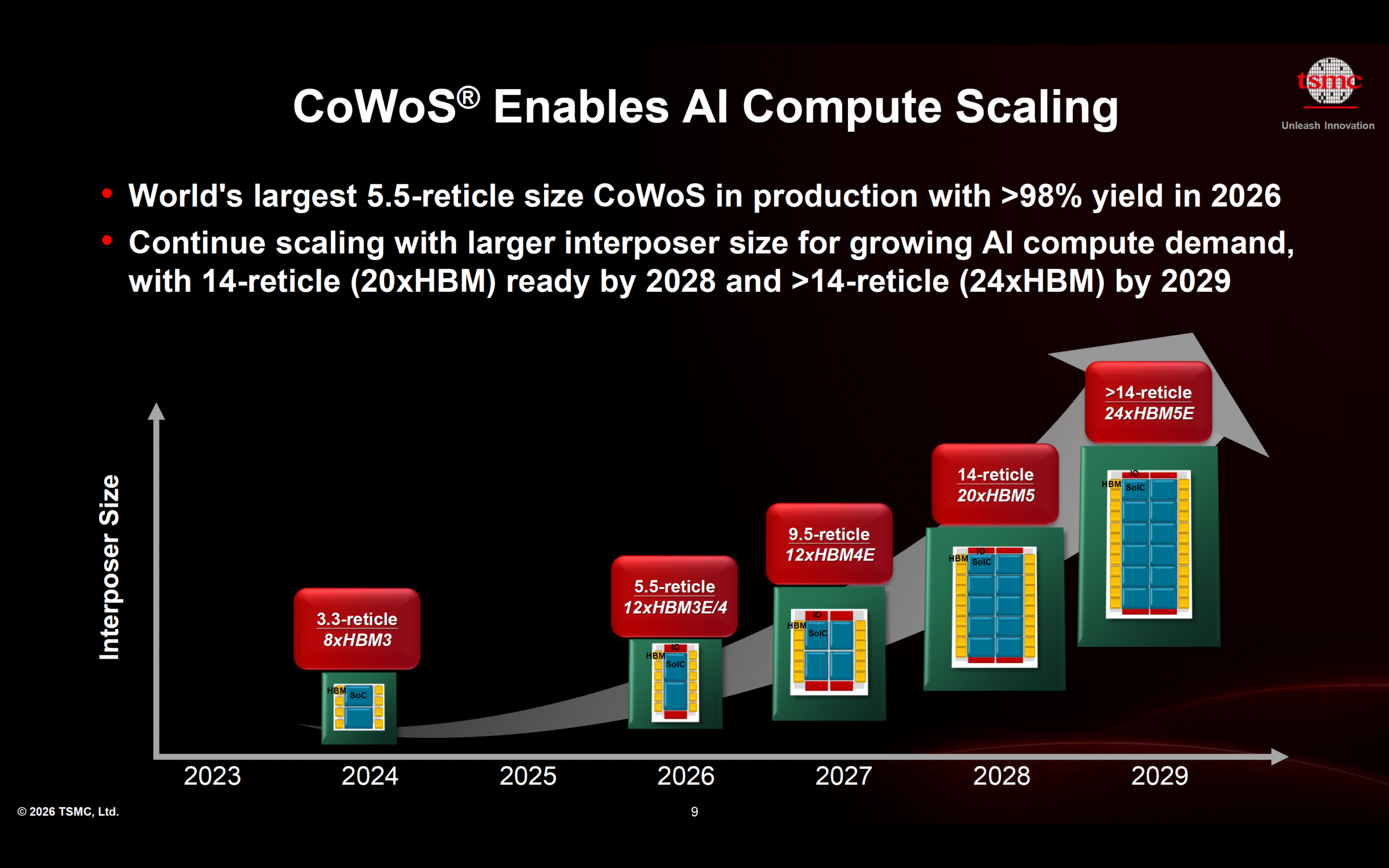

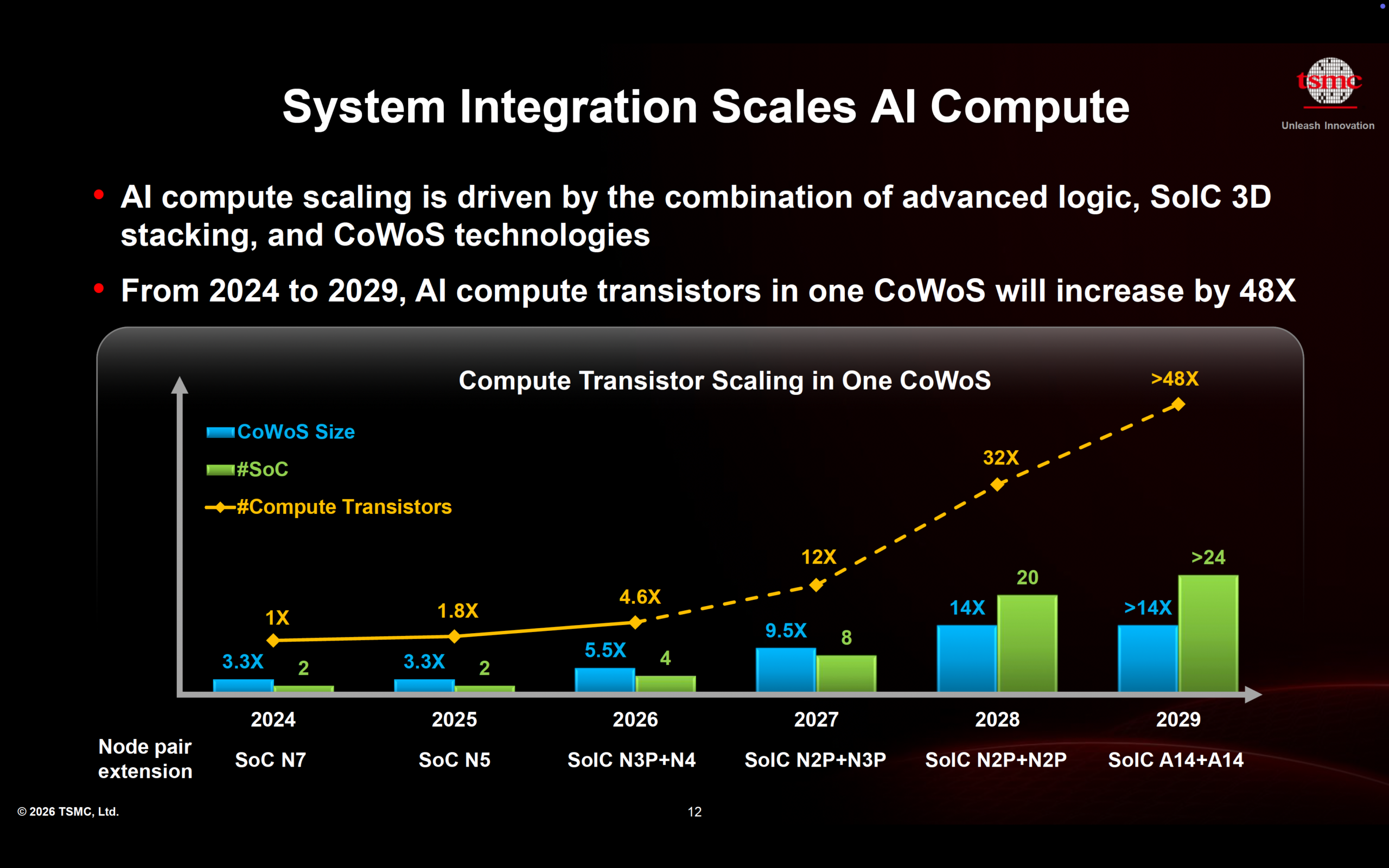

3D Fabric Technologies: The New Foundation

To address these challenges, advanced 3D fabric technologies are emerging as the foundation of next-generation AI systems. These technologies enable the integration of multiple chips and components into highly efficient, high-performance systems. Innovations such as 3D chip stacking allow for dramatically higher interconnect density, reducing both data movement distance and energy consumption. Advanced packaging platforms make it possible to combine logic and memory in close proximity, enabling massive bandwidth and capacity scaling. At the same time, high-bandwidth memory continues to evolve, delivering higher throughput and improved energy efficiency. Together, these advancements position packaging not merely as a supporting technology, but as a primary driver of system performance.

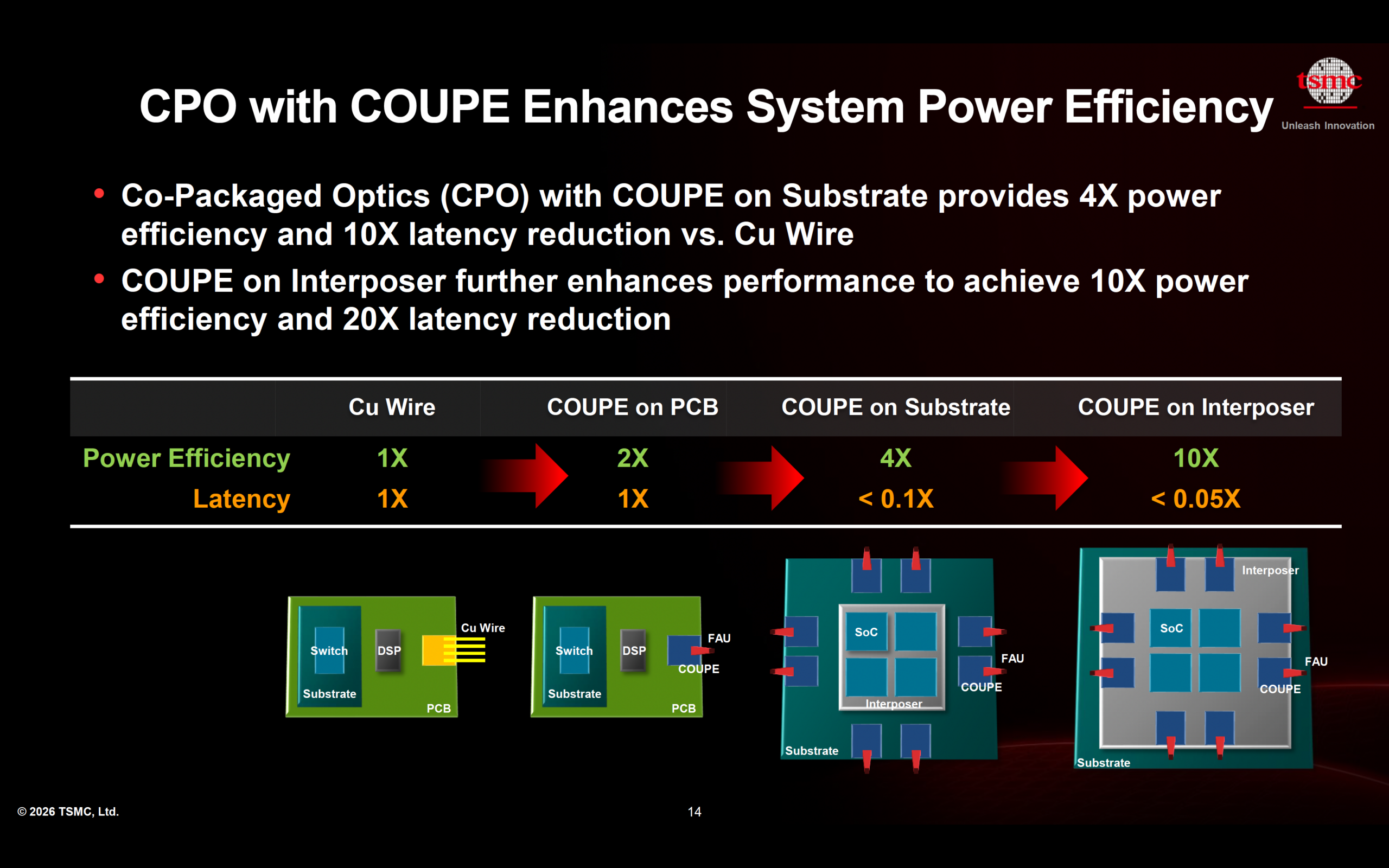

Co-Packaged Optics: Rethinking Interconnects

As electrical interconnects approach their physical limits, co-packaged optics is emerging as a promising solution for high-speed data transfer. By integrating photonics directly with compute hardware, this approach enables significant improvements in both power efficiency and latency. It also provides a scalable path forward for data center networking, where the need for higher bandwidth and lower energy consumption continues to grow. This evolution signals a broader shift toward optical technologies as a key enabler of future AI infrastructure.

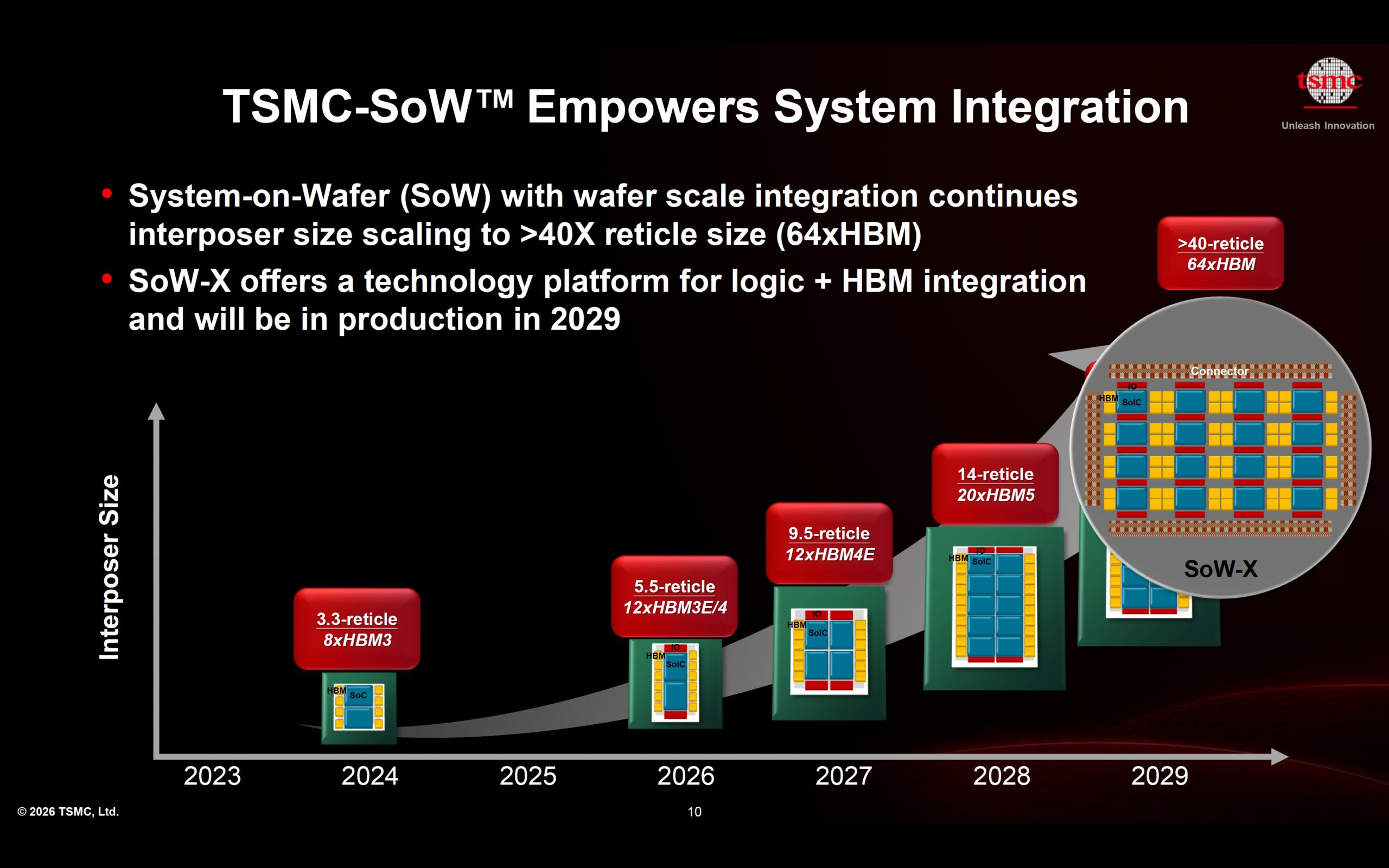

System-on-Wafer and Wafer-Scale Integration

Looking further ahead, system integration is advancing toward wafer-scale architectures, where entire systems are built on a single substrate. This approach enables unprecedented levels of integration density while reducing the overhead associated with traditional interconnects. By minimizing communication distances and improving efficiency, wafer-scale integration offers a powerful pathway for scaling AI performance beyond the limits of conventional packaging methods.

The Rise of System Technology Co-Optimization (STCO)

As AI systems grow more complex, optimizing individual components in isolation is no longer sufficient. The industry is increasingly adopting System Technology Co-Optimization (STCO), an approach that considers chip design, packaging, interconnects, power delivery, and thermal behavior together rather than one at a time. This holistic methodology aims to ensure that all parts of the system are designed to work together efficiently, improving overall performance and energy efficiency. It represents a fundamental shift in how hardware systems are conceived and developed.

Summary

The future of AI hardware will not be defined by silicon scaling alone. Instead, it will be shaped by advances in packaging, interconnects, memory systems, and power efficiency, all brought together through system-level design. In this new paradigm, the system itself becomes the primary unit of innovation. Success will depend on the ability to integrate across multiple domains and optimize them collectively. As this transformation continues, it is clear that the “system” has effectively become the new chip, redefining how performance is achieved in the age of AI.
