These days, the term chiplets comes up everywhere you look, in anything you read and in whatever you hear. Rightly so, because the chiplets, or die integration, wave is taking off. Generally speaking, the tipping point came around the 16nm process node, when large monolithic SoCs started facing yield issues. This translated into an economic issue and highlighted the fact that the Moore’s Law benefit, which had stood the test of time for more than five decades, had started to flatten. While this is certainly true, there are a lot more benefits to moving to chiplet-based designs. This “More than Moore” aspect is what will drive chiplet adoption even faster.
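The yield pressure on large monolithic dies mentioned above can be illustrated with a minimal sketch. It assumes the simple Poisson yield model, Y = exp(-A * D0), and uses purely illustrative numbers that do not come from the conversation: a 600 mm² monolithic die, the same logic split into four 150 mm² chiplets, and a defect density of 0.1 defects per cm². It also assumes every chiplet is tested before assembly (known-good die) and ignores packaging yield and the area overhead of die-to-die interfaces.

```python
import math


def poisson_yield(area_mm2: float, defects_per_cm2: float) -> float:
    """Fraction of defect-free dies under the Poisson yield model."""
    area_cm2 = area_mm2 / 100.0  # 1 cm^2 = 100 mm^2
    return math.exp(-area_cm2 * defects_per_cm2)


def silicon_per_good_system(die_area_mm2: float,
                            dies_per_system: int,
                            defects_per_cm2: float) -> float:
    """Average wafer area (mm^2) consumed per working system.

    Assumes each die is tested before assembly, so only good dies
    are packaged (a simplification; assembly losses are ignored).
    """
    good_fraction = poisson_yield(die_area_mm2, defects_per_cm2)
    return dies_per_system * die_area_mm2 / good_fraction


# Illustrative assumptions, not figures from the article.
D0 = 0.1  # defects per cm^2

mono = silicon_per_good_system(600.0, 1, D0)
chiplets = silicon_per_good_system(150.0, 4, D0)

print(f"Monolithic die yield: {poisson_yield(600.0, D0):.1%}")
print(f"Single chiplet yield: {poisson_yield(150.0, D0):.1%}")
print(f"Silicon per good system, monolithic: {mono:.0f} mm^2")
print(f"Silicon per good system, 4 chiplets: {chiplets:.0f} mm^2")
```

Under these assumptions the monolithic die yields about 55% while each chiplet yields about 86%, so the chiplet version consumes noticeably less silicon per working system. Real cost models add packaging, test and interface overheads, which narrow the gap.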

I recently had a conversation on this topic with Shekhar Kapoor, senior product line director at Synopsys. Shekhar shared Synopsys’ view on what is behind the move to multi-die systems, the challenges to overcome and the solutions that are needed to successfully support this wave.

More Than Just The Yield Benefit

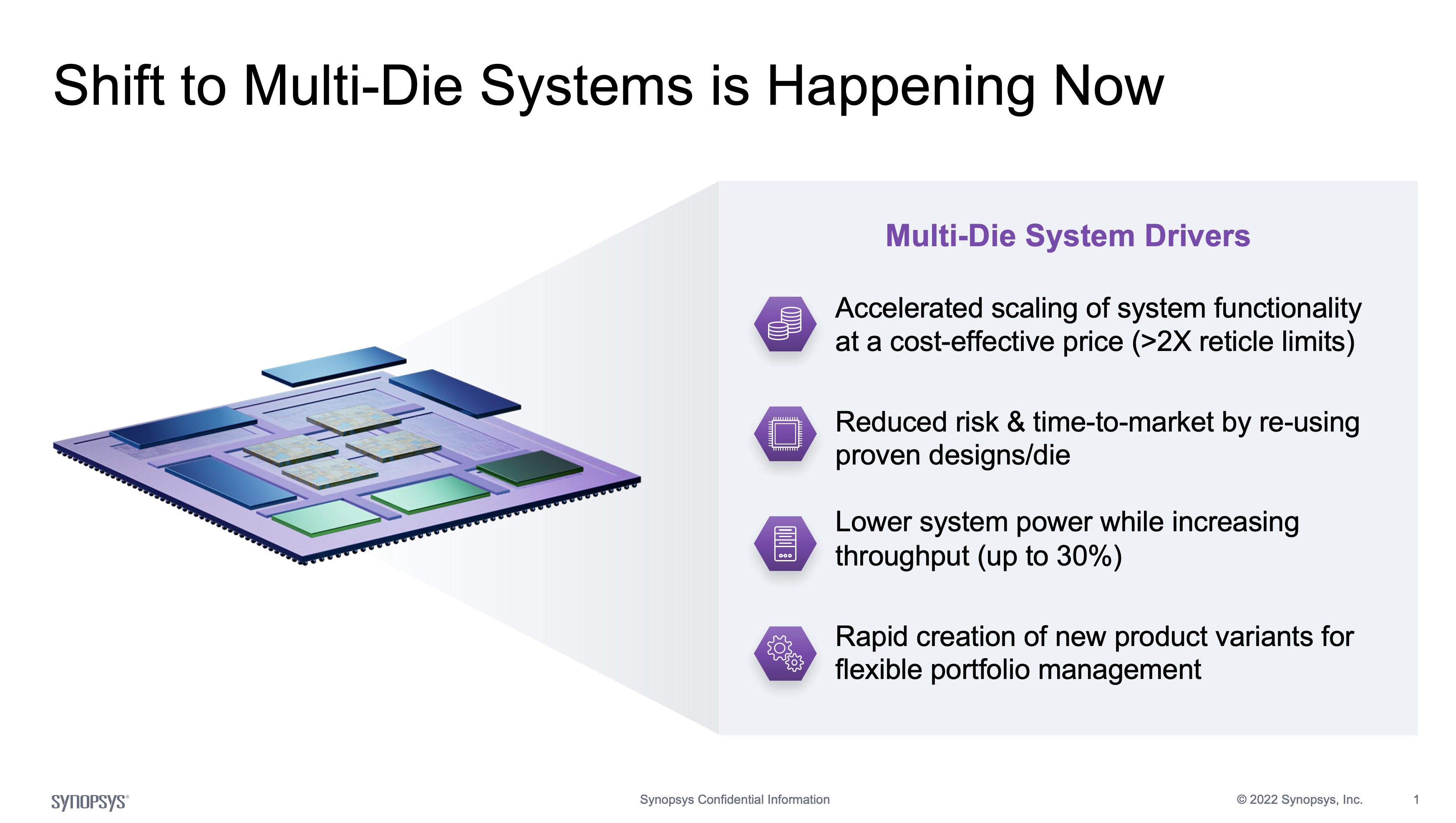

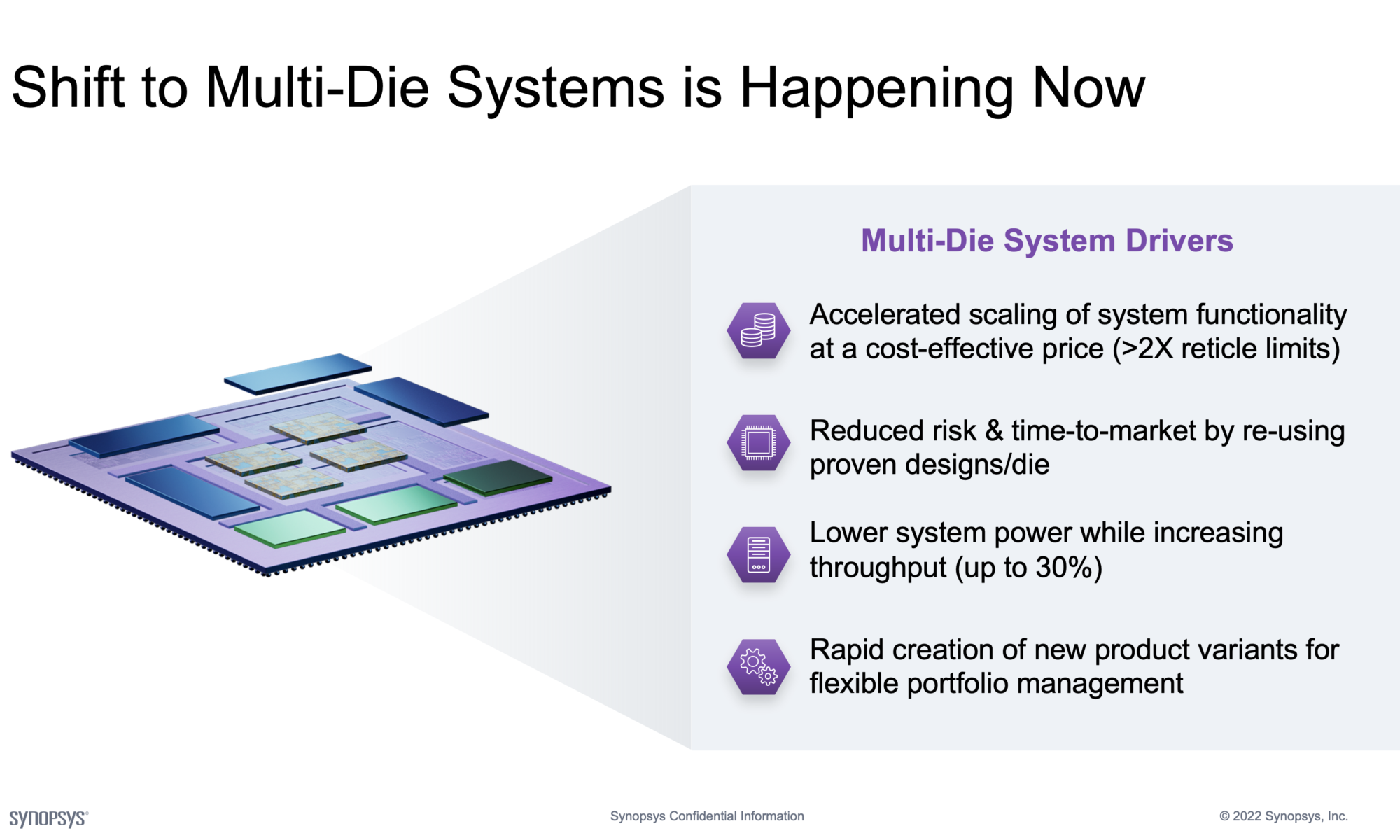

Multi-die systems can accelerate the scaling of system functionality and performance. They can help lower system power consumption while increasing throughput. By allowing re-use of proven designs and dies as part of a system implementation, they help reduce product risk and time-to-market. They also make it possible to create new product variants rapidly and to develop and manage a company’s product portfolio strategically.

“SysMoore Era” Calls for Multi-Die Systems

Until recent years, Moore’s Law benefits delivered at the chip level translated well into meeting the performance demands of systems. But just as Moore’s Law benefits started to slow down, system performance demands started to grow by leaps and bounds. Systems have been hitting the processing, memory and connectivity walls. Synopsys has coined the term “SysMoore Era” to describe this period, in which system-level demands outpace what chip-level scaling alone can deliver.

Take, for example, the tremendous growth in artificial intelligence (AI) driven systems and advances in deep learning neural network models. The compute demand on systems has been growing at incredible rates every couple of years. As an extreme example, OpenAI’s ChatGPT application is powered by a Generative Pre-trained Transformer (GPT) model with 175 billion parameters. That is the current version (GPT-3); the next version (GPT-4) has been rumored to handle 100 trillion parameters, a figure OpenAI has not confirmed. Just imagine the compute demand of such a system.

On average, Transformer models have been growing in complexity by 750x over a two-year period, and systems are hitting the processing wall. Domain-specific architectures are being adopted to close the gap on performance demand. Multi-die systems are becoming essential to address the system demands of the “SysMoore Era.”
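To put the 750x figure in perspective, the short sketch below converts a growth factor over a period into an equivalent doubling time. The comparison baseline is an assumption: Moore’s Law is taken in its common form of a 2x gain every two years.

```python
import math


def doubling_time_months(growth_factor: float, period_months: float) -> float:
    """Months needed to double, given total growth over a period.

    Assumes smooth exponential growth: factor = 2 ** (period / T),
    so T = period * ln(2) / ln(factor).
    """
    return period_months * math.log(2.0) / math.log(growth_factor)


# 750x over two years is the figure quoted in the article.
transformer = doubling_time_months(750.0, 24.0)

# Assumed baseline: Moore's Law as 2x every two years.
moore = doubling_time_months(2.0, 24.0)

print(f"Transformer complexity doubles every {transformer:.1f} months")
print(f"Moore's Law baseline doubles every {moore:.1f} months")
print(f"Gap after two years: {750.0 / 2.0:.0f}x")
```

Read this way, 750x in two years corresponds to a doubling roughly every two and a half months, which is the gap that domain-specific architectures and multi-die systems are being asked to close.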

Multi-Die System Challenges

Heterogeneous die integration introduces a number of challenges. Die-to-die connectivity is at the heart of multi-die systems, as different components need to communicate properly with each other. System pathfinding is a closely related challenge that involves determining the best data path between components in the system. Multi-die systems must also be designed so that each component is supplied with adequate power and cooling while system-level power consumption is minimized. Memory utilization and coherency are another important challenge, since memory must be used efficiently and kept coherent across the different components. Software development and validation at the system level is yet another challenge, as each component may have its own software stack. Design implementation has to support efficient die/package co-design, with system signoff as the goal. And all this should be accomplished with cost-effective manufacturability and long-term reliability in mind.

Multi-Die System Solutions

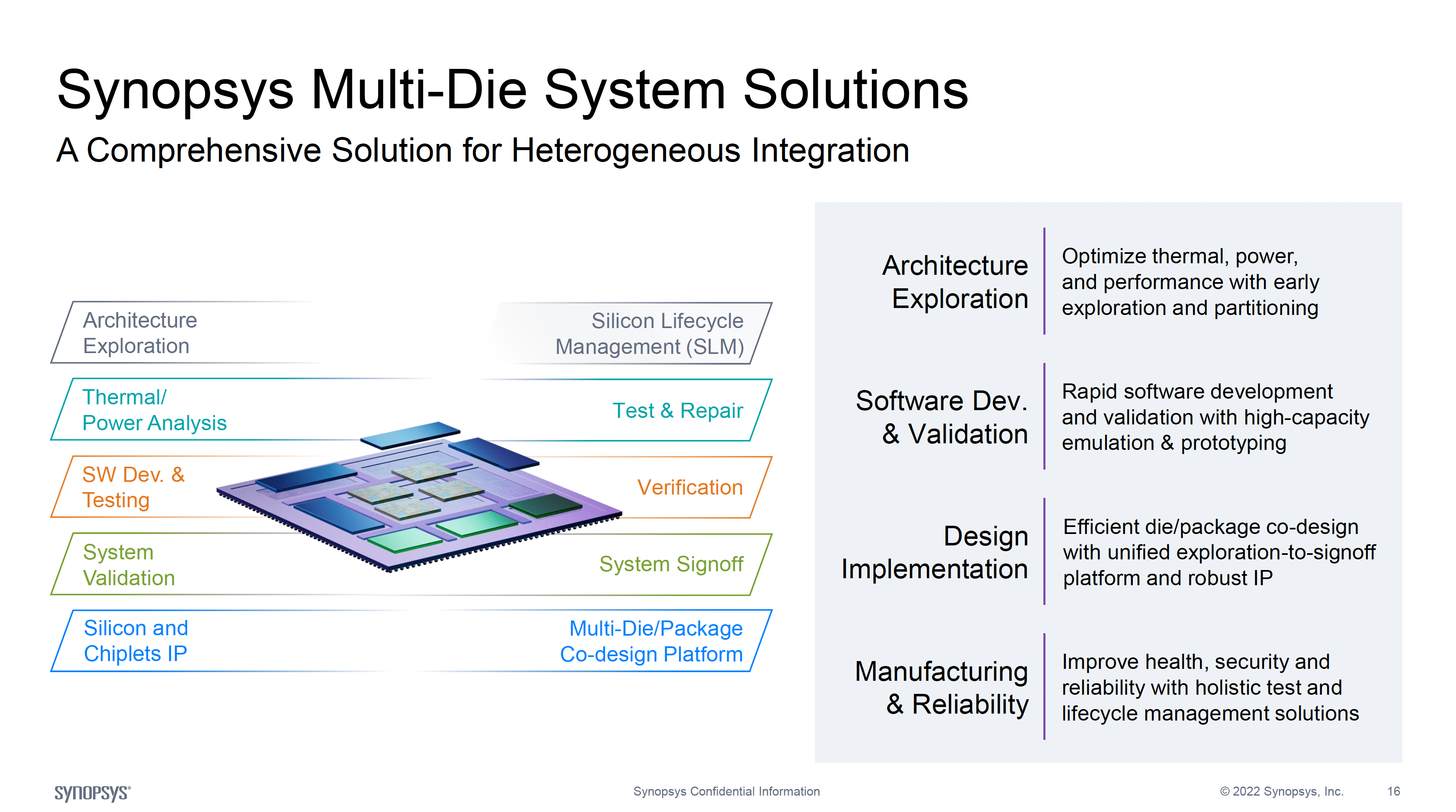

Just as Design Technology Co-Optimization (DTCO) is very important in a monolithic SoC scenario, System Technology Co-Optimization (STCO) is imperative in a multi-die system scenario. To address multi-die system challenges, the solutions can be broadly categorized into the following areas of focus.

Architecture Exploration

A system-level tool that allows early exploration and system partitioning to optimize thermal, power and performance characteristics is imperative. Just as a chip-level platform architect tool was critical for a monolithic SoC, a system-level platform architect tool is at least as critical for a multi-die system.

Software Development and Validation

High-capacity emulation and prototyping solutions are essential to support rapid software development and validation for the various components of a multi-die system.

Design Implementation

Access to robust and secure die-to-die IP and a unified exploration-to-signoff platform are key to effective and efficient die/package co-design of the various components of a multi-die system.

Manufacturing & Reliability

Hierarchical test, diagnostics and repair, together with holistic test capabilities, are essential for the manufacturability and long-term reliability of a multi-die system. Environmental, structural and functional monitoring is needed to enhance the operational metrics of a multi-die system. The solution comprises silicon IP, EDA software and analytics insights for the In-Design, In-Ramp, In-Production and In-Field phases of the product lifecycle.

Summary

As a leader in “Silicon to Software” solutions to enable system innovations, Synopsys offers a complete solution to design, manufacture and deploy multi-die systems. For solution-specific details, refer to their multi-die system page.
