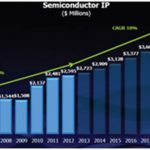

SemiWiki blogger Eric Esteve does an excellent job of covering the wide range of semiconductor IP available, and IP keeps growing in popularity each year. Here’s an IBS projection showing semiconductor IP revenues reaching $4.7B by 2020:

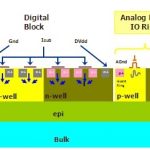

Analyst Gary Smith divides IP into three broad categories: Functional, Foundation and Application.

For example, IP provided by ARM would be a functional platform, IP such as TI’s OMAP would be a foundation platform, and IP or software from Audi for its navigation and infotainment systems would be an application platform.

A modern SoC contains roughly 200 IP blocks, and about 80% of the chip is reused. Here’s the chart from Semico Research:

Another trend accompanying increased IP use is the rising cost of software at each new node. IBS data shows that at the 22 nm node, SoC costs are dominated by software development rather than by hardware design or manufacturing.

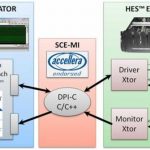

One approach to managing these rising product costs is to use a functional virtual prototype:

Functional Virtual Prototype (Dassault Systemes)

This approach enables early hardware-software co-development, shortening the product life cycle. It even lets you verify a new design before detailed implementation begins. The SoC, along with all of its related software IP, can become a system virtual prototype and be managed with specialized software from Dassault Systemes.

GSA Working Group

Industry IP experts will meet for two panel discussions and a webcast on Thursday, January 22, 2015, from 9 AM to noon (PT) at Synopsys in Mountain View. You can also join by phone at 1-719-352-2630:

- IP Management
  - Warren Savage, CEO, IPextreme, Moderator
  - Ranjit Adhikary, Director of Marketing, ClioSoft
  - Shiv Sikand, VP Engineering, IC Manage
  - Vishal Moondhra, VP Applications, Methodics
  - Michael Munsey, Director ENOVIA Semiconductor Strategy, Dassault Systemes
  - Kands Manickam, Senior VP & GM, IPextreme

- IP Business Models
  - Warren Savage, CEO, IPextreme, Moderator
  - John Koeter, VP Marketing, Synopsys
  - Brian Gardner, VP Business Development, True Circuits
  - Oliver Gunasekara, CEO, NGCodec
  - Frank Ferro, Senior Director Product Management, Rambus
  - Marty Kovacich, CFO, Sonics

Also Read

Filling the Gap between Design Planning & Implementation

Smart Collaborative Design Reduces Business Risk

Enterprise IP Management – A Whole New Gamut in Semiconductor Space