Editor’s Note: Siemens EDA recently introduced a comprehensive hardware-assisted verification system comprised of hardware, software and system verification that streamlines and optimizes verification cycles while helping reduce verification cost. What follows is an edited version of an interview Verification Expert Lauro Rizzatti conducted with Jean-Marie Brunet, senior director of product management and engineering for emulation and prototyping at Siemens EDA.

LR: Siemens EDA introduced a suite of comprehensive and integrated hardware-assisted verification tools. Before we discuss the details of this “Big Launch,” let me ask you a general question to set the stage. What trends do you see in chip design and what is driving those trends?

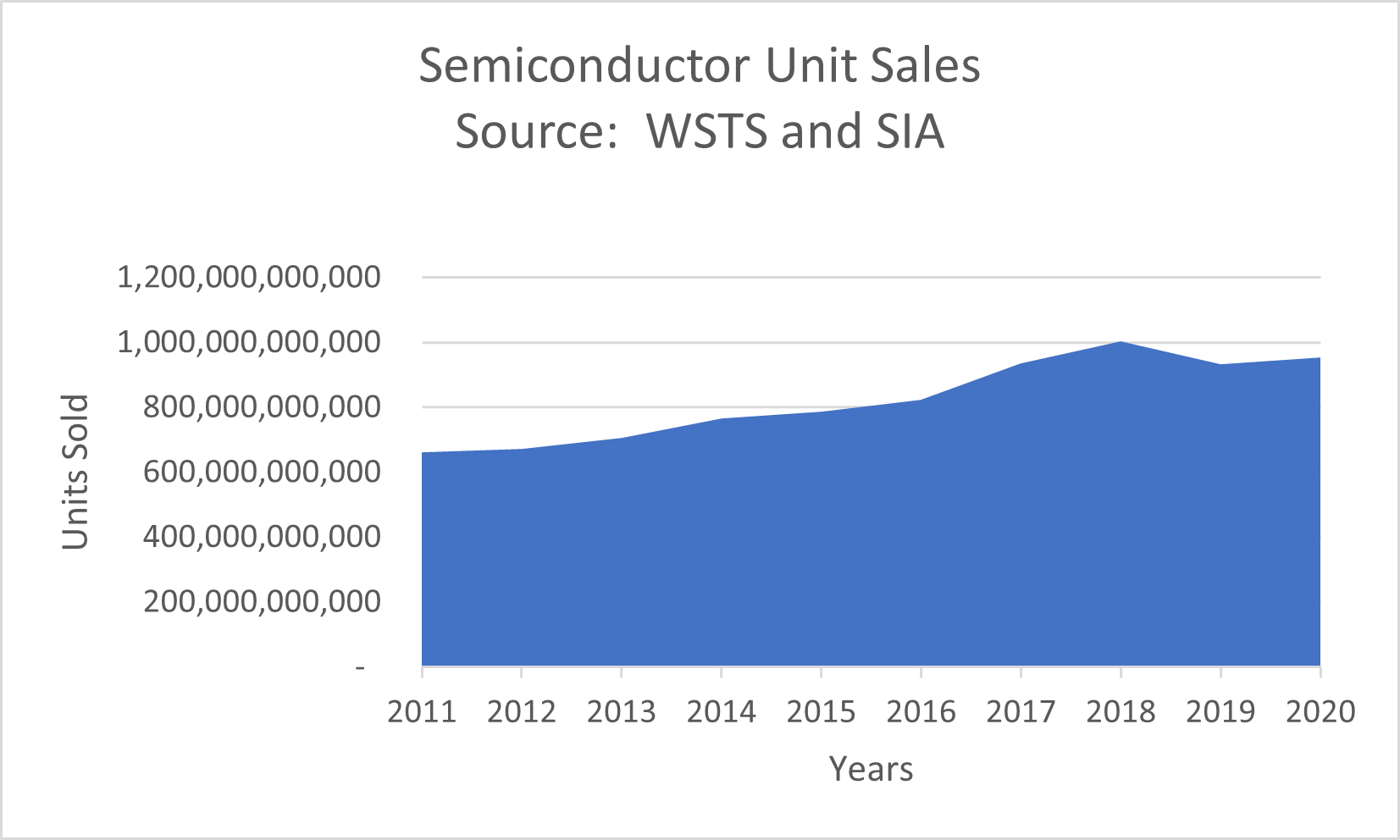

JM: The market trends we see in IC verification and validation are interesting and promising for us. We see that verification costs continue to grow faster than design costs. As software validation costs grow , hardware verification spending overtook register transfer level (RTL) simulation spending in 2018 and this trend continues for the foreseeable future. All market indicators are moving in the direction of the need for more hardware-assisted verification tools, and more spending in this category, which is good news for us.

We see four major markets driving our hardware-assisted verification technology investment.

Number one is networking. Number two is communication with 5G. Number three is computing and storage. Number four is transportation, not only automotive, but any type of transportation.

Consistently, all four vertical markets share the same challenges when designing, verifying, and realizing an IC. The challenges come from the ability to meet power consumption and performance targets.

LR: System-on-Chip (SoC) designs consist of a combination of hardware and software, where software is becoming the dominant contributor. How do you measure success designing such a complex design?

JM: This is an interesting question. The market dynamics driving IC verification are clear. We are moving into an era where software performance defines semiconductor success. It used to be that meeting hardware functional specs defined semiconductor success. While today you still have to meet hardware functional specs, on top of that, you have performance and power targets. The challenge can be met by handling a test environment consisting of lots of workloads, frameworks, and industry benchmarks. Clearly, today’s SoC designs are driven by software performance. That’s the key trend we see.

For any vertical market segment, there is a long list of different workloads or benchmarks that must be executed to certify a design. Looking at artificial intelligence (AI), machine learning ML and deep learning (DL), you have many different types of frameworks or workloads that must be run. The same is true for advanced driver-assisted systems (ADAS) where lots of sensor and raw sensor fusion data must be processed. For the mobile market, we used to see activity within AnTuTu as the main reference. With graphics, we see a lot of Kishonti type of benchmarks. The bottom line is that there is a wide variety of frameworks and benchmarks that have to be run.

For those who paid attention to the announcement by AMD of its third generation EPYC server, the reference to SPECint and SPECfp was captured many times in the slides. Those benchmark references must be run pre-silicon while monitoring the behavior of the design. They are really the references that semiconductor companies use to position their products. They define how products must behave within the context of software workloads.

LR: Let’s move on and discuss your “Big Launch” and the specifics encompassing the launch of the Veloce Suite targeting the verification space. How is software changing the verification process?

JM: The story behind the “Big Launch” is relatively simple –– we identified three reasons driving the need for a complete and integrated suite of hardware-assisted verification tools designed around our Veloce emulation platform.

First, the software environment that we just described in terms of workload requires a massive amount of cycles to run. Billions of cycles are needed for booting operating systems, running benchmarks, and even applications.

Second, it is critical to estimate and measure power and performance while processing these massive workloads. For that you need visibility, accurate analysis and comprehensive debug tools.

And the third aspect is the size and the complexity of the SoC system that now can exceed many billions of gates. You cannot handle such complexity without a hardware-assisted platform.

We launched a suite of products built around our Veloce hardware-assisted verification platform. The first one is Veloce HYCON, our virtual platform to validate software. The second is Veloce Strato+, our new generation of emulator. The third is Veloce Primo, our brand-new enterprise FPGA prototyping engine, and the fourth is Veloce proFPGA, offered through an OEM relationship with ProDesign, to address the desktop FPGA prototyping market.

Based on this offering, we assert not only that the IC verification market needs a complete and integrated solution, but also it needs to approach verification with the context of the right tools for the right tasks. Throughout the verification cycle of an SoC design, different milestones must be met, and these different steps have different needs. Very early on, you need a virtual platform that requires virtual models to process software workloads with a tight integration to hardware emulation to take charge of RTL design blocks. At this stage, it’s all about speed. Eventually, your RTL becomes stable. Now you need to debug your RTL with full design visibility. You need different verification use models. You need scalable design capacity. All of the above needs are offered by an emulator like Veloce Strato+ that allows you to perform power analysis and gate-level emulation. As you approach tape-out, you have a gate-level netlist. Now you have to perform accurate power analysis. Only emulation like Veloce Strato+ can do the job.

We are also introducing a new concept called Emulation Offload from our Veloce Strato+ emulator to our enterprise field programmable gate array (FPGA) prototyping tool, Veloce Primo. The concept here is, early on, you need an emulator that provides accurate and efficient RTL debug. At some point, the design becomes more stable and less buggy, and then you need to verify at a higher speed. At this stage, you want to trade off debug for speed. You can do that by offloading the design from the emulator to the enterprise prototyping tool to accelerate the verification cycle and reduce the cost of verification task.

The last piece of the launch is our desktop FPGA prototyping with Veloce proFPGA, a single-user, smaller footprint type of prototyping tool that sits on the desk or in the lab, with easy bring up and very fast execution.

LR: Could you explain the positioning of the Siemens EDA suite of verification tools against the competition? How are they addressing the challenges you enumerated and why do you think your solution is superior to their offerings?

JM: In the highly competitive market we operate in, there are two critical factors. First is the timing. You have to be first, because when you are first, you define a direction that moves the market forward. That’s what we have accomplished with this launch. The second factor is that you have to offer a complete solution. In the past, we were known only for providing a point tool –– that is, an emulator.

With this launch, we now have all the necessary pieces, well integrated with each other where each tool is the right solution for the right task. This is how we are different from our competitors.

Let’s start with Veloce HYCON, an evolution of the traditional virtual platforms and hybrid emulation offerings. It stands for HYbrid-CONfiguration for the included set of configurable reference platform. Via HYCON, users enjoy an end-to-end software-enabled verification and validation solution that allows them to implement a shift-left strategy by providing a hardware-assisted verification environment where softwre development and validation occurs in parallel with hardware design and verification.

Next is our Strato+ emulation platform. Not all emulators are created equal. The foundation of what we do in Veloce Strato+ sits on three pillars.

The first pillar is the chip. We designed our Crystal chip, essentially a custom-emulator-on-chip. The second is the system hardware architecture. We also completely architect our hardware from the ground up. And the third is the software. We design the chip and the system hardware at the same time we design the software so all three are tightly coupled. When you design a new generation of a tool, you’re looking at what enhancements should be implemented.

With Veloce Strato+, we realized two types of improvement. The first improvement is in capacity. We implemented an exact 1.5X increase in capacity by starting at the source of the Crystal chip. Here we created on a new 2.5D IC package. We integrated a good portion of the memory components that were previously on the board onto the substrate of the package. With this silicon innovation we were able to free up space on the board. Now instead of installing 16 chips on a board as it is with Veloce Strato, with Veloce Strato+ we have 24 chips – 24 divided by 16 leads to an exact increase of capacity of 1.5X.

The second improvement is in performance. Performance comes from a combination of multiple things, from speed of compilation to speed of execution, all contribute to increase the throughput. To accelerate compilation, we implemented a hierarchical flow. Today, every big design is hierarchical, which extends the benefit to virtually all SoC designs. Regarding run-time execution, some emulator providers talk about clock speed and how quickly the chips are running. That’s one aspect and an important aspect, but it’s not the only aspect. What matters is total throughput or what is known as wall-to-wall execution time. That execution time includes processing on the co-model host, plus the interaction with the system hardware. Clearly, the channel-communication architecture is a critical element to achieve the best results.

Within the system hardware, data travels from point-to-point often via a backplane, another critical component for fast execution. At some point, the design data is mapped to the boards in the emulator. Now, the clock speed of the chip becomes important. When data emerges from the chip, it propagates through the backplane to the communication channel, then to the host. The addition of all the above accounts for the total execution time. It is throughput that matters. The Veloce architecture is tailored to optimize throughput and reduce total execution time. On every step along the way, Veloce offers superior throughput to other emulation alternatives on the market.

And the third improvement is improved design debug efficiency. The Crystal 3+ chip provides 100 percent visibility. This is a fundamental advantage of the Veloce Strato platform and the roadmap for future generations of Veloce, continuing on this path of offering superior debug capability versus FPGA-based emulators.

Regarding prototyping, Veloce Primo enterprise prototyping provides five fundamental advantages.

First is performance. Performance ranges from about seven megahertz (MHz) for very large designs up to 70 to 100 megahertz for smaller single FPGA designs.

Second is scalable capacity from a single FPGA user granularity all the way to 320 FPGAs, providing over 10-billion gates in design capacity, certainly enough capacity to handle extremely large SoC designs.

Third, it has the best probe-based debug capabilities in an enterprise prototyping platform for both in-circuit emulation (ICE) and virtual environments. Full visibility is supported by reconstructing combinational values from register data. Design states can be captured at speeds up to 300 MHz. Root cause detection of hard-to-isolate bugs can be accelerated by exporting the design under test (DUT) and test environment from Veloce Primo to Veloce Strato to enjoy faster recompile-debug turnaround time and a higher level of visibility and control.

The fourth advantage is productivity through the consistency of the emulation-prototype flow making it easy for designers to migrate from emulation into prototype and to return to debug prototypes within that Veloce Strato environment. This consistency delivers the ability to have the same DUT RTL and the same virtual environment functioning in both emulation and prototyping.

And, finally, number five is the lowest total cost of ownership, a key value for Veloce Primo. We are the industry leader in both low power consumption and density as we can fit 80 FPGAs in a single 42U rack. We also deliver lights-out remote management to make the day-to-day operational support of your FPGA prototyping farm very cost effective with job scheduling and monitoring as well.

LR: It sounds like a breakthrough introduction. To conclude, could you summarize the story for our audience?

JM: Sure. A key goal of the launch was to be first. As I said before, when you are first, you’re establishing a direction in the industry. We now have defined a direction, namely, you need a complete and fully integrated solution with the right tool for the right task.

To summarize, we introduced an entire new suite of verification tools, well integrated into a consistent flow. For the first time, we provide a versatile offering in FPGA prototyping from enterprise to desktop levels.

We have delivered on time with confidence based on our emulation roadmap. Compared to our competition, the confidence level of delivering against the roadmap is not the same as ours. Fully funded by Siemens, we are executing on our roadmap. The notion of having all the pieces fully integrated is clearly demonstrated in this launch. The future looks very bright for this type of complete and integrated platform.