Voltage converters and regulators are a vital part of nearly every semiconductor-based product. They play an outsized role in mobile devices such as cell phones, where many subsystems operate at different voltages with different power needs. Many portable devices rely on lithium-ion batteries whose output voltage can vary from 4.2 volts down to 3.0 volts as they discharge. The power distribution systems in these devices need to operate with extremely high efficiency to meet battery life requirements.

As an example, a typical cell phone contains CPU cores, DRAM, RF radio, display backlight, camera, audio codec and other subsystems which need voltages ranging from 0.8V to ~4V, all from a single voltage source: the lithium-ion battery. A combination of buck and boost converters is needed to precisely produce all these voltage levels from the battery regardless of its state of charge. Because switching-based converters can be noisy, low-dropout (LDO) voltage regulators are also needed for several power supplies.
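To make the buck-versus-boost choice concrete, here is a minimal sketch that classifies each rail against the 3.0 V to 4.2 V battery window quoted above. The rail names and voltages are hypothetical, and the duty-cycle formulas are the textbook ideal (lossless, continuous-conduction) relations, not a description of any particular converter design.

```python
# Battery voltage window taken from the text (Li-ion, fully charged to discharged).
V_BAT_MAX = 4.2
V_BAT_MIN = 3.0


def choose_topology(v_out, v_min=V_BAT_MIN, v_max=V_BAT_MAX):
    """Pick an idealized converter topology for one output rail."""
    if v_out < v_min:
        return "buck"        # always below the battery voltage: step down
    if v_out > v_max:
        return "boost"       # always above the battery voltage: step up
    return "buck-boost"      # inside the window: direction depends on charge


def ideal_duty_cycle(v_out, v_in):
    """Ideal duty cycle for a lossless converter in continuous conduction."""
    if v_out <= v_in:
        return v_out / v_in        # buck:  Vout = D * Vin
    return 1.0 - v_in / v_out      # boost: Vout = Vin / (1 - D)


# Hypothetical rails, chosen only to span the 0.8 V to ~4 V range in the text.
rails = {"cpu_core": 0.8, "dram": 1.1, "io": 1.8, "backlight": 4.0}

for name, v_out in rails.items():
    print(name, v_out, choose_topology(v_out))
```

A rail that falls inside the battery window, such as the hypothetical 4.0 V backlight supply, has to be stepped down from a full battery and stepped up from a depleted one, which is why a single topology is not enough.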

In the converters and regulators listed above, one of the most important elements is the pass device, which handles all the current to the load and controls the final output voltage. Pass devices can be made from a wide range of materials and can be designed as bipolar or MOS devices. Regardless of material and device type, the design of the pass device has a major effect on power loss and thermal behavior.

Power devices typically have many fingers and large channel widths (W). Connections to the semiconductor layers are made through a complex interconnection of metal and via layers that connect all the active areas in parallel. The size and topology of these devices lead to complex electrical behaviors. There are a large number of gate/base contacts, which often have maze-like connections to the external device terminal(s). The same is often true for connections to the source and drain, or emitter and collector.

These complex metal connections contribute to device resistance and can also introduce non-uniform delays within the device. To model this electrical behavior, designers need tools like the Magwel Power Transistor Modeler (PTM) suite. Traditional circuit extractors are not designed to deal with the wide metal, large via arrays and unusual shapes found in power devices. Designers also need point-to-point resistance values, along with efficient and accurate ways to model the channel.

Magwel’s PTM tools use a solver-based extractor that is optimized for the complex metal shapes and vias found in power devices. PTM can automatically identify the channel and will segment it according to user settings to create multiple parallel devices that can be used for full device modeling.
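The effect of segmenting a device into parallel pieces fed through resistive metal can be illustrated with a one-dimensional resistor ladder. This is only a lumped sketch under stated assumptions: one metal bus with identical segment resistance, identical linear channel segments, and an ideal return path. It is not PTM's solver-based method, and all function names and values are hypothetical.

```python
def ladder_finger_currents(n_fingers, r_metal, r_channel, v_pin):
    """Current in each finger of a 1-D ladder fed from one end.

    The pin drives finger 0 through one metal segment (r_metal); each
    further finger sits one more segment down the bus. Every finger is
    a linear resistor (r_channel) to an ideal ground.
    """
    # r_in[i]: resistance seen at tap i (finger i in parallel with
    # everything further down the bus), built from the far end back.
    r_in = [0.0] * n_fingers
    r_in[-1] = r_channel
    for i in range(n_fingers - 2, -1, -1):
        downstream = r_metal + r_in[i + 1]
        r_in[i] = r_channel * downstream / (r_channel + downstream)

    # Walk forward from the pin, applying a voltage divider at each tap.
    currents = []
    v_prev = v_pin
    for i in range(n_fingers):
        v_node = v_prev * r_in[i] / (r_metal + r_in[i])
        currents.append(v_node / r_channel)
        v_prev = v_node
    return currents


def effective_resistance(n_fingers, r_metal, r_channel):
    """Pin-to-ground resistance of the whole ladder."""
    return 1.0 / sum(ladder_finger_currents(n_fingers, r_metal, r_channel, 1.0))


# Hypothetical numbers: 8 fingers of 16 ohm each, 50 milliohm per bus segment.
ideal = 16.0 / 8
actual = effective_resistance(8, 0.05, 16.0)
print("ideal:", ideal, "with metal:", actual)
print(ladder_finger_currents(8, 0.05, 16.0, 1.0))
```

With zero metal resistance every finger carries the same current and the device looks like `r_channel / n_fingers`. Any bus resistance raises the total and shifts current toward the fingers nearest the pin, which is the non-uniformity a layout-aware extractor has to capture in two and three dimensions.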

Usually, when power devices are used for switching converters, the active area can be modeled effectively as a linear resistive value based on the foundry device model and operating conditions, such as temperature and stimulus. However, low-dropout (LDO) regulators are often used to get as much working voltage as possible out of a discharging battery. The lower the dropout voltage, the longer the LDO regulator can use a battery and the less overall power is wasted on internal resistance and converted to heat. For this reason, LDO regulator pass device performance is extremely important, necessitating the use of more sophisticated device modeling for the active region. Magwel’s PTM has the option to use non-linear models to accurately predict the behavior of the active area during LDO power device operation.
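A first-order calculation shows why pass device resistance matters so much for an LDO. The sketch below assumes the pass device behaves as a fixed on-resistance at dropout, which is precisely the linear simplification that a non-linear model improves on; the output voltage, load current and resistance values are hypothetical.

```python
def dropout_voltage(i_load_a, r_on_ohm):
    """First-order dropout: voltage lost across the fully-on pass device."""
    return i_load_a * r_on_ohm


def min_input_voltage(v_out, i_load_a, r_on_ohm):
    """Lowest input voltage at which the LDO can still hold v_out."""
    return v_out + dropout_voltage(i_load_a, r_on_ohm)


def pass_device_loss(v_in, v_out, i_load_a):
    """Power dissipated in the pass device while regulating, in watts."""
    return (v_in - v_out) * i_load_a


# Hypothetical 2.8 V rail at 300 mA, compared for two pass device resistances.
for r_on in (0.5, 1.0):
    v_min = min_input_voltage(2.8, 0.3, r_on)
    print("R_on =", r_on, "ohm -> minimum battery voltage", v_min, "V")
```

Under these assumed numbers, the 0.5 ohm device keeps regulating down to about 2.95 V, below the 3.0 V end-of-discharge point, while the 1.0 ohm device falls out of regulation near 3.1 V and leaves part of the battery's charge unused.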

Another important aspect of power transistor modeling is the stimulus used at the external device pins for simulation. Magwel’s PTM offers a wide range of easy-to-use options for this. The most basic method is to simply set a constant voltage or current. The user can select the operating temperature for each simulation. There is also a voltage-controlled voltage source (VCVS) mode for modeling the device pin voltage as a proportional function of a probe voltage in the device. This is exceptionally useful for working with circuits that have replica or sensing devices.

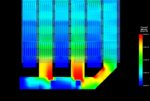

With the inputs described above, PTM can provide voltage values at every point in the device. Designers can also view the current density throughout each layer. Thresholds for current density can be set to flag potential electromigration violations. In addition to output reports and exportable CSV files, users can open a field view that visualizes the full device for easy debugging and optimization.
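The threshold check described above amounts to comparing current density against a limit for each piece of metal. The following sketch shows the idea on a hand-written list of segments; the segment names, dimensions, currents and the limit are all hypothetical, and the data layout is not PTM's report or CSV format.

```python
def current_density(current_a, width_um, thickness_um):
    """Average current density in mA per square micron of cross-section."""
    area_um2 = width_um * thickness_um
    return current_a * 1e3 / area_um2


def flag_em_violations(segments, j_limit):
    """Return (name, density) for every segment above the given limit.

    segments: iterable of (name, current_a, width_um, thickness_um).
    j_limit:  allowed current density in mA/um^2 (hypothetical value).
    """
    violations = []
    for name, current_a, width_um, thickness_um in segments:
        j = current_density(current_a, width_um, thickness_um)
        if j > j_limit:
            violations.append((name, j))
    return violations


# Hypothetical metal segments: (name, current in A, width in um, thickness in um).
segments = [
    ("m1_source_strap", 0.05, 10.0, 0.5),
    ("m3_drain_bus", 0.50, 100.0, 2.0),
]

for name, j in flag_em_violations(segments, j_limit=5.0):
    print("possible electromigration risk:", name, j)
```

In this made-up example the narrow lower-level strap is flagged even though it carries a tenth of the current of the wide bus, which is why a per-layer density view is more useful than total current alone.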

Magwel’s PTM is used by many leading converter circuit design companies. Silicon validation results show correlation within a percent or two. For what-if analysis when optimizing a design, designers can make provisional changes to the device geometry and pin locations and quickly rerun simulations without iterating back through the layout tools. More information on the PTM suite of tools is available on the Magwel website.