Until now, most of the processors in automobiles could be served by SRAM, with the exception of infotainment systems, which rely on a more powerful CPU connected to DRAM but are not safety-critical. Advanced Driver Assistance Systems (ADAS) and self-driving vehicle systems demand powerful processors that require memory capacity and bandwidth only possible with DRAM. Before moving from (embedded) SRAM-based computing to an external DRAM-based architecture, especially for safety-critical automotive systems, designers need to understand precisely how DRAM and SRAM differ in terms of reliability, temperature sensitivity and refresh requirements.

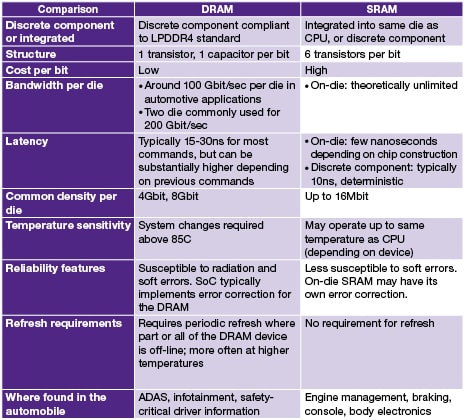

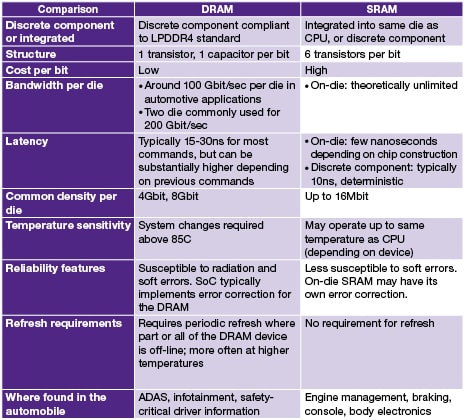

The table above (DRAM vs SRAM Use in Automotive Applications) summarizes the main differences. DRAM latency is larger than SRAM latency and, most importantly, can be non-deterministic. DRAM must also be refreshed periodically to avoid losing the data stored in the memory.

The core of a DRAM chip is an analog array of bit-cells, each of which stores a small amount of charge on a capacitor: just a few tens of femtofarads, or a few tens of thousands of electrons per bit, on a DRAM device with 4 or 8 billion bits per die. This charge leaks away over time, which is why the cells must be refreshed. The leakage rate increases with temperature, and the automotive segment requires devices to run fully within spec at higher temperatures than consumer or even industrial parts. This has led to specially designed DRAMs targeted at automotive applications.
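To get a feel for how small the stored charge is, the relation Q = C·V can be turned into an electron count. The sketch below is only illustrative: the 20 fF capacitance and the 0.5 V stored voltage are assumed values chosen to fall in the "few tens of femtofarads" range mentioned above, not figures from any DRAM datasheet, and the function name is hypothetical.

```python
ELEMENTARY_CHARGE_C = 1.602176634e-19  # charge of one electron in coulombs (SI definition)


def electrons_stored(capacitance_farads: float, voltage_volts: float) -> float:
    """Return the number of electrons corresponding to Q = C * V."""
    return capacitance_farads * voltage_volts / ELEMENTARY_CHARGE_C


# Assumed, illustrative values: a 20 fF cell capacitor charged to 0.5 V.
cell_electrons = electrons_stored(20e-15, 0.5)
print(f"about {cell_electrons:,.0f} electrons per bit-cell")
```

With these assumed values the result lands in the tens of thousands of electrons, which is why even modest leakage at high temperature can corrupt a bit between refreshes.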

In terms of reliability, DRAM devices are also susceptible to soft errors caused by Single Event Upsets (SEUs), the effect of ionizing radiation on the DRAM device. An SEU can cause a bit-cell to lose its charge, and error correction is then needed to recover the lost data. The impact of soft errors can be dramatic and is not acceptable for safety-critical automotive systems.

The SoC therefore has to integrate Error Correction Code (ECC) mechanisms when using an external DRAM. We will see below which type of ECC may be implemented in automotive systems to prevent error propagation.
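As an illustration of what an ECC mechanism does, here is a minimal sketch of an extended Hamming code with single-error correction and double-error detection (SECDED), a widely used ECC family. The article does not state which code a given automotive SoC uses, so this is an assumption made for illustration only; the function names `encode` and `decode` are hypothetical, and a real memory controller implements this in hardware over wider words.

```python
from functools import reduce


def _syndrome(code):
    """XOR of the positions (1..n) of all set bits; 0 for a valid Hamming word."""
    return reduce(lambda acc, i: acc ^ i,
                  (i for i in range(1, len(code)) if code[i]), 0)


def encode(data_bits):
    """Encode a list of 0/1 data bits into an extended Hamming (SECDED) codeword."""
    k = len(data_bits)
    r = 0
    while (1 << r) < k + r + 1:      # smallest r with 2**r >= k + r + 1
        r += 1
    n = k + r
    code = [0] * (n + 1)             # index 0 holds the overall parity bit
    bits = iter(data_bits)
    for i in range(1, n + 1):
        if i & (i - 1):              # not a power of two: data position
            code[i] = next(bits)
    s = _syndrome(code)
    for j in range(r):               # set parity bits so the syndrome becomes 0
        code[1 << j] = (s >> j) & 1
    code[0] = sum(code[1:]) & 1      # overall parity makes the total even
    return code


def decode(code):
    """Return (status, data_bits); status is 'ok', 'corrected' or 'uncorrectable'."""
    code = list(code)
    s = _syndrome(code)
    overall = sum(code) & 1
    if s == 0 and overall == 0:
        status = "ok"
    elif overall == 1 and s < len(code):
        code[s] ^= 1                 # s == 0 means the overall parity bit flipped
        status = "corrected"
    else:
        return "uncorrectable", None  # even number of flips, or invalid position
    data = [code[i] for i in range(1, len(code)) if i & (i - 1)]
    return status, data


word = [1, 0, 1, 1, 0, 0, 1, 0]
stored = encode(word)
stored[5] ^= 1                       # simulate a single bit-cell losing its charge
print(decode(stored))                # the single flipped bit is corrected
```

A single flipped bit is located by the syndrome and corrected, while two flipped bits are reported as uncorrectable instead of being silently passed on, which is the property that prevents error propagation.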

At this point, you may ask: why integrate DRAM into automotive systems at all, given these drawbacks?

The answer is simply that there is no alternative to DRAM when designing compute-intensive automotive systems, as DRAM is an enabling technology for these three automotive advances:

1. Displays: High-definition displays generally require DRAM, and because displays such as instrumentation consoles and head-up displays relay safety-critical information to the driver, DRAM is needed in this safety-critical application.

2. ADAS systems that process camera and high-bandwidth sensor input: The cameras and other sensors that feed the ADAS system generate a large amount of data, which requires further processing to remove noise, adjust for different lighting conditions, and identify objects and obstacles. This kind of processing requires the bandwidth and capacity of DRAM.

3. Self-driving vehicles: Self-driving vehicles require processing of a number of high-bandwidth input sources and intense computation, making DRAM a necessity.

The most common DRAM device for new ADAS designs is LPDDR4 SDRAM. LPDDR4, originally designed for mobile devices, offers a balance of capacity, speed and form factor that is attractive for automotive applications. As a result, LPDDR4 has been automotive qualified by DRAM manufacturers and is available in automotive temperature grades.

Even with careful physical interface design, there is a non-zero bit error rate at LPDDR4 data transmission speeds, so the risk of data transmission errors must also be addressed. There are a few ways to mitigate errors that occur in DRAM devices and to prevent them from propagating into the rest of the system.

The DRAM manufacturer may attempt to create a bit-cell that is more temperature resistant, or the DRAM manufacturer may introduce error correction within the DRAM die to correct for the bit-cells which have lost their charge between refreshes. Even if error correction is present within the DRAM die, the SoC designer may also introduce error correction on the DRAM interface to correct errors in the DRAM.
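To see why even a very small bit error rate matters at these speeds, the expected number of transmission errors can be estimated as bit error rate times bits transferred. The numbers below are assumptions for illustration only: a 16-bit channel running at 3200 Mb/s per pin, and a purely hypothetical bit error rate of 1e-16 (the article does not quote a figure). The function name is also hypothetical.

```python
def expected_errors_per_hour(bit_error_rate: float,
                             bits_per_second_per_pin: float,
                             data_pins: int) -> float:
    """Expected bit errors in one hour of continuous transfers on one channel."""
    bits_per_hour = bits_per_second_per_pin * data_pins * 3600
    return bit_error_rate * bits_per_hour


# Assumed values: hypothetical BER of 1e-16, 3200 Mb/s per pin, 16 data pins.
errors = expected_errors_per_hour(1e-16, 3200e6, 16)
print(f"{errors:.4f} expected errors per hour")
print(f"roughly one error every {1 / errors:.0f} hours of continuous traffic")
```

Under these assumptions a single channel would see an error every few tens of hours of continuous traffic, which is not negligible over the lifetime of a vehicle fleet and is the reason the interface itself is protected, not only the bit-cells.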

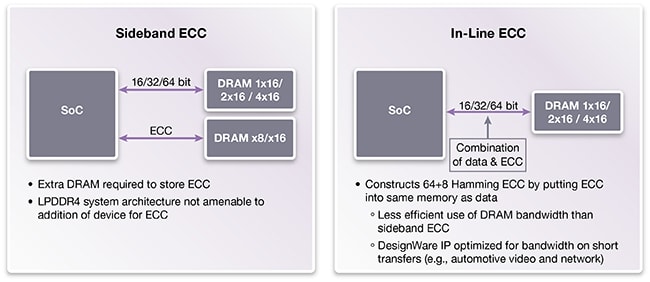

In traditional DDR DRAM designs such as servers and networking chips, error correction data is usually transmitted side-band to the DRAM data. However, with LPDDR4 devices, the arrangement into 16-bit channels (2 channels per die, 2-4 dies per package, 4 channels per package) makes it highly impractical to add pins for sideband Error Correcting Code (ECC) data. In that case, an in-line ECC scheme may be used, which transmits the ECC data on the same data pins as the data it protects (see the figure above).
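One way to picture in-line ECC is as an address mapping: part of the DRAM is reserved for ECC bytes, and each data address maps to the location of the ECC that protects it. The sketch below assumes one ECC byte per 8 data bytes and places the ECC region directly after the usable data region. This layout and the function names are hypothetical, chosen for illustration; actual controllers define their own mapping.

```python
DATA_BYTES_PER_ECC_BYTE = 8  # assumption: one ECC byte protects 64 data bits


def inline_ecc_layout(capacity_bytes: int):
    """Split a DRAM of the given size into (usable_data_bytes, ecc_base_address)."""
    # Largest usable size, a multiple of 8, such that data plus its ECC fits.
    usable = (capacity_bytes * 8 // 9) // 8 * 8
    return usable, usable            # the ECC region starts right after the data


def ecc_address(data_addr: int, ecc_base: int) -> int:
    """Address of the ECC byte protecting the 8-byte word containing data_addr."""
    return ecc_base + data_addr // DATA_BYTES_PER_ECC_BYTE


usable, ecc_base = inline_ecc_layout(1 << 30)   # a 1 GiB device, as an example
print(f"usable data: {usable} bytes, ECC region starts at {hex(ecc_base)}")
print(f"ECC for data address 0x1000 is at {hex(ecc_address(0x1000, ecc_base))}")
```

The trade-off of this kind of scheme is that ECC consumes both capacity and bandwidth on the same pins as the data, since each protected access also implies a read or write of the corresponding ECC location.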

In conclusion, DRAM devices are clearly an enabling technology for advances in automotive safety, features, and convenience. With careful design and a stringent process, DRAM can be introduced into safety-critical areas of the automobile to provide the high bandwidth and large capacity needed for the computing behind driver information systems, ADAS, and self-driving vehicles.

This article was inspired by the excellent paper “Understanding Automotive DDR DRAM” written by Marc Greenberg, Product Marketing Director, in the Synopsys DesignWare Tech Bulletin.

You will find an exhaustive list of Synopsys Automotive Grade DDR interface IP including PHYs, Controllers, Verification IP, architecture design models, and prototyping systems here

By Eric Esteve, IPnest
