How do you reconfigure system characteristics? The answer to that question is well established – through software. Make the underlying hardware general enough and use platform software to update behaviors and tweak hardware configuration registers. This simple fact drove the explosion of embedded processors everywhere …

WEBINAR: FPGAs for Real-Time Machine Learning Inference

With AI applications proliferating, many designers are looking for ways to reduce server footprints in data centers – and turning to FPGA-based accelerator cards for the job. In a 20-minute session, Salvador Alvarez, Sr. Manager of Product Planning at Achronix, provides insight into the potential of FPGAs for real-time machine…

Integration Methodology of High-End SerDes IP into FPGAs

Over the last couple of decades, the electronics communications industry has been a significant driver of FPGA market growth, and it continues to be one. A major reason is the many different high-speed interfaces built into FPGAs to support a variety of communications standards and protocols. The underlying input-output…

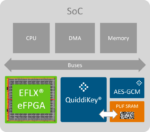

WEBINAR: Taking eFPGA Security to the Next Level

We have written about eFPGA for six years now and about security even longer, so it is natural to combine these two very important topics. Last month we covered the partnership between Flex Logix and Intrinsic ID, and the related white paper. Both companies are SemiWiki partners, so we were able to provide more depth and color:

In the …

Flex Logix: Industry’s First AI Integrated Mini-ITX based System

As the market for edge processing grows, the performance, power and cost requirements of these applications are becoming increasingly demanding. These applications must process data as it arrives and make decisions in real time at the user end. They span the consumer, commercial and industrial market segments.…

WEBINAR: The Rise of the SmartNIC

A recent live discussion between experts Scott Schweitzer, Director of SmartNIC Product Planning with Achronix, and Jon Sreekanth, CTO of Accolade Technology, examined the reasons behind the rise of the SmartNIC and included an “ask us anything” session fielding audience questions about the technology and its use cases.

Three phases

…

A clear VectorPath when AI inference models are uncertain

The chase to add artificial intelligence (AI) into many complex applications is surfacing a new trend. There’s a sense these applications need a lot of AI inference operations, but very few architects can say precisely what those operations will do. Self-driving may be the best example, where improved AI model research and discovery…

Flex Logix Partners With Intrinsic ID To Secure eFPGA Platform

While the ASIC market has always had advantages over alternative solutions, it has faced boom-and-bust cycles, typically driven by high NRE (non-recurring engineering) costs and long time-to-market lead times. Over the same period, the FPGA market has consistently brought out more advanced products with each new generation. With very…

EasyVision: A turnkey vision solution with AI built-in

Artificial intelligence (AI) is reserved for companies with hordes of data scientists, right? There are plenty of big problems where heavy-duty AI fits. There’s also a space of smaller, well-explored problems where lighter AI can deliver rapid results. Flex Logix is taking that idea a step further, packaging their InferX X1 edge…

Time is of the Essence for High-Frequency Traders

In the world of financial trading, nanoseconds count. The faster a trade can be accomplished, the more money a trader can make. Getting a trade in before a competitor also results in improved profits. What does this have to do with the partnership deal recently inked between Silicon Creations and Achronix? Plenty. The two companies…

Crossing the Yield Cliff: IDP V6 and the Future of Manufacturing Forecasting