EDA verification tools generally do a great job of checking the written rules of digital design. Clock domain crossings (CDCs) are more like the unwritten rules of baseball: whether you have a problem stays unclear until later, when retaliation can come swiftly and out of nowhere.

Rarely as overt or dramatic as a bench-clearing brawl, metastability and other CDC issues can be very hard to spot. Static timing analysis is of little help, because asynchronous crossings are typically excluded from timing. Functional simulation may not have exercised the timing scenarios that trigger a failure. At-speed testing in real silicon is an expensive and late way to discover a problem. Is there a pre-silicon alternative?

The new release, Aldec ALINT-PRO-CDC 2015.01, gives designers a way to apply hard-won experience in debugging CDC issues before those issues arise. Linting, or design rule checking, sifts through HDL code looking for constructs that match or violate a set of rules. This automates code review, highlighting areas that may lead to problems.

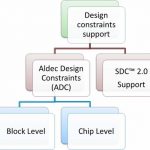

Deciding which rules will catch hard-to-find CDC problems is a challenge in itself. This is where experience and the previously unwritten rules come in. ALINT-PRO-CDC accepts a set of design constraints, and a key new feature is the ability to read Synopsys Design Constraints (SDC) 2.0 files. These files bring in information used to guide synthesis, such as:

- create_clock, create_generated_clock – specify clock network sources and the relations between them

- set_clock_groups – defines groups of clocks that are asynchronous to each other

- set_input_delay, set_output_delay – relate input and output signals to design clocks

- get_clocks, get_pins, get_nets – commands used to access design elements
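To make the role of these clock constraints concrete, here is a minimal Python sketch of how a checker might record them and answer one question: are two clocks asynchronous? The `ClockModel` class and its method names are hypothetical and only loosely mirror create_clock, create_generated_clock and set_clock_groups -asynchronous; this is not a real SDC parser, and real SDC semantics (exclusive clock groups, wildcard get_clocks queries, and so on) are much richer.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Set


@dataclass
class ClockModel:
    """Toy model of a few SDC clock constraints (hypothetical, not a real parser)."""
    # clock name -> master clock (None for a primary clock from create_clock)
    masters: Dict[str, Optional[str]] = field(default_factory=dict)
    # one entry per "set_clock_groups -asynchronous" command: its list of groups
    async_commands: List[List[Set[str]]] = field(default_factory=list)

    def create_clock(self, name: str) -> None:
        self.masters[name] = None

    def create_generated_clock(self, name: str, master: str) -> None:
        self.masters[name] = master

    def set_clock_groups_async(self, *groups: Set[str]) -> None:
        self.async_commands.append([set(g) for g in groups])

    def primary(self, clk: str) -> str:
        # Walk generated clocks back to the primary clock they derive from.
        while self.masters[clk] is not None:
            clk = self.masters[clk]
        return clk

    def is_asynchronous(self, a: str, b: str) -> bool:
        # Asynchronous if any command places the two clocks in different groups.
        for groups in self.async_commands:
            ia = next((i for i, g in enumerate(groups) if a in g), None)
            ib = next((i for i, g in enumerate(groups) if b in g), None)
            if ia is not None and ib is not None and ia != ib:
                return True
        return False


# Hypothetical clocks: a system clock, a divided copy, and an Ethernet clock.
m = ClockModel()
m.create_clock("sys_clk")
m.create_generated_clock("sys_div2", "sys_clk")
m.create_clock("eth_clk")
m.set_clock_groups_async({"sys_clk", "sys_div2"}, {"eth_clk"})
print(m.is_asynchronous("sys_div2", "eth_clk"))  # True
print(m.is_asynchronous("sys_clk", "sys_div2"))  # False
print(m.primary("sys_div2"))                     # sys_clk
```

Any signal passing between a pair of clocks for which `is_asynchronous` returns True is a crossing a CDC checker would then inspect for proper synchronization.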

Without the constraints written for synthesis, this information can be difficult to glean from HDL code alone. Most EDA platforms already work with SDC 2.0 files.

Aldec has gone a step further, allowing teams to add constraint extensions. These can describe elements such as custom synchronizers, encrypted IP, behavioral models, FPGA vendor primitives, reset networks, and other constructs. If particular areas of a design, or certain design approaches, caused CDC issues in earlier debugging, that knowledge can be captured in the form of constraints.

Once constraints are established, ALINT-PRO-CDC applies several analysis engines. Its synthesis engine examines clocked elements and performs conditional analysis. A pattern-matching engine validates synchronizer structures and finds forbidden netlist patterns. Clocks and resets are detected automatically, and clock domains are extracted.
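The idea behind synchronizer pattern matching can be illustrated with a deliberately small Python sketch. It assumes a hypothetical flattened netlist in which each register records its clock, the register driving its D input, and whether combinational logic sits on that path. It then flags two classic problems: logic in front of a synchronizer and a missing second flip-flop stage. The `Flop` type and `check_crossings` function are illustrative names, not part of ALINT-PRO-CDC, whose rule set is far more extensive.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional, Tuple


@dataclass(frozen=True)
class Flop:
    """One register in a hypothetical flattened netlist."""
    name: str
    clock: str
    d_from: Optional[str] = None   # driving register, None for a primary input
    through_logic: bool = False    # True if combinational logic sits on the D path


def check_crossings(
    flops: List[Flop], asynchronous: Callable[[str, str], bool]
) -> List[Tuple[str, str]]:
    """Flag asynchronous crossings that do not land in a clean two-flop synchronizer."""
    by_name: Dict[str, Flop] = {f.name: f for f in flops}
    fanout: Dict[str, List[Flop]] = {f.name: [] for f in flops}
    for f in flops:
        if f.d_from in fanout:
            fanout[f.d_from].append(f)

    issues: List[Tuple[str, str]] = []
    for f in flops:
        src = by_name.get(f.d_from) if f.d_from else None
        if src is None or not asynchronous(src.clock, f.clock):
            continue  # not a clock domain crossing
        if f.through_logic:
            issues.append((f.name, "combinational logic before synchronizer"))
        loads = fanout[f.name]
        # Expect exactly one load: a second stage clocked in the same domain.
        if not (len(loads) == 1 and loads[0].clock == f.clock
                and not loads[0].through_logic):
            issues.append((f.name, "missing second synchronizer stage"))
    return issues


netlist = [
    Flop("req", "clk_a"),
    Flop("req_s1", "clk_b", d_from="req"),          # proper two-flop synchronizer
    Flop("req_s2", "clk_b", d_from="req_s1"),
    Flop("flag_s1", "clk_b", d_from="req"),         # single-stage capture
    Flop("data_s1", "clk_b", d_from="req", through_logic=True),
    Flop("data_s2", "clk_b", d_from="data_s1"),
]
# For this example, treat any two different clocks as asynchronous.
issues = check_crossings(netlist, lambda a, b: a != b)
print(issues)
# [('flag_s1', 'missing second synchronizer stage'),
#  ('data_s1', 'combinational logic before synchronizer')]
```

A production tool works on a synthesized netlist and recognizes many more structures (handshakes, FIFOs, reset synchronizers), but the principle is the same: classify each crossing, then match it against known-good and forbidden patterns.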

The strategy goes beyond static checks: ALINT-PRO-CDC integrates with Aldec Riviera-PRO for dynamic checks during simulation. This makes metastability insertion far more effective, targeting specific areas of the design precisely rather than hunting around at random. Additional assertions and coverage statements help ensure that CDC issues are exposed.
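The article does not describe how Riviera-PRO injects metastability. A common simplification, used in this Python sketch, is to let a synchronizer's first flop resolve randomly to the old or new value whenever its input changes near the capture edge, which delays the capture by a cycle. Applying that to a multi-bit bus synchronized bit by bit exposes a classic CDC bug: the receiver can see values that were never on the bus. The function names are hypothetical.

```python
import random


def first_stage(old: int, new: int, changed_near_edge: bool, rng: random.Random) -> int:
    """Model a synchronizer's first flop: if its input changed inside the
    setup/hold window, randomly resolve to the old or the new value."""
    if changed_near_edge and rng.random() < 0.5:
        return old  # capture slips by one clock cycle
    return new


def sync_bus_bitwise(old: int, new: int, width: int, rng: random.Random) -> int:
    """Capture a bus transition with an independent synchronizer per bit."""
    result = 0
    for bit in range(width):
        o, n = (old >> bit) & 1, (new >> bit) & 1
        result |= first_stage(o, n, o != n, rng) << bit
    return result


rng = random.Random(2015)
# Binary count 7 -> 8 flips all four bits, so per-bit resolution can
# produce values that were never on the bus.
binary_seen = {sync_bus_bitwise(0b0111, 0b1000, 4, rng) for _ in range(200)}
# Gray-code count 4 -> 5 (0b0110 -> 0b0111) flips one bit, so only
# 6 or 7 can appear.
gray_seen = {sync_bus_bitwise(0b0110, 0b0111, 4, rng) for _ in range(200)}
print(sorted(binary_seen))
print(sorted(gray_seen))
```

Without injection, a zero-delay functional simulation would always capture the new value and the binary-counter bug would stay hidden. With injection applied only at known crossings, it shows up quickly, which is why targeting matters.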

Linting is a fabulous tool for finding and highlighting subtle problems in code that may lead to defects; perhaps its most famous application was in the Toyota sudden acceleration investigations. HDL design is philosophically no different from software design, and it is surprising that linting is not used more broadly in EDA circles.

Aldec hopes not only to help designers prevent CDC-related bugs, but also to add another tool to the verification process for safety-critical designs, such as the one called for in DO-254. With more third-party IP and growing design complexity, the burden on designers who review code without automated help is becoming dangerously high.

Writing the unwritten CDC rules can prevent problems later in the design season. For more information, including an overview presentation, see the Aldec ALINT-PRO-CDC home page.
