The NFL has its Super Bowl each year, EDA vendors attend DAC, and the test folks attend ITC, which was in Anaheim a few weeks ago. I’ve marketed ATPG, BIST and DFT tools before, so I like to keep updated on what’s happening at conferences like ITC. Robert Ruiz from Synopsys spoke with me by phone to provide an update on three new things that they shared at ITC this year.

- New ATPG Technology

- ISO 26262-5 Certification (Auto reliability standard)

- Improved support of FinFET and emerging node tests

New ATPG Technology

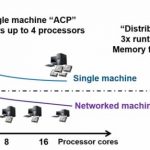

You can only enhance EDA software so much by adding incremental new features; sometimes you have to start over from scratch to achieve something great. In this case the engineers at Synopsys rewrote their ATPG algorithm, and the new version produces results some 10X faster than before. Just think about the difference between waiting 10 hours and waiting just 1 hour. Of course, we all want that 1 hour result because of the productivity gains it provides.

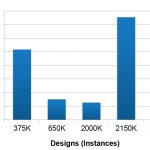

This faster ATPG engine takes more effective advantage of all the cores in your machine. In the previous ATPG algorithm each core would read in the entire design, while with the new algorithm the paths for each fault are distributed to an available core, thus using far less RAM. Here’s a look at some actual ATPG run time speed-up results across five different designs:

The time spent diagnosing where faults are located in actual silicon is also reduced. This improvement helps foundries and IDMs bring up new process nodes more quickly.
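To make the per-fault distribution idea concrete, here is a minimal toy sketch in Python. It is not the Synopsys algorithm, whose internals were not described; the three-input circuit, the function names (`evaluate`, `find_test`, `generate_patterns`) and the brute-force pattern search are all assumptions made for illustration. Each stuck-at fault becomes one small work item that is handed to whichever worker is free.

```python
from concurrent.futures import ThreadPoolExecutor
from itertools import product

INPUTS = ("a", "b", "c")


def evaluate(a, b, c, fault=None):
    """Evaluate the toy circuit y = (a AND b) OR c.

    `fault` is an optional (net_name, stuck_value) pair that forces
    one net to a constant, modelling a single stuck-at fault.
    """
    nets = {"a": a, "b": b, "c": c}

    def force(name):
        # Overwrite the net if it is the faulty one.
        if fault is not None and fault[0] == name:
            nets[name] = fault[1]

    for name in INPUTS:
        force(name)
    nets["n1"] = nets["a"] & nets["b"]
    force("n1")
    nets["y"] = nets["n1"] | nets["c"]
    force("y")
    return nets["y"]


def find_test(fault):
    """Work item for one fault: brute-force search for a detecting pattern."""
    for pattern in product((0, 1), repeat=len(INPUTS)):
        if evaluate(*pattern) != evaluate(*pattern, fault=fault):
            return fault, pattern
    return fault, None  # fault is undetectable in this circuit


def generate_patterns(faults, workers=4):
    """Hand each fault to the next free worker and collect the results."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return dict(pool.map(find_test, faults))


# Hypothetical fault list: every net stuck at 0 and stuck at 1.
FAULTS = [(net, v) for net in ("a", "b", "c", "n1", "y") for v in (0, 1)]

if __name__ == "__main__":
    for fault, pattern in generate_patterns(FAULTS).items():
        print(fault, "->", pattern)
```

A thread pool keeps the sketch easy to run anywhere; in Python, swapping in `ProcessPoolExecutor` would be the way to spread the work items over separate cores. The point is only the shape of the work: each task carries one fault rather than a private copy of the whole job.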

Another benefit of the new ATPG engine is that the quality of the patterns has improved, so you can expect a reduction in the number of test patterns by around 25% as shown below:

ISO 26262-5

The automotive market is adopting an increasing amount of semiconductor content with each new generation of vehicles, and this market has a well-defined requirement for functional safety as defined by the ISO 26262 standard. Both the design process and the EDA software must meet the ISO standard. To that end, the following Synopsys EDA tools have achieved this functional safety certification:

- TetraMAX ATPG

- DFTMAX Ultra

- DesignWare STAR Memory System

- STAR Hierarchical System

FinFET and Emerging Node Tests

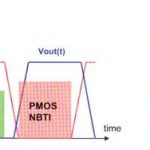

With FinFET transistors there are new process defects that require new fault models like:

- Metal shorts

- Open vias

- Resistive shorts

- Resistive opens

- Litho-induced defects

You really need cell-aware faults, which are based upon transistor-level defects found inside the cells.

You can now perform cell-aware characterization using a SPICE tool like HSPICE in about one day, which is another 10X speed improvement.

Summary

The faster, more efficient ATPG algorithms at Synopsys are certainly going to make test engineers more productive in their jobs. Complying with the ISO standard for automotive safety will be another benefit of using the Synopsys DFT tools. Finally, you can be confident that the silicon defects in FinFET designs are properly modeled.

The big question is, when can I get hold of this new technology? Early customers should get the first use of this technology by the end of the year.
