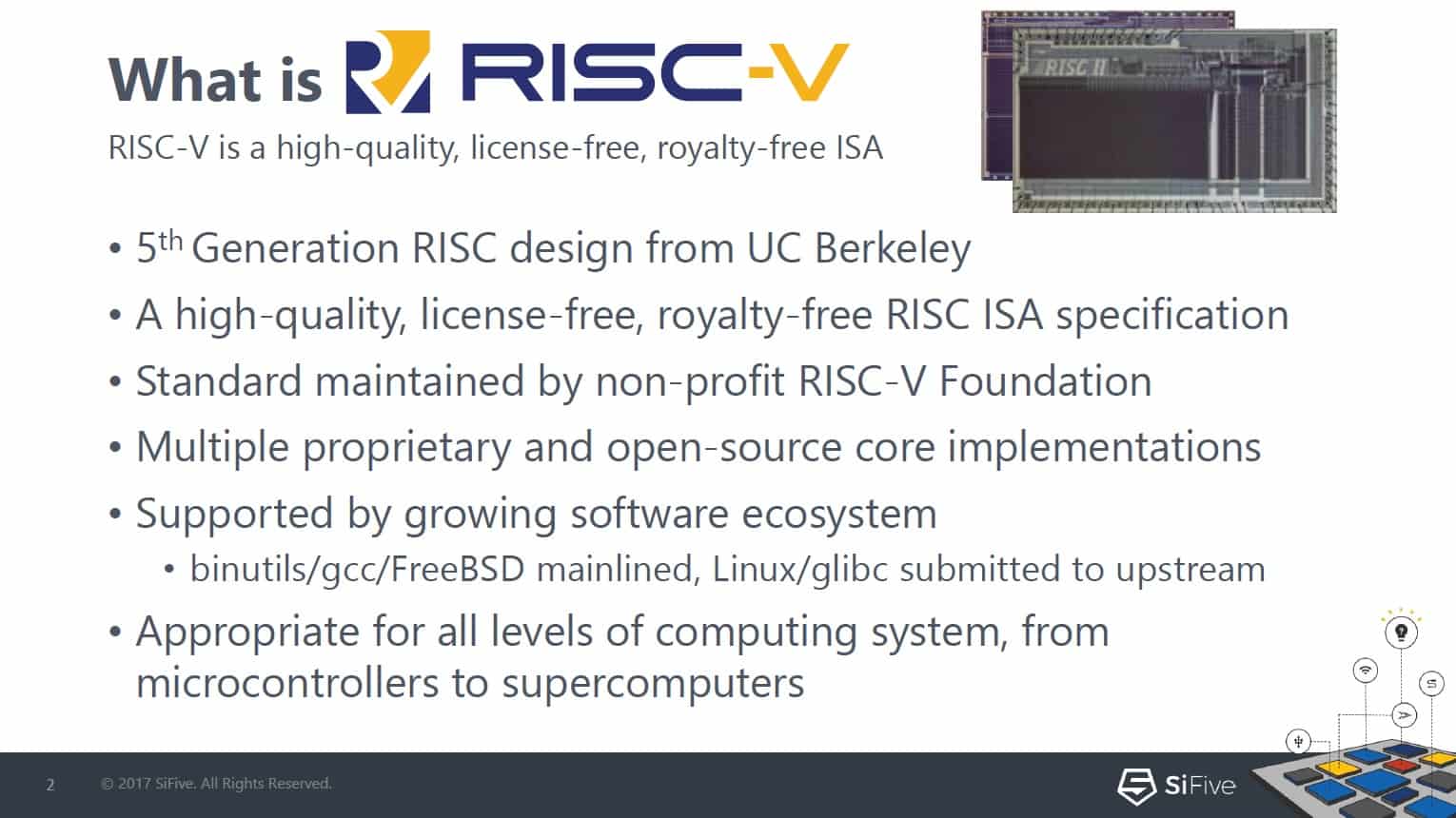

I was at the 7th RISC-V Workshop for two days this week. It was hosted by Western Digital at their headquarters in Milpitas. If you have not been following RISC-V, it is an open source Instruction Set Architecture (ISA) for processor design. The initiative started at Berkeley and has been catching on like wildfire. There are a number of RTL implementations that work in FPGAs or SoCs, and there is also production silicon from companies such as SiFive. The RISC-V Workshop was sold out, with over 500 attendees, most of whom stayed for the full two days.

The agenda was filled with detailed technical presentations from a wide range of institutions and companies. They covered proposed additions to the specification, commercial products using RISC-V, and research projects leveraging the ISA. Topics ranged from server farm simulation and machine learning to debugging tools and novel applications.

The keynote was given by Western Digital CTO Martin Fink. He had several surprising things to tell us. First off, after talking in depth about Western Digital’s take on big data versus fast data, he mentioned that Western Digital actually ships about 1 billion processors a year. These processors enable USB drives, hard drives, solid state drives and more. They play a crucial and growing role in moving and processing data. We are all familiar with the caching schemes they use to improve performance and the monitoring they do to maintain data integrity. In the future, filtering and processing might even occur directly on the storage device, aided by more advanced and powerful processors.

The second surprising announcement that Martin made was that Western Digital is committing to transition all of these processors to RISC-V. While unexpected, it probably should not have come as a complete surprise. The slide showing companies supporting RISC-V barely has any white space on it these days. Almost every large semiconductor company is represented.

The two days of talks made clear that the RISC-V ecosystem is being built out at a rapid pace and that there is a lot of momentum. Low-end implementations of RISC-V were handed out to some of the guests in a smart name tag designed by Antmicro that uses the E310 from SiFive. SiFive has announced a 5-core chip that is suitable for running Linux. At the upper end of the performance spectrum, a new company called Esperanto came out of stealth mode at the Workshop to announce its technology, which uses massively parallel RISC-V processor chips to tackle machine learning.

I’ll be writing more about RISC-V, but because it is open source, you can go directly to the RISC-V website to view the specs and learn about the current implementations, the development tools, and the additions planned for the spec. It’s worth noting that the core parts of the ISA are already defined and frozen, so they can be relied upon for development.

RISC-V has the potential to be as transformative as Linux or HTML. It appears to have the ability to scale from MCUs to server-class processors. People are already using it in a wide range of applications. As an analyst, I attend a lot of technology events, and I think the turnout and enthusiasm for this event were exceptional.