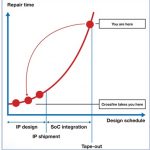

IP must be properly qualified before attempting to use them in any IC design flow. One cannot wait to catch issues further down the chip design cycle. Waiting for issues to appear during design verification poses extremely high risks, including schedule slippage. For example, connection errors in transistor bulk terminals where timing and power closure will work regardless. Such an issue would only be uncovered during final SPICE netlist checks. Another potential problem could include a case where LEF does not match GDS, completely slipping through the cracks, through full synthesis, and would only be caught during chip level DRC or LVS. This would ultimately require updates to the IP as well as re-synthesis (more slippage).

How can one avoid these potential problems? Simple, with Fractal’s Crossfire QA suite. Fractal is your specialized partner for IP qualification. Crossfire can help you deal with design view complexities, increasing amount of checks required to correctly QA an IP, and the difficulties of dealing with excessive volumes of data.

Crossfire supports over 30 standard design formats, from front-end to back-end, including simulation and schematic views, binary databases such as Milkyway, OpenAccess, and NDM, documentation, and custom formats such as Logic Vision and Ansys APL. Any other ASCII based custom formats can also be easily integrated into the tool.

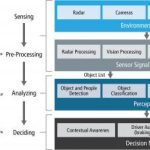

Getting back to the scope of this article, the recommended baseline of checks can be separated into three sections: cell and pin presence for all formats, back-end checks, and front-end related checks.

Cell and Pin Presence Checks

Although consistency checks such as cell and pin presence may sound trivial, and for the most part, they are, one cannot sweep such an important task under the rug. Don’t be surprised if an IP or standard cell library from a well-known IP vendor is delivered with inconsistencies between the various formats, including cell and pins names, port direction, and hierarchy differences.

Back-end Checks

Ensuring layout related consistencies across all back-end related formats is an important part of the IP QA qualification. Pin labels and shape layers must match across all layout and abstract formats. All layout formats such as GDS, Oasis, Milkyway CEL, NDM and OpenAccess layout views must directly match across the board. When comparing a layout to an abstract format such as LEF, Milkyway FRAM or NDM frame, one must ensure that all layer blockages correctly cover un-routable areas in the layout. On top of that, pin shapes and layers must match in order to guarantee a clean DRC/LVS verification down the line.

Other important checks to consider include area attribute definitions for non-layout formats which must match the area defined by the boundary layers for various layout formats. IP and standard cell pins must be accessible by the router and for non-standard cell related IP, pin obstruction needs to be checked in order to ensure accessibility. In some cases, ensuring that all pins are on a pre-defined grid can also be a necessary task. In the end, these checks will ensure a quicker and less error-prone P&R execution.

Front-end Checks

Front-end checks can be broken into seven separate sections: timing arc, NLDM, CCS, ESCM/EM, NLPM, functional characterization, and functional verification. In this blog, we’ll be covering the latter two related to functional checks. The first five sections related to characterization deserve an article all on their own, therefore, they will be covered in an upcoming blog.

Functional characterization checks ensure the timing arcs are defined correctly when compared the given Boolean functions for formats like Liberty, Verilog, and VHDL. Other checks include power down function correctness, ensuring related power and ground pins are defined correctly when compared to spice netlists or UPF models (correct pins are extracted from spice by traversing the circuits defined in the spice format). We also recommend checking related bias pins and whether input pins are correctly connected to gate or antenna diodes.

When dealing with standard cell libraries, it is important to establish the Boolean equivalence of all formats that describe the behavior of a cell. This will ensure that all formats behave in the same manner when dealing with functionality during various front-end related timing simulations.

What else can Crossfire do?

Crossfire is technology independent. From a tool perspective, the differences include:

- Exponential data size growth (up to 2x when compared to previous node)

- Introduction of new design formats (i.e. NDM)

- Number of corners increasing drastically in newer nodes (i.e. FinFet based)

As a tool, Crossfire only has to differentiate between standard cell libraries and all other IP (memories, digital, analog, mixed-signal, etc.). Some checks, such as abutment or functional verification, are designed specifically for standard cell libraries.

Crossfire is a proven validation tool used by various Tier 1 customers. All checks and formats supported by Crossfire are based upon direct cooperation with our customers. Customers moving from “old” to “new” technology nodes automatically get all the checks and format support developed for and used by Tier 1 customers. This cycle of shared knowledge is passed on from one technology node to another.

Conclusion

IP qualification is an essential part of any IC design flow. A correct-by-construction approach is needed since fixing a few bugs close to tapeout is a recipe for disaster. Given that, IP designers need a dedicated partner for QA solutions that ensures the QA needs of the latest process nodes are always up-to-date. In-house QA expertise increases productivity when integrated with Crossfire. All framework, parsing, reporting, and performance optimization is handled by the tool. On top of that, with a given list of recommended baseline checks, we ensure that all customers use the same minimum standard of IP validation for all designs.

TSMC OIP

The Crossfire team and I will be at a booth in the TSMC OIP exhibit hall next week giving out free copies of our Fabless book, discussing the need for IP qualification, and demonstrating the latest Crossfire software. I hope to see you there!