Computer vision has been a research topic since the 1960s, and today we enjoy the results of that work in products all around us. Robots with computer vision are performing a growing number of tasks, and even farmers are using computer vision systems to become more productive:

- AgEagle® has a drone that takes aerial images to create a geo-tagged layout of the land and crops

- Prospera® analyzes crop images for health, nutrient levels, water and crop rotation

- Blue River Technology® created a robot that uses computer vision to spray weeds in crop fields

- Energid Technologies® built a system to pick citrus fruits using six cameras

- Case IH® enables self-driving tractors to till the soil and harvest crops

- John Deere® provides JDLink so that farmers can track and analyze their machinery, monitor for maintenance and connect to a local dealer at service time

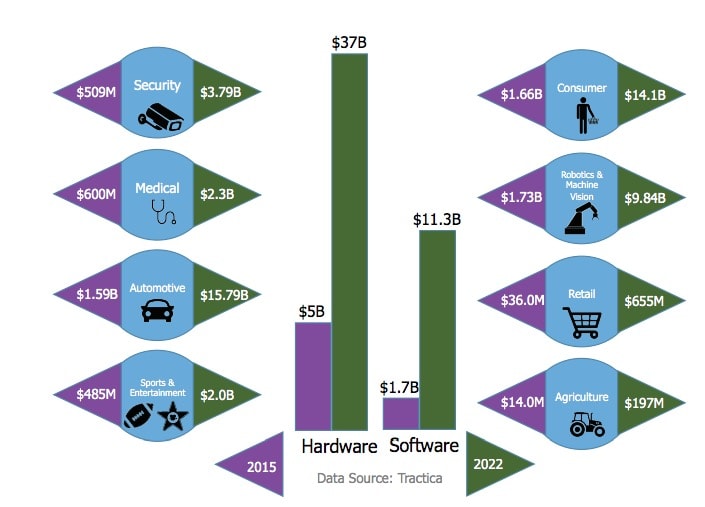

The market for computer vision products is expected to reach $48 billion by 2022, according to the research firm Tractica.

Across the following eight segments, we can expect new products based on new semiconductors, image sensors and specialized software:

- Automotive – driver assistance, autonomous

- Sports and entertainment – special effects, movies, TV content

- Consumer – smartphones, AR, VR, biometrics, cameras

- Robotics – industrial, inspection, drones

- Security – surveillance, crime prevention, face tracking

- Medical – 3D images from body scanners

- Retail – customer tracking, buying analytics

- Agriculture – crop inspection, harvesting, weeding, tilling

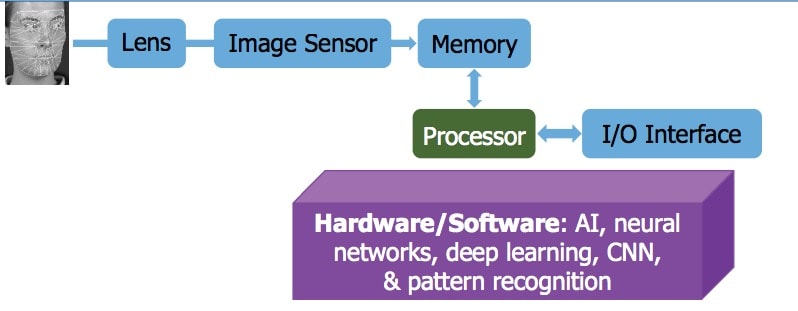

I use Facebook every day, and after I upload a photo it automatically identifies my face and my friends' faces by using Convolutional Neural Networks (CNNs) for image recognition and classification. New computer vision products and services are helping our economy, and they are made possible by technologies like:

- Deep learning to identify and classify objects

- WiFi and mobile networks

- Fast image uploading

- CNN training with huge image databases

- Parallel processing in the cloud

- Software libraries

- Affordable chip and memory prices

- Plentiful CMOS image sensors

The pace of innovation in computer vision has attracted $3.48 billion in VC funding for AI startups (neural networks, deep learning, CNNs) between Q3 2015 and Q3 2016, according to Venture Pulse from KPMG International and CB Insights. The top AI investors from 2011 to June 2016 included Intel Capital, Google Ventures, GE Ventures, Samsung Ventures and Bloomberg Beta. Talk about merger mania: 140 VC-backed AI companies were acquired by these AI investors.

A computer vision system has the following basic building blocks:

Engineers have many choices on how to implement their computer vision system:

- CPU

- DSP

- GPU

- FPGA, embedded FPGA

- ASIC

Each option has a downside. A GPU consumes the most power, so it wouldn't be appropriate for an onboard system. A DSP uses less power than a GPU, but DSPs aren't efficient at CNN tasks. CPUs are popular for general-purpose computing, but they also tend to be the slowest at vision tasks, so they wouldn't be appropriate for visual identification in automobiles. FPGAs are helpful for getting a vision prototype up and running to see whether the concept is viable before committing to an ASIC for the lowest cost and lowest power. An ASIC approach to vision processing could therefore provide the highest performance at the lowest cost, but it requires knowledge of chip-level hardware design.
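To see why these trade-offs matter for CNN workloads, it helps to count the work in a single convolution layer. A layer with an $H_{out} \times W_{out}$ output, $C_{in}$ input channels, $C_{out}$ output channels and $K \times K$ kernels needs one multiply-accumulate (MAC) per kernel weight per output value. The layer dimensions in the worked example below are hypothetical, chosen only to show the order of magnitude:

$$
\text{MACs per layer} = H_{out} \, W_{out} \, C_{out} \, C_{in} \, K^2
$$

$$
224 \times 224 \times 64 \times 3 \times 3^2 = 86{,}704{,}128 \approx 8.7 \times 10^{7}\ \text{MACs per frame}
$$

$$
8.67 \times 10^{7} \times 30\ \text{frames/s} \approx 2.6 \times 10^{9}\ \text{MACs/s}
$$

That is for one layer alone; a network stacks many layers, so how many MACs a processor can perform per second, and per watt, largely decides which of the options above fits a given product.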

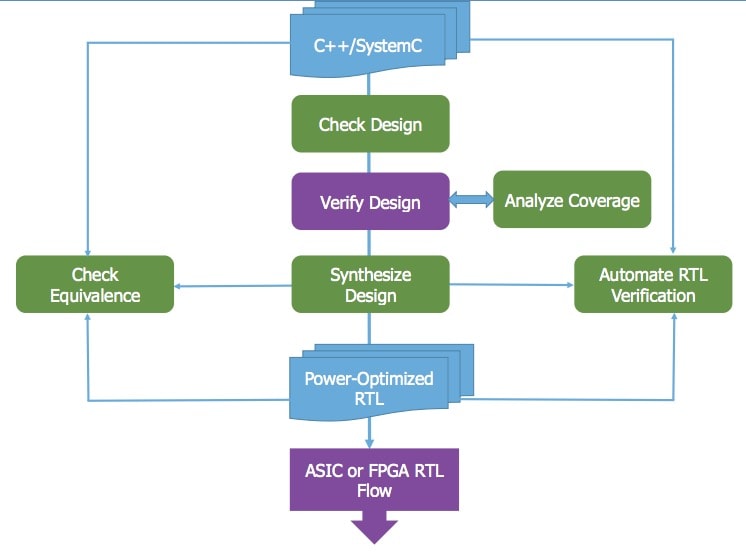

Coming to the rescue of engineers new to hardware design in the computer vision field is the very popular C++ language, which can now be used both to describe algorithms and as the source for hardware description. To get from C++ to RTL code, an engineer can use High-Level Synthesis (HLS) as a starting point instead of the lower-level RTL languages like SystemVerilog or VHDL. With the algorithm written in C++, it is possible to compare the speed and power of an idea implemented on an ASIC, FPGA, CPU or GPU. Iterating in C++ allows the system architect to make trade-offs and arrive at an optimal design for their unique application.

HLS tools have been around for the past 15 years, and Mentor (a Siemens business) has been automating this approach with its Catapult HLS tool, as shown in this flow diagram:

An algorithm designer starts out with C++ code and ends up with RTL code ready for use in ASIC or FPGA devices. Along the way you can check the concept for errors before simulation, use a testing environment, and apply formal equivalence checking to ensure that the RTL matches the C++. The generated RTL is optimized for power and ready for functional simulation and RTL synthesis into technology-specific cells. By using a C++ flow and HLS technology like Catapult, computer vision teams can go from concept to hardware prototype in record time.

Summary

Expect computer vision products to grow in both number and volume over the coming years. To build a new vision system, there is a proven flow from C++ to RTL to cells using Mentor's Catapult HLS software. You don't have to be a hardware expert to get optimal hardware results with an HLS tool flow.



Comments

2 Replies to “Computer Vision and High-Level Synthesis”
