In late September, the MIPI Alliance held its DevCon conference as a virtual event. There were several excellent presentations at the conference. One of those was titled “A MIPI CSI-2/MIPI D-PHY Solution for AI Edge Devices” by Ashraf Takla of Mixel. Looking at the daily volume of technology news about applications moving to the edge, one may be led to believe that pretty much every application has moved to the edge. Ashraf does a wonderful job of establishing how cloud and edge processing complement each other and why appropriate partitioning is important for achieving optimal system performance. He then proceeds to explain why the MIPI interface is a great fit for AI edge devices. You can watch Mixel’s entire presentation at MIPI DevCon here.

While theoretically everything can be processed at the edge or in the cloud, certain functional requirements drive the edge vs cloud processing decisions. This blog includes salient points garnered from the Mixel presentation.

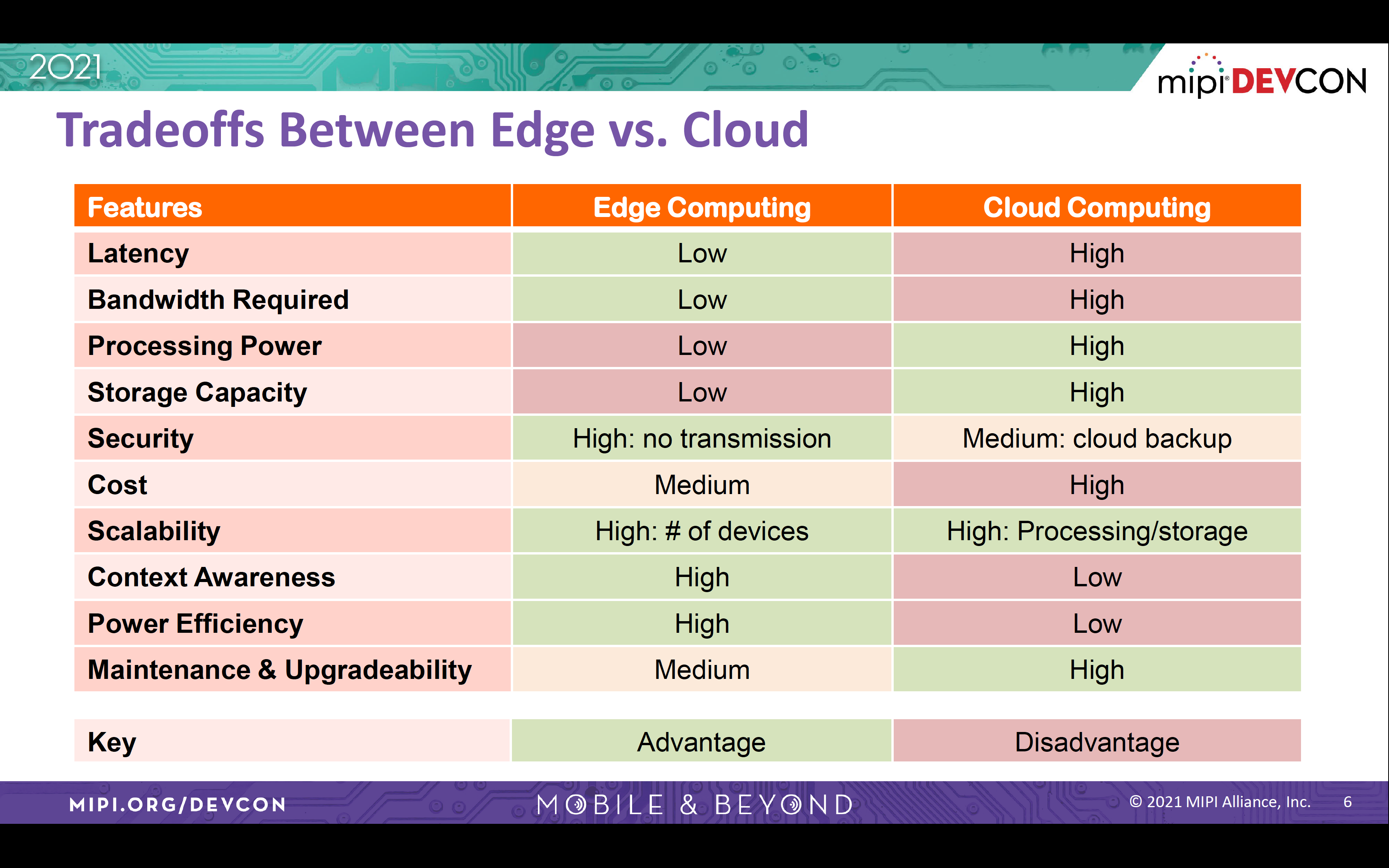

Requirements Favoring Edge Processing

- Low latency in order to make decisions in real-time or near real-time

- Minimizing false notifications to improve battery life; processing at the edge requires less bandwidth as well, enabling more power savings

- Security and privacy; reducing the chance of a security breach by minimizing or eliminating transmission of raw data to the cloud for processing

- Local processing due to unavailability of broadband/mobile connectivity

- Minimize connectivity costs by reducing bandwidth usage, even when connectivity is available
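To make the bandwidth point concrete, here is a rough back-of-the-envelope sketch in Python. All of the numbers (sensor resolution, bit depth, frame rate, message size) are hypothetical values chosen for illustration and do not come from the Mixel presentation. The comparison is also simplified: a real cloud-based design would typically compress the video before uploading it, so the actual gap would be smaller than this raw-data comparison suggests.

```python
def raw_video_bitrate_mbps(width, height, bits_per_pixel, fps):
    """Uncompressed sensor data rate in megabits per second."""
    return width * height * bits_per_pixel * fps / 1e6


def event_bitrate_mbps(events_per_second, bytes_per_event):
    """Data rate when only inference results (events) are sent upstream."""
    return events_per_second * bytes_per_event * 8 / 1e6


# Hypothetical sensor: 1920x1080, 10-bit RAW, 30 frames per second.
raw = raw_video_bitrate_mbps(1920, 1080, 10, 30)

# Hypothetical edge device: one 64-byte detection message per second.
events = event_bitrate_mbps(1, 64)

print(f"raw stream:  {raw:.2f} Mbit/s")
print(f"events only: {events:.6f} Mbit/s")
print(f"reduction:   about {raw / events:,.0f}x")
```

Under these assumptions, the raw sensor stream that stays on the device is several orders of magnitude larger than the event traffic that leaves it, which is the intuition behind the bandwidth, power, and connectivity-cost items in the list above.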

Requirements Favoring Cloud Processing

- High-capacity compute performance not available at the Edge; essential for complex machine learning and modeling

- Large storage capacity

- Ability to scale storage and compute resources at incremental cost

- High security of data in the data center (but data is at risk during transmission)

- Ease of maintaining and upgrading the hardware

The following is a functional requirements tradeoff table that Mixel has pulled together comparing edge and cloud computing.

MIPI

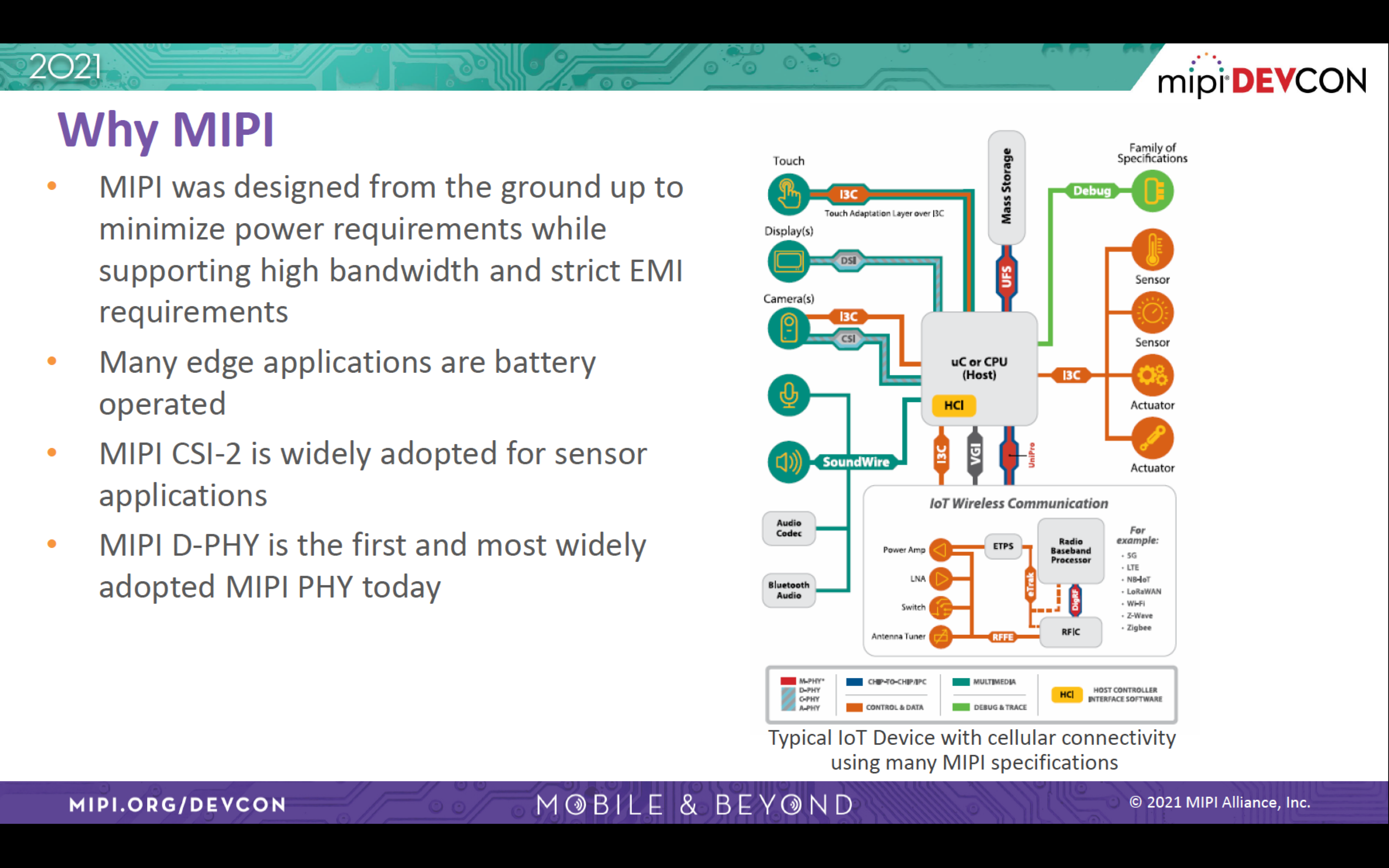

In the world of electronics, interfaces abound. Whether it is interfaces between systems or between chips, they are based on standards. Standards ensure compatibility and interoperability. One such interface that has found wide adoption is MIPI. Originally, it was developed to standardize interfaces within the mobile phone industry. Not only has MIPI beaten out other competing standards, it has also expanded its use cases. It is used in many more applications than it was originally developed for. That is the reason the expanded form of the MIPI acronym is not used anymore.

Why MIPI Is Attractive for AI Edge Devices

While already among the popular interfaces, MIPI is seeing rapid demand growth from the surge in artificial intelligence (AI) applications in the marketplace. Whether in IoT or automotive applications, these systems use many sensors to gather data for AI-based decisions. This data needs to be processed to make real-time decisions right there in the field. Because of this low-latency requirement, cloud-datacenter-based processing is giving way to AI edge processing.

Many AI edge applications have very low power budgets and strict EMI requirements. At the same time, they also have reasonable bandwidth needs. MIPI is able to satisfy all three requirements. This expands the range of devices for which MIPI is attractive, many of which are battery-operated and worn or carried on a person.

Additional Points of Interest

During the presentation, Mixel also spotlighted an edge-AI processor that uses its MIPI D-PHY, built on the GlobalFoundries 22FDX process. The Ergo inference processor from Mixel’s customer Perceive can be used to make decisions at the edge. The talk covered use cases for this processor in various target applications. The performance aspects of this processor were covered in an earlier blog.

Ashraf also used one slide to summarize the rationale behind Perceive’s choice of a fully depleted silicon on insulator (FDSOI) process technology for implementing the Ergo SoC. This relates to prior work completed by Mixel that was published in EE Times and covered in an earlier blog.

Summary

The way a system is partitioned between what computing is done in the cloud and what is done on the edge device is important. Getting this partitioning right helps optimize system performance and can determine the viability and success of a particular product. AI edge devices use many types of sensing (visual and audio) to solve specific problems. MIPI specifications are designed from the ground up to meet the low-power, high-bandwidth requirements of edge devices. The selection of process technology for implementing the MIPI PHY and edge chips is an important decision.

If you would like to learn more about Mixel and its MIPI offering, visit the company's website here or learn about its MIPI D-PHY IP here.

Also Read:

FD-SOI Offers Refreshing Performance and Flexibility for Mobile Applications

New Processor Helps Move Inference to the Edge

Mixel Makes Major Move on MIPI D-PHY v2.5
