Edge AI inference is drawing growing attention as demand for AI processing spreads across more diverse applications, including those that need low-power chips in a wide range of consumer and enterprise-class devices. Much of the focus has been on optimizing the neural network processing engine for these smaller parts and the models they run, but optimization also covers how data reaches that engine. In an image recognition use case, the images must come from somewhere, usually a sensor with a MIPI interface. So it makes sense that Perceive integrated low-power MIPI D-PHY IP from Mixel into its latest Ergo 2 Edge AI Processor, bringing images on-chip for AI inference.

Resolutions and frame rates on the rise

AI processors have grown powerful enough to handle larger images from high-resolution sensors at impressive frame rates. It’s crucial to run inferences and make decisions quickly enough to keep up with real-time changes in a scene. Accordingly, Perceive has put considerable emphasis on the image processing pipeline in the Ergo 2.

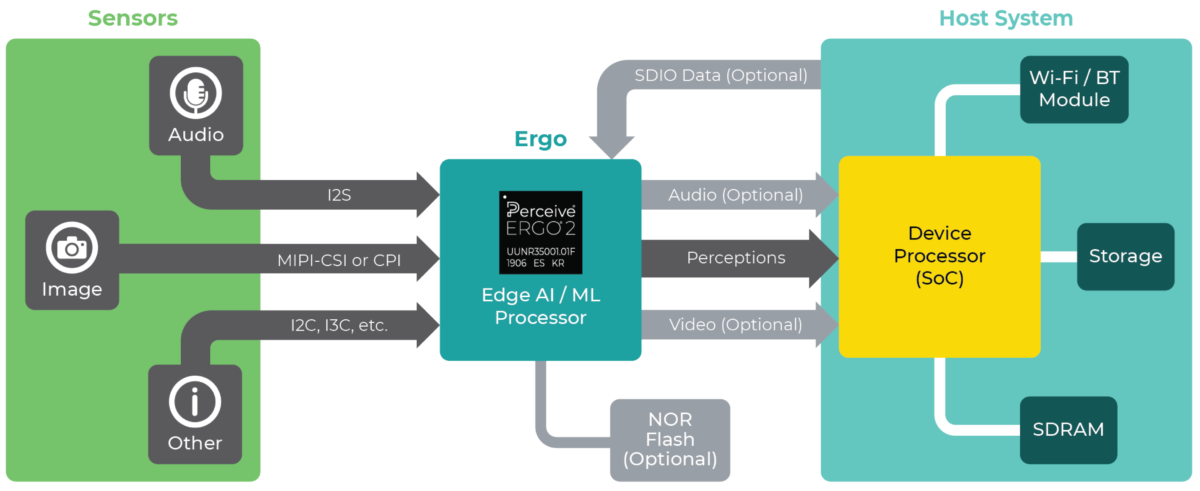

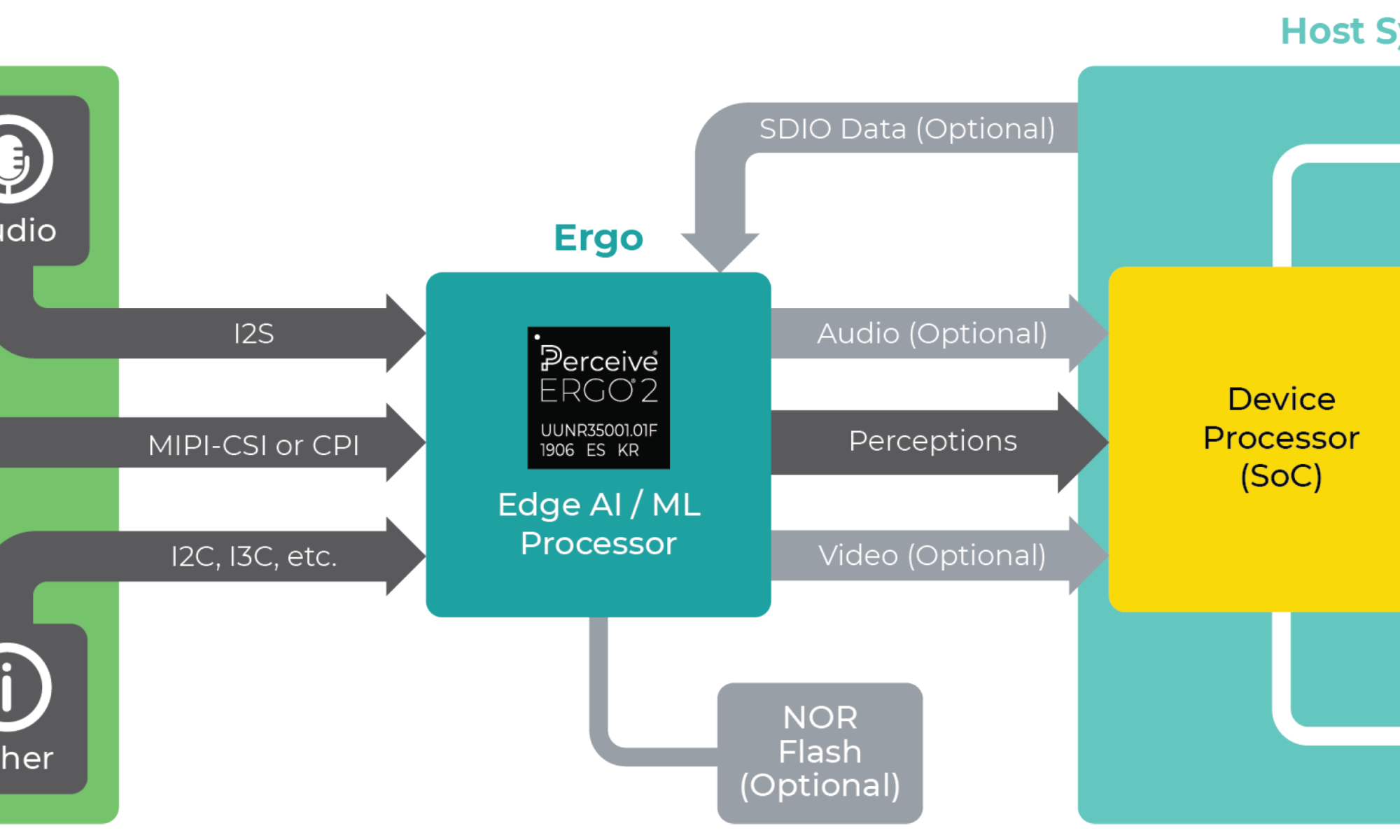

Ergo 2 Edge AI Processor system diagram, courtesy Perceive

Large images with a lot of pixels present a fascinating challenge for device developers. In a sense, image recognition is a misnomer. Most use cases where AI inference adds value call for looking at a region of interest, or a few of them, with relatively few pixels wrapped inside a much larger image filled with mostly uninteresting pixels. Spotting those regions of interest sooner and more accurately determines how well the application runs.
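To make the region-of-interest idea concrete, here is a minimal Python sketch of cropping a region out of a frame and measuring how little of the frame the regions cover. It is not Perceive's implementation; the `Roi` type, the helper names, and the 512 x 512 region size are all hypothetical choices for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Roi:
    """A rectangular region of interest (hypothetical helper type)."""
    x: int
    y: int
    width: int
    height: int


def crop(frame, roi):
    """Return the pixels inside roi from a frame stored as a list of rows."""
    rows = frame[roi.y:roi.y + roi.height]
    return [row[roi.x:roi.x + roi.width] for row in rows]


def roi_fraction(frame_width, frame_height, rois):
    """Fraction of the frame covered by rois, assuming they do not overlap."""
    roi_pixels = sum(r.width * r.height for r in rois)
    return roi_pixels / (frame_width * frame_height)


# Tiny synthetic 8 x 6 frame; each value encodes its (row, column) position.
frame = [[10 * r + c for c in range(8)] for r in range(6)]
patch = crop(frame, Roi(x=2, y=1, width=3, height=2))
print(patch)  # [[12, 13, 14], [22, 23, 24]]

# Four assumed 512 x 512 regions inside a 4672 x 3506 frame.
rois = [Roi(0, 0, 512, 512)] * 4
fraction = roi_fraction(4672, 3506, rois)
print(f"ROIs cover {fraction:.1%} of the frame")
```

With those assumed sizes, the regions cover only a few percent of the full frame, which is why isolating them early pays off.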

The Ergo 2 image processing unit (IPU) has dual, simultaneous pipelines that can isolate interesting pixels, making it easier for AI models to handle perception. The first pipeline supports four regions of interest in images up to 4672 x 3506 pixels at 24 frames per second (fps). The second pipeline can target a single region in a 2048 x 1536 pixel image coming in at 60 fps. The IPU also handles image-wide tasks such as scaling, range compression, rotation, distortion correction, and lens shading correction.
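A quick back-of-the-envelope calculation shows what those two operating points mean in raw pixel throughput. This short Python sketch uses only the resolutions and frame rates quoted above; the function and dictionary names are illustrative.

```python
def pixel_rate(width, height, fps):
    """Pixels per second for a stream of width x height frames at fps."""
    return width * height * fps


# Operating points quoted for the two IPU pipelines.
pipelines = {
    "pipeline 1 (up to four ROIs)": (4672, 3506, 24),
    "pipeline 2 (single ROI)": (2048, 1536, 60),
}

for name, (width, height, fps) in pipelines.items():
    rate = pixel_rate(width, height, fps)
    megapixels = width * height / 1e6
    print(f"{name}: {megapixels:.1f} MP/frame, {rate / 1e6:.1f} Mpixel/s")
```

The first pipeline moves about 393 million pixels per second and the second about 189 million, so the larger, slower stream is actually the heavier load.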

Lost frames can throw off perception

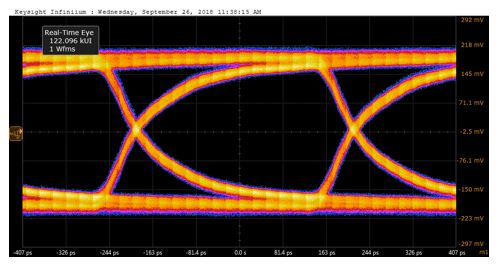

Excessive noise or jitter in these fast, high-resolution images can lead to frame loss due to data errors. Lost frames in an image stream can impact the accuracy of inference operations, leading to missed or incorrect perceptions. Reliable image transfer that holds up to challenging environments is a necessity for accurate perception at the edge.
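One common way for software to notice lost frames is to watch for gaps in a per-frame sequence counter. The Python sketch below assumes each received frame carries a 16-bit counter that increments by one and wraps to zero; that counter and its wrap behavior are assumptions for illustration, not a description of the Ergo 2 or of any particular CSI-2 receiver.

```python
def count_lost_frames(frame_numbers, modulus=1 << 16):
    """Count missing frames from gaps in a wrapping sequence counter.

    Assumes the counter increments by one per frame and wraps at modulus.
    Repeated numbers (gap of zero) are ignored rather than counted as loss.
    """
    lost = 0
    previous = None
    for number in frame_numbers:
        if previous is not None:
            gap = (number - previous) % modulus
            if gap > 1:
                lost += gap - 1
        previous = number
    return lost


print(count_lost_frames([100, 101, 102, 105, 106]))  # 2 (frames 103 and 104)
print(count_lost_frames([65534, 65535, 0, 1]))       # 0 (clean wraparound)
```

An application could use a count like this to flag stretches of video where inference results should be treated with caution.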

A defining feature of the Mixel MIPI D-PHY IP is its clock-forwarded synchronous link that provides high noise immunity and high jitter tolerance. In the Ergo 2, three different MIPI IP solutions are at work: a four-lane CSI-2 TX, a two-lane CSI-2 RX, and a four-lane CSI-2 RX. Each IP block integrates a transmitter or receiver and a 32-bit CSI-2 controller core. Links run up to 2.5 Gbps, with a typical eye pattern shown next.
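For a rough sense of how lane count and link speed relate to image traffic, the Python sketch below compares raw pixel bandwidth with aggregate link capacity. Several things here are assumptions rather than facts from Perceive or Mixel: the 2.5 Gbps figure is treated as a per-lane rate, pixels are taken to be 10 bits each, protocol overhead and blanking are ignored, and the pairing of a resolution with a particular receiver is purely illustrative.

```python
def link_capacity_bps(lanes, lane_rate_bps=2.5e9):
    """Aggregate raw capacity, assuming 2.5 Gbps is a per-lane figure."""
    return lanes * lane_rate_bps


def required_bps(width, height, fps, bits_per_pixel):
    """Raw pixel bandwidth, ignoring protocol overhead and blanking."""
    return width * height * fps * bits_per_pixel


def utilization(width, height, fps, bits_per_pixel, lanes):
    """Fraction of the assumed link capacity the pixel stream would use."""
    needed = required_bps(width, height, fps, bits_per_pixel)
    return needed / link_capacity_bps(lanes)


BITS_PER_PIXEL = 10  # assumed 10-bit raw pixels; the actual format may differ

# Illustrative pairings only; the source does not say which RX feeds which pipeline.
four_lane = utilization(4672, 3506, 24, BITS_PER_PIXEL, lanes=4)
two_lane = utilization(2048, 1536, 60, BITS_PER_PIXEL, lanes=2)
print(f"4672 x 3506 @ 24 fps on four lanes: {four_lane:.0%} of raw capacity")
print(f"2048 x 1536 @ 60 fps on two lanes: {two_lane:.0%} of raw capacity")
```

Under these assumptions both streams fit with room to spare, though real headroom depends on the pixel format and protocol overhead.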

First-pass success makes or breaks smaller chips

A flaw in a large SoC isn’t fun, and a redesign can be expensive. However, a bigger SoC project tends to have a bigger design team, a longer schedule, and a bigger budget. On a smaller chip, a failed first pass can stop a project in its tracks, with debug and re-spin costs quickly escalating beyond the initial development cost.

Although first-pass success isn’t a given in the semiconductor business, Perceive achieved it with the Mixel IP. Mixel supported Perceive with compliance testing, so the fully integrated design went through rigorous MIPI interface characterization before the SoC moved to high-volume production. Mixel MIPI D-PHY IP includes pre-driver and post-driver loopback and built-in self-test features for exercising the transmit and receive interfaces.

By integrating Mixel’s MIPI D-PHY IP, Perceive hit its power, performance, and cost targets for the Ergo 2. Perceive’s customers, in turn, can implement Ergo 2 in smaller, power-constrained devices where battery life is a key metric but AI inference performance cannot be compromised. It’s a good example of how bringing images on-chip for AI inference, with carefully crafted integration, contributes to savings at the small-system level.
