In 2019 I was involved in a major project to move all our engineering and financial systems to the cloud. We succeeded, but it wasn’t easy; we faced a lot of infrastructure challenges along the way. The freedom from facility management and capital budgeting that the cloud offered was significant, however. Look a bit deeper and there is a long list of challenges associated with building the massive compute infrastructure needed to fuel the cloud revolution. Synopsys recently published a very informative White Paper on these challenges. If you’re involved in technology for cloud computing, you need to read this White Paper to understand the challenges ahead of you and how Synopsys is enabling the cloud computing revolution.

If you talk to folks about moving to the cloud, you will get one of two responses:

I’m on the cloud now.

I don’t think the cloud is ready yet, but it is the future.

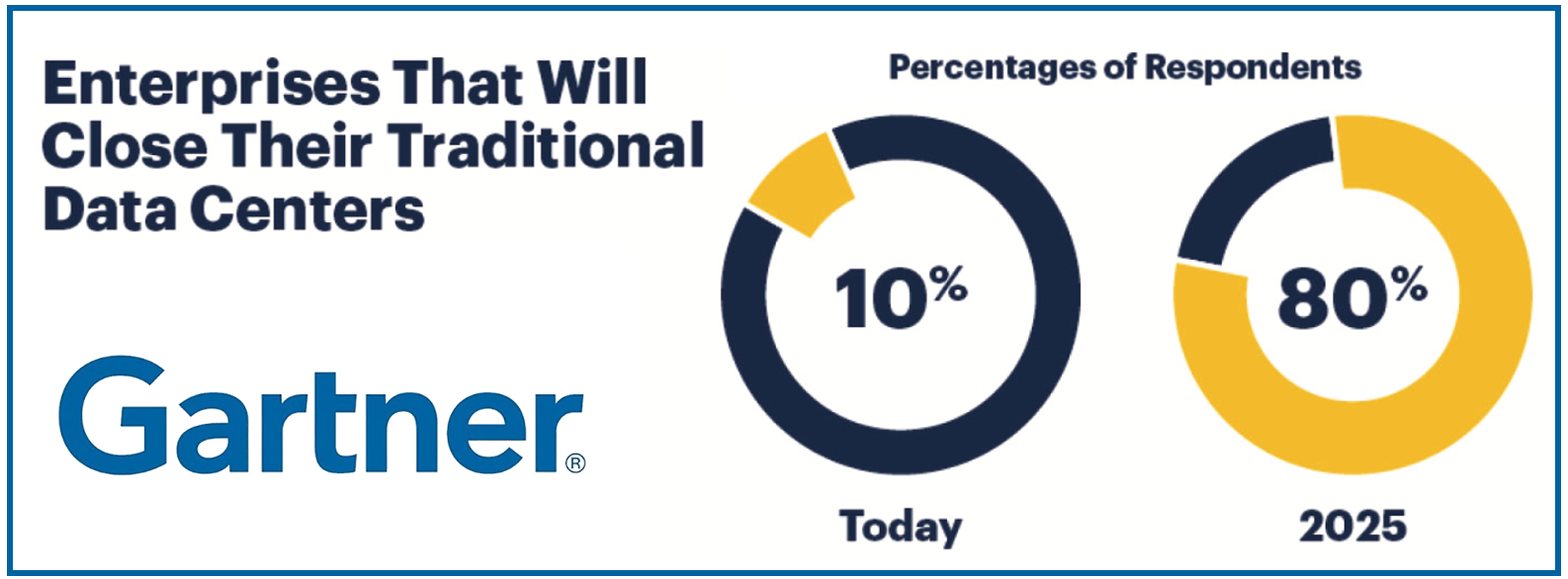

The second response is really a matter of not if, but when. The graphic at the top of this post from a Gartner survey supports this trend. This survey was done about two years ago; I believe the sentiment measured today would be stronger. The Synopsys White Paper shines a light on many of the technical challenges associated with the massive cloud build-out that is occurring around us. It’s good to step back and understand the big picture, and this White Paper does that. It’s written by Scott Durrant, Cloud Segment Marketing Manager at Synopsys. Prior to Synopsys, Scott had a 24-year career at Intel and also spent time in the enterprise software market at places like McAfee. Scott understands the technology foundations of the cloud.

He begins with some interesting trends regarding cloud migration – growth rates, the wide deployment of AI and the expansion of edge computing, for example. There is a prediction from IDC regarding the size of the global datasphere in the coming years that will either excite or frighten you, maybe both. Scott then spends quite a bit of time examining six major functional areas in cloud computing, covering the underlying technology, the challenges and the market trends for each. You will learn a lot. Here is a brief summary of each area.

Compute Servers

Compute capacity, communication bandwidth and energy efficiency are discussed. The various memory technologies and interfaces are explored, along with standards such as Compute Express Link (CXL) and the requirements of high-speed SerDes channels. It’s interesting to see how HBM2E fits. Compute server market share is also presented. This is a surprisingly balanced market – I see no “900-pound Gorilla”. You can also check out a Synopsys webinar I covered here that discusses high-speed communication in the data center.

Network Infrastructure

The main focus here is network switching. The march toward 400G speeds is discussed, along with the various architectures to get there. The market share view here is different. There is indeed a 900-pound Gorilla.

Storage Systems

Next up is storage systems. The use of AI to optimize these systems is discussed. The impact of non-volatile memory technology is examined, along with cache coherency and the relevant standards. There is a strong player in this market, but not as strong as the one in the network infrastructure market.

Visual Computing

This is something of a new category. It refers to the hardware and software needed to perform real-time video processing and analysis. Think online collaboration, movie streaming, virtual reality, security and assisted driving. These applications demand some very high-end processing capability.

Edge Infrastructure

The edge is all about reducing latency. The amount of data collected by IoT devices is exploding. You can see estimates of the number of connected devices that will be deployed in the White Paper. I won’t spoil the details, but I will say these devices are counted in billions. The challenge is processing all this data with latency that fits the application. This essentially leads to tiers of edge computing, so that the right processing can be done at the right proximity to the application. A three-tier view of such a system is presented. All this challenges what we used to think a data center was.
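To make the tiering idea concrete, here is a minimal sketch of how a workload might be matched to an edge tier based on its latency budget. The tier names, latency figures and capacity numbers are placeholders I made up for illustration; they are not taken from the White Paper, and a real placement policy would weigh far more than these two factors.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class EdgeTier:
    name: str
    round_trip_ms: float  # assumed typical round-trip latency to this tier
    compute_units: int    # assumed relative compute capacity of this tier


# Hypothetical three-tier layout, ordered from closest to farthest.
# All values are illustrative assumptions, not figures from the White Paper.
TIERS = (
    EdgeTier("on-premises edge", 5.0, 1),
    EdgeTier("local aggregation site", 20.0, 10),
    EdgeTier("regional data center", 60.0, 100),
)


def place_workload(
    latency_budget_ms: float,
    compute_needed: int,
    tiers: Sequence[EdgeTier] = TIERS,
) -> Optional[EdgeTier]:
    """Pick the farthest tier that still meets the latency budget and has
    enough compute, keeping scarce near-edge capacity free for workloads
    that truly need it. Returns None if no tier qualifies."""
    candidates = [
        t for t in tiers
        if t.round_trip_ms <= latency_budget_ms
        and t.compute_units >= compute_needed
    ]
    if not candidates:
        return None
    return max(candidates, key=lambda t: t.round_trip_ms)
```

With these assumed numbers, a workload with a 10 ms budget lands on the closest tier, while one that can tolerate 100 ms is pushed out to the regional data center. A workload that needs both very low latency and a lot of compute finds no home at all, which is exactly the tension that drives the build-out of edge infrastructure.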

Artificial Intelligence

Last, but certainly not least, is a discussion of AI accelerators. These devices form the very backbone of the whole infrastructure. Some applications demand performance first with power as a secondary requirement, while others demand low power first with a required level of performance. The technology and relevant standards in this area are discussed.

How Synopsys Fits

Throughout the discussion there are examples of where Synopsys IP fits into the various architectures presented. It should come as no surprise that Synopsys offers a substantial footprint here. I strongly recommend you download this White Paper to become acquainted with all the changes happening and how Synopsys is enabling the cloud computing revolution. The White Paper, entitled Addressing the Evolving Technology Needs of Cloud Data Centers with IP, can be downloaded here.

The views, thoughts, and opinions expressed in this blog belong solely to the author, and not to the author’s employer, organization, committee or any other group or individual.

Also Read:

Synopsys Delivers a Brief History of AI chips and Specialty AI IP

The Heart of Trust in the Cloud. Hardware Security IP

Synopsys is Extending CXL Applications with New IP
