The tech standards cycle almost always goes like this: Problems or limits develop with the existing way of doing things. Innovators attempt to engineer solutions, usually many of them. Chaos ensues when customers figure out nothing new works with anything else. Competitors sit down and agree on a specification where things work together, then head off to re-implement.

Unless someone catches lightning in a bottle – say, USB, HDMI, Android, or anything else which takes off in high-volume consumer devices with many suppliers behind it – this cycle can take years to play out. The EDA industry shuddered when the Big 3 sat at the same table on a panel at DAC 47 in Anaheim to get behind UVM. Three years later, UVM is finally “here” and getting traction with everyday designers, who are seeing how their problems can be addressed.

It’s not surprising to see IEEE P1687, informally known as IJTAG – a specification dating back to 2005 – taking a while to emerge, but conditions may finally be right. We have lots of other embedded instrumentation solutions, with little to no interoperability. Chaos is imminent with design size and test times growing. ASSET InterTech, Avago Technologies, and Mentor Graphics have been on board for quite a while and have working P1687 solutions.

So why is P1687 adoption not moving faster? Having P1687-compliant IP drop straight into a P1687 test framework is the end game, and it will certainly help reuse – when we get there. In the early-adopter phase, the first efforts should focus on lead customers and their immediate problems.

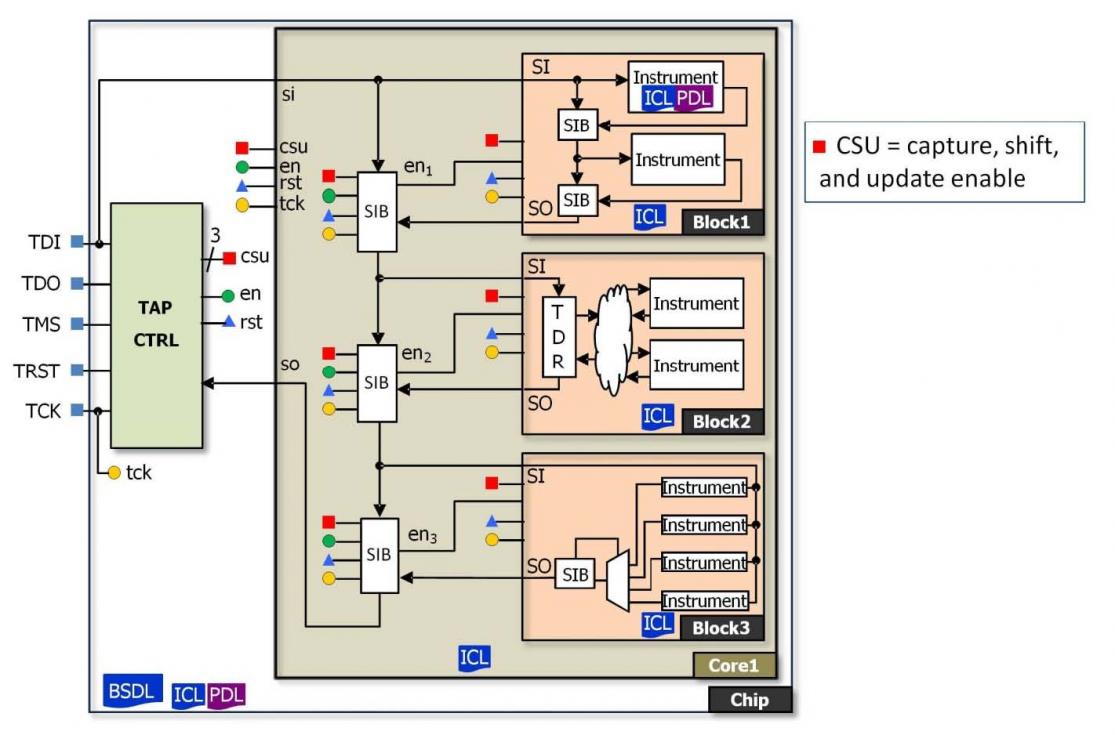

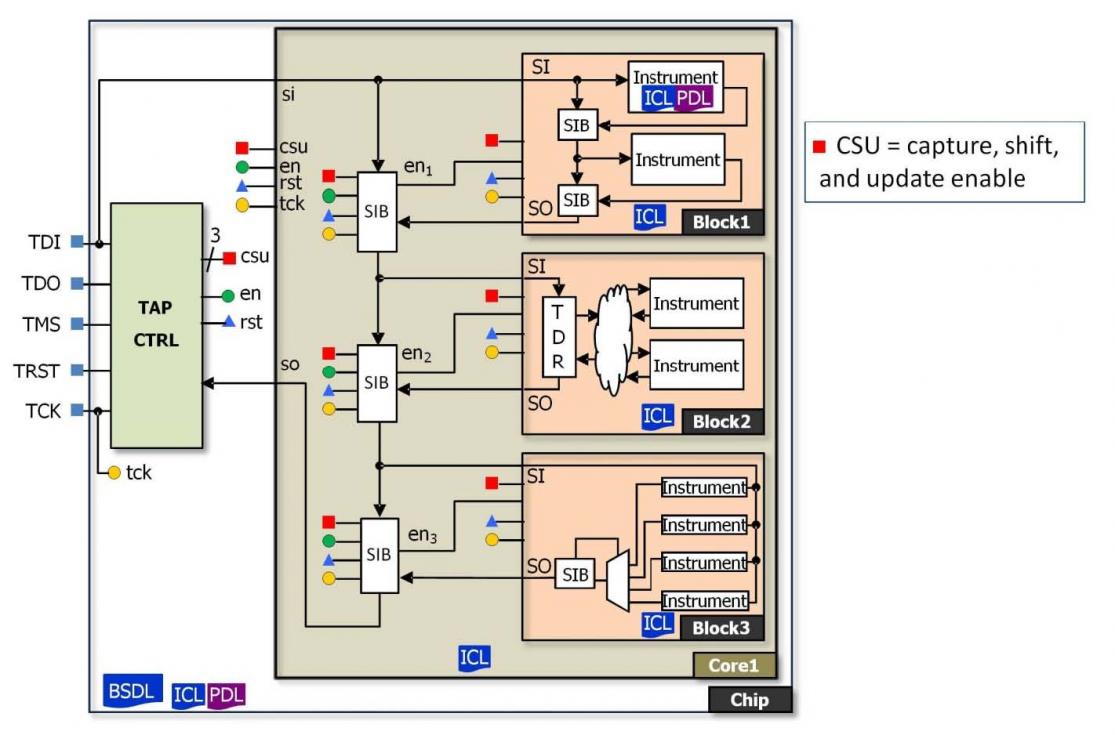

In one example of a real-world test problem, P1149.1 runs into trouble when active power management starts breaking the scan path, rendering blocks invisible to test at highly inconvenient times. P1687 can be used to add or subtract scan path segments on the fly, using Segment Insertion Bits (SIBs) and Network Instruction Bits (NIBs). By allowing variable-length scan paths with localized scan-path control, problems with power management are avoided and greater test flexibility is achieved.
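To make the variable-length scan path idea concrete, here is a minimal Python sketch of a SIB-gated network. It is a toy model, not the P1687 hardware definition: the `Register` and `SIB` classes, the instrument names, the register widths, and the choice to place each SIB bit after its segment are all assumptions made for illustration.

```python
from dataclasses import dataclass, field
from typing import List, Tuple, Union


@dataclass
class Register:
    """A fixed-width instrument data register on the scan path."""
    name: str
    width: int


@dataclass
class SIB:
    """One-bit gate that includes or bypasses a sub-segment (toy model)."""
    name: str
    segment: List["Node"] = field(default_factory=list)
    is_open: bool = False  # True: segment is on the path; False: bypassed


Node = Union[Register, SIB]


def active_path(nodes: List[Node]) -> List[Tuple[str, int]]:
    """Return (name, width) for every element currently on the scan path."""
    path: List[Tuple[str, int]] = []
    for node in nodes:
        if isinstance(node, SIB):
            if node.is_open:
                # An open SIB splices its segment into the path.
                path.extend(active_path(node.segment))
            # The SIB's own bit is always on the path (assumed placement).
            path.append((node.name, 1))
        else:
            path.append((node.name, node.width))
    return path


def path_length(nodes: List[Node]) -> int:
    """Total number of bits to shift through the active path."""
    return sum(width for _, width in active_path(nodes))


# Hypothetical two-domain network.
cpu_sib = SIB("sib_cpu", [Register("cpu_bist", 16)])
gpu_sib = SIB("sib_gpu", [Register("gpu_sensor", 32)])
network = [cpu_sib, gpu_sib]

print(path_length(network))  # 2: both SIBs closed, only the SIB bits remain

cpu_sib.is_open = True
gpu_sib.is_open = True
print(path_length(network))  # 50: 16 + 1 + 32 + 1

gpu_sib.is_open = False      # e.g. the GPU power domain is shut down
print(path_length(network))  # 18: 16 + 1 + 1
```

Closing `sib_gpu` before its domain powers down keeps the rest of the chain intact, which is the point of localized scan-path control: the powered-down block drops off the path instead of breaking it.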

The next example is one of time, but not in the way people might think. Reading the white paper on how Mentor Graphics teamed with NXP on a P1687 implementation, one might jump right to the punch line of reducing test setup length by up to 56% – important, but in my opinion not the most important result. Here is what NXP has spotted:

“Time is not explicitly modeled as a resource, which hampers a fully automated test development flow … The ultimate goal is to limit the user-defined input to the bare minimum.”

Automated test development has meant automated test pattern generation (ATPG), which is effective for an IP block at known interface speeds. If the interfaces change – as they do in asynchronous timing scenarios with dozens of IP blocks involved, or as reference clocks change across domains – the test patterns for a block can fall apart instantly. Manual reengineering of test patterns is a time-consuming, costly process, and one that many teams are trapped in today.

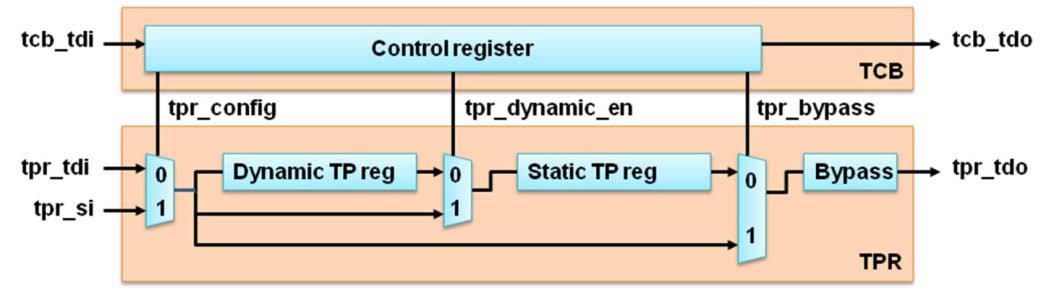

Instead of focusing on a pile of test patterns, IJTAG uses two constructs: ICL, Instrument Connectivity Language, to model test control blocks (TCBs) in an abstract, hierarchical way, capturing dependencies between structures; and PDL, Procedural Description Language, handling the operation of the embedded instruments.

Modeling in ICL and PDL allows tests to be scheduled across asynchronous blocks of IP, with the right resources available and initialized – part of the heavy lifting done in the Mentor Tessent IJTAG automation tool. It also allows analysis of reference clocks and JTAG wrappers, which aids in retargeting tests as the hierarchy becomes more complex. (For further discussion on retargeting, see the recent Mentor article in Electronic Design.) Because the tests are described at a higher level of abstraction, automated analysis produces better results.
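Retargeting is easier to picture with a small example. The Python sketch below takes an instrument-level write, the kind of intent a PDL procedure expresses, and expands it into a single chain-level bit string. It illustrates the idea only and is not how Tessent IJTAG or any other tool works: the `SCAN_PATH` table, the register names and widths, and the zero-fill of untouched registers are all assumptions.

```python
from typing import Dict, List, Tuple

# Hypothetical active scan path, listed in path order as (register, width).
SCAN_PATH: List[Tuple[str, int]] = [
    ("pll_ctrl", 4),
    ("mbist_ctrl", 8),
    ("temp_sensor", 6),
]


def retarget(writes: Dict[str, int],
             scan_path: List[Tuple[str, int]] = SCAN_PATH) -> str:
    """Expand instrument-level writes into one chain-level bit string.

    Each register contributes `width` bits, MSB first, in path order.
    Registers without a requested write are filled with zeros, which is a
    simplification: a real flow would preserve their current contents.
    """
    known = {name for name, _ in scan_path}
    unknown = set(writes) - known
    if unknown:
        raise KeyError(f"registers not on the scan path: {sorted(unknown)}")

    fields = []
    for name, width in scan_path:
        value = writes.get(name, 0)
        if not 0 <= value < (1 << width):
            raise ValueError(f"{value:#x} does not fit in {width}-bit {name}")
        fields.append(format(value, f"0{width}b"))
    return "".join(fields)


# The test author writes one register; the chain-level vector is derived.
vector = retarget({"mbist_ctrl": 0xA5})
print(vector)       # 000010100101000000
print(len(vector))  # 18
```

If the hierarchy changes, only the path description changes; the instrument-level write stays the same, which is why describing tests at this level survives integration better than a fixed pattern set.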

P1687 is more than “yet-another-new-test-thingy,” as another EDA media outlet put it. Shifting the focus from fixed scan chains and pattern blasting to smarter modeling and automation that can handle complex IP integration has the potential to cope with the much larger SoC designs we are guaranteed to see in the future.

PS: There is nothing inherently wrong with working from a draft IEEE specification in its later stages of development. Some folks are conditioned to wait, believing the products implementing the draft specification will change when the standard is ratified. (Some folks don’t even like revision 1.0, but that’s another issue.) Minor changes are possible, but the methodology described in P1687 is unlikely to change significantly.
