I was at EDPS in Monterey the last couple of days. It is one of the most interesting conferences to attend. Go next year since you already missed it this year. It is not big but the quality of the content is high. Historically the dinner in the middle is in the Monterey Yacht Club and there is a keynote speech. A few years ago it was me but this year there were two upgrades. eSilicon sponsored the dinner so we got some free wine and better food, and Wally Rhines gave the keynote instead of me.

Dan is going to blog about the keynote itself (which was also the keynote at U2U last week but I couldn’t make it since I was at the GSA event and if I wasn’t there I should have been at the SEMI event on Internet of Things…guys, you need to coordinate). But part of Wally’s keynote is one of the topics I like to go out on a limb on. EUV. It’s not happening.

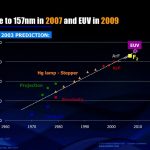

As Wally amusingly (well, we had all had some free eSilicon wine so we were easily amused) pointed out, in 2003 we predicted we would move to 157nm F[SUB]2[/SUB] in 2007 and EUV (14nm) in 2009. As I hope everyone who reads semiwiki knows, we are currently stuck with 193nm off-axis immersion lithography and the fact that we can print features on an 80nm pitch without double patterning and about 50nm with is totally amazing. But it is not cheap. There really seem to be no technical issues to get down to maybe 7nm using these techniques although we will be up to quadruple and maybe octuple patterning and…show me the money.

However, by 2005, two years later the predictions moved out…two years. Meaning no progress. F[SUB]2[/SUB] was now 2009 and EUV would be 2011. That would have been nice.

By 2006, only one year later, everything had slipped another two years. Definitely going backwards. EUV was 2013, yeah. It would save the day at 10nm.

157nm F[SUB]2[/SUB] dropped off the radar. EUV moved out again. Now scheduled for 2015. Maybe.

I don’t want to be too negative, there has been real progress in EUV. But it is a Rube Goldberg technology. I’ve related this before but here is how it works. Firstly, EUV is absorbed by air so the entire optics has to be in a high vacuum unlike traditional lithography. Not only air absorbs EUV but so does pretty much everything else so the masks have to be reflective not refractive (think patterned mirrors not photographic negatives). Even ordinary mirrors like in your bathroom (or even a state-of-the-art astronomical telescope) absorb EUV so all the other optics has to be Bragg mirrors (a sort of interference mirror formed of alternating layers of silicon and molybdenum) that only reflects about 70% of the incident light and absorb the other 30%. So they get hot. Well, not very hot, the light source isn’t that powerful yet haha.

So you drop molten tin, hit it with one laser to shape it and then hit it with a 100MW laser to get about 1% of the energy emitted as secondary radiation. The current best value is 80W of emission. And since those 100MW lasers are maybe 5% or 10% efficient you need a couple of GW of power in the subfloor under the fab to power it. A nuclear power plant would be nice. Then about 8 mirrors with 70% efficiency. So you start from 2GW and end up with a few watts hitting the photoresist.

Everyone is very focused on the light source power, which is really important for whether EUV will be economically more attractive than multiple-patterning. But another issue that I think people underestimate is the pellicle. Nobody talks about this much (except me, although experts tell me it is truly important when I bring it up, so I think I’m onto something). In refractive optics they put a layer on top of the mask (think saran wrap) that means that contaminants (think dust although it is much smaller) are not in the focal plane but a tiny distance away. But with EUV’s reflective optics, pellicles would absorb the EUV too so there isn’t a pellicle. So any contaminant will land on the mask and is in the focal plane and will print. So we need to clean the masks regularly. I read somewhere, although I can’t find it now, that Intel said they will not use EUV until there is a pellicle. And masks cannot be cleaned an infinite number of times, the pattern starts to degrade. The only interesting pellicle material appears to be crystalline silicon which apparently isn’t totally EUV absorbent. I shall be at SEMICON West in July at the Lithography seminar to find out what has happened in the last year. If you have any interest in this stuff, that is the place to be in July.

As Lars Liebmann said at a Common Platform (is that still a thing?) Symposium a couple of years ago “EUV is not as far along as X-ray lithography was when we decided it wouldn’t work.” At IBM they built cyclotrons and all sorts of weird stuff to attempt to make X-ray lithography (like 1nm “light”) work. I asked him at ICCAD last year whether that was still true and he said he though it was closer. Only later did I realize that perhaps he’d meant closer to realizing it wasn’t going to work. I remain a skeptic. That EUV will not only not be ready for Xnm (pick your X) but it will never work economically.