John Koeter is in charge of marketing Synopsys’ IP and prototyping solutions. I talked to him last week.

He grew up in upstate New York, the son of a Scottish mother and a Dutch father who immigrated to the US. That makes him a first-generation American, unlike everyone else I have interviewed so far for this series, all of whom were born overseas. He stayed in upstate New York for college and got a BSEE from Cornell.

After graduating, he joined TI in Dallas and held various roles in TI's ASIC business, at one point moving to California for a couple of years to run two design centers. One of his primary competitors was VLSI Technology, where I was working at the time. We were always frustrated that TI had Nokia, then the cell-phone market leader, locked up, and we never had any success there. After 11 years at TI he decided he preferred Austin to Dallas and joined AMD's embedded processor division, which was in the process of switching from the 29K RISC architecture to low-end x86. But after a couple of years the usual semiconductor downturn arrived.

He moved on and in 1998 joined Synopsys, where he has been for 17 years now. He started as a program manager and then moved into business development for the services group, which did production turnkey designs (this was the Tality Design Services era). After that he went into sales for IP and services as a sort of east-coast overlay. When the position of VP marketing for IP and prototyping opened up, he took it. He has held that job for about 7 years now, covering IP, prototyping and FPGA synthesis. He also runs the pre-sales AE organization for those businesses.

We started by talking about IP. Synopsys has been in this business for 25 years, starting with DesignWare, which was basically datapath, UART, I²C, timers and the other basic building blocks of that era. The big transition came when they purchased inSilicon and got into USB and PCI Express on the digital side. A year later Synopsys acquired Accelerant and was in the SERDES business. They grew the business partly organically and partly through further acquisitions such as Cascade. They really got heavily into analog when they acquired the analog business of MIPS (aka Chipidea).

One big change was that they started to package up a complete solution for interface IP: a digital controller, an analog PHY and verification IP (VIP). Over time this completely changed the market, and all their competitors had to do the same or get out of the business, because customers wanted to buy the complete solution. More recently they upped the ante again by launching their IP Accelerated initiative, adding software development kits, prototyping kits and interface IP subsystems. It turns out that combining SoC experts from the customer company with IP experts from Synopsys is a powerful mixture. Although the IP may be standard, each chip is different in terms of power domains, power management, clocks, which options of the IP are not required, and so on.

In 2010 Synopsys bought Virage Logic, bringing them standard cell libraries, memories (with test and repair) and the ARC microprocessor. This meant that they could, as Emeril used to say, kick it up a notch to IP subsystems: pre-integrated suites of IP including software, a microprocessor, interfaces and more. The first was an audio subsystem, followed by a complete sensor and control IP subsystem. At DAC this year they announced they were working with TSMC on a 40nm IoT platform. They also announced that they were pushing their IP portfolio up to automotive grade, with features to address functional safety, reliability and quality management. Qualifying IP for automotive requires a lot more than just slapping an “automotive” label on existing IP. At the same time they are optimizing IP for IoT applications in 45ULP, and in 55nm at very low voltage.

They are not done acquiring. In just the last couple of weeks they announced the acquisition of Bluetooth IP (for wireless interfaces) and of security IP through Elliptic Technologies.

The combination of interfaces, Bluetooth, security, the ARC microprocessor, memories, data converters and more gives them the broadest set of IP for IoT of anyone in the market.

There is clearly a major transition from making IP internally to buying it. For a start, IP is getting much more difficult to make: moving from USB 2.0 to USB 3.0 increases the verification requirement 20-fold. Standards also turn over fast, every couple of years: USB 3 to USB 3.1, DDR to LPDDR4, PCIe 3.0 to PCIe 4.0, and so on. Not many companies can keep up. Even when they are willing to spend the time and the money, they often don’t have the knowledge.

Going back to 2010, when Virage was acquired, Synopsys made a couple of other key acquisitions in the system design space: VaST (where I used to be VP marketing) and CoWare. They had previously acquired Virtio. This gave them a lot of virtual platform technology. As I discovered when I worked at VaST (and subsequently Virtutech), there is a big problem with modeling: it takes too long, costs too much and is hard to keep synchronized with the RTL. But Synopsys has three weapons that we never had: they have a lot of their own IP, so they can provide TLM models; they have the ZeBu emulator family; and they have the HAPS FPGA-based prototyping system. This gives them the capability to build all sorts of hybrid solutions, with processors and perhaps interfaces running as virtual models, combined with RTL running in HAPS or ZeBu. This makes it possible to look at functionality, performance and, especially these days, power. Plus they have Platform Architect (ex CoWare), which allows architectures to be analyzed and optimized very early using TLMs, processor subsystems, DDR interfaces and more. Synopsys also now provides Virtualizer Development Kits (VDKs), especially for some automotive MCUs and for ARM subsystems. Prepackaging everything makes it easy for software engineers to use the solutions immediately and at low cost.

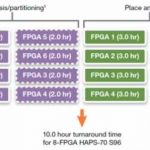

HAPS has also been very successful and leads the market. The HAPS FPGA-based prototyping solution allows high-speed prototyping of SoC designs for software development, hardware/software integration and system validation. There is an FPGA synthesis tool optimized for HAPS (ProtoCompiler) that understands how the design is partitioned across the FPGAs, giving the highest performance for a design along with fast prototype bring-up.

At Synopsys, the prototyping business, both virtual and FPGA-based, has started to be quite successful. The market for FPGA and virtual prototyping seems to be about $450M, but only 1/3 of that is in the commercial marketplace and 2/3 is internally developed. This means there is a big $300M potential market available if people switch from make to buy, the same transition IP went through a decade ago.

IP is already close to 20% of Synopsys’ business and the prototyping segment is a big opportunity. The future’s so bright you’ve got to wear shades.

See also Synopsys’ Andreas Kuehlmann on Software Development

See also Antun Domic, on Synopsys’ Secret Sauce in Design

See also Bijan Kiani Talks Synopsys Custom Layout and More