Deterministic, yet versatile. Robust and integrated, yet user-friendly and easily customizable. Those are some desirable characteristics of an EDA solution as the boundaries of design optimization, verification and analysis keep shifting. Time-to-market schedule compression drives a shift left, while growing process and application complexity keeps pushing in the opposite direction.

Judging from the many technical sessions held at DAC, early verification has made progress in shifting left to keep pace with the implementation process, by integrating the application or end-product software into virtual prototyping for early system design exploration. Virtual prototyping allows designers not only to explore corner scenarios but also to reproduce experiments with various permutations of constraints or variants. The increasingly heterogeneous SoCs for emerging applications demand virtual prototyping that supports not only software and hardware but also the incorporation of digital, analog and interconnect IPs.

Magillem provides robust, XML-based front-end design solutions that enable and streamline design activities around its integrated environment. It has deployed its products across several industries, from SoC design houses and semiconductor manufacturers to legal and technical documentation publishers.

Since rolling out Magillem Architectural Intent (MAI) for architectural intent capture, as covered in my prior blog, the company has announced a joint effort with Imperas for an integrated virtual prototyping platform and introduced Magillem Flow Architect (MFA), a turnkey solution that helps customers define their best recommended flow.

At DAC 2018, I had the opportunity to interview Magillem CEO, Isabel Geday, and Magillem VP of Strategic Account Manager, Paschal Chauvet. The discussion centered on Magillem's continued efforts to accommodate IC design needs and how it adapts to current trends in the EDA ecosystem.

Some EDA players have announced their product collaborations. Does Magillem have similar efforts?

“I call it a partnership, by not creating duplicated solutions,” said Isabel diplomatically. She gave the example of Magillem’s earlier partnership with Imperas, which uses Imperas' Verification IP models and debugging software. At DAC, Magillem announced another partnership, this time with Arteris IP, an indisputable leader in NoC IP that provides SoC cache interconnect solutions.

The integration with Arteris IP was demonstrated by a full-compliance validation of the company’s interconnects with the Magillem environment. Using a single design environment, customers can now easily build an SoC using Arteris’ IP instances (FlexNoC and NCore) and the Magillem front-end design environment (MAI, MPA and MRV). “It is very good for customers to have one design environment. To be able to work with and plug all the IPs,” she added.

Since Magillem is based on the industry standard IP-XACT, it allows other tools to be integrated into its environment. “While the other big players have closed environments, for Magillem it is the DNA of our product to allow integration,” she pointed out. Furthermore, the unified environment provides more efficient and automated sharing of information across the supply chain during product development.

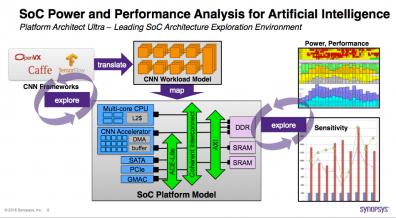

There are increasing AI- and ML-related efforts. How do these impact your products?

“This is very interesting question as we work with methodology and flow aspects,” Isabel said. She gave two examples. The first is from a product application standpoint. A large customer has used the Magillem solution to build a kind of expert system, which interacts with engineers through questionnaires and, depending on the answers given, makes decisions one way or another.

The second example relates to a prior internal effort at Magillem. “We had activities on the side, done several years ago to demonstrate how versatile is our platform, by building something using the assembly of metadata of descriptions.” She elaborated that the team applied some AI techniques to analyze interpreted legal texts and the impact of changes on the existing document corpus. The system measured the impact of new text fragments on existing ones and suggested changes to the existing corpus where necessary. In addition, it was capable of learning a new syntax when it encountered a pattern it did not recognize. To sum up, she believes that AI is more a replay of previously exercised concepts, but with more memory, compute power and algorithms involved.

Aside from these examples, the recently announced integrated solution from Arteris IP and Magillem is targeted at simplifying the increasingly complex SoC designs for AI and autonomous driving applications, which are now bounded by the latency of on-chip interconnects rather than the performance of on-chip processors and hardware accelerators.

Here at DAC, more EDA vendors are showcasing their products as accessible on the cloud. What is your take on cloud deployment?

“Our customers are Tier-1 companies and they have entrusted us with their most complex, expensive, demanding SoC platform and designs,” said Isabel. She added that the customers' policy is to keep confidentiality as a top priority. She acknowledged that although some design data intelligence may benefit from a cloud-based scenario, cloud is not an option yet. “People gain ownership on this internal solution. It is less interesting idea to us than in providing a cognitive assistance to the experts. The customers are very good on what they do. They have to be the decision maker…to make fast decision and be more productive as they deal with huge legacy and data.”

What is your data model? Do you allow customer flow customization?

“Our solution was directly derived from IP-XACT, which is universal inside our tool, allowing our customers to use one data model for the entire design flow,” said Paschal. External tools can be embedded through the Eclipse plug-in and TGI (Tight Generator Interface), the standard API for manipulating any IP-XACT database. According to Paschal, such flexibility is crucial for smaller companies, as they tend to customize their environments heavily. The scalability of the compact data model is not an issue, as Magillem has worked with SoCs having millions of gates.
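To give a feel for what a single XML-based data model means in practice, here is a minimal sketch that reads a heavily simplified IP-XACT-style component with Python's standard library. It is an illustration only: the component (`example.org:demo:timer:1.0`) and its registers are made up, the fragment is not validated against the IEEE 1685-2014 schema, and the code does not use TGI or any Magillem API.

```python
import xml.etree.ElementTree as ET

# Namespace of the IEEE 1685-2014 (IP-XACT) schema.
NS = {"ipxact": "http://www.accellera.org/XMLSchema/IPXACT/1685-2014"}

# Hypothetical, simplified component description (assumption, not schema-validated).
COMPONENT_XML = """\
<ipxact:component xmlns:ipxact="http://www.accellera.org/XMLSchema/IPXACT/1685-2014">
  <ipxact:vendor>example.org</ipxact:vendor>
  <ipxact:library>demo</ipxact:library>
  <ipxact:name>timer</ipxact:name>
  <ipxact:version>1.0</ipxact:version>
  <ipxact:memoryMaps>
    <ipxact:memoryMap>
      <ipxact:name>regs</ipxact:name>
      <ipxact:addressBlock>
        <ipxact:name>ctrl_block</ipxact:name>
        <ipxact:register>
          <ipxact:name>CTRL</ipxact:name>
          <ipxact:addressOffset>'h0</ipxact:addressOffset>
          <ipxact:size>32</ipxact:size>
        </ipxact:register>
        <ipxact:register>
          <ipxact:name>COUNT</ipxact:name>
          <ipxact:addressOffset>'h4</ipxact:addressOffset>
          <ipxact:size>32</ipxact:size>
        </ipxact:register>
      </ipxact:addressBlock>
    </ipxact:memoryMap>
  </ipxact:memoryMaps>
</ipxact:component>
"""


def vlnv(component):
    """Return the vendor:library:name:version identifier of a component."""
    parts = (
        component.findtext(f"ipxact:{tag}", default="", namespaces=NS)
        for tag in ("vendor", "library", "name", "version")
    )
    return ":".join(parts)


def list_registers(component):
    """Return (name, address offset, size in bits) for every register found."""
    registers = []
    for reg in component.iterfind(".//ipxact:register", NS):
        name = reg.findtext("ipxact:name", namespaces=NS)
        # Offsets are kept as strings; evaluating IP-XACT expressions is out of scope.
        offset = reg.findtext("ipxact:addressOffset", namespaces=NS)
        size = int(reg.findtext("ipxact:size", namespaces=NS))
        registers.append((name, offset, size))
    return registers


component = ET.fromstring(COMPONENT_XML)

if __name__ == "__main__":
    print(vlnv(component))
    for name, offset, size in list_registers(component):
        print(f"{name}: offset {offset}, {size} bits")
```

Because every tool in the flow reads the same description, a documentation generator, a register-test generator and a netlister could all start from a query like `list_registers` rather than from separate, hand-maintained sources.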

Traceability is about the ability to track the safety requirements from the initial design inception through its implementation and operation phase. It is a key ingredient for the functional safety standards compliance as defined in IEC 61508 and ISO 26262. With the parsable IP-XACT based data, automated traceability throughout the development flow can be achieved.
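As a rough illustration of why machine-readable design data helps here, the sketch below checks a set of trace links between safety requirements and design elements. Everything in it is hypothetical: the requirement IDs, the element identifiers and the `TraceLink` structure are assumptions made for the example, not Magillem's data model or anything mandated by IEC 61508 or ISO 26262.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TraceLink:
    """Hypothetical link from one requirement to one design element."""
    requirement_id: str
    element: str  # e.g. "<component VLNV>/<register name>"


def check_traceability(requirements, design_elements, links):
    """Return (untraced requirements, links pointing at unknown elements)."""
    linked_ids = {link.requirement_id for link in links}
    # Requirements that no link refers to.
    untraced = sorted(set(requirements) - linked_ids)
    # Links whose target is missing from the design data, in input order.
    dangling = [link for link in links if link.element not in design_elements]
    return untraced, dangling


# Made-up example data.
requirements = ["SR-001", "SR-002", "SR-003"]
design_elements = {
    "example.org:demo:timer:1.0/CTRL",
    "example.org:demo:timer:1.0/COUNT",
}
links = [
    TraceLink("SR-001", "example.org:demo:timer:1.0/CTRL"),
    TraceLink("SR-002", "example.org:demo:timer:1.0/STATUS"),
]

untraced, dangling = check_traceability(requirements, design_elements, links)

if __name__ == "__main__":
    print("Requirements with no link:", untraced)
    print("Links to unknown elements:", dangling)
```

In this toy data set, one requirement has no link at all and one link points at a register that does not exist in the design; a check of this kind can run automatically whenever the design description changes.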

Commenting on future work, Isabel stated that the current Magillem platform offering is unique. “Our earlier vision is now very appealing to new customers and new markets,” she added. Ongoing work includes the infrastructure to build a hub of links that will guarantee traceability in a very elegant way. She added that with a hub of links, rather than a hub of data, one could then add different standards and other sorts of data while preserving all the essential elements.

By providing a versatile framework that can be retargeted for complex system designs, Magillem's solution enables design teams to adapt to changing requirements in both design specifications and implementation methodologies.

For further info on Magillem products, please check HERE.