It might seem paradoxical that simulation (or equivalent dynamic methods) might be one of the best ways to run security checks. Checking security is a problem where you need to find rare corners that a hacker might exploit. In dynamic verification, no matter how much we test we know we’re not going to cover all corners, so how can it possibly be useful? Wouldn’t formal methods be much better?

Dave Kelf (CMO for Breker) makes a point that security verification is inherently a negative verification problem. Unlike positive testing where you’re checking that a specific scenario works as expected, in security verification you need to check all possibilities, as you ideally would in negative testing. For example, in a positive test, we would check the key can be read through the crypto block. In security, we have to ask, “is there any other way that this can be done?”. The strength of formal is that it can analyze that entire state space and find paths you had not considered.

But while formal is ideal for completeness, it’s limited in scope – by the size of the state space and by the degree to which you have to abstract and decompose complex problems, leaving you to wonder what you might have overlooked in all that complexity. Formal also can’t work with software, a real problem for embedded system validation. Conversely, simulation doesn’t care – you can run whatever size system you have with whatever mixed levels you need.

Nevertheless, the completeness of the graph-approach is appealing. Breker have developed a way to build a conceptually similar graph at the system level, not automatically from RTL as a formal tool would but semi-manually / semi-automatically from a series of tables describing key aspects of the SoC system architecture.

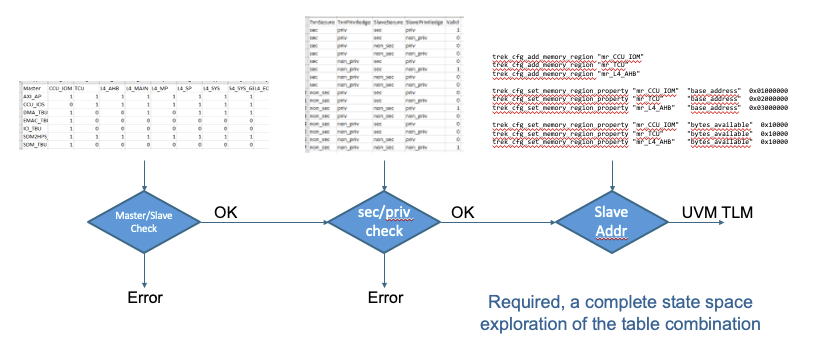

Then PSS becomes a pretty logical bridge to testing complete negative intent on a high-level graph rather than conventional formal gate-level paths. Breker has an app for that. In the security TrekApp, you can define a security policy through tables, in master/slave connectivity, security/privilege options and memory address zones.

An advantage in starting with these tables is that it’s easy to see what might be missing – trivially that you missed a master/slave option, you forgot to specify whether an access/privilege on the master and an access/privilege option on the slave is a valid (permitted) combination or not.

Going one level deeper, you can also define, in another table, various memory regions with corresponding secure and privilege accessibilities. These definitions are essential for later dynamic tests to check that it isn’t possible, through some unapparent sequence of actions (again a negative test), to read from or write into a secure/privileged memory region from a transaction not allowed to perform those actions.

Think for example of an ARM TrustZone environment in which one or more masters may at times be operating in a secure mode with a certain level of privilege, or a non-secure mode. Meanwhile slaves, some secure with low privileges, some secure with higher privileges are communicating with masters and trying to read from or write to regions in memory, each of which also have assorted privilege and secure settings. That’s a lot of combinations to worry about. Are you sure your tests are really going to cover them all?

The Breker security TrekApp will map the master/slave, secure/privilege and memory region tables into the Trek internal format, then build a graph – in effect a system-level state graph – which can generate tests for all possible transactions across that graph. Their test suite synthesis will then map that to realized sequences of tests, which you can then plug into your UVM testbench or software driven SoC test. A comprehensive sequence of tests that can cover all paths through the graph, including those you might not consider but a hacker may attempt.

That looks like a pretty valuable capability to me. You can learn more about the security TrekApp HERE.

Also Read

Verification, RISC-V and Extensibility

Build More and Better Tests Faster

Share this post via:

Comments

There are no comments yet.

You must register or log in to view/post comments.