Ten years ago, earbuds might have seemed like a mundane product area with little room for exciting developments. The arrival of True Wireless Stereo (TWS) has coincided with an avalanche of innovations that have turned earbuds from simple transducers for creating sound into sophisticated devices capable of accepting user commands and controlling a media device based on a wide range of environmental information. At the same time, coupling earbuds with the latest in signal processing greatly enhances the listening experience. CEVA has an on-demand webinar, titled “Enhancing the TWS User Experience with Sensor Fusion”, that does an excellent job of describing just how significant the developments in so-called hearables have become.

Once consumers are made aware of what is possible, they immediately grasp the new capabilities and ask for them in new products. While sound quality, comfort, battery life and ease of use remain strong selling points, new features such as context-aware behavior command strong interest in the market. It takes a lot more than good audio quality to develop a successful hearable product nowadays. A wide range of sensors needs to be added to hearables, along with the processing capability to perform sensor fusion and deliver the audio experience users expect.

Sensor fusion might seem like a very dry and abstract topic, but it is what allows inputs from accelerometers, gyroscopes, magnetometers, microphones, touch sensors and proximity sensors to be combined to create an understanding of not only the operating environment but also the context necessary to control device behavior. Sensors all have limitations, which manifest as anomalies in their output. Factors such as aging, operating voltage and manufacturing variation can affect performance. Unless these factors are dealt with, the user experience could be frustrating, or the devices could even be useless.
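To make the idea less abstract, here is a minimal sketch of one textbook fusion technique, a complementary filter, which blends a gyroscope (smooth but prone to drift) with an accelerometer (noisy but anchored to gravity) to estimate head pitch. It is not taken from the webinar or from any CEVA product; the function names, the `alpha` weight and the axis convention are all assumptions chosen for illustration.

```python
import math


def accel_pitch(ax, ay, az):
    """Pitch (radians) implied by the direction of gravity in one accelerometer sample."""
    return math.atan2(-ax, math.sqrt(ay * ay + az * az))


def complementary_filter(pitch_prev, gyro_rate, accel, dt, alpha=0.98):
    """Blend the integrated gyro rate with the accelerometer's gravity-based pitch.

    alpha (an assumed tuning value) weights the gyro path; the remaining
    (1 - alpha) pulls the estimate toward the accelerometer and limits drift.
    """
    gyro_estimate = pitch_prev + gyro_rate * dt
    return alpha * gyro_estimate + (1.0 - alpha) * accel_pitch(*accel)


def track_pitch(samples, dt, alpha=0.98):
    """Run the filter over (gyro_rate, (ax, ay, az)) samples, starting from zero pitch."""
    pitch = 0.0
    for gyro_rate, accel in samples:
        pitch = complementary_filter(pitch, gyro_rate, accel, dt, alpha)
    return pitch


# A stationary, level device whose gyro reports a constant 0.05 rad/s offset.
still = [(0.05, (0.0, 0.0, 1.0))] * 1000
fused_pitch = track_pitch(still, dt=0.01)
gyro_only_pitch = sum(rate * 0.01 for rate, _ in still)
```

In this stationary case, integrating the gyroscope alone drifts to about 0.5 rad after ten seconds, while the fused estimate stays bounded near zero. That is the sense in which combining sensors compensates for the limitations of each one.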

Done right, sensor fusion can allow for a revolutionary user experience. Take input gestures, for instance. The CEVA webinar goes through several scenarios. Clicking a button is often used for input, but with earbuds this can be problematic. Hitting a button on a tiny device can be hard, and it is made harder because the user might need to brace the device so it does not come out of their ear. Touch sensors are also difficult for earbuds because they require a larger area. If they can be made to work, they can support a wide range of gestures and more natural motion. With advanced sensors and sensor fusion, earbuds can take advantage of tapping and head movement for command input. An accelerometer can detect tapping and distinguish it from random movement. Head motions such as nodding and side-to-side movement can also serve as commands, and this kind of sensing is hands-free and uses natural motions.
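As a rough illustration of how an accelerometer can tell a tap from ordinary movement, the sketch below treats a tap as a brief spike in acceleration magnitude and rejects anything that stays above the threshold for too long. The thresholds, window sizes and function names are hypothetical, and a production detector would be considerably more elaborate.

```python
def detect_taps(magnitudes, threshold=1.5, max_width=3, refractory=5):
    """Return the start indices of short spikes in acceleration magnitude (in g).

    A spike counts as a tap only if it lasts at most max_width samples;
    longer excursions are treated as ordinary movement and ignored.
    All parameter values here are illustrative assumptions.
    """
    taps = []
    i = 0
    n = len(magnitudes)
    while i < n:
        if abs(magnitudes[i] - 1.0) > threshold:
            start = i
            while i < n and abs(magnitudes[i] - 1.0) > threshold:
                i += 1
            if i - start <= max_width:
                taps.append(start)
                i += refractory  # skip the ringing right after a tap
        else:
            i += 1
    return taps


def classify(taps, double_window=40):
    """Label a list of tap indices as 'none', 'single_tap' or 'double_tap'."""
    if len(taps) >= 2 and taps[1] - taps[0] <= double_window:
        return "double_tap"
    return "single_tap" if taps else "none"


quiet = [1.0] * 20   # device at rest reads about 1 g
spike = [3.0, 2.8]   # a brief, sharp impulse
single = classify(detect_taps(quiet + spike + quiet))
double = classify(detect_taps(quiet + spike + quiet + spike + quiet))
sustained = classify(detect_taps([1.0] * 10 + [3.0] * 20 + [1.0] * 10))
```

Here the short impulses are classified as single and double taps, while the sustained excursion is rejected because it outlasts `max_width`.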

But the real power of sensor fusion is using environmental information to drive device behavior. It is possible for hearables using sensor fusion to know if you are walking on a street, in a restaurant, in a crowd or by yourself. Using this information, the device can do things like pass through external audio for safety or reduce environmental noise to facilitate a phone call or music. It is even possible for the hearable to respond to sirens by muting media to help you maintain awareness of your surroundings.

One intriguing use model CEVA discussed is using 3-D sound combined with location information to help a user find a friend in a crowd. As the user’s head moves, the apparent direction to their friend shifts in their earbuds, giving them cues about which way to walk to reach them. The CEVA webinar offers details of a number of scenarios where a device can respond to help with enjoying music, phone calls, sports activities, conversations in noisy environments and more.
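The geometry behind the find-a-friend scenario can be sketched in a few lines: compute the compass bearing from the user to the friend, then subtract the head yaw reported by head tracking to get the angle at which the sound should appear. The flat local coordinate frame (x east, y north, in meters), the yaw convention (degrees clockwise from north) and the function names are assumptions made for this example, not details from the webinar.

```python
import math


def bearing_deg(user_xy, friend_xy):
    """Compass bearing from user to friend, in degrees clockwise from north."""
    dx = friend_xy[0] - user_xy[0]  # east offset
    dy = friend_xy[1] - user_xy[1]  # north offset
    return math.degrees(math.atan2(dx, dy)) % 360.0


def relative_azimuth_deg(user_xy, friend_xy, head_yaw_deg):
    """Angle of the friend relative to the user's nose, wrapped to [-180, 180).

    Positive values mean the sound should appear to the user's right.
    """
    rel = bearing_deg(user_xy, friend_xy) - head_yaw_deg
    return (rel + 180.0) % 360.0 - 180.0


user, friend = (0.0, 0.0), (10.0, 0.0)               # friend is due east
facing_north = relative_azimuth_deg(user, friend, 0.0)
facing_east = relative_azimuth_deg(user, friend, 90.0)
facing_south = relative_azimuth_deg(user, friend, 180.0)
```

With the friend due east, a user facing north would hear the cue on the right, a user facing east would hear it straight ahead, and a user facing south would hear it on the left. A spatial audio renderer would take this angle as its input.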

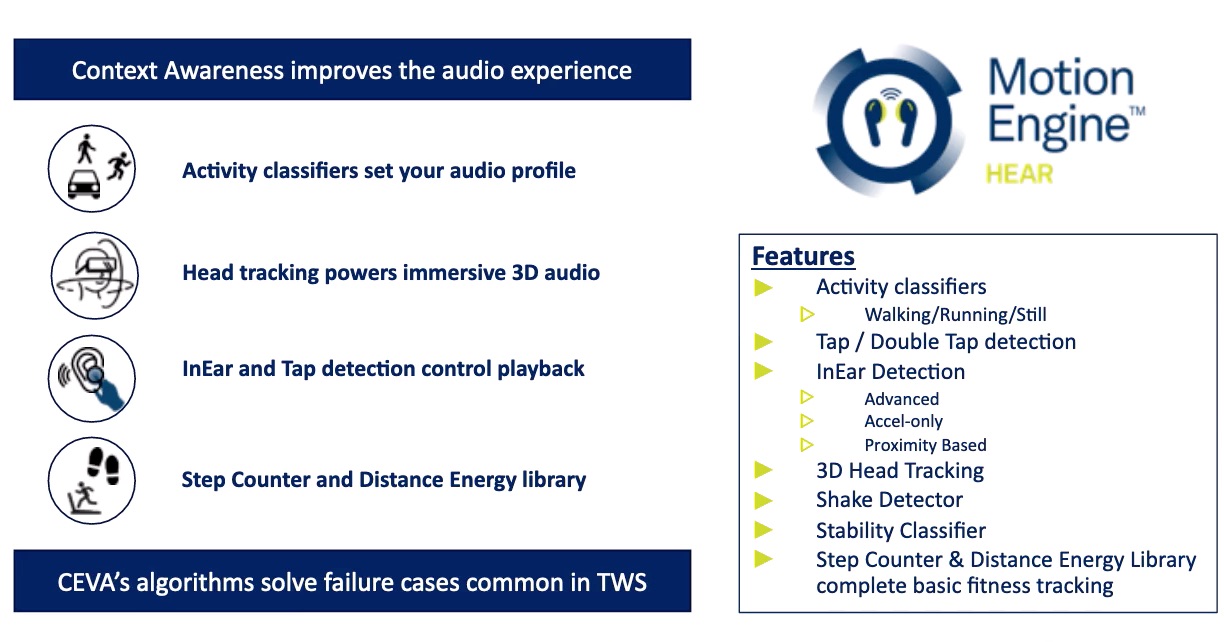

Getting all this to work requires the right assortment of sensors and the real-time software to enable local processing of raw sensor data so that the hearable and connected devices can perform as desired. This is CEVA’s specialty. They offer their MotionEngine Hear software library for the hearable market. It features 3D head tracking, InEar detection, Activity classifiers, tap/double tap detection, shake detector, step counter/fitness tracking and more. CEVA’s MotionEngine Hear library handles issues like gyroscope and accelerometer offset or bias to improve tracking accuracy. It offers a sophisticated calibration strategy that uses both static and dynamic methods to achieve excellent results.
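To give a feel for what static calibration can mean in general, the sketch below averages gyroscope readings taken while the device appears to be at rest and uses that average as the bias to subtract from later samples. This is a generic illustration only and says nothing about how MotionEngine Hear is implemented; the stillness threshold and all names are hypothetical.

```python
from statistics import mean, pvariance


def estimate_gyro_bias(samples, max_variance=1e-4):
    """Estimate per-axis gyro bias from (x, y, z) rate samples in rad/s.

    Returns None when any axis varies too much, which suggests the device
    was moving and the window is unsuitable for static calibration.
    max_variance is an assumed threshold for illustration.
    """
    axes = list(zip(*samples))
    if any(pvariance(axis) > max_variance for axis in axes):
        return None
    return tuple(mean(axis) for axis in axes)


def remove_bias(sample, bias):
    """Subtract the estimated bias from one (x, y, z) gyro sample."""
    return tuple(s - b for s, b in zip(sample, bias))


at_rest = [(0.02, -0.01, 0.005)] * 50                    # constant offset, no motion
moving = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)] * 25         # large swings on the x axis
bias = estimate_gyro_bias(at_rest)
corrected = remove_bias((0.02, -0.01, 0.005), bias)
rejected = estimate_gyro_bias(moving)
```

Dynamic calibration, which refines the estimate while the device is in motion, is harder and is not attempted here.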

Applying sensor fusion to hearables is leading to dramatically expanded functionality. It makes devices more intuitive and more responsive to their environment. Indeed, it is expanding functionality in ways that were not even apparent ten years ago. This is good news for consumers. These changes depend on essential enabling technology, however, and CEVA has been active in this space, with software available now to provide the foundation for these new features. The webinar provides a lot more information than can be covered here. You can view the full webinar on the CEVA website.
