Years ago, when FPGA prototyping started, there were no solutions that you could go out and buy, so everything was created as a one-off: buy some FPGAs or an FPGA-based board, and put it all together. It was a lot of effort, nobody really knew in advance how long it would take, there was very limited visibility for debug, and the whole thing was basically unsupportable. There is more discipline these days, but even so, roughly half of all FPGA prototyping is done in a proprietary way that doesn’t scale as designs get larger and that falls further and further behind on desirable features. The other half of the market uses an integrated solution that ties together FPGA-based hardware, the software for getting the design up and running, debug, and daughter boards for hardware interfaces.

Last week I talked to Johannes Stahl of Synopsys about the new solution that they are announcing today. He told me that for some time, Synopsys has had a free book, the FPGA-based Prototyping Methodology Manual which was available for download if you answered a few questions. From those questions the top 5 care-abouts turned out to be:

- mapping to the FPGA

- debug visibility

- performance

- limited capacity

- turnaround time

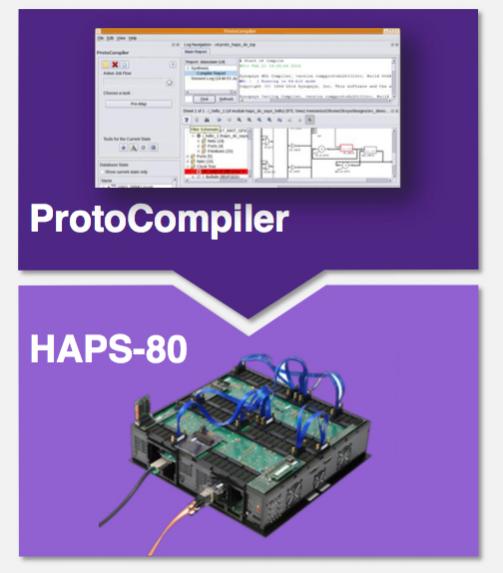

Today Synopsys is announcing an integrated solution combining ProtoCompiler software and HAPS-80 hardware that addresses these issues and:

- reduces time to high-performance prototype to under 2 weeks

- built-in debug captures over 1000 RTL signals per FPGA at speed, integrated with Verdi

- 100MHz system performance

- scalable up to 1.6B ASIC gates, which is around 7B transistors using the usual rules of thumb

- fast parallelized tool flow
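The gates-to-transistors conversion in the capacity bullet can be checked with a quick back-of-the-envelope sketch. The article does not say which rule of thumb it uses; the figure below assumes that one ASIC gate is a 2-input NAND equivalent costing 4 transistors in static CMOS, and the function name is purely illustrative.

```python
# Assumption (not stated in the article): one "ASIC gate" is a 2-input NAND
# equivalent, which takes 4 transistors in static CMOS.
TRANSISTORS_PER_GATE = 4


def gates_to_transistors(asic_gates, per_gate=TRANSISTORS_PER_GATE):
    """Convert an ASIC gate count into an approximate transistor count."""
    return asic_gates * per_gate


if __name__ == "__main__":
    total = gates_to_transistors(1.6e9)
    print(f"{total / 1e9:.1f}B transistors")
```

Under that assumption, 1.6B gates comes to 6.4B transistors, which is in the same ballpark as the "around 7B" quoted above; the article's number implies a slightly higher per-gate figure.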

The fast bringup addresses three steps. First, an automated flow including partitioning and automatically inserting all the multiplexors necessary to get signals between the FPGAs. Second, reduced hardware and debug bringup time, and finally getting the performance optimized in multi-FPGA configurations (which is most of them). Bringup is less than two weeks, so not quite the one day that emulation has achieved today, but also not the multiple months that FPGA prototyping used to entail.

The performance increase is driven by new proprietary multiplexing that delivers 2X higher performance, system timing improved by up to 60%, and better P&R guidance that brings another 10% timing improvement. Plus, under the hood, there are the latest Xilinx Virtex UltraScale VU440 devices, with a capacity of 26 million ASIC gates per FPGA.

Taken together, these improvements mean that a single-FPGA configuration can achieve 300MHz, a multi-FPGA configuration that does not involve signal multiplexing can achieve 100MHz, and designs requiring the new high-speed pin multiplexing can achieve 30MHz. These speeds mean, for example, that you can boot a system to the OS prompt in less than a minute. HAPS-80 also enables at-speed operation of real-world I/O.
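To get a feel for what those clock rates mean for a one-minute boot, here is a small sketch of the cycle budget at each speed. The three frequencies come from the article; the dictionary keys and the function name are illustrative, and the sketch says nothing about how many cycles any particular OS boot actually needs.

```python
# Clock rates quoted in the article, in Hz. The configuration labels are
# shorthand introduced here for illustration.
CLOCK_HZ = {
    "single_fpga": 300_000_000,
    "multi_fpga_no_mux": 100_000_000,
    "multi_fpga_pin_mux": 30_000_000,
}


def cycle_budget(seconds, clock_hz):
    """Number of design clock cycles that elapse in the given wall-clock time."""
    return seconds * clock_hz


if __name__ == "__main__":
    for name, hz in CLOCK_HZ.items():
        print(f"{name}: {cycle_budget(60, hz):,} cycles in one minute")
```

Even in the slowest configuration, a minute of wall-clock time covers 1.8 billion design cycles.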

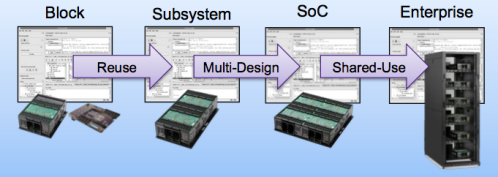

The system scales from a single module delivering 26M ASIC gates up to an enterprise system with 1.6B. Increasingly, in fact, enterprises are putting FPGA prototyping into the datacenter so that it can be shared among different engineers. This can be done either by putting generic hardware on the network or, for a critical design, by configuring one or more systems and making them available to be shared.
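As a rough sizing sketch, the two capacity figures above imply how many FPGAs a design of a given size would occupy. The 26M-gates-per-FPGA number is from the article; the `utilization` parameter is an assumption added here, since real partitioned designs rarely fill every device completely, and the function name is hypothetical.

```python
import math

# Per-FPGA capacity quoted in the article (ASIC gate equivalents).
GATES_PER_FPGA = 26_000_000


def fpgas_needed(design_gates, utilization=1.0):
    """Estimate the FPGA count for a design.

    utilization is the assumed usable fraction of each FPGA (0 < u <= 1);
    it is an illustrative knob, not a figure from the article.
    """
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    return math.ceil(design_gates / (GATES_PER_FPGA * utilization))


if __name__ == "__main__":
    print(fpgas_needed(1_600_000_000))       # ideal packing
    print(fpgas_needed(1_600_000_000, 0.5))  # half of each device usable
```

With ideal packing, the 1.6B-gate maximum works out to 62 devices; any lower utilization assumption raises the count proportionally.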

For people who have been using HAPS-70, the previous generation, everything is backwards compatible. Cables and connectors are the same, the daughter boards are the same, and the form factor is the same as HapsTrak 3. The software flow through ProtoCompiler is the same.

There are really two somewhat separate reasons for wanting to make use of FPGA prototyping solutions. The first is to get the hardware debugged by exercising it with large amounts of realistic software load. The second is to get the software development done without needing to wait for prototypes to be manufactured. There are, of course, alternatives: emulation, virtual platforms, hybrid emulation. Which is most appropriate depends to some extent on the stability of the design. If the RTL is changing extensively, then bringing up an FPGA prototype is less attractive, since bringup takes a couple of weeks, by which time the RTL is obsolete. But when the design is close to stable, FPGA prototyping is far and away the fastest-running solution and so the most attractive. If you need to validate a lot of hardware against a lot of software, then this is the sweet spot.

Everything is available now. Faster bringup, higher performance, more visibility, large capacity, accelerated tool flow, backwards compatibility. What’s not to like?

The Synopsys blog on FPGA prototyping, Breaking the Three Laws, is here. The HAPS product page is here.
