It is long past the time when general purpose processors could meet the needs of sensor fusion. Sensor fusion processes and integrates raw sensor data so that downstream processing is simpler and can be performed at a higher level. Done properly, it also offers lower latency and power consumption, bandwidth savings and improved efficiency. CEVA, a provider of processor and platform IP, addressed the growing sophistication of sensor fusion last year with its SensPro Sensor Hub DSP. Since then the market has grown steadily, with requirements expanding to cover new types of sensors and more powerful processing capabilities. In many cases new applications, ranging from earbuds to automotive ADAS, have driven these requirements. CEVA has just announced a major update to this offering, called SensPro2.

SensPro2 Major Improvements

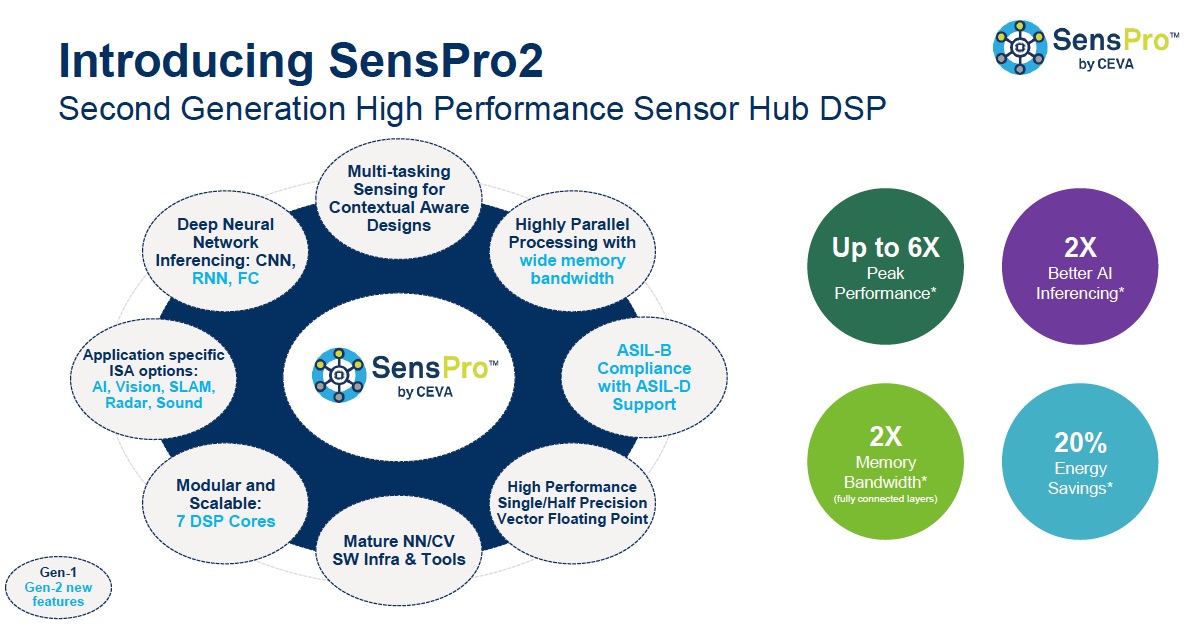

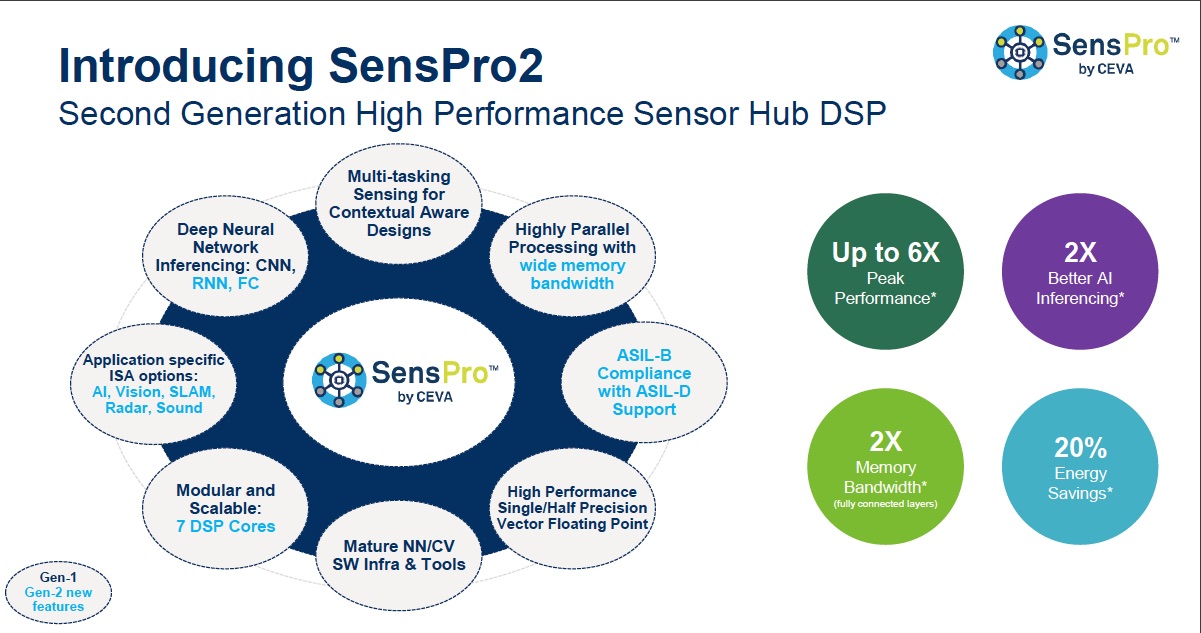

CEVA has packed a lot into this update. They have expanded the number of cores from 3 to 7. There is ASIL-B compliance and ASIL-D support. Parallel processing benefits from the wide memory bandwidth. The neural network support includes RNN and FC layers. There are ISA extensions specific to AI, vision, SLAM, radar and sound. Combined with other changes, SensPro2 delivers a 2X boost for AI inferencing, up to 6X peak performance gain, 2X better memory bandwidth and 20% energy savings. These improvements allow SensPro2 to support an expanded range of high-end and low-end applications.

All members of the SensPro2 family share a common ISA, which makes moving to a different core seamless when performance needs to scale. In addition to the three previous cores, SP250, SP500 and SP1000, there are two new low-end cores, SP50 and SP100, along with two new floating point cores, SPF2 and SPF4. The SP50 through SP1000 have MACs that support INT8 and INT16, and allow the addition of an FP32 MAC. The SPF2 and SPF4 offer FP32-only floating point MACs.

Focus on Performance

Under the hood SensPro2 offers impressive specifications. It is an 8-way VLIW design with a highly configurable architecture. It clocks up to 1.6GHz on 7nm silicon. It can deliver 3.2 TOPS (INT8) and 400 GFLOPS, using 64 single precision or 128 half precision FP MACs. The memory architecture offers 400 GByte/second of bandwidth. It includes a 4-way instruction cache and a 2-way vector data cache.
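As a rough sanity check on how these peak figures relate to one another, the short Python sketch below works through the arithmetic. It rests on assumptions that are not stated in the announcement: that each MAC counts as two operations (one multiply and one add) per cycle, and that every MAC unit is busy on every cycle at the 1.6GHz peak clock. The function and variable names are illustrative only and are not part of any CEVA tool.

```python
import math

# Assumption (not from the announcement): one MAC is counted as two operations,
# a multiply and an add, and all MAC units are fully utilized every cycle.
OPS_PER_MAC = 2
CLOCK_HZ = 1.6e9  # quoted peak clock on 7nm silicon


def peak_ops_per_second(mac_units: int, clock_hz: float = CLOCK_HZ) -> float:
    """Theoretical peak throughput for a given number of MAC units."""
    return mac_units * clock_hz * OPS_PER_MAC


# Quoted floating point configurations: 64 single precision or 128 half precision MACs.
fp32_peak = peak_ops_per_second(64)
fp16_peak = peak_ops_per_second(128)

# Working backwards from the quoted 3.2 TOPS (INT8) to the MACs per cycle it implies.
implied_int8_macs = 3.2e12 / (OPS_PER_MAC * CLOCK_HZ)

if __name__ == "__main__":
    print(f"FP32 peak: {fp32_peak / 1e9:.1f} GFLOPS")
    print(f"FP16 peak: {fp16_peak / 1e9:.1f} GFLOPS")
    print(f"Implied INT8 MACs per cycle: {implied_int8_macs:.0f}")
```

Under these assumptions, 128 half precision MACs give about 410 GFLOPS, which lines up with the quoted 400 GFLOPS, while 64 single precision MACs give about 205 GFLOPS. The INT8 figure works out to roughly 1000 MACs per cycle. Real workloads will land below these theoretical peaks.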

Using their own benchmark numbers, CEVA shows that SensPro2 beats their previous generation by anywhere from 1.8X to 5X on CV benchmarks. SLAM benchmark results for SensPro2 show 1.8X to 6.4X over the previous generation. Similarly, for audio processing the SP250 core showed DeepSpeech2 results that were 18.9X faster than their general purpose CEVA-BX2 DSP. SensPro2 also has improved radar performance.

Development Environment

CEVA backs up these IP improvements with solid and mature software development libraries. Included are ClearVox noise reduction, WhisPro speech recognition, wide angle imaging, a SLAM SDK, TensorFlow Lite Micro support, CDNN, and OpenVX and OpenCL. These all contribute to an extremely wide range of end applications. In the area of AI they support TensorFlow. CEVA has its own neural network compiler, CDNN, that supports over 200 NNs and is fully optimized for the SensPro2 processors. It includes graph optimizers for accuracy optimization, retraining and scaling per layer.

CEVA is well positioned with this new generation of sensor fusion IP. The IP covers the full range of potential applications and is highly configurable. It is well supported with development libraries. CEVA has made great strides in improving performance to keep up with market needs. The full announcement can be found on the CEVA website.

Also Read:

Sensor Fusion Brings Earbuds into the Modern Age

Sensor Fusion in Hearables. A powerful complement

Low Energy Intelligence at the Extreme Edge
