One of the largest software companies in the world, Synopsys is a market and technology leader in the development and sale of electronic design automation (EDA) tools and semiconductor intellectual property (IP). Synopsys is also a strong supporter of local education through the Synopsys Outreach Foundation. Each year in multiple regions, the company conducts science fairs, offering funding, equipment, supplies and training to students and teachers. This fusion of technology and education, designed to spark innovation, served as the catalyst for forming the company.

As a young immigrant to the United States in the late 1970s, Dr. Aart de Geus, Synopsys’ co-founder, chairman and co-CEO, enrolled at Southern Methodist University in Dallas and became immersed in the school’s electrical engineering program. He soon went from writing programs designed to teach the basics of electrical engineering to hiring students to do the programming. In the process, he discovered the value of taking a technical idea, creatively building on it, and then motivating other people to do the same. This principle ultimately fueled the creation of Synopsys.

After earning his Ph.D. and gaining a wealth of CAD experience at General Electric, Dr. de Geus joined a team of engineers from GE’s Microelectronics Center in Research Triangle Park, N.C. – Bill Krieger, Dave Gregory and Rick Rudell – to co-found synthesis startup Optimal Solutions in 1986.

The following year, the company moved to Mountain View, Calif., became Synopsys (for SYNthesis and OPtimization SYStems), and proceeded to commercialize automated logic synthesis via the company’s flagship Design Compiler tool. Without this foundational technology that transitioned chip design from schematic- to language-based, today’s highly complex designs – and the productivity engineers can now achieve in creating them – would not be possible. The advent of EDA enabled engineers to address scale complexity and systemic complexity simultaneously.

Over the past quarter century, Synopsys has grown from that small, one-product startup to a global leader with more than $1.7 billion in annual revenue in fiscal 2012. Early on, Synopsys established relationships with virtually all of the world’s leading chipmakers and gained a foothold with its first products. Synopsys’ tools quickly broadened to cover front-end design, including simulation, timing, power and test; system-level design, to encompass higher levels of abstraction; and physical implementation, to address place and route, extraction and increasing manufacturing awareness.

Synopsys established strategic partnerships with the leading foundries and FPGA providers, acquired some early EDA point tool providers, launched more than two dozen products, and completed its initial public offering (IPO) in 1992. Less than 10 years after its founding, Synopsys had achieved a run rate of $250 million.

Synopsys began donating to local communities in 1989 and formalized charitable giving in 1992 under the leadership of employee giving committees. In 1999, the Synopsys Foundation and the Synopsys Outreach Foundation were formed, making science and math education and community support initiatives part of their charter.

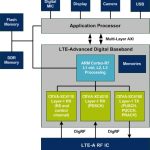

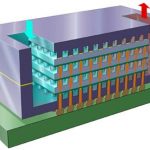

In the 2000s, Synopsys introduced an integrated design platform that allows customers to take a design from specification all the way through to silicon fabrication. In all of its development activities, Synopsys works closely with customers to formulate strategies and implement solutions to address the latest semiconductor advances.

Growing smarter

Over the years, the company assembled a team with diverse global backgrounds and many decades of combined semiconductor industry know-how. In 2012, Dr. Chi-Foon Chan (Synopsys’ president and chief operating officer since 1998) expanded his role and joined Dr. de Geus as co-CEO. The appointment acknowledges the effectiveness of their longtime partnership and the breadth and complexity of Synopsys’ business. As co-CEO, Dr. Chan will help nurture the company through its next phase of growth.

Both in-house technology innovation and strategic acquisitions have driven Synopsys’ success as the company extended beyond its core business to address emerging areas of great importance to its customers. For example, in the early 1990s, Synopsys saw the need to integrate EDA and IP. Today, IP libraries are critically important to design efforts at the chip and system level. Synopsys’ concentration on the IP space has made it the leading supplier of interface IP – essential to today’s many communications standards – and the No. 2 supplier of commercial IP overall.

Synopsys has also been highly effective in creating a successful services offering. The company’s design consultants, focused on understanding customers’ evolving technology challenges, utilize a broad portfolio of consulting and design services to help chip developers accelerate innovation and success for their design projects.

Complementary acquisitions

Synopsys has been an active acquirer of companies to round out and enhance its product portfolio. When making acquisitions, Synopsys focuses on technology that complements organic R&D growth in its core offering or broadens its capabilities beyond traditional EDA.

Synopsys executed two of the largest acquisitions in EDA history: Avant!, with its advanced implementation tools, and Magma Design Automation, whose core EDA products were highly complementary to Synopsys’ portfolio.

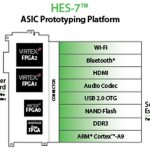

A number of acquisitions helped Synopsys build out its IP portfolio over the years, while the company also recognized the growing importance of high-level synthesis and embedded system-level design. Early on, Synopsys also saw the trend toward advanced prototyping technology, including virtual prototyping and FPGA-based prototyping for hardware/software co-design.

As challenges associated with analog/mixed-signal (AMS) design escalated, Synopsys enhanced its portfolio with complementary technology to address various AMS design aspects.

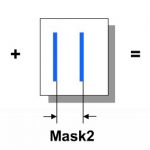

Mask synthesis and data prep became important additions to Synopsys’ manufacturing tool offering, as did the development and support of products for the design and analysis of high-performance, cost-effective optical systems.

Each technology advancement or acquisition has built upon the developments that preceded it, adding a new layer of value to the company. Dr. de Geus has a philosophy: If something already has value, how can it be moved to the next level? This approach informed the discovery that fostering talent, technology and education can yield exciting results that drive ongoing innovation. One can only imagine what future years will hold.