Aldec tools and services have long been associated with FPGA designs. As FPGAs have evolved toward more RTL-based designs, the similarities between a modern FPGA verification flow and an ASIC verification flow often leave them looking virtually the same.

Cache Coherent Systems Get a Boost from New Technology

The speed and power penalties for accessing system RAM affect everything from artificial intelligence platforms to IoT sensor nodes. There is a huge power and performance overhead when the various IP blocks in an SOC need to go to DRAM. Memory caches have become essential to SOC design to reduce these adverse effects. However, ensuring cache coherency across all the local caches and system RAM can be tricky. The problem was not so bad when fewer IP blocks required caches, but things have changed.

Up until now, cache coherency solutions were typically created manually for point-to-point connections to DRAM, or specific IP existed for cache coherency to serve predetermined use models. However, Arteris has announced what might be game-changing technology for implementing cache coherent systems on a wide range of SOCs. Their announcement on May 17th states that the technology can be used to connect a large variety of IP, including those with differing cache coherency protocols, semantics and sizes. It can even be configured to provide the benefits of caching to IP blocks that do not support local caches.

It’s expected that Arteris will offer more details about the technology and its implementation in the coming weeks. Nonetheless, a lot can be gleaned from the recent announcement. First and foremost, it’s apparent that Arteris is using their robust and proven FlexNoC on-chip interconnect IP as a building block for this technology. Arteris is already adept at moving data around on SOCs, where the data sizes and protocols vary dramatically. It makes complete sense to take advantage of this transport technology to help implement interfaces for cache agents.

According to their announcement, the new technology can simultaneously support heterogeneous cache agents and can even add local coherent caches to non-caching IP blocks. This should make it easy to mix IP from different sources. Arteris offers the capability to add proxy caches to non-caching clients to boost overall system performance. These proxy caches will fully participate in the coherency management process.

Another key element of the technology is the availability of multiple configurable snoop filters. By customizing the organization, size and associativity of multiple snoop filters, SOC designers can improve the power, performance and area (PPA) of their designs.
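To make the role of a snoop filter concrete, here is a minimal toy model in Python. It is only a sketch under stated assumptions: the class name, method names and LRU replacement policy are hypothetical and are not taken from the Arteris announcement. It shows how the number of sets and ways (the "size and associativity") determines which agents must be snooped and when a full filter forces back-invalidations.

```python
from collections import OrderedDict


class SnoopFilter:
    """Toy set-associative snoop filter (hypothetical model, not Arteris's design).

    It tracks which agents may hold a copy of each cache line, so that a
    request only snoops those agents instead of broadcasting to everyone.
    """

    def __init__(self, num_sets, ways, line_bytes=64):
        self.num_sets = num_sets
        self.ways = ways
        self.line_bytes = line_bytes
        # One LRU-ordered map per set: tag -> set of agent names.
        self.sets = [OrderedDict() for _ in range(num_sets)]

    def _locate(self, addr):
        line = addr // self.line_bytes
        return self.sets[line % self.num_sets], line // self.num_sets

    def record_fill(self, agent, addr):
        """Record that `agent` now caches the line at `addr`.

        Returns the agents that must be back-invalidated because a full
        filter set had to drop its least recently used entry.
        """
        entries, tag = self._locate(addr)
        evicted = []
        if tag not in entries and len(entries) >= self.ways:
            _, sharers = entries.popitem(last=False)  # drop the LRU entry
            evicted = sorted(sharers)
        entries.setdefault(tag, set()).add(agent)
        entries.move_to_end(tag)
        return evicted

    def agents_to_snoop(self, requester, addr):
        """Agents (other than the requester) that may hold the line."""
        entries, tag = self._locate(addr)
        return sorted(entries.get(tag, set()) - {requester})


sf = SnoopFilter(num_sets=2, ways=1)
sf.record_fill("cpu", 0x000)
sf.record_fill("gpu", 0x000)
snoop_targets = sf.agents_to_snoop("dsp", 0x000)   # only the two sharers
untracked = sf.agents_to_snoop("cpu", 0x040)       # nobody holds this line
back_invalidated = sf.record_fill("dsp", 0x080)    # set 0 is full, entry evicted
```

In this toy model, a filter that is too small for the working set causes frequent back-invalidations, while a larger or more associative one costs more area. That is the kind of trade-off the configurable organization is meant to let designers tune.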

This Arteris technology is scalable and configurable. Each cache agent can be configured to suit its own needs and to talk to the other members of the coherency system by using optimal interfaces. Using FlexNoC as its transport layer brings all the FlexNoC advantages to the implementation. Typical design concerns are area for interconnect resources, timing and power consumption.

One of the core tenets of the Arteris team is that ‘wires’ are very expensive relative to the cost of transistors in advanced nodes. This counterintuitive-sounding assertion comes from looking at the total system area needed for point-to-point interconnect. Very complex IP blocks typically need to talk to many other subsystems. Wiring up dedicated connections for all the subsystems that need to talk to each other would be prohibitive. Even if the blocks were connected this way, we would see that the utilization would be pretty low. Just as FlexNoC improves data transfer between blocks, it can be used as a building block for cache coherency. The same benefits apply – optimal utilization of system resources, configurability, etc.
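The scaling argument behind that assertion can be illustrated with simple arithmetic. The sketch below assumes the worst case, in which every block needs a dedicated link to every other block, and compares it with a shared network in which each block needs only one attachment point. Both function names are hypothetical, and the model ignores link width and the cost of the network's internal routing.

```python
def point_to_point_links(n_blocks):
    """Dedicated links needed if every block connects directly to every other."""
    return n_blocks * (n_blocks - 1) // 2


def network_attach_links(n_blocks):
    """Attachment links needed if each block plugs into a shared network once."""
    return n_blocks


for n in (4, 16, 64):
    print(n, point_to_point_links(n), network_attach_links(n))
```

Dedicated links grow quadratically with the number of blocks, while attachment points grow linearly. That is why full point-to-point wiring quickly becomes prohibitive.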

With this technology Arteris is now offering unique enabling technology that is not available anywhere else. Nevertheless, it is compatible with coherency offerings from ARM and others. For Arteris, it shows a unique level of innovation and a willingness to go deeper into the design process to develop new products that solve significant design problems.

Stop FinFET Design Variation @ #53DAC and get a free book!

If you plan on visiting Solido (the world leader in EDA software for variation-aware design of integrated circuits) at the Design Automation Conference next month for a demonstration of Variation Designer, register online now and get an autographed copy of “Mobile Unleashed”. Such a deal!

Solido Variation Designer is used by 1000+ designers across 35+ major semiconductor companies to solve key production design challenges in memory, analog/RF, and standard cell design.

REGISTER HERE

Solido will be demonstrating the newest version of its software just released in March, Solido Variation Designer 4. Variation Designer 4 has advanced Solido’s state-of-the-art technology to tackle the latest variation-aware design challenges in nanometer processes including FinFET, FDSOI, low-power & low voltage.

The following demonstrations are available:

Solido Variation Designer for Memory

Full Chip Memory and Cell Level Statistical Verification

Solido Variation Designer delivers the most advanced and industry-proven technologies for statistical design & verification of memories:

- Hierarchical Monte Carlo: Verify full-chip memories with perfect statistical accuracy

- Statistically correct verification of replicated structures

- Correct application of both global and local variation

- High-Sigma Monte Carlo: Verify columns, bitcells, sense amps, and other memory blocks to high-sigma quickly and with perfect Monte Carlo and SPICE accuracy

- Accurately verify production-sized designs (such as memory columns and critical paths)

- Solve pass/fail, binary, and multi-modal output measurements

- Efficiently debug high-sigma variation problems

- Generate trustworthy high-sigma verification results
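As background on why high-sigma verification needs dedicated techniques, the following sketch estimates how many plain Monte Carlo samples would be needed to observe a handful of failures at a given sigma. It assumes a single Gaussian-distributed output and a one-sided specification. It is not a description of Solido's algorithms, and the function names are illustrative.

```python
import math


def tail_probability(sigma):
    """One-sided Gaussian tail probability beyond `sigma` standard deviations."""
    return 0.5 * math.erfc(sigma / math.sqrt(2))


def brute_force_samples(sigma, expected_failures=10):
    """Plain Monte Carlo samples needed to expect `expected_failures` failures."""
    return math.ceil(expected_failures / tail_probability(sigma))


for sigma in (3, 4, 5, 6):
    print(f"{sigma}-sigma: p_fail={tail_probability(sigma):.3e}, "
          f"samples={brute_force_samples(sigma):.3e}")
```

Under these assumptions, 3-sigma verification needs thousands of simulations, while 6-sigma would need on the order of ten billion, which is impractical with SPICE-level simulation.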

Solido Variation Designer for Standard Cell

Variation-Aware Verification of Cell Libraries

Standard cell designers use Solido Variation Designer to accelerate the verification of their standard cell libraries across variation. The key technologies are:

- High-Sigma Monte Carlo: Monte Carlo & SPICE accurate high-sigma verification of standard cells

- Fast and accurate verification to high-sigma

- Accurately capture performance and power vs. sigma tradeoffs for the entire sigma range

- Batch operation for creating customized library verification flows

- Fast Monte Carlo: Fast, accurate statistical verification of standard cells

- 3-sigma verification & corner extraction

- Batch operation for creating customized library verification flows

Solido Variation Designer for Analog/RF and Custom Digital

Statistical & PVT Verification and Debug

Solido Variation Designer gives analog designers the ability to design with greater speed, accuracy, coverage, and insight than ever before:

- Statistical PVT: Unprecedented accuracy and coverage across 3-sigma statistical variation and operating conditions

- Fast PVT: 2-50X faster verification across corners & operating conditions

- Fast Monte Carlo: Fast, accurate 3-sigma verification & corner extraction

- High-Sigma Monte Carlo: Monte Carlo & SPICE accurate high-sigma verification and design of analog/RF and custom digital circuits.

- DesignSense: Variation-aware sensitivity & design debugging.

There is also a Variation-Aware Design DAC Panel with ARM, IBM, Invecas, and Solido on June 6. Plus Solido is presenting at the TSMC DAC Invited Theater Presentations (Monday to Wednesday June 6-8) and the Samsung DAC Invited Theater Presentation (Monday June 6).

If you are not attending #53DAC you can attend the TSMC and Solido “Collaborate for Variation-Aware Design of Memory and Standard Cell at Advanced Process Nodes” webinar:

Date: June 1, 2016

Time: 10am Pacific

Duration: 55 minutes

Click here to register!

Abstract:

Variation effects have an increasing impact on advanced process nodes, and at each, new sources of variation must be considered. Furthermore, increased competition is forcing tighter design margins to make high-performance, low-power, low-cost products. Designers must now do more variation analysis than ever to achieve these tighter margins, using advanced variation-aware technology for speed, accuracy and coverage to deliver competitive chips on schedule. This webinar will discuss how TSMC and Solido collaborate to offer variation-aware design techniques for memory and standard cell with TSMC advanced processes using Solido’s new Variation Designer 4.

Internet of Things Tutorial: Chapter Three

Emerging and future Internet-of-Things (IoT) systems will increasingly comprise multiple heterogeneous internet connected objects (e.g., sensors), which will be operating across multiple layers (e.g., consider a camera providing a view of a large urban area and another focusing on a more specific location within the same area). Likewise, IoT applications will have to collect and analyze information from multiple heterogeneous objects, or even to compose services based on the interactions of multiple objects. Dealing with multiple sensors and internet connected objects, at multiple levels, requires:

- Uniform representation of IoT data and operations, preferably including some standards-based representation for sensor data.

- Flexibility in reallocating and sharing resources (including sensors, devices, datasets and services).

- Deploying and using resources (such as sensors) independently from a specific location, application or device.

These requirements are closely associated with the interoperability across IoT sensors and devices, which refers to the ability of two or more autonomous, heterogeneous, distributed digital entities to communicate and cooperate. In this definition of IoT nodes and elements interoperability, different entities are able to exchange and share both information and services, despite their major differences in programming platform, application context or data format. Furthermore, interoperability implies that their communication or collaboration does not require any extra or special effort from the human or machine leveraging the results of their collaboration.

The fulfillment of these interoperability requirements has been addressed by early sensor and WSN middleware frameworks, such as Global Sensor Networks and its X-GSN extension, which is part of our OpenIoT project. Furthermore, standards-based implementations using Open Geospatial Consortium (OGC) standards have also emerged, such as the Sensor Web project and the 52north project. OGC has introduced Sensor Web Enablement (SWE) as an interoperability framework for accessing and utilizing sensors and sensor systems in a space-time context via Internet and Web protocols. OGC specifies a pool of web-based services, which can be used to maintain a registry/directory of available sensors and observation queries.

These services are built around the same web standards for describing the sensors’ outputs, platforms, locations, and control parameters, which are used across applications. Standards comprise specifications for interfaces, protocols, and encodings that enable the use of sensor data and services. The SWE standards are motivated by the following use cases:

- Quick discovery of sensors that can meet certain requirements and constraints in terms of location, observables, quality or even ability to perform certain tasks.

- Acquire multi-sensor information in standard formats, which can be readable and interpretable by both humans and software processes.

- Access sensor observations in a common manner, and in a form easily customizable to the needs of a given application.

- Management and handling of subscriptions for receiving alerts upon the occurrence or measurement of specific phenomena.

Among the most prominent SWE standards-based modelling languages are:

- Sensor Model Language (SensorML), which provides standard models and XML Schemas for describing sensor systems and processes, while at the same time providing information needed for discovery of sensors. It also describes the location of sensor observations and the processing of low-level sensor observations.

- Transducer Model Language (TransducerML), which provides a conceptual model and XML Schema for describing transducers and supporting real-time streaming of data to and from sensor systems.

- Observations and Measurements (O&M), which provides standard models and XML Schemas for encoding observations and measurements from a sensor, both archived and real-time.

Likewise, SWE prescribes the following web services:

- Sensor Observation Service (SOS), which provides interfaces for requesting, filtering, and retrieving observations, along with information about sensor systems.

- Sensor Alert Service (SAS), which provides an interface for publishing and subscribing to alerts from sensors.

- Sensor Planning Service (SPS), which provides the interfaces for requesting user-driven acquisitions and observations.

- Web Notification Service (WNS), which provides a standard Web service interface for asynchronous delivery of messages or alerts from SAS and SPS web services and other elements of service workflows.
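As an illustration of how a client might talk to one of these services, the sketch below assembles a key-value-pair style GetObservation request for an SOS endpoint. The parameter names follow the KVP style used by SOS 2.0 as far as I am aware, but the endpoint URL, the offering and the observed property are hypothetical placeholders, and a real server may require additional parameters.

```python
from urllib.parse import urlencode


def build_get_observation_url(endpoint, offering, observed_property):
    """Assemble a KVP-style SOS GetObservation request URL (illustrative only)."""
    params = {
        "service": "SOS",
        "version": "2.0.0",
        "request": "GetObservation",
        "offering": offering,                   # hypothetical offering id
        "observedProperty": observed_property,  # hypothetical property id
    }
    return endpoint + "?" + urlencode(params)


# Hypothetical endpoint and identifiers, used only to show the shape of a request.
url = build_get_observation_url(
    "http://sensors.example.org/sos", "urban-cameras", "air_temperature"
)
print(url)
```

The response would typically be an O&M-encoded document, which is where the modelling languages listed earlier come into play.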

SWE provides a very good basis for the implementation of interoperable applications based on open standards. It’s also a very good basis for understanding several IoT concepts and common services, such as discovery, location-awareness, data acquisitions, subscriptions, sensor/IoT systems, as well as the merits of standards and interoperability when it comes to building non-trivial systems. At the same time, it provides fertile ground for understanding and implementing semantics towards the vision of the Semantic Sensor Web.

The Semantic Sensor Web is based on the enhancement of existing Sensor Web models with semantic annotations towards producing semantic descriptions and enabling enhanced access to sensor data. The X-GSN project (part of Openiot.eu) provides a good platform for analyzing this process, since it enriches sensor descriptions (data/metadata) with semantic annotations. This is a very popular concept, which is increasingly becoming part of all non-trivial IoT systems (e.g., the emerging oneM2M standard is enhanced with support for semantic annotations, which has been demonstrated in the scope of the H2020 FIESTA project). Indeed, semantic annotations ensure model-references to ontology concepts, thus enabling more expressive and interoperable descriptions of sensor concepts.
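A minimal sketch of what semantic annotation means in practice: a raw reading is enriched with references to ontology concepts, so that different systems can agree on what the values mean. The ontology URIs, field names and the `annotate` function below are hypothetical and are not taken from X-GSN or oneM2M.

```python
import json

# Hypothetical ontology concept URIs; a real deployment would reference
# concepts from an agreed, published ontology.
ONTOLOGY = {
    "temperature": "http://example.org/ontology#AirTemperature",
    "celsius": "http://example.org/ontology#DegreeCelsius",
}


def annotate(reading):
    """Return a copy of `reading` enriched with ontology concept references."""
    return {
        **reading,
        "observedPropertyRef": ONTOLOGY[reading["property"]],
        "unitRef": ONTOLOGY[reading["unit"]],
    }


raw = {"sensor": "node-17", "property": "temperature", "unit": "celsius", "value": 21.5}
annotated = annotate(raw)
print(json.dumps(annotated, indent=2))
```

Two systems that use different local field names can still interoperate if both point at the same concept URIs, which is the expressiveness gain the paragraph above refers to.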

SWE and the projects that implement it are mostly focused on sensors, sensor networks and their interoperability, without adequately addressing other IoT devices and aspects. Indeed, the rising complexity of IoT systems is posing additional interoperability challenges, including interoperability across whole IoT ecosystems, across different devices, security and privacy mechanisms, things & services directories and more.

An in-depth discussion of IoT interoperability issues is provided in the semantic interoperability position paper of the Internet of Things Research Cluster of the Alliance for IoT Innovation (AIOTI), while the EU’s H2020 programme has recently funded entire projects (such as InterIoT, symbIoTe, bigIoT) aimed at providing interoperability across IoT ecosystems. This is particularly important as IoT interoperability is (according to McKinsey in its “Unlocking the potential of the Internet-of-Things” report) expected to be the source of 40% of IoT’s market value in coming years. We will thus revisit the interoperability discussion in future posts of this series, when discussing IoT/cloud integration and IoT ecosystems.

Internet of Things Tutorial: Chapter Two

In our Internet-of-Things (IoT) introduction we highlighted Wireless Sensor Networks (WSN) and Radio Frequency Identification (RFID) as two of the most prominent IoT technologies. Indeed, these two technologies can be considered as the forerunners of IoT. During the previous decade, IoT was in most people’s minds directly associated with WSN and/or RFID, and for many it still is.

Internet of Things Tutorial: Chapter One

The Internet-of-Things (IoT) is gradually becoming one of the most prominent ICT technologies that underpin our society, through enabling the orchestration and coordination of a large number of physical and virtual Internet-Connected-Objects (ICO) towards human-centric services in a variety of sectors including logistics, trade, industry, smart cities and ambient assisted living. In a series of posts, I will be presenting a set of internet-of-things technologies and applications in a tutorial format. The present post is the first, introductory one.

For over a decade after the introduction of the term Internet-of-Things, different organizations and working groups have been providing various definitions. For example:


Independently of the definition, the exploitation and coordination of ICO data and services is enabling a large number of novel human-centric applications (e.g., Smart Cities & Communities, IoT in Healthcare, IoT in Manufacturing & Logistics, IoT Platforms & Ecosystems).

The IoT paradigm is enabling the vision of pervasive & ubiquitous computing, which was envisaged back in the 90’s to be a direct consequence of the rapid proliferation of computing devices. Indeed, following the era of super-computing (where a few fat computing systems served many users, many-to-one) and the era of personal computing (where each user had their own personal device, one-to-one), we are already in the era where each human individual enjoys services based on multiple internet-connected computing devices (such as laptops, mobile devices, multi-purpose sensors, home gateways).

Hence, the IoT revolution is propelled by the exponential increase in the number of connected devices, which is (according to Cisco) estimated to reach 50 billion devices in 2020. This will mean that each of the estimated 7.8 billion people on the planet will use on average more than six devices. We already crossed the point (back in 2005) where the number of internet connected devices equaled the number of people around the globe.
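The "more than six devices" figure follows directly from the two estimates quoted above, as this quick check shows. It uses only the numbers from the text; the variable names are illustrative.

```python
devices = 50e9   # estimated connected devices in 2020 (figure quoted above)
people = 7.8e9   # estimated world population (figure quoted above)

devices_per_person = devices / people
print(f"{devices_per_person:.1f} devices per person")
```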

The proliferation of internet-connected-objects empowers interactions both between devices and things, and between things and people, thus providing unprecedented application opportunities. Indeed, people can directly connect to things (such as mobile phones, electronic health records etc.) via wearable sensors such as motion sensors, ECG (electrocardiogram) sensors and smart textiles. Likewise, things connect to each other, e.g., as part of wireless sensor networks (WSN), but also as part of a WSN’s interaction with other devices (gateways, mobile devices etc.). As another example, car sensors connect to intelligent transport systems and to sensors from other vehicles. These interactions are in most cases empowered by heterogeneous networking infrastructures, which provide ubiquitous high-quality connectivity, such as 4G/5G infrastructures.

Along with the term internet-of-things, several similar/analogous technologies and terms have been introduced. These include for example:


All these terms are very relevant (and in most cases overlapping) to IoT. Nevertheless, they also have subtle (but sometimes important) differences from IoT. We will illustrate these terms and their differences from IoT in following posts. In general there are different viewpoints for IoT, and IoT experts approach IoT from different angles. For example:


Independently of one’s viewpoint about IoT and IoT technologies, any non-trivial IoT system is expected to comprise the following elements:

- Sensors and Actuators.

- Communication infrastructure between servers or server platforms.

- Server/Middleware Platforms.

- Data Analytics Engines.

- Apps (iOS, Android, Web).

These elements are evident in the functional viewpoints of the various architectural frameworks that have been introduced for IoT, and which we will present in following posts.

IoT is gradually becoming very closely affiliated with cloud computing infrastructures and BigData infrastructures (including data analytics frameworks). These infrastructures provide IoT applications with the means of leveraging the scalability, capacity and reliability of the cloud, along with the data processing and analysis capabilities of BigData systems. In later posts we will be discussing both IoT/cloud integration and the topical subject of IoT analytics (including mining of IoT data streams). However, we will start with an illustration of the “things”-oriented technologies that underpin IoT, such as RFID and WSN.

Further Reading & Study:

1) There are a number of videos illustrating IoT and introducing some motivating functionalities. Some of these are listed below:

- European Commission – Internet-of-Things

- Intel IoT — What Does The Internet of Things Mean

- Cisco – How the Internet of Things Will Change Everything–Including Ourselves

- IBM – Internet of Things

- Microsoft – The Internet of Your Thing

- TEDxCIT – The Internet of Things

2) One can read about the different viewpoints of IoT at:

Atzori et al. / Computer Networks 54 (2010) 2787–2805

3) The IoT/Cloud integration is explained at:

Gubbi et al. / Future Generation Computer Systems 29 (2013) 1645–1660

4) The IERC cluster books provide a wealth of information about IoT. The most recent book (authored in 2015) is titled “Building the Hyperconnected Society – IoT Research and Innovation Value Chains, Ecosystems and Markets – IERC Cluster Book 2015”.

S2C tutorial and PROTOTYPICAL debut at DAC

It’s been a busy few days here in Canyon Lake, and we’re ready to share exciting news in advance of #53DAC coming up on Monday, June 6th. S2C is offering a technical program tutorial on “Overcoming the Challenges of FPGA Prototyping” followed by the launch of our latest book project, “PROTOTYPICAL”, including a field guide authored by S2C.

The Xiaomi Redmi Note 3 Significantly Improves Performance

The smartphone market has been experiencing many changes, including a slowing of the overall pace of growth. One of the remaining growth segments of the smartphone market is the mid-range, which is priced around $200. These phones have traditionally been ignored by the big players in the smartphone market, or at least those players didn’t put up their best offerings. However, with the growth of the mid-range and the chips available to OEMs, the bar for mid-range phones has started to rise.

One of the places that has been experiencing the most growth in the mid-range globally is the Indian market, where demand for quality mid-range devices is rising significantly. One of the devices looking to satisfy that demand is the new Redmi Note 3, which now includes Qualcomm’s new Snapdragon 650 processor. We wrote a technical review of the new phone and performed benchmarks. You can download this here.

The Redmi Note 3 actually comes in two versions, with the China version having launched in November and the version for the rest of the world launching in India on March 3rd, 2016. The primary difference between these two models is that Xiaomi has swapped out the MediaTek Helio X10 for a Qualcomm Snapdragon 650. Changing SoCs is no small task, especially when you take into account that many other chips and the PCB end up changing as well. Xiaomi’s switch to the Snapdragon 650 changes the phone from an 8-core CPU to a 6-core CPU, but actually increases the CPU performance thanks to two A72 cores in the Snapdragon 650. The Snapdragon 650 also brings the Adreno 510 GPU to the new Redmi Note 3 and offers major performance and efficiency improvements over the previous model.

In addition to having a new 6-core processor, an improved GPU and overall efficiency increases, the Xiaomi Redmi Note 3 also comes with a 4000 mAh battery, which further increases the overall battery life of the phone, giving it some impressive longevity. Xiaomi didn’t stop there either: this phone also has an aluminum unibody design as well as an integrated fingerprint sensor for biometric security and ease of use. All of these features fit into a 5.5” phone with a 1080p display, which isn’t the highest resolution available, but this also isn’t a high-end phone, which could be surprising when you see all of its other features.

Based on our SoC testing, where we tested many components of the SoC, we were able to determine that the Snapdragon 650 is one of the most efficient and highest performing processors in this class of devices. Xiaomi has provided significant value with the Redmi Note 3 with the Snapdragon 650 inside and, according to our testing results, has significantly raised the performance and battery life bar. Many of the battery life and performance figures were astonishing to us as they surpassed our expectations significantly. Xiaomi really has a winner with the Redmi Note 3 and they are doing a great job of launching it in India first, a market where the demand for such devices is extremely high and a place where performance and battery life are extremely important.

If you would like to see the results of our testing and the figures that supported our conclusions about the Redmi Note 3, download the full paper here.

How a Connected Watch Will Change the Connected Car Business

It’s amazing the amount of excitement being ginned up over connected cars. Analyst firms regularly publish estimates of hundreds of millions of connected cars on the road by 2020. It’s enough to make you believe it might happen.

If we even come close to those projections it will be a miracle given the disconnect between the wireless industry and the auto industry. Nowhere is this off-the-hook relationship more apparent than in Europe – where I am this week at Telematics Berlin.

On the cusp of the implementation of the eCall, emergency call, mandate in Europe, car makers and wireless carriers are left to ponder what they have created. Leave it to the wireless carriers of Europe to give the automobile industry the gift of the dormant SIM – a wireless module that is inert until and unless the user has a severe enough crash in their car.

Car companies like PSA, BMW, Renault, Volvo, Volkswagen and Mercedes that have already implemented proprietary eCall systems will find themselves adding a second dedicated and redundant eCall device to accommodate the European Union mandate, according to industry suppliers. This means that the device that was originally conceived to enable a multifunction platform encompassing safety, security and infotainment will be dedicated to a single function necessitating the embedding of multiple telecom modules.

It’s worth noting that in both Brazil and Europe a mandated SIM was “sold” to the industry as a platform for “value-added services” – i.e., stuff that car companies could “monetize.” In both instances – Brazil and Europe – the promise has been left unfulfilled. (Brazil’s mandate has been indefinitely delayed while Europe’s mandate appears to be on track for 2018 implementation.)

Should a car equipped with a dormant SIM eCall experience a crash, the dormant SIM module will spring to life and call for help – provided it is within range of a compatible network. Our fingers will be crossed on that point.

The latest European development sure to please smartphone makers along with Apple and Alphabet is the decision by Three, Tesco Mobile and Vodafone to offer inclusive data roaming for smartphone plans. The plans vary – ranging from 2GB up to 12GB of inclusive data roaming. The offer will be a boon to drivers who prefer to use their phones instead of the embedded wireless systems in their vehicles.

According to an Engadget report the deal has been “introduced ahead of new legislation, drawn up by the European Commission, which will scrap EU roaming charges altogether in 2017. A stop-gap measure was introduced last month, limiting the fees that network operators can enforce abroad.”

The problem here is that wireless carriers and regulators generally regard the telecom device built into a car as an M2M or, more importantly, a B2B system. The carrier is treating the car maker and the car as the customer so it is considered wholesale business – not subject to the same rules as retail smartphone roaming regulations.

This means that the removal of roaming limitations for consumer phones will not apply to devices built into cars. It also means that built-in telecom modules are subject to termination by carriers in some parts of the world who prefer to shut those telecom modules off for good if the consumer does not extend the contract beyond the free period.

As a result of these unfriendly policies it is reasonable to conclude that wireless carriers are not overly fond of connected cars – with the possible exception of AT&T and Vodafone (and Orange, Verizon and Telenor). By the same token, car makers are not overly fond of wireless carriers or vehicle connectivity generally. Wireless service for cars is complicated, full of security risks, and expensive. But the onset of the so-called eSIM may change everything.

As demonstrated at the Mobile World Congress with a Samsung Gear smartwatch, the day may soon arrive when consumers can provision the carrier of their choice in their car with the help of a smartphone app. They will also be able to transfer that privilege to a second owner of the car.

http://tinyurl.com/js8ob54 – A New SIM – For a New Generation of Connected Consumer Devices

Illustration of carrier provisioning process for Samsung watch with eSIM. – SOURCE: GSMA

The effect of emerging eSIM technology will be to allow the consumer to add their connected car to their existing wireless plan with all that that implies regarding the use of data and roaming. How far off on the horizon is this dream? Maybe not as far off as you think, judging by the presentations and live demo of the provisioning process portrayed in the (above) link.

The eSIM solution opens the door to a carrier-independent world of vehicle connectivity capable of resolving the conflicts and confusion that currently characterize carrier-car maker interactions. If eSIM technology sees rapid and wide adoption, the visions of hundreds of millions of connected cars may indeed be realized – and even sooner than expected.

But lost in all this excitement may be the importance of capturing vehicle diagnostic data and enabling firmware over-the-air updates. The value of the embedded SIM lies in developing a lifetime relationship with the car and the customer and preserving and enhancing the driving experience after the sale.

Until now, car companies have obsessed over the cost of wireless service with little help from the carriers. Multiple efforts are now underway from the Open Mobile Alliance and the International Telecommunications Union to find ways to build strong common bonds between carriers and car makers.

These parties need not and must not be in conflict. The challenges must be overcome as we have seen what will happen in the absence of cooperation – cars will fail and be recalled, carriers and car makers will be blamed, customers will be lost and profitability will suffer.

With the provisioning of a telecom module embedded in a Samsung Gear smartwatch the carriers and car makers were given a vision of what could be. It is time to embrace that vision.

Roger C. Lanctot is Associate Director in the Global Automotive Practice at Strategy Analytics. More details about Strategy Analytics can be found here: https://www.strategyanalytics.com/ac…e#.VuGdXfkrKUk

Bulking Up of Design Data Calls for Version Control on Steroids

Even though design management systems are gaining popularity as a way to manage design data growth, they actually contribute to the problem of exploding data size. What we already know is that a linear increase in die dimensions causes quadratic growth in chip area, and that smaller feature sizes compound this effect in the same way. Additionally, larger teams are required for larger SOC projects with more components. These larger teams mean more users need copies of the design to do their jobs.

Conventional revision control systems give users copies of the design files in their workspace so that they can run design tools to modify the design or run verification tools. Traditionally, creating and managing workspaces is difficult and painful at best. At its worst it can become a true nightmare. All kinds of application level techniques have been applied to try to make this process easier to run and manage.

Some approaches use de-duplication (dedup) to save space on servers, but it is necessary to create a full copy of the data before deduping can begin. Furthermore, after the data is copied to make a workspace, it then needs to be compared to other stored data on the fileserver to determine if there is duplicate data that can be consolidated. This amounts to a copy, two reads and a compare in order to reduce storage space. The bandwidth and compute penalty for this step is severe and can negate the advantages of the process, especially when dealing with terabytes of data.
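To see where the read and compare costs come from, here is a minimal sketch of after-the-fact duplicate detection using content hashes. It is a generic illustration rather than any particular vendor's dedup implementation. The function names and the small demo tree are hypothetical.

```python
import hashlib
import tempfile
from collections import defaultdict
from pathlib import Path


def file_digest(path, chunk_size=1 << 20):
    """Read the whole file and return its SHA-256 digest."""
    h = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()


def find_duplicates(root):
    """Group files under `root` whose contents hash to the same digest."""
    by_digest = defaultdict(list)
    for p in sorted(Path(root).rglob("*")):
        if p.is_file():
            by_digest[file_digest(p)].append(p)
    return [paths for paths in by_digest.values() if len(paths) > 1]


# Demo: two workspaces that were populated by copying the same file.
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    (root / "ws_alice").mkdir()
    (root / "ws_bob").mkdir()
    (root / "ws_alice" / "top.v").write_text("module top; endmodule\n")
    (root / "ws_bob" / "top.v").write_text("module top; endmodule\n")
    (root / "ws_bob" / "alu.v").write_text("module alu; endmodule\n")
    duplicate_groups = [
        [p.relative_to(root).as_posix() for p in group]
        for group in find_duplicates(root)
    ]

print(duplicate_groups)
```

Every byte of every copy has to be written and then read back before any space can be reclaimed, which is the overhead the paragraph above describes.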

File system links are also frequently used, but managing them can be troublesome. Using links in a directory to point to workspace copies of files is still an ‘application’ level solution to a ‘service’ level problem. Links can become jumbled and create a web of file pointers that can be hard to parse. At the time a file needs to be modified the link has to be removed and the file data needs to be copied locally.
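The link-based approach can be sketched in a few lines, which also shows the bookkeeping it pushes onto the application. This is a simplified illustration that assumes a flat release directory and a POSIX-style file system with symbolic links. The function names are hypothetical and do not correspond to any specific tool.

```python
import os
import shutil
import tempfile


def populate_workspace(release_dir, workspace_dir):
    """Fill a workspace with symbolic links to the files of a release."""
    os.makedirs(workspace_dir, exist_ok=True)
    for name in os.listdir(release_dir):
        os.symlink(os.path.join(release_dir, name),
                   os.path.join(workspace_dir, name))


def checkout_for_edit(workspace_dir, name):
    """Replace a link with a private copy so the file can be modified."""
    path = os.path.join(workspace_dir, name)
    if os.path.islink(path):
        target = os.path.realpath(path)
        os.remove(path)              # removes the link, not the shared file
        shutil.copy2(target, path)   # private, writable copy
    return path


with tempfile.TemporaryDirectory() as tmp:
    release = os.path.join(tmp, "release")
    workspace = os.path.join(tmp, "ws")
    os.makedirs(release)
    for name in ("top.v", "alu.v"):
        with open(os.path.join(release, name), "w") as f:
            f.write("// " + name + "\n")

    populate_workspace(release, workspace)
    edited = checkout_for_edit(workspace, "alu.v")
    with open(edited, "a") as f:
        f.write("// local change\n")

    still_linked = os.path.islink(os.path.join(workspace, "top.v"))
    now_local = not os.path.islink(edited)
    with open(os.path.join(release, "alu.v")) as f:
        release_untouched = f.read() == "// alu.v\n"
```

Every tool and script that touches the workspace has to respect this convention, which is why the approach tends to become fragile as the number of links and users grows.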

In reality, often only a small number of files in any given workspace need to be modified; the vast majority are there for reading only. So what is called for is a robust and ideally transparent system for efficiently creating workspaces and allowing users to read and/or work on the files that are needed specifically for their task. Most importantly of all, the file operations must follow the permissions dictated by the design management system.

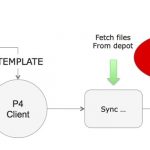

So, if you were starting from scratch, what would be the best design for a design management infrastructure? It would be based on Perforce or Subversion, or another standard revision control system. However, it would be differentiated by putting a fundamental understanding of the native revision control system into the file system itself.

Methodics has done just this with their WarpStor appliance. Yes, building a filer interface hardware unit is very unconventional by today’s standards. Thinking it through, it is not unlike what NetApp did with NFS, making it a service that is furnished through an appliance. Granted, data management is a different beast, but there are many parallels.

Methodics’ WarpStor uses managed design data on existing fileservers, but presents it to each user as local data that complies fully with the design management policies and procedures. Methodics likes to call this Version Control on Steroids. It’s easy to see why. The amount of data across the network used for design data drops significantly. Network traffic and bandwidth consumption drop sharply. Users see higher levels of responsiveness. Best of all, administrators and users see the full benefits of design management, including versioning, permissions, releases, etc.

To learn more about the internals and implementation of WarpStor, you can read the white paper on “WarpStor – Version Control on Steroids” on their website, here.