IPnest has published the “Interface IP Survey” since 2009, and we did so again last September. To build the survey as accurately as possible, I followed a “divide and conquer” strategy. Interface protocols are varied, ranging from PCI Express, USB, and Ethernet to memory controllers (DDR3, DDR4, LPDDR3, LPDDR4 and more), HDMI, DisplayPort, SATA, SAS, and the MIPI specifications (CSI, DSI, I3C, M-PHY, D-PHY, C-PHY…).

Continue reading “The IP Paradox: Sales are growing despite Semi Consolidation”

Can one flow bring four domains together?

IoT edge device design means four domains – MEMS, analog, digital, and RF – not only work together, but often live on the same die (or substrate in a 2.5D process) and are optimized for power and size. Getting these domains to work together effectively calls for an enhanced flow.

Historically, these domains have not played together in silicon. Designs were executed at a PWB level, bringing together chips with different design rules and packaging technology. This was a risk reduction maneuver; each domain could be debugged more or less independently, then integration issues such as crosstalk, interference, and signal integrity solved using macro techniques for mitigation. Domain experts usually didn’t cross disciplines, except to understand the interfaces between the domains.

Now, interaction between domains is much more critical to success in constrained IoT edge designs. Mixed signal design is pretty much taken for granted now, but more and more people are having success in on-chip RF and MEMS integration. The bar has been raised by better EDA tools that handle a unified flow from capture to simulation to layout.

Note I said tools. It’s not necessarily a single tool, but rather an integrated suite operating from the same data repository with the same user interface that is important. What kills productivity is switching costs. Bringing together these four domains is easier said than done, however. Analog types are used to working in schematics, digital types in RTL, and MEMS and RF designers are often working at the metal layers.

This challenge is really the motivation behind the Mentor Graphics purchase of Tanner EDA a year and a half ago. Integrating those disparate domains and bringing a full suite of EDA tools together in one comprehensive flow is a big job that the Tanner teams have been working on relentlessly since the acquisition. Mentor’s PWB tools such as PADS, created with small teams in mind, also factor in to the flow. For IoT edge devices, it goes even deeper – Mentor’s purchase of CodeSourcery was all about optimizing chips for real-time software.

A new Mentor white paper authored by Jeff Miller has an interesting premise: if we have a better design flow for IoT devices, handling all four domains, we get more optimized parts. (He’s gone as far as to suggest there is “a new breed of designers.” That has a lot to do with how engineers come through school. I’m not sure deep expertise in all four domains is possible in a single person, but I am sure that designers are becoming much more familiar with cross-domain design and collaboration in small teams.)

The Tanner IoT design flow handles all the way from capture through simulation to layout:

Miller walks through how the tools tie together in this scenario. His discussion on simulation is particularly interesting. For example, in a mixed-signal simulation, T-Spice and ModelSim work together, passing data back and forth whenever signals change at the analog/digital boundary.
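To make the boundary hand-off concrete, here is a toy sketch of the idea in Python. It is not the T-Spice or ModelSim interface: the RC node, the hysteresis thresholds, and the inverter are all assumptions chosen for illustration. The "analog" side integrates a node voltage every time step, and the "digital" side is only evaluated when that voltage crosses a logic threshold, at which point it changes what the analog side is driven with.

```python
def cosimulate(steps=200, dt=1e-3, tau=20e-3, vdd=1.0, v_hi=0.7, v_lo=0.3):
    """Toy lock-step co-simulation: an RC node (analog) feeding an inverter (digital).

    All component values are hypothetical. Returns a list of
    (step_index, logic_value) events seen at the analog/digital boundary.
    """
    v = 0.0        # analog node voltage
    drive = 1      # digital output driving the RC network (1 -> vdd, 0 -> 0 V)
    logic_in = 0   # digital view of the analog node
    events = []
    for n in range(steps):
        # Analog solver: one forward-Euler step of dv/dt = (target - v) / tau.
        target = vdd if drive else 0.0
        v += dt * (target - v) / tau

        # A/D boundary with hysteresis: convert the voltage to a logic level.
        new_logic = logic_in
        if v >= v_hi:
            new_logic = 1
        elif v <= v_lo:
            new_logic = 0

        # Only when the logic level changes is the digital side evaluated,
        # and its new output is passed back to the analog side (D/A boundary).
        if new_logic != logic_in:
            logic_in = new_logic
            drive = 0 if logic_in else 1   # the digital "design" is an inverter
            events.append((n, logic_in))
    return events


events = cosimulate()
```

With these values the loop behaves like a relaxation oscillator, so `events` alternates between 1 and 0. Data crosses the boundary only on those events, not on every analog time step.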

What about the MEMS part of the job? Miller suggests that creating 3D models and then trying to derive a 2D mask is difficult and can lead to errors. He suggests a mask-forward flow, bringing in a 2D mask from Tanner L-Edit and then generating the 3D model. And the RF? Tanner Eldo can be used to analyze RF circuits with several algorithms and help optimize the results for various types of circuits.

Just reading through this discussion, it is clear the different domains call for different handling, and there has been a lot of progress in Tanner EDA tool integration. The entire white paper is available for download (registration required):

Driving Intelligence to the IoT Edge Invents a New Breed of Designers

As I said, I don’t think there is any magic that transforms a single designer into an expert in all four domains just by adding tools. What we are seeing is EDA vendors starting to think about their tools as part of a bigger system design effort with various facets, with a collaborative design team working in one environment with integrated tools.

Is That PDK Safe to Use Yet?

In our semiconductor ecosystem we have foundries on one side supplying all of that amazing silicon technology, and IC designers on the other side that take their system ideas then go implement them in a SoC using a specific foundry. The required interface between foundry and chip designers has been the Process Design Kit (PDK), a collection of files that define how the silicon should work:

- SPICE models for transistor behavior

- Layout Parasitic Extraction (LPE) decks that define the physical interconnect in terms of resistors, capacitors and inductors

- Design Rule Checks (DRC) that define how the physical IC layout should be done in order to yield properly

- Layout Versus Schematic (LVS) decks that specify how transistor-level netlists should compare between layout and logical

Getting the PDK files right is really important because small process nodes bring Layout Dependent Effects (LDE). For example, the Vt of one transistor depends on how close it is physically placed to another transistor or even a contact. The same goes for the mobility of a transistor, which also depends on physical placement. Parasitic values can now dominate the speed of a transistor, so knowing how to extract them properly affects the accuracy of timing analysis tools.

We all know that software is mostly written by hand, so that means that bugs can creep into the tool by accident. Well, the PDK is just a bunch of files that can be manually or automatically generated, and yes, these files may be off a bit, so what to do? If you’re an automation company you would come up with a way to create a QA tool for PDK creators and PDK users. This is exactly what the engineers at Platform DA have come up with, a QA toolset for the foundries that create the PDKs and for the circuit designers that use PDKs. They call their tool PQLab, and I just learned more about it.

Related blog – Are your Transistor Models Good Enough?

A chip designer has certain questions about the PDK:

- What just changed when going from PDK v.1 to v.2?

- How does a PDK change impact my IC project?

- Which foundry should I use for my next IC design?

- Is there a way to benchmark different PDKs of two different design flows quickly?

- How do LDE, statistical variation and parasitics impact my design?

At the foundry the PDK engineering team has their own set of questions:

- Are all of my PCell combinations DRC clean?

- Will all of my PCell combinations be LVS clean?

- Can I compare the pre-layout versus post-layout circuit simulation results for typical cells?

- What just changed when going from PDK v.1 to v.2?

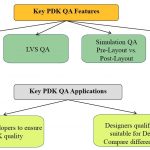

The approach used by PQLab is to help answer these questions through a set of QA features designed just for PDKs:

Starting with the DRC and LVS side of QA first, the idea is to automatically and randomly place cells from the Pcell library next to each other and then run a popular DRC/LVS tool like Calibre from Mentor Graphics to check that all combinations are actually clean and without any errors:

If DRC or LVS errors are found with certain cell combinations, then the foundry goes back and fixes those cell layouts and re-runs QA to ensure that each error has been fixed.
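The random-placement idea can be sketched in a few lines of Python. This is only an illustration of how test cases might be generated, not how PQLab does it: the PCell names, parameter values, and spacings below are invented, and the step that actually builds the layout and runs DRC/LVS is left out.

```python
import random

# Hypothetical PCell library: each cell lists the legal values of its parameters.
PCELLS = {
    "nmos": {"w_um": [0.2, 0.5, 1.0], "l_um": [0.03, 0.06], "fingers": [1, 2, 4]},
    "pmos": {"w_um": [0.3, 0.6, 1.2], "l_um": [0.03, 0.06], "fingers": [1, 2, 4]},
    "poly_res": {"w_um": [0.4, 0.8], "l_um": [2.0, 5.0, 10.0]},
}


def random_instance(rng, name):
    """Pick one random legal value for every parameter of the named PCell."""
    params = {key: rng.choice(values) for key, values in PCELLS[name].items()}
    return name, params


def random_neighbor_cases(count, seed=0):
    """Generate `count` test cases, each a pair of PCell instances and a spacing.

    A fixed seed makes a failing case reproducible after the layout is fixed.
    """
    rng = random.Random(seed)
    names = sorted(PCELLS)
    cases = []
    for _ in range(count):
        left = random_instance(rng, rng.choice(names))
        right = random_instance(rng, rng.choice(names))
        spacing_um = rng.choice([0.0, 0.1, 0.2])  # hypothetical spacings
        cases.append({"left": left, "right": right, "spacing_um": spacing_um})
    return cases


cases = random_neighbor_cases(5, seed=42)
```

Each case would then be handed to a layout generator and a DRC/LVS run. Seeding the generator means the same combination can be re-run once a cell layout has been fixed.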

For the simulation QA there are three major tasks:

- Correlate SPICE models pre-layout versus post-layout

- Compare the device simulation specs like Vth, Idsat, etc. at pre-layout and post-layout conditions across a range of circuits

- Compare any differences between design flows with popular circuit simulators (HSPICE, Spectre) and extraction-based netlists from extractors (StarRC from Synopsys, Quantus QRC from Cadence, Calibre xRC from Mentor)
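As a rough illustration of the pre-layout versus post-layout comparison, the sketch below computes the percentage shift of each device spec and flags anything outside a tolerance. The spec names follow the text (Vth, Idsat), but the numbers and tolerances are made up and the function is a hypothetical helper, not part of PQLab.

```python
def compare_specs(pre, post, tol_pct):
    """Compare simulated device specs before and after layout extraction.

    pre, post: dicts mapping spec name -> simulated value.
    tol_pct:   dict mapping spec name -> allowed shift in percent.
    Returns a dict mapping spec name -> (shift_in_percent, within_tolerance).
    """
    report = {}
    for name, pre_value in pre.items():
        post_value = post[name]
        shift_pct = 100.0 * (post_value - pre_value) / abs(pre_value)
        report[name] = (shift_pct, abs(shift_pct) <= tol_pct[name])
    return report


# Made-up numbers purely for illustration.
pre_layout = {"vth_V": 0.40, "idsat_A": 600e-6}
post_layout = {"vth_V": 0.42, "idsat_A": 540e-6}
tolerance = {"vth_V": 6.0, "idsat_A": 5.0}

report = compare_specs(pre_layout, post_layout, tolerance)
```

In this made-up case Vth moves by about 5% and stays inside its 6% budget, while Idsat drops by about 10% and is flagged.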

The third and final area of QA checking with the PQLab tool is PDK comparisons, where there are five criteria:

- PDK file comparison

- PCell property comparison

- CDF comparison

- PCell default layout and original position

- Simulation results comparison
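The first criterion, PDK file comparison, is the easiest to picture. Below is a minimal sketch, assuming each PDK version is simply a directory tree on disk. It hashes every file and reports what was added, removed, or changed between two versions. The function names are mine. A real tool would also look inside the files, for example at PCell properties and CDF parameters.

```python
import hashlib
from pathlib import Path


def file_digests(root):
    """Map each file's path (relative to root) to the SHA-256 of its contents."""
    root = Path(root)
    return {
        path.relative_to(root).as_posix(): hashlib.sha256(path.read_bytes()).hexdigest()
        for path in root.rglob("*")
        if path.is_file()
    }


def diff_pdk(old_root, new_root):
    """Report files added, removed, or changed between two PDK directory trees."""
    old = file_digests(old_root)
    new = file_digests(new_root)
    return {
        "added": sorted(new.keys() - old.keys()),
        "removed": sorted(old.keys() - new.keys()),
        "changed": sorted(k for k in old.keys() & new.keys() if old[k] != new[k]),
    }
```

Calling `diff_pdk("pdk_v1", "pdk_v2")` on two hypothetical directories would give a first answer to the “what just changed when going from PDK v.1 to v.2?” question that both designers and foundry teams ask.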

Summary

PDK files continue to be the way that foundries and designers interface, so we need to be sure that all of the PDK files are consistent and correct. The PQLab tool from Platform DA provides the needed automation for PDK developers to ensure that they have the highest quality before releasing. IC designers can now quickly determine if a particular foundry PDK is going to provide them the performance and power requirements being sought and know what has changed between versions of a PDK. The QA process for a PDK doesn’t have to take weeks using semi-automated methods; with some automation it can take only hours to complete. Foundries are using the PQLab tool to save time and produce PDK files that are solid.

Mentor Webinar Series: Integrating the Systems Engineering Flow

Product lifecycle management is probably not the most gripping topic for most design engineers. You want to get on with architecture, design, verification and implementation. But if you are building products for any safety-sensitive application in a car, a medical appliance, avionics, railway applications in Europe – to name a few – you know that now you have to demonstrate compliance with pretty detailed process standards like ISO 26262.

You could do all of this, in principle, by exchanging pieces of paper and emails but that approach quickly becomes unmanageable. More likely you plan to build (or have partly built) your own system of spreadsheets and shared/revision-tracked documents. You are going to need some of that anyway. But integrating design flows and best-in-class design tools to provide the compliance and traceability required by standards like 26262 is complicated by differing needs, processes and data views among tools.

Mentor is offering a series of four webinars (the first has already posted) on what you have to consider (make sure you understand the full scope of the problem) and a better approach to managing the design flow process:

- Going beyond PLM solutions to manage design change (Oct 19)

- Simplifying overwhelming project data by putting it into context (Nov 3)

- System design management simplifies ARP4754A compliance (Nov 10)

- System design management for automotive functional safety (Nov 17)

While this might be a fun project for an internal software team, something this complex can take years to build and get right. It takes EDA expertise as well as understanding of standards like 26262, so don’t let the IT team tell you they can take care of this. You need something built by experts, not enthusiasts 😎, especially when compliance and safety are determined by the effectiveness of these solutions.

Increasing the productivity of an engineering team and improving first-time quality of their end product requires maintaining coordination and synchronization of the information needed between software environments. However, data integration between software tools intended to be used together in a work flow remains challenging. While individual software providers address some challenges, the problem is compounded when workflows employ best-in-class tools contributed by different suppliers.

A true Systems Engineering approach to integration manages relationships between tools throughout design disciplines; coordinates changes, dependencies, and impacts; and integrates with a user’s current tools and flows. In this session, we look at an innovative solution to this problem based on OSLC, extended by the Mentor Graphics approach to incorporate a central organizing structure for data tracking, history, and analysis.

Mentor Graphics systems experts discuss four unique systems integration topics, two of which show a way to verify safety certification efforts, such as ARP 4754A and ISO 26262. You may sign up for one, several, or all four seminars. Each 30-minute seminar stands alone.

What You Will Learn:

- A new way to track changes in a design flow – without changing any design tools

- A way to share information where needed in a structured and organized manner (that’s not a PLM environment)

- A way to manage relationships among all facets of a design as it evolves

- A way to easily access legacy material

- A way to meet standards inspections

Who Should Attend:

- Systems engineers

- Requirements engineers

- Project managers

- Safety analysts

Between Waze and a Thin Hard Place

Car makers, semiconductor companies and wireless carriers are all excited these days about creating cars that can drive themselves. Billions of dollars are being spent on acquisitions and investments in companies and technologies that can make this happen. But there is a fly in the ointment by the name of Waze.

To create cars capable of automated driving, car makers and their supplier partners are having to replicate the functional capacities of the human brain and nervous system including vision systems and networks. In essence, the industry is being forced to create something we might regard as a “thick client” – a sentient car with sufficient storage and processing power to deliver a safe and reliable automated driving experience.

The alternative to the thick client is the “thin client” – in this case a smartphone. The thin client gets the job done by accessing off-board (cloud) resources to facilitate its decision-making. The best search and speech recognition solutions in cars today are either entirely cloud-based or hybrid on-board/off-board systems.

When Audi, BMW and Daimler came together to acquire the map data company, HERE, from Nokia, the intent was clearly to build an automated driving capability upon the foundation of HERE maps. If cars were going to drive themselves sooner or later, they’d need maps on-board and HERE was the big dog of embedded maps in cars.

Much was made at the time of the desire of car manufacturers to preserve their independence from Google and Apple and any other tech industry interlopers. So the HERE acquisition was something of a turf war over ownership of the customer relationship and the technology going into the cars.

The key differentiation between HERE as a source of map data and Google – aside from the ever-present Googlian invasion of privacy – was the fact that HERE actually provides a data set that resides in the car. Arch-rival TomTom also provides a map data set to its significantly smaller customer base of car makers. Google does not provide an on-board map, although it enables chunks of map data to be downloaded on an ad hoc basis.

Much has been made in the press lately of the onset of Apple’s CarPlay and Alphabet’s Android Auto smartphone integrations for cars. The hullabaloo over these increasingly widespread systems in new cars and the aftermarket revolves around the fact that they are creating headaches for car dealers and consumers (according to J.D. Power and Consumer Reports) even as they proliferate and begin to make smartphone navigation projection from the phone into the dashboard screen a reality.

But whether you snap your phone into a mount on your dashboard or connect it to your on-board infotainment system, the prevalence of smartphone navigation generally and Waze in particular is putting pressure on the pricing of built-in navigation systems. More importantly, it will ultimately cause consumers to reconsider buying the built-in navigation system if the price delta is too great.

As car makers lay the groundwork to enable automated driving, the need for an up-to-date on-board map will become more important than ever.

If consumers abandon the idea of on-board navigation in favor of the good enough navigation experience of Waze on a smartphone – the progress toward automated driving will be severely impeded. And the risk is real because Waze is not only good enough navigation, for many it is becoming the preferred source of traffic information and, a dirty little secret, speed traps – or safety zones, as they are euphemistically described in Europe. Some Waze users won’t go anywhere without the app.

Car makers like BMW and Daimler are taking steps to provide for real-time hazard notifications and incremental map updates to match Waze’s perceived advantages. But the challenge extends beyond Waze. Toyota launched an OpenStreetMap-based projectible smartphone navigation app in the U.S. last year. Apple is steadily replacing TomTom’s maps with OSM in dozens of countries around the world.

Meanwhile, Waze availability in dashboards continues to advance. Ford is expected to offer Waze via its SDLink app integration and Waze is also accessible via MirrorLink.

Waze’s viral marketing and crowd-sourced platform represent a formidable pothole on the path to automated driving for the masses. There are steps auto makers can take to preserve their long-term automation objectives. I will explore this topic in more detail in my keynote at next week’s TU-Automotive Europe event in Munich – http://www.tu-auto.com/europe/.

Smartphones have given us so much. They have the power to save time, fuel and lives on the road – as long as drivers don’t get distracted. They can enable entirely new business models and market opportunities. But good enough solutions don’t cut it when you are trying to transform an industry. Self-driving cars will need an on-board map to get them through thick and thin.

Roger C. Lanctot is Associate Director in the Global Automotive Practice at Strategy Analytics. More details about Strategy Analytics can be found here: https://www.strategyanalytics.com/access-services/automotive#.VuGdXfkrKUk

Manufacturing Singularity is Coming!

One of the many benefits of blogging is that you get to meet some very interesting people. This time I had the pleasure of speaking with Michael Ford of Mentor Graphics about Industry 4.0 and smart factories. In fact, Mentor has an excellent series of white papers titled “Is This a Manufacturing Revolution?” from their Valor Division, but first a bit about Michael.

Michael spent more than 25 years with Sony Electronics Manufacturing Systems which resulted in the spin out of Valor Computerized Systems Ltd. Valor was a recognized leader of productivity improvement software across the electronics design and manufacturing supply chains that “simulate, optimize, monitor and control the production lifecycle of electronics products, enabling companies to design and manufacture more efficiently, cost effectively and with better quality”. In 2010 Mentor Graphics acquired Valor and that is how Michael arrived at Mentor.

The first question I asked Michael was when electronics manufacturing will come back to the United States. Given the advancement of robotics, artificial intelligence, and cloud computing, manufacturing is now a very small percentage of the electronic product cost equation. In fact, according to Michael, Apple’s percentage cost of manufacturing in China is now about 1%. The answer to my question of course is described in detail in the eight-part white paper series:

Part 1: Stop the Leaking Factory

Part 2: What Does the Industry 4.0 Factory Look Like?

Part 3: The Customers’ Perspective

Part 4: How to Get Started

Part 5: Making the Connections

Part 6: Staying Flexible

Part 7: The ROI of Change

Part 8: Risks and the Future

Michael and I also talked about Industry 4.0 and Mentor’s Open Manufacturing Language specification (OML). You can get a good look at Industry 4.0 from Wikipedia so let’s talk about OML. Earlier this year Mentor launched the OML initiative which is really IoT for manufacturing.

“For some time now we have seen and heard the demand for a comprehensive shop-floor communication standard that is detailed enough to support the next generation of computerization such as Industry 4.0 solutions,” stated Dan Hoz, General Manager of Mentor Graphics Valor Division. “With this initiative, Valor contributes the first step and sets the pace for the revolution in manufacturing for PCB assembly.”

I found this YouTube clip which nicely encapsulates our discussion:

You can read Michael’s blog for more information about OML HERE. The OML community website is HERE.

The challenge of course is legacy manufacturing equipment which is why Mentor came out with a secure plug-and-play IoT device you can read about HERE.

“The Valor IoT Manufacturing solution with the Open Manufacturing Language (OML) should revolutionize today’s automated electronics assembly industry. OML will bring much needed interoperability to the PCB manufacturing industry,” stated Dick Slansky, senior analyst, PLM & Industry, ARC Advisory Group. “Mentor’s plug-and-play, comprehensive, secure networking and connectivity solution is a significant milestone for the mass customization of manufactured electronics.”

Bottom line: The ultimate goal of course is singularity, where machine intelligence surpasses human intelligence. On the manufacturing floor, Industry 4.0 is absolutely a step in that direction.

The Ising on the Cake

Just when you thought you knew all the possible foundations for computing, along comes another one. Forget von Neumann, this approach models Ising machines, systems built on solving a statistical ensemble model of ferromagnetism. The concept is quite simple. Imagine a lattice of magnetic dipoles/spins, each of which can only be in a “north-up” or “north-down” state. The coupling, in various states, when summed over adjacent magnets represents an energy and the goal is to minimize this energy.
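To make the energy picture concrete, here is a small Python sketch. It assumes the usual textbook sign convention, E = -Σ J_ij·s_i·s_j with spins of ±1, which the description above does not spell out, and it finds the lowest-energy configuration by brute force. The four-spin ring with uniform coupling is an invented example.

```python
from itertools import product


def ising_energy(spins, couplings):
    """Energy E = -sum over coupled pairs (i, j) of J_ij * s_i * s_j.

    spins:     sequence of +1 / -1 values ("north-up" / "north-down").
    couplings: dict mapping (i, j) index pairs to coupling strength J_ij.
    """
    return -sum(J * spins[i] * spins[j] for (i, j), J in couplings.items())


def ground_states(n, couplings):
    """Exhaustively search all 2**n spin configurations for the minimum energy."""
    best_energy, best_states = None, []
    for spins in product((-1, 1), repeat=n):
        energy = ising_energy(spins, couplings)
        if best_energy is None or energy < best_energy:
            best_energy, best_states = energy, [spins]
        elif energy == best_energy:
            best_states.append(spins)
    return best_energy, best_states


# Invented example: four spins on a ring, each coupled to its neighbor with J = 1.
ring = {(0, 1): 1, (1, 2): 1, (2, 3): 1, (3, 0): 1}
energy, states = ground_states(4, ring)
```

For this ring the minimum energy is -4, reached when all four spins point the same way. The exhaustive loop visits 2^n configurations, which is why brute force stops being practical very quickly as the lattice grows.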

As you might expect, this model maps neatly to various kinds of optimization problems. The travelling salesman problem (TSP) is one example, encountered in chip place and route tools, network routing, aircraft and truck routing and many other problems. TSP is considered an NP-hard problem though heuristic solutions are known and widely used. But improving on any of these solutions is always challenging since scaling up parallelism is quickly defeated by exponential growth in combinatorial complexity as the problem size grows.

While neural net and quantum annealing approaches are also being explored, advances based on Ising modelling have been reported recently. Rather than building spin lattices this approach uses laser pulses circulating through a ring cavity. Pulses are generated from a pumping laser as optical parametric oscillators (OPOs), a particular form of squeezed light. Each of these, above an oscillation threshold, splits into one of two possible phases which can model Ising spins. The ring cavity is designed to allow a fixed number of evenly-spaced OPOs to circulate through the ring at any one time, thus modelling a set of spins.

Next you have to model the coupling between the spins. In one approach, optical taps into the cavity take a small percentage of an OPO, delay it for an integral number of OPOs in the chain and couple that percentage back into the next OPO in line. There are N-1 taps for a cavity supporting N OPOs. This enables modeling a coupling between the i-th and j-th spins for any (non-equal) i and j. (The optical tap approach becomes very cumbersome with increasing N, so recent approaches have switched to electronic methods to add delay.)

Of course this ring of OPOs decays over time and the decay rate for any OPO depends on the phase states in the OPO. The decay rate of the system of OPOs can be engineered through couplings to model the energy profile (Hamiltonian) of a target Ising problem. (You’ll have to take that on trust or follow the 3rd link below.) Now the OPO system is pumped by a laser, starting below the oscillation threshold. As the gain through pumping is slowly increased, it will eventually reach the lowest point in that profile which is a threshold for oscillation/resonance and that will self-reinforce. This by the way is known as the minimum gain principle, intuitively reasonable but not provably true. Finally, by observing the relative phase state of OPOs, resonances and hence minima in the problem space can be detected.

So there you have it. Technically, I don’t imagine other optimization techniques are in great danger in the near future. This is a very complex technique requiring some very sophisticated control of laser optics, probably not reducible (near-term) to a chip. Still, it does have one very interesting characteristic. All optimization techniques I know start on the problem curve and move around to (hopefully) find the lowest point. This technique starts below the problem curve and effectively moves a global metric (OPO system gain) up towards the curve. By construction the first thing it will hit is the global minimum (at least if the minimum gain principle is valid).

As a footnote, I checked a number of articles on this topic and found all the “popular” articles (Wired, a Stanford report, IEEE Spectrum) ducked any real attempt at explaining the method, which left me feeling cheated. This blog is my attempt to go at least a little deeper. Apologies in advance to experts in the field if I butchered the explanation – feel free to correct me. You can read a lightweight write-up HERE and the arXiv papers I relied on most HERE and HERE.

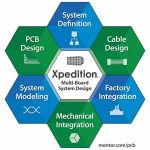

Automation for managed system-of-systems design

Anybody who has done any bus & board system design knows the problem. Merchant boards typically have standardized pinouts (after years of haggling in standards organizations) for the backplane bus, and a group of user-defined pins for daughtercard I/O. Homegrown systems usually have a just-as-carefully defined proprietary backplane bus pinout. Once defined, changing a signal name or pin location requires an act of deity.

Or a mistake. Each board design team starts with the pinout table, or if they are lucky they get a connector model in a PWB design tool library. When systems were small, and there were fewer than 20 boards on a backplane, checking interconnects wasn’t too terrible. Every once in a while, someone would miss a pin, or transpose the direction of high-to-low order bits on a parallel signal group. Hopefully that was caught before the smoke test. The offending “odd-man-out” board would be sent back for cut and jump rework, and a revision scheduled.

Systems have gotten huge; in many cases, they are now systems-of-systems. It isn’t unusual to see hundreds of boards with tens of thousands of interconnects including backplane traces, backplane cabling (usually for the user-defined pins), and over-the-top cabling. Boards and cables are parceled out to separate teams, along with some control documents – typically an Excel spreadsheet with the signal tables, or maybe dumb graphics in Visio. The entire system relies on everyone reading the documentation and interpreting it correctly.

The risk of a disconnect somewhere in the system is also huge.

Not to mention there is little (ok, no) ability to do trade-off analysis. Mentor Graphics released the last update to Xpedition with the ability to optimize pins from the board through the IC package – but what happens if that optimization would benefit neighboring boards? That is a very hard change to drive given semi-manual methods such as Excel or Visio.

Dave Wiens, Xpedition Product Manager in the Board Systems Division at Mentor Graphics, sums this up as the contrast between the norm of split & fragment design and managed system-of-systems design. He’s been pursuing a new release of Xpedition for multi-board system design with several goals. Rather than holding projects together with desktop office tools, teams can collaborate on “correct by construction” system design.

Reentering data becomes a thing of the past, and design rules can easily check connectivity across boards, backplanes, and cables, eliminating errors. Change management is built-in with controlled synchronization; users also have the capability to accept or reject changes. Disparate teams on large projects that might talk to each other infrequently have fewer worries about the currency of their design information.
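To show the kind of rule such a check might apply, here is a minimal Python sketch of a pin-by-pin comparison between two mating connectors. The data model (pin name mapped to a net name and a direction) and the example pinouts are hypothetical and are not taken from Xpedition. The aim is only to show how a transposed bit pair or a missing pin can be caught mechanically instead of by reading a spreadsheet.

```python
def check_mating(conn_a, conn_b):
    """Compare two mating connectors pin by pin.

    Each connector is a dict mapping pin name -> (net name, direction),
    where direction is "in", "out", or "bidir". Returns a list of
    (pin, problem) tuples; an empty list means the pair is consistent.
    """
    issues = []
    for pin in sorted(set(conn_a) | set(conn_b)):
        a, b = conn_a.get(pin), conn_b.get(pin)
        if a is None or b is None:
            issues.append((pin, "pin missing on one side"))
        elif a[0] != b[0]:
            issues.append((pin, f"net mismatch: {a[0]} vs {b[0]}"))
        elif a[1] == "out" and b[1] == "out":
            issues.append((pin, f"two drivers on {a[0]}"))
    return issues


# Hypothetical pinouts: the daughtercard has transposed D0/D1 and dropped RESET_N.
backplane = {
    "A1": ("D0", "bidir"),
    "A2": ("D1", "bidir"),
    "A3": ("CLK", "out"),
    "A4": ("RESET_N", "out"),
}
daughtercard = {
    "A1": ("D1", "bidir"),
    "A2": ("D0", "bidir"),
    "A3": ("CLK", "in"),
}

problems = check_mating(backplane, daughtercard)
```

Here `problems` lists net mismatches on A1 and A2 and a missing pin on A4, which are exactly the slips that otherwise tend to surface at the smoke test.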

One of the more challenging topics in systems design is thermal verification. Instead of the conservative “rule of thumb”, a multi-board system can actually be modeled in this release of Xpedition with confidence, and recommended changes can be driven across boards quickly. The same goes for signal integrity or mechanical issues.

The strength of Xpedition is its underlying data management infrastructure. This is really about a single design flow for all boards, backplanes, and cables, with everyone working in the same project repository with the same tools. Weeks of manual synchronization are reduced to automation, complete with tips and color coding. Cables can be created in schematic form, then optimized for size and weight using 3D modeling.

Much more detail on this announcement is in the Mentor press release:

Wiens says he completely understands existing processes are in place, especially on the change management front. He hopes that as users adopt the new Xpedition release, over time older system-of-systems design approaches yield to the increased efficiency of automation. The new ideas have been shaped with the help of lead customers such as ASML, factoring in a wealth of real-world experience. Any customer designing systems with boards, backplanes, and cables should benefit.

DFT Approaches for Giga-gate SoC Designs

In the early days of IC design there were arguments against using any extra transistors or gates for testability purposes, because that would add extra silicon area, which in turn would drive up the costs of the chip and product. Today we are older and wiser, realizing that there are product pricing benefits to quickly testing each new SoC before packaging and even in the field. The biggest annual event in the test world has to be the International Test Conference (ITC), coming up November 15-17 in Fort Worth, Texas. I was able to speak with Ron Press of Mentor Graphics by phone about what they are doing at ITC this year. Here’s an overview:

- Keynote by Wally Rhines, The Business of Test: Test and Semiconductor Economics (Tuesday, Nov 15, 9AM)

- Tutorial 5, Diagnosis-driven Yield Analysis (Sunday, Nov 13, 1PM – 4:30PM)

- Tutorial 9, Mixed-signal DFT and BIST: Trends, Principles and Solutions (Sunday, Nov 13, 1PM – 4:30PM)

- Session 2.1, Test point Insertion in Hybrid Test Compression/LBIST (Tuesday, Nov 15, 2PM – 4PM)

- Session 2.4, Minimal-Area Test Points for Deterministic Patterns

- Session 17.1, Automated Measurement of Defect Tolerance in Mixed-Signal ICs (Thursday, Nov 17, 1:30PM – 3:30PM)

Full details of Mentor at ITC are online here. By the way, Ron is the General Chair for ITC 2016. The big three themes that I learned from Ron about testability this year were:

Our present era has now enabled SoC designs with billions of gates, so how are you going to test that kind of a chip in a reasonable amount of time? The answer is to divide and conquer, applying the concept of hierarchical test to reduce ATPG run times, minimize RAM usage, and actually generate ATPG results much earlier in the design flow. This methodology lets test engineers create and validate patterns for each block, then automation enables reuse of the patterns as each block is placed in an SoC.

Mentor also offers Embedded Deterministic Test (EDT) points that work with test compression to further reduce pattern volume, giving you 2X to 4X additional compression on top of what their TestKompress approach offers.

In the automotive world the reliability of electronics is paramount, so the industry created the ISO 26262 standard, and EDA and semiconductor IP vendors have responded with both memory and logic BIST approaches. Field return analysis (RMA) is another key requirement for automotive. Mentor has created much of the test technology needed to meet these strict automotive requirements.

The last of the three major themes is diagnosing faults in FinFET technology, where the challenge is to identify the root cause of a particular type of physical failure. The technique is called Root Cause Deconvolution (RCD), and it uses statistical enhancement to pinpoint failures, starting from the logical diagnosis and ending at the layout location.

Transistor-level defects in FinFET designs can be located using cell-aware diagnosis for all of the pattern types (stuck-at, transition faults, cell-aware, etc.). The tools look at the FinFET layout and circuit schematic, then create a cell-aware fault model specific to FinFET. Mentor has been using this sophisticated fault modeling since the 32nm node and at smaller nodes.

If you attend ITC this year, consider checking out what actual users of Mentor tools have to say about their test experiences at the Posters: Samsung, Teradyne, Spreadtrum.

New Frontiers in the Storage System Market Call for the Best of ICE and Virtual Emulation

The storage market has reached what Andy Grove once described as “…a strategic inflection point.”[1] This is the stage in the life of a business when its fundamentals are about to change.

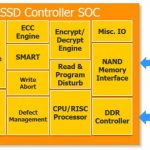

Changing fundamentals in the storage market—where solid state drives (SSD) are now at the forefront of multiple storage applications, from enterprise-based datacenters to PCs—create both great opportunities and significant challenges. Both arise from new technical innovations, emerging standards, and the desire to reach higher performance, increase storage capacities, and meet the needs of system-level infrastructures—all at a lower cost per device.

Delivering solutions for these challenges is where the Mentor Veloce® emulation platform demonstrates its strength as the hub of a sophisticated and comprehensive functional verification solution, one that gives design teams a catalyst to differentiate their enterprise storage devices that use SSD and NAND technologies.

Emulation can verify the hundreds of millions of gates and multiple protocols now seen in SSD controllers along with the complex software that drives them. It has the speed to support full-chip hardware/software co-verification so that the RTL IP and software specific to a particular SSD controller can be verified together.

Many protocols are still widely used in storage systems today (including SATA, SAS, PCIe, and NVMe). SSD device manufacturers need an emulation tool kit that offers solutions for them all. The Veloce emulation platform is without peer in this regard. Veloce provides in-circuit emulation (ICE) components (iSolve), virtual models (VirtuaLAB), and a unique new application (Veloce Deterministic ICE) to verify the design using the chosen host interface. Veloce also provides all the NAND, DDR, and NOR models used in conjunction with the SSD controller and software.

The Veloce iSolve library offers a full complement of hardware components to build a robust in-circuit emulation (ICE) flow, which is needed for many SoC verification scenarios.

The Veloce Deterministic ICE App complements and extends the usability of an ICE-based environment by delivering a repeatable and virtual debug flow. In addition to offline SW debug, the Veloce Deterministic ICE App enables advanced debug capabilities, power analysis, and coverage closure methodologies.

And the Veloce VirtuaLAB environment represents a new generation of verification solutions, delivering high-speed verification for multiple host protocols and memory devices, HW/SW system-level debug, power analysis, and system performance analysis.

SSD on ICE

When an SSD SoC design needs to be connected to real devices or custom hosts, the DUT (instantiated in the emulator) must be connected to physical hardware. In this case, teams use an emulation platform to set up the test environment by connecting the required peripherals/hosts through speed adapters/bridges that communicate with the SoC design mapped to the emulator. Software teams make use of the ICE environment for firmware development and to run real applications. Verification teams use it to exercise various test methods that interact with the interfaces to verify the functionality of their SoC designs. Most designs today have an embedded CPU, and ICE is used for testing the OS boot cycles as well. ICE is also used for connecting proprietary hardware or proprietary operating systems (OS) to reproduce issues found in a prototype or in a post-silicon lab setup.

Making ICE Repeatable

There are significant challenges in using ICE in certain scenarios, many of which are found in SSD verification. These include limitations on trace depth, long and iterative debug cycles, random and asynchronous events, and inflexibility in how the emulator can be deployed and shared. In addition, advanced verification techniques, such as power estimation, are not the best fit for a traditional ICE environment.

The Veloce Deterministic ICE App addresses these challenges by creating a virtual debug model of an ICE run. Significantly, it adds determinism to the debug environment by making the test run repeatable cycle-by-cycle. It does this by generating a replay database to re-run or repeat the same test without the need for hooking up to ICE targets.

Figure 3: Using the replay database without ICE targets.

While in replay mode, a user can choose to dump waveforms for an entire design for the duration of a test, or activate various other debug features (like live monitoring of important signals using streaming waveforms, enable displays, and protocol monitors), or do both. Having the ability to stop and inspect both data and full waveforms provides a rich debug platform and increased productivity in addition to efficient use of emulation resources.

The Veloce Deterministic ICE App makes the test environment portable to other emulators or teams located in different places as the test is no longer dependent on the external ICE hardware. It also gives the flexibility to use other Veloce Apps like power analysis, SW debug, coverage, and assertions that would not have been feasible without this technology.
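
The record-and-replay idea behind this kind of determinism can be sketched in a few lines of Python. This is only an illustration of the general technique, not how the Veloce Deterministic ICE App is implemented: the toy DUT function, the random "live target," and the list used as a replay database are all hypothetical stand-ins.

```python
import random


def dut_step(state, stimulus):
    """Toy DUT: the next state depends only on the current state and the input."""
    return (state * 31 + stimulus) % 65521


def live_target(rng):
    """Stand-in for a physical ICE target whose traffic is not repeatable."""
    return rng.randrange(256)


def record_run(cycles):
    """Run against the 'live' target, logging the input seen on every cycle."""
    rng = random.Random()  # unseeded on purpose: each live run differs
    state, replay_db, trace = 0, [], []
    for _ in range(cycles):
        stimulus = live_target(rng)
        replay_db.append(stimulus)  # capture everything that crosses the boundary
        state = dut_step(state, stimulus)
        trace.append(state)
    return replay_db, trace


def replay_run(replay_db):
    """Re-run the same test from the log alone, with no live target attached."""
    state, trace = 0, []
    for stimulus in replay_db:
        state = dut_step(state, stimulus)
        trace.append(state)
    return trace


replay_db, original_trace = record_run(cycles=1000)
replayed_trace = replay_run(replay_db)
print("replay matches original run:", replayed_trace == original_trace)
```

Because the replay consumes only the logged inputs, it can be repeated as often as needed and in any place the log can be copied to, which is the property that makes extra instrumentation such as full waveform dumps practical on a second pass.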

The Virtual Lab

Virtualization is the game changer for enterprise verification. Virtualization, which Mentor pioneered almost 10 years ago, allows emulators to be moved to centralized data centers to establish company-wide virtual platforms that support multiuser, software-driven SoC verification in a 24/7 enterprise server environment. Verification engineers can now access an emulator from their desktop, even from remote locations thousands of miles away from the emulator.

Peripherals are virtualized via the Veloce VirtuaLAB environment. VirtuaLAB provides the same host interfaces as iSolve, including NVMe, but instead of using external hardware that must be cabled to the emulator, VirtuaLAB uses software protocol models. And because VirtuaLAB uses the same IP as the ICE solutions, it delivers the same functionality as iSolve hardware peripherals.

Importantly, VirtuaLAB delivers emulation performance equivalent to that of ICE, making it an attractive alternative that is better suited to multiple users and applications.

VirtuaLAB allows an SSD controller designer to run and debug the same software applications in the VirtuaLAB environment as they would on the real hardware. VirtuaLAB also eliminates the need for hardware speed adapters/bridges, and supports third-party performance analysis and power analysis applications. This means designers can do all of the same statistics, analysis, and metrics that they would do on the real design, but it’s done pre-silicon.

The Best of Both Worlds

With the Veloce emulation platform, verification teams have access to the best of both worlds—ICE and virtual emulation—powered by the world’s most versatile and flexible emulation technology.

The Veloce emulation platform is radically recasting the emulation landscape, making emulation friendlier and more useful, while delivering all of the speed, visibility, and performance of traditional ICE-based emulation. The Veloce emulation platform is uniquely built with highly scalable hardware, an extensible operating system, proven virtual solutions, and a growing library of apps that solve application-specific verification challenges.

To learn more about the rise of SSD technology and Mentor’s emulation solutions for storage system designs, check out the new whitepapers Veloce Delivers Best of ICE and Virtual Emulation to the SSD Storage Market and Using the Veloce Deterministic ICE App for Advanced SoC Debug.

[1] Only the Paranoid Survive, Andy Grove, Crown Publishing Group, May 5, 2010