Just as a reminder, there are three semiconductor books in PDF format available for free on SemiWiki.com. The only hitch is that you must be a registered SemiWiki member to download them. If you are not currently a member please join here as my guest:

Continue reading “Free Pivotal Semiconductor Books!”

Dolphin Webinar “The proven recipe for uLP SoC”

Dolphin will hold a live webinar on November 15, 9:00 AM PST or November 22, 10:00 AM GMT. This webinar targets the SoC designers wanting to learn how to quickly implement ultra-low power (uLP) techniques, using proven recipes.

Continue reading “Dolphin Webinar “The proven recipe for uLP SoC””

Flexible IoT Wireless

There’s been quite a bit of debate about what is the “best” wireless option for the IoT, coming down usually in favor of there being no single best option. Applications are so widely varied that different solutions are needed to ideally fit different requirements. However, IoT economics require we settle on a limited set of options, of which Bluetooth-5 (BT5) and two 802.15.4 options, ZigBee and Thread, seem to be the front-runners. But suppose we didn’t have to compromise, or at least not as much as we think? I talked to Teppo Hemiä, CEO of Wirepas, at ARM TechCon, to understand how the Wirepas solution (Pino – Finnish for Stack) can help.

Teppo makes the argument that cellular support, while long-range, is uneconomical for the IoT at least as a primary path to edge nodes and still lacks coverage in some locations critical for IoT (such as basements). BT is economical but lacks scalability and range. He also argues that to be effective LPWAN requires building infrastructure which would ultimately rival that for cellular, making it uneconomic as a universal solution. Teppo asserts that a better solution should leverage a combination of cellular and decentralized mesh networks, which is what they aim to provide with Pino.

Pino is software only, running, he said, on top of any radio, and certainly on top of the physical layer of BT5 and 802.15.4. Wirepas replaces part of the wireless stack without the need to add hardware or OS support. Most importantly, there is no need to build new infrastructure; any Pino-enabled device added to the network can route and extend a network supporting thousands of devices per gateway, with gateways connecting ultimately to cellular or WiFi networks or through Ethernet.

Communication can be multi-hop across homogeneous devices with control based on local decision making. Operation parameters can be tuned to optimize bandwidth, latency, range and power consumption. For each device there can be multiple routing options and multiple eventual gateways for backhaul.
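To make the idea of multi-hop routing by local decisions concrete, here is a minimal sketch. It is not the Wirepas protocol, whose details were not discussed; it is a generic distance-vector style illustration in which every node looks only at the hop counts advertised by its direct neighbours and picks the neighbour closest to any gateway. The topology, the node names and the functions `choose_next_hops` and `route_to_gateway` are all hypothetical.

```python
from typing import Dict, List, Optional, Set


def choose_next_hops(links: Dict[str, List[str]],
                     gateways: Set[str]) -> Dict[str, Optional[str]]:
    """Let each node pick a next hop using only its neighbours' hop counts.

    Generic illustration of local decision making, not a real product's
    algorithm. `links` maps every node to its directly reachable neighbours.
    """
    cost = {node: (0 if node in gateways else float("inf")) for node in links}
    next_hop: Dict[str, Optional[str]] = {node: None for node in links}
    changed = True
    while changed:  # repeat until no node improves its choice
        changed = False
        for node, neighbours in links.items():
            if node in gateways:
                continue
            for neighbour in neighbours:
                if cost[neighbour] + 1 < cost[node]:
                    cost[node] = cost[neighbour] + 1
                    next_hop[node] = neighbour
                    changed = True
    return next_hop


def route_to_gateway(node: str, next_hop: Dict[str, Optional[str]],
                     gateways: Set[str]) -> Optional[List[str]]:
    """Follow the chosen next hops until a gateway is reached."""
    path = [node]
    while path[-1] not in gateways:
        hop = next_hop[path[-1]]
        if hop is None:  # node has no route to any gateway
            return None
        path.append(hop)
    return path


# Hypothetical six-node network with two gateways for backhaul.
LINKS = {
    "gw1": ["a"],
    "gw2": ["c"],
    "a": ["gw1", "b"],
    "b": ["a", "c", "d"],
    "c": ["b", "gw2"],
    "d": ["b"],
}
GATEWAYS = {"gw1", "gw2"}

if __name__ == "__main__":
    hops = choose_next_hops(LINKS, GATEWAYS)
    print(route_to_gateway("d", hops, GATEWAYS))
```

Node `b` in this toy network has two equally short ways out (via `a` or via `c`), which is the kind of redundancy the multiple routing options and multiple gateways are meant to provide.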

I had a couple of questions and I’m sure readers will have more. First up was power consumption. If ultra-low power devices also have to act as routers, won’t that drain batteries faster? Teppo said that standby consumption of a router can be less than 20 µA, much lower than ZigBee, allowing for a 5-year battery life, or 10 years with larger batteries. My second question (which occurred to me after the meeting) was around adaptability/customization. Yes, because the product is purely software you can in principle adapt it to any IoT niche requirement, but do you ultimately lose all your margin in high-cost customization? That is answered by their business strategy – going after large-scale installations where customization costs can be amortized over licensing fees at high volume.
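As a rough sanity check on the battery-life claim, the sketch below converts an average current draw into the battery capacity needed for a given lifetime. It assumes, optimistically, that the average draw equals the quoted standby figure of 20 µA; transmit bursts and battery self-discharge are ignored, so real capacity needs would be higher. The function names are mine, not Wirepas's.

```python
HOURS_PER_YEAR = 24 * 365


def required_capacity_mah(avg_current_ua: float, years: float) -> float:
    """Battery capacity (mAh) needed to supply avg_current_ua for `years`."""
    avg_current_ma = avg_current_ua / 1000.0
    return avg_current_ma * HOURS_PER_YEAR * years


def battery_life_years(capacity_mah: float, avg_current_ua: float) -> float:
    """Lifetime (years) of a battery of capacity_mah at avg_current_ua."""
    avg_current_ma = avg_current_ua / 1000.0
    return capacity_mah / avg_current_ma / HOURS_PER_YEAR


if __name__ == "__main__":
    # Assumption: average draw equals the 20 uA standby figure.
    for years in (5, 10):
        print(years, "years needs about",
              round(required_capacity_mah(20, years)), "mAh")
```

Under that assumption, 5 years works out to roughly 876 mAh and 10 years to roughly 1,752 mAh, which is consistent with needing a larger battery for the longer lifetime.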

Wirepas have chalked up an impressive win in the Oslo region (Norway) where they are deployed in 700k Aidon electricity meters. Also Nokeval has released a series of environmental sensors using the same wireless networking technology. Both examples are consistent with their business strategy – large-scale installations in applications like metering, sensors, lighting and beacons. Another application Teppo mentioned is as a replacement for RFID. He claims this solution can be cheaper than RFID, and obviously you would no longer need readers (or people to bring readers near the devices) because devices are already connected. He said (but didn’t elaborate) that this application is already in deployment.

The company is quite young – founded in 2010 though raising their first round of funding only last year and only recently opening their first office in the Bay Area (Palo Alto). Of course their concept is not entirely new. Work in wireless mesh network architectures is very active with several areas still in research. In fact, a lot of this work came out of and continues at the university in Tampere (Finland) where these guys are based. It is encouraging to see a commercial solution emerge and already deployed at a city scale. This can only help push further progress. You can learn more about Wirepas HERE.

Ford Seeks Own Path to Car Sharing and IoT

It’s hard to be a thought leader around the future of transportation when the entire market seems to be moving in one of three directions simultaneously: either ride hailing (Uber, Lyft), car sharing (Zipcar, Car2go) or automated driving (Google, Tesla). If you’re Ford Motor Company and you care about whether you are adding to or mitigating existing congestion with new driving options, it is even harder.

The goal of any new transportation solution ought to be to reduce the traffic load on existing highways. The consensus opinion is that the available infrastructure is finite and any new transportation solution should be intended to reduce the demand rather than increase it.

Car sharing clearly is adding to the vehicle load on existing infrastructure. That load can be expected to grow around the world with car sharing service penetration currently so low and with the wave of car sharing startups still rushing in. Experts have already sounded the alarm that car sharing services – as well as ride sharing suppliers – are drawing consumers away from public transportation.

While experts have suggested that car sharing and ride hailing services will diminish the demand for new cars, no less a voice than Boston Consulting Group has attributed to them a reduction in vehicle demand of only about 800,000 vehicles five years hence, out of a market of 100M vehicles sold (less than 1%). Clearly, ride hailing and car sharing, if anything, represent a net addition to the number of cars on the road.

What is emerging is a fragmentation of transportation which is akin to the fragmentation of content consumption taking place in the car. Radio broadcasters are concerned that in-car listening has been diminished by the increasing access to streaming services via connected smartphones. The simple reality is that people are still listening to their car radios, but they are divvying up their listening among different sources.

So it goes with transportation. Car sharing options on streets and parking garages create new use cases which are not mutually exclusive. Ford has sought a different path and that path is reflected in its acquisition of Chariot.

Unlike the majority of existing car sharing services offering smaller vehicles like Daimler’s Smart or BMW’s i3, Chariot makes use of Ford’s Transit Connect vans and focuses on pooling passengers largely though not exclusively to and from public transit stops – crowdsourcing its routes based on demand. In reality, many city planners have discovered that this is precisely the application served by Uber and Lyft. The difference is the focus on carpooling – though this is also served by Uber and Lyft.

This positions Ford in direct opposition to Uber and Lyft without posing a threat to the existing taxi fleet. Ford has threaded the transit needle and is poised to take this opening to cities beyond its San Francisco beachhead.

What is even more unique about the Chariot play by Ford though is that the vehicles being used come from Ford’s fleet division, for which car sharing is a natural extension. Car sharing initiatives belong with fleet applications. Ultimately, shared vehicles will be networked and share information – setting the stage for the connection of passenger cars in the future.

It is no coincidence that Ford’s year-old Go Drive initiative in London was shut down at the end of October. Ford has likely concluded that just adding car sharing to the streets of London or any other city around the world is only adding to the vehicle load on already stressed streets.

Ford Executive Chairman William Ford has been advocating for four or five years for connected and shared transportation. With the acquisition of Chariot, Ford Smart Mobility is starting to take shape while shaping a leadership position on the future of transportation for Ford.

At the same time, Ford is beginning to make progress on its wider vision of automated driving and a connected transportation world built upon IoT principles. Ford appointed Laura Merling to head autonomous vehicle development within the Smart Mobility Group. Merling brings to Ford an IoT background from her work at SAP and AT&T.

At the same time, Ford announced a long-term tie-up with IoT kingpin Blackberry. Blackberry says it is dedicating a team to work with Ford on expanding the use of Blackberry’s QNX Neutrino operating system, Certicom security technology, QNX hypervisor and QNX audio processing software.

Ford, like a growing roster of other auto makers, is looking to partner with cities to help resolve transportation challenges. Ford’s vision of shared transportation based on crowdsourced routing is clearly intended to reduce the vehicle load within city limits.

Of course, the Chariot-related strategy might also negatively impact vehicle sales, but Ford is clearly making the calculation that either this is not the case or, if so, it is worth the sacrifice. The Ford Focuses deployed in London for the Go Drive trial are gone, suggesting that passenger-vehicle-based car sharing is not in the cards for Ford – at least not at the moment.

The last missing piece of the strategic puzzle is FordPass. What appeared at introduction to be a payment, parking and pedestrian navigation platform has yet to pan out. One of the handful of original apps, FlightCar, is now defunct.

FordPass may yet help to knit Ford’s IoT vision together. My personal recommendation has been to build in navigation and related location resources and services – integrated with Ford’s existing in-vehicle app resources.

Ford has not been shy about grabbing headlines – particularly with its announced plans to be mass producing automated vehicles by 2021. But taking that announcement in the context of the subsequent Chariot acquisition paints a clearer picture of Ford’s goal to reduce the need for private vehicle ownership within cities – with the ultimate goal of automating that transportation and enabling connectivity between transportation assets and infrastructure.

How does the IoT get to 20 Billion?

Not long ago I was asked the question “How do we get to 20 billion IoT devices?” (Actually, I’ve been asked this question multiple times over the past 10+ years.) Great question! How, exactly, do we get to 20 billion (or 30 billion, or a trillion) IoT devices? We’re certainly not going to get there with wearable devices and other personal gadgets. Well, we might but it would be a stretch, and the probability is near-zero. Why do I say it’s not going to happen with wearables, etc.? Well, again, let’s do some simple calculations.

There are (roughly) 7 billion people on the planet. For argument’s sake, let’s say every single person on the planet gets fitted out with 3 wearable devices. There, we made our 20 billion number with some to spare. Done. We can all go home now. But not quite so fast. Only 4.5 billion people have access to working toilets, so I’m going to guess that they might buy a toilet before they buy a FitBit or an Apple Watch. I know I would. You would too. Only 3 billion people are on the internet, so that cuts down the number of possible devices quite a bit too. Suffice it to say that we’re not going to get to 20 billion IoT devices anytime soon if we base it on the number of people on the planet.

The number of people on the planet simply isn’t interesting. It’s a limited market. I’ve been saying this since 2004. I call it the Internet of People (IoP). It’s what gets all the press because let’s face it, it’s fun and sexy and you get to buy cool toys and play with them. But in a real sense, it is uninteresting.

Great, so now we know how we’re not going to get there. Helpful, I guess, but not really in the way you wanted. So let’s take a simple example, and do some more simple math.

Let’s say we want to put strain/crack/breakage sensors on every window of a skyscraper. Let’s make this skyscraper 300 feet tall (about 30 stories), and put, say, 1,000 windows per floor. That gives us 30,000 windows. (Remember that number, because I’m coming back to it.) Now let’s make 10 of those per city. We’re up to 300,000 windows. Let’s make that for 100 cities. 30,000,000 windows to put sensors on. And that’s just ONE IoT application on (a few) buildings. I can think of about a dozen more without breaking a sweat, each one requiring about the same number of sensors. Now can you see how we get to 20 billion devices? I sure can, and none of it has anything to do with consumers, wearable devices, or almost any of the other currently trendy “IoT” topics.

An IoT based on actual Things is virtually limitless. If you’re looking for true market potential, this is where the interesting things will happen. This is where the real money is to be made. It’s where the truly difficult problems will be solved. It’s where the really interesting work is.

Now, let’s go back to that number I told you to remember. 30,000 windows on that building. It’s one thing to set about the task of placing sensors on all of those windows. That job alone would take you 6 months or more (look up how long it takes to wash all the windows on a skyscraper). But what if someone had to go back every year and replace 7,500 batteries on those sensors? Or even 1,000. What?! You’d basically have to have a full-time crew of battery-changers working on every one of your buildings. Great for unemployment world-wide. Not great for the economics of owning the building. Again, the tricky part is going to be in removing the battery from the equation. Make that sensor a solar-powered, stick-on sensor and your window-washers can stick one on each window during one cleaning cycle and then … never touch them again.

This is how the IoT gets to 20 billion. It’s by connecting things to the internet, not just connecting people in more ways to the internet. My rule: If your IoT solution is based on the number of people on the planet, it’s a self-limiting solution. If it’s based on the number of things then it’s nearly limitless.
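The rule can be put in numbers with a short sketch. It uses only figures quoted above (7 billion people, 3 billion online, 3 devices each, 30,000 window sensors per building, a 20 billion target); the function names and the idea of counting "installations" are my own framing for illustration.

```python
TARGET_DEVICES = 20_000_000_000
PEOPLE_ON_PLANET = 7_000_000_000
PEOPLE_ONLINE = 3_000_000_000
DEVICES_PER_PERSON = 3
SENSORS_PER_BUILDING = 30_000


def people_based_ceiling(people: int, devices_per_person: int) -> int:
    """Upper bound on devices when the market is counted in people."""
    return people * devices_per_person


def installations_needed(target: int, sensors_per_installation: int) -> int:
    """Installations required to reach `target` devices (rounded up)."""
    return -(-target // sensors_per_installation)


if __name__ == "__main__":
    print("everyone on the planet:",
          people_based_ceiling(PEOPLE_ON_PLANET, DEVICES_PER_PERSON))
    print("people online only:",
          people_based_ceiling(PEOPLE_ONLINE, DEVICES_PER_PERSON))
    print("instrumented buildings needed:",
          installations_needed(TARGET_DEVICES, SENSORS_PER_BUILDING))
```

The people-based count only clears 20 billion if literally everyone on the planet is included, and drops to 9 billion for people who are online. The things-based count has no such ceiling: it grows with every additional building or application that gets instrumented.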

Also Read: What’s Really Going to Limit the IoT?

IP-SoC 2016: IP Innovation, Foundries, IoT and Security

The next IP-SoC conference will be held in Grenoble, France, on December 6-7, 2016, after Shanghai in September and Bangalore, India, in April. This will be the 20th edition of this unique IP-centric event, as well as the celebration of Design And Reuse’s 20th anniversary. Creating, in 1997, a company fully dedicated to reuse, based on a business model as innovative as an IP portal, was a real bet. Today D&R is the undisputed leader, and its IP portal counts about 400 IP vendors and thousands of IP product references. As an analyst, I must confess that I use the web portal when needed, and my guess is that many others, customers or vendors, do so as well.

The IP-SoC conference agenda is organized by topic, and we can identify the hottest topics of the year: IoT and security. This shouldn’t be surprising as, during 2016, the semiconductor industry has realized that the new business opportunities linked with IoT can only materialize if security can be assured. Did you know that the industry is still waiting for a security standard to be defined for IoT?

The IP-SoC conference takes place in Grenoble, France, and when talking about security we should remember that the smart card was invented in France. European companies are the market leaders for smart card ICs, and the program reflects this focus on security, with presentations from ARM, Inside Secure and Barco Silex dealing with different aspects of security for IoT.

Before launching a system, you need to design an IC integrating various IP, and you need to manufacture that IC. With presentations from GlobalFoundries, STMicroelectronics and TSMC, the foundry part of the IoT ecosystem is well covered! So is the IP side, with ARM (#1) and Synopsys (#2) presenting several papers, along with other IP vendors, analysts (including myself, but I will tell you more about my presentation in a separate blog) and Intel talking about IP from the customer side.

Last but not least, CEA-Leti is presenting a paper about an IoT platform and FD-SOI, and SemiWiki readers know about the strong involvement of CEA-Leti in FD-SOI technology. In fact, the research center is involved in the development of specific IP that can be used in IoT systems, like connectivity IP (RF), but CEA-Leti could also tell you about security, as this is one of their strong areas of expertise among many others: from High Performance Computing (HPC) to dedicated wireless network design or medical…

To register, just go HERE.

See you on Tuesday 6th December in Grenoble

From Eric Esteve from IPNEST

IP-SoC Agenda

• Foundry Corner and Innovation in Technology

“FDSOI production readiness and its roadmap beyond 22FDX” by Gerd Teepe, GlobalFoundries

“Innovative FSOI IP needed !!!” by Patrick Blouet, Collaborative program manager, STMicroelectronics

“Designing with TSMC Open Innovation Platform (OIP) Ecosystem” by Banchuan Cheong, Technical Manager, TSMC Europe

• From IP to IoT : Where is the IP world going?

“IP Explosion (1995-2010) and IP Paradox (2010-2025)” by Eric Esteve, IP Nest

“Back to the Future. The end of IoT” by Bill Finch, CAST

“How to jump start your ARM-based IoT chip for free” by Phil Burr, ARM

“HIGH PERFORMANCE SYNCHRONOUS INTERFACE FOR IOT AND WEARABLE APPLICATIONS” by Pratap Neelasheety, Synopsys

• Security a key challenge

“Right sizing the SoC security architecture for the new connected world” by Bart Stevens, Director of Product Management, Mobile and Networking Hardware Security Solutions, Inside Secure

“ARM technologies for IoT system’s security, from device to cloud” by Mike Eftimakis, ARM

“Setting up secure VPN connections with cryptography offloaded to your Altera SoC FPGA” by Pieter Willems, Barco Silex

• IoT Design Resources needed for a connected world

“L-IoT : a flexible and ultra low power IoT platform in FDSOI 28 nm” by Edith Beigne, CEA Leti

“ADAPTING UART FOR DISTRIBUTED MONITORING IN IOT APPLICATIONS: A DESIGNWARE IP CASE STUDY” by Sreenath Panganamala, Synopsys

“HIGH PERFORMANCE SYNCHRONOUS INTERFACE FOR IOT AND WEARABLE APPLICATIONS” by Pratap Neelasheety, Synopsys

• IP in SoC and Product

“IP Breadcrumbs Method for tracking IP versions in SOC Database” by Mukund Pai, Intel Corp

“A Knowledge Sharing Framework for Fabs, SoC Design Houses and IP Vendors” by Anne Meixner, The Engineers’ Daughter LLC

One chip and the MCU variant challenge disappears

Merchant microcontrollers are usually made available in a wide range of variants based on one architecture with different peripheral payloads and packaging options. A couple of companies, notably Cypress with their PSoC families and Silicon Labs with the EFM8 Laser Bee

Continue reading “One chip and the MCU variant challenge disappears”

Industry 4.0 and Manufacturing Processes

Industry 4.0, also known as the fourth industrial revolution, is the current trend toward automating manufacturing and using the IoT and other technologies to make industrial processes easier to carry out. It works hand in hand with the internet of things, cloud computing and cyber-physical computing.

Using Industry 4.0, we create what are called smart processes and smart computing.

According to Wikipedia, “Within the modular structured smart factories, cyber-physical systems monitor physical processes, create a virtual copy of the physical world and make decentralized decisions. Over the Internet of Things, cyber-physical systems communicate and cooperate with each other and with humans in real time, and via the Internet of Services, both internal and cross-organizational services are offered and used by participants of the value chain.”

The term Industry 4.0, or fourth industrial revolution, originated with a high-tech project of the German government. The project promoted computerized manufacturing, set out the reasons for adopting it, and described how Industry 4.0 would play out in related areas such as logistics and supply.

Industry 4.0 changes the way we work. It makes our work smarter and faster and in most cases will save a great deal of money for the factories and businesses that embrace it. Those that do not embrace the fourth industrial revolution will be hard pressed to keep up with those that have introduced smarter factories. Better manufacturing, better use of space and better safety results are just a few of the things that Industry 4.0 provides.

For those who embrace Industry 4.0, the result can be a faster, better, more profitable business. What’s not to love about that?

This is the second in a series. To see #1 in the series, please use this link: Short History of the Fourth Industrial Revolution

Or Check out our website at www.internetofthingsrecruiting.com

Also Read: Manufacturing Singularity is Coming!

Optimizing Prototype Debug

In the spectrum of functional verification platforms – software-based simulation, emulation and FPGA-based prototyping – it is generally agreed that speed shoots up by orders of magnitude going left to right, but ease of debug drops as performance rises, and setup time increases rapidly, from close to nothing for simulation to days or weeks for prototypes.

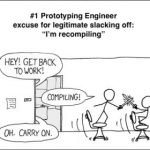

All of this adds up to a real headache for debug in prototyping. While FPGAs provide some level of built-in logic analysis, these devices are designed primarily for optimal mission-mode performance, not for broad and deep debug. Which means that typically you have to instrument the design RTL with logic to bring out the signals you want to probe. So if you find a bug in one run, then guess exactly the right signals to probe and when to trigger to find the root-cause, re-instrument and re-run, you have managed to track down the problem with just one re-spin. More commonly you’re going to re-spin multiple times to converge on the right probe and trigger set. A Wilson Research Group/Mentor Graphics survey found prototyping teams took 4-6 re-spins on average to converge on a root cause for a bug. And you’re burning a lot of unproductive time on each re-spin. Or not if you feel the need to polish your sword fighting skills from the perilous heights of your trusty office chair 😎

I’m going to talk about Mentor’s solution to this problem, but first I should touch on something that had me puzzled for a while. Mentor doesn’t have their own prototyping box (that I know of), so why are they providing solutions in this space? In fact, they offer a software product called Certus for instrumenting FPGA-based designs, which they acquired from Tektronix around 2013. Tek had been working closely with the Dini Group (among others) and that relationship continues with Mentor. Since the Dini Group is very well known for building high-performance multi-FPGA boards, frequently used in prototyping, the pieces came together for me. Mentor, through Certus, offers a solution for instrumenting custom multi-FPGA prototype boards, a commonly used platform today and likely into the future, especially in performance-driven applications.

Now back to the debug problem. A prototype for a large design may be split across multiple FPGAs, so a root-cause for a problem appearing in one FPGA could be in another FPGA. Then you have to worry about overhead for tracing signals. The most obvious approach, to add logic and wiring to probe each signal you might want to observe, quickly gets out of hand. Certus has a more efficient method. You can map up to 64K signals into an observability network with low overhead (~1 LUT per signal instrumented). The observability network is connected to a capture station which can capture traces from up to 1024 of these signals.

Which 1024 signals are captured can be reconfigured without needing to re-instrument and recompile the design. And you can have up to 255 capture stations per FPGA, so you can have a very high level of configurable visibility across all FPGAs on your prototype board, without need for recompiles. Capture stations store traces in ring buffers (controlled by user-defined triggers) which are efficiently compressed to store traces in on-FPGA memory. This approach can store up to seconds of trace-depth, up to 15 seconds in one example cited where bus activity related to an Ethernet MAC was being traced.
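The capture-station idea can be modelled in a few lines of software. The sketch below is an assumption-laden illustration, not Certus's implementation: a station watches a wide vector of observable signals, stores only a reconfigurable subset in a ring buffer, and freezes a fixed number of cycles after a user-defined trigger fires. Certus's actual compression scheme was not described, so simple run-length encoding stands in for it. The class and function names are hypothetical.

```python
from collections import deque
from typing import Callable, Deque, List, Optional, Sequence, Tuple

Sample = Tuple[int, ...]


class CaptureStation:
    """Toy model: ring-buffer capture of a selectable subset of signals."""

    def __init__(self, depth: int, selected: Sequence[int],
                 trigger: Callable[[Sample], bool], post_trigger: int):
        if not 0 <= post_trigger < depth:
            raise ValueError("post_trigger must be in [0, depth)")
        self.buffer: Deque[Sample] = deque(maxlen=depth)  # oldest dropped
        self.selected = list(selected)
        self.trigger = trigger
        self.post_trigger = post_trigger
        self.remaining: Optional[int] = None  # None until trigger fires

    def select(self, selected: Sequence[int]) -> None:
        """Change which signals are stored; the design itself is untouched."""
        self.selected = list(selected)

    def clock(self, observable: Sequence[int]) -> bool:
        """Capture one cycle. Returns False once the buffer is frozen."""
        if self.remaining == 0:
            return False
        sample = tuple(observable[i] for i in self.selected)
        self.buffer.append(sample)
        if self.remaining is None:
            if self.trigger(sample):
                self.remaining = self.post_trigger
        else:
            self.remaining -= 1
        return self.remaining != 0


def run_length_encode(trace: Sequence[Sample]) -> List[Tuple[Sample, int]]:
    """Stand-in compression: collapse runs of identical samples."""
    encoded: List[Tuple[Sample, int]] = []
    for sample in trace:
        if encoded and encoded[-1][0] == sample:
            encoded[-1] = (sample, encoded[-1][1] + 1)
        else:
            encoded.append((sample, 1))
    return encoded


if __name__ == "__main__":
    # Observable vector per cycle: (error_flag, cycle_counter, idle_signal).
    station = CaptureStation(depth=4, selected=[0, 1],
                             trigger=lambda s: s[0] == 1, post_trigger=2)
    for cycle in range(10):
        station.clock((1 if cycle == 5 else 0, cycle, 0))
    print(list(station.buffer))
```

Calling `select` with a different list of indices changes what the next run records without touching the instrumented design, which is the software analogue of reconfiguring a capture station rather than recompiling.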

Or you can stream trace data to external memory. In another example cited, all activity was traced on an AXI bus in an A7-processor-based design during Linux boot, into a 4GB DDR memory, though Steve Bailey noted that there is some expected blanking during streaming, unless you are willing to enable pausing the design clocks during streaming. However, even when there is blanking, correct ordering/causality between signals will be preserved. In fact this is generally ensured, across all clock domains and across all FPGAs. Where clocks are derived from the same crystal, signals within those domains are guaranteed to be correlated. If they are from different crystals, they may be off in correlation by one or two cycles but hey, Mentor can’t control relative drift in crystals. All of this correlation is ensured by Certus.

Visualizer debug pulls all of this together for detailed analysis. You drill down and trace back, as you do in debug. Maybe you decide you need to look at some traces you didn’t capture, so you need a new prototyping run, but now you probably don’t need to re-instrument. You just reconfigure some capture stations to add those traces. Of course it is possible that even with this flexibility you might have to re-instrument in some cases. But on-the-ground experience indicates that the original average 4-6 rebuilds drops to 0-1 rebuilds when using Certus.

Steve also mentioned that Certus supports reading out register states, though he acknowledges this requires stopping clocks and is quite slow. Still, he pointed out that once in prototyping, many users want to stay there as long as possible and will take an occasional slow operation in exchange for faster and deeper co-debug between hardware and software. You can learn more about Mentor support for FPGA prototyping debug HERE.

Final SemiWiki Book Signing at REUSE 2016!

It has been a hectic year for the semiconductor industry so now is a good time to reflect on how we got to where we are today in hopes of better understanding where we are going tomorrow.

Given the importance of semiconductor IP (the $32B ARM acquisition by SoftBank for example) I would strongly suggest attending the REUSE 2016 event on December 1st at the Computer History Museum in Mountain View, CA. And yes, I will be giving away copies of our book “Mobile Unleashed: The History of ARM” in the exhibit hall. I will have 100 copies of the book and more than 200 people are expected so you do the math.

Mobile Unleashed is the origin story of technology super heroes: the creators and founders of ARM, the company that is responsible for the processors found inside 95% of the world’s mobile devices today. This is also the evolution story of how three companies – Apple, Samsung, and Qualcomm – put ARM technology in the hands of billions of people through smartphones, tablets, music players, and more.

Here is the REUSE 2016 abstract; I will post the presentation abstracts when they are available. Be sure to take a look at the exhibitor list, because this is a great opportunity to meet the people behind the ever-important building blocks of modern semiconductor design.

REUSE 2016 is the first of an annual conference and trade show to bring together the semiconductor IP supply chain and its customers for a full day of everything to do with semiconductor IP. Hosted in the heart of Silicon Valley at the world famous Computer History Museum, there could not be a more appropriate venue for a day focused on the hottest segment of the semiconductor industry.

The day will begin with a keynote speech on trends driving IP reuse followed by multiple tracks of technical and business-oriented talks by a diverse set of companies, large and small.

Customers may engage with suppliers in a spacious exhibit area to learn about new technology and solutions that are available.

Capping off the evening will be a social in the exhibit hall with drinks and food provided to allow everyone the opportunity to relax and meet new friends, and even tour the museum itself.

The book signing will start at 5:00pm in the exhibit hall and based on previous events it takes less than one hour to sign 100 books so get there early if you want a print copy. If you would prefer to have a PDF version of the book you can download it HERE. Only registered SemiWiki members can access this wiki so if you are not already a member please join as my guest:

https://www.legacy.semiwiki.com/forum/register.php

If you haven’t been to the Computer History Museum lately this is your chance:

The Computer History Museum is a nonprofit organization with a four-decade history as the world’s leading institution exploring the history of computing and its ongoing impact on society. The Museum is dedicated to the preservation and celebration of computer history and is home to the largest international collection of computing artifacts in the world, encompassing computer hardware, software, documentation, ephemera, photographs, oral histories, and moving images.

The Museum brings computer history to life through large-scale exhibits, an acclaimed speaker series, a dynamic website, docent-led tours and an award-winning education program.

ABOUT REUSE 2016

A tradeshow and conference focused exclusively on semiconductor IP, REUSE 2016 will be held annually with its inaugural event Thursday, December 1, 2016, at the Computer History Museum in Mountain View, CA, from 9am-8pm. For event details, free participant registration, and prospective exhibitor information, please visit www.reuse2016.com. Stay informed of the latest news and updates using #REUSE2016.