Skipping over debates about what exactly changed hands in this transaction, what interests me is the technical motivation, since I’m familiar with solutions at both companies. Of course, I can construct my own high-level rationalization, but I wanted to hear from the insiders, so I pestered Vic Kulkarni (VP and Chief Strategist) and João Geada (Chief Technologist and previously CTO at CLKDA) into explaining their view of the background and benefits.

Ansys already has a strong position in integrity/reliability for electronic systems, from chip design up through the package and the board level. This is important, for example, in design for total system reliability and regulatory compliance in ADAS systems under challenging constraints such as widely varying temperature environments (in front of a windscreen in Death Valley, or in an unheated enclosure in Barrow, Alaska). Particularly at the chip level, through their big data/elastic compute approach to fine-grained multi-physics analytics rather than margin-based analysis, they assert that they can offer higher confidence in meeting integrity and reliability goals in reduced area with faster design turn-times. What they presented at DAC seems to bear this out.

This is an important advance, but as always in semiconductor design, the goal-posts continue to move. Vic and João said that customers are finding increasing difficulty in getting to timing signoff at 16nm and below, often starting with 10k+ STA violations, which can take 3-4 weeks to close. They are managing process variation factors (OCV) through use of the latest standards for timing in Liberty models, but there are also dynamic factors to consider. At these feature sizes, operating voltage can drop to 0.6-0.7V, but threshold voltages don’t drop as much, so sensitivity to power noise increases and that can lead to intermittent timing failures in what would otherwise appear to be safe paths. Equally, clock jitter as a result of power noise can cause intermittent failures.
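To put a rough number on that sensitivity argument, here is a back-of-the-envelope sketch using the textbook alpha-power-law delay model, in which gate delay scales as Vdd / (Vdd - Vth)^alpha. This is my own illustration, not anything taken from Ansys or CLKDA tools. The threshold voltage (0.35V), the alpha exponent (1.3), the 50mV droop and the function names are all assumed values chosen only to show the trend.

```python
def gate_delay(vdd, vth=0.35, alpha=1.3, k=1.0):
    """Relative gate delay from the alpha-power law: k * vdd / (vdd - vth)**alpha.

    vth, alpha and k are illustrative assumptions, not data for any real process.
    """
    if vdd <= vth:
        raise ValueError("supply voltage must exceed the threshold voltage")
    return k * vdd / (vdd - vth) ** alpha


def delay_increase_pct(vdd, droop, **model):
    """Percentage delay increase when the local supply sags by `droop` volts."""
    nominal = gate_delay(vdd, **model)
    sagged = gate_delay(vdd - droop, **model)
    return 100.0 * (sagged - nominal) / nominal


# Same 50mV droop (an assumed value), applied at three nominal supplies.
for vdd in (1.0, 0.8, 0.65):
    pct = delay_increase_pct(vdd, droop=0.05)
    print(f"Vdd = {vdd:.2f} V: 50 mV droop slows the gate by {pct:.1f}%")
```

Under these assumptions the same absolute droop costs several times more delay at 0.65V than at 1.0V, which is the intuition behind paths that look safe at nominal supply failing intermittently under power noise.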

Of course you could follow the standard path and over-design the power distribution network (PDN) to margin for worst possible cases across the die. But that over-design becomes increasingly expensive and uneconomic, especially at these aggressive nodes, so you settle for a compromise between area and risk in which you hope you covered the most likely corner cases. Based on presentations at DAC this year, Ansys has already demonstrated that they can replace this uncertain tradeoff in related problems with multi-physics analysis, delivering low-risk integrity/reliability across the die with significantly less over-design than the traditional approach.

Which brings us to the reason Ansys wanted the CLKDA FX tool-suite. To address these dynamic timing problems, they needed to fold a high-accuracy timer into their multi-physics analysis to enable analysis of timing side-by-side with dynamic voltage drop (DvD). The point here is to analyze locally across the design to guide local adjustment of the PDN where appropriate. In an area where there’s enough slack, maybe you’re not too worried if timing sometimes stretches out a bit, so there’s no need to upsize the local PDN. Where timing is tight, you upsize enough to ensure that DvD will not cause the path to fail. Similarly, multi-physics analysis will highlight where clock jitter sensitivity could cause failures and may require mitigation in the local clock distribution.
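The slack-versus-droop reasoning above can be sketched as a toy triage. To be clear, this is a hypothetical illustration of the idea, not how the Ansys or FX tools work: the `PathRegion` record, the linear delay-sensitivity figure (percent delay per mV of droop) and all the numbers are my assumptions.

```python
from dataclasses import dataclass


@dataclass
class PathRegion:
    """Hypothetical summary of the worst timing path in one region of the die."""
    name: str
    slack_ps: float         # timing slack at nominal supply
    delay_ps: float         # path delay at nominal supply
    droop_mv: float         # peak local dynamic voltage drop (DvD)
    sens_pct_per_mv: float  # assumed delay increase (%) per mV of droop


def slack_under_droop(region):
    """Slack remaining after the path is slowed by the local droop (linear model)."""
    extra_delay = region.delay_ps * region.sens_pct_per_mv / 100.0 * region.droop_mv
    return region.slack_ps - extra_delay


def regions_needing_pdn_upsize(regions, guard_ps=0.0):
    """Names of regions whose droop-adjusted slack falls below the guard band."""
    return [r.name for r in regions if slack_under_droop(r) < guard_ps]


# Made-up example: a tight path with modest droop and a relaxed path with more droop.
regions = [
    PathRegion("cpu_alu", slack_ps=20.0, delay_ps=500.0, droop_mv=40.0, sens_pct_per_mv=0.2),
    PathRegion("io_ctrl", slack_ps=150.0, delay_ps=400.0, droop_mv=60.0, sens_pct_per_mv=0.2),
]
print(regions_needing_pdn_upsize(regions))
```

In this made-up case the region with the larger droop is left alone because it has slack to spare, while the tight region gets flagged even though its droop is smaller. That is the point of analyzing timing and DvD side-by-side rather than margining the whole PDN for the worst droop.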

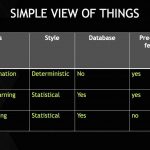

Getting this right requires an accurate timer, better than a graph-based STA, closer to Spice-level accuracy but much faster than Monte-Carlo Spice (MC Spice) so it can be effective on large designs. That of course has been the value-proposition of the FX family for many years – a technology which can propagate statistical arrival times and true waveform shapes while also maintaining correct distribution, yet run 100X faster than MC Spice. Since FX already has a bunch of customers, I have to believe that’s not just a marketing pitch 😎.

So where does this go next? I’m sure there will be continuing integration and optimization with the SeaHawk and Chip-Package-System flow. João also pointed out a number of additional opportunities. Ansys’ strength is multi-physics analysis, so there are opportunities to cross other factors in analysis: variability and timing, or aging and timing, for example. CLKDA has tilted at the variability windmill before, lobbying for a transistor-level static timing analysis approach, for example to better model the influence of accumulating non-Gaussian distributions along a path. Perhaps their concept will start to gain traction in this new platform. Their work in signoff for timing aging is also very intriguing and I think is likely to attract significant interest in high-reliability/long-lifetime applications (automotive maybe?).

So now you know why Ansys acquired CLKDA. For me this seems like an even better home for the FX technology. You can learn more about ANSYS solutions, including the FX products, HERE.