Here are the Top 10 highlights from the recent TSMC 2018 Technology Symposium, held in Santa Clara CA. A couple of years ago, TSMC acknowledged the unique requirements of 4 different market segments, which has since guided their process development strategy — Mobile, High-Performance Computing (HPC), Automotive, and IoT. Many of the highlights described below are in the context of a specific platform.

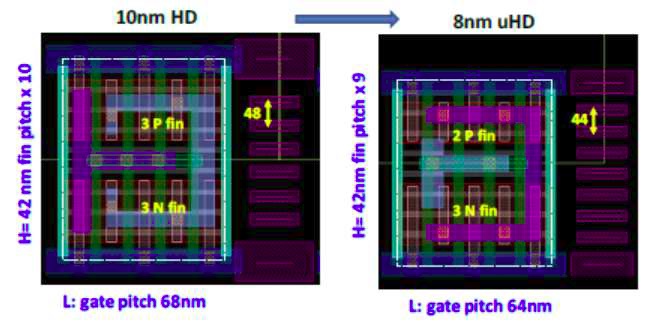

Samsung 10nm 8nm and 7nm at VLSIT

I got a tip sheet today for the upcoming 2018 Symposia on VLSI Technology & Circuits, to be held June 19th through 21st in Honolulu, Hawaii. The tip sheet includes some interesting information on Samsung’s 10nm, 8nm and 7nm processes.

IP Vendor Tuning of Your SoC A Practice for Design Success

On April 17, Mick Posner, Director of Product Marketing for IP Subsystems, Hardening & IP Kit solutions, held a webinar entitled ‘Getting more from your IP Vendor, IP Tuned to Your SoC’. This brought back memories of days past, when choosing, integrating and validating IP was a challenge: prudence limited the choices, and necessity dictated over-design or a schedule push-out to make sure nothing was overlooked. Even then, there were setbacks followed by workarounds, waivers, fixes or ‘bug-as-a-feature’ specifications.

Luckily, we are in a golden age of design where success is more the norm, and where discipline and methodology complement the availability of pre-designed, pre-verified IP that interoperates and simplifies the task of subsystem and system verification.

The webinar addressed silicon design trends, approaches to faster IP integration, IP software development acceleration, IP validation and bring-up, and DesignWare IP from architecture to silicon.

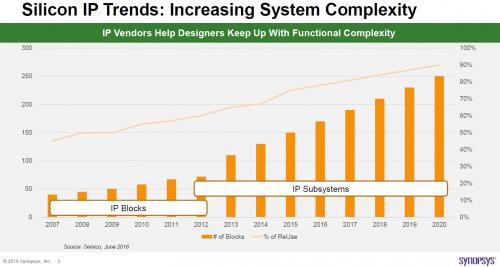

Increased system complexity is moving silicon design towards greater reuse of pre-designed and validated IP blocks and subsystems. This limits verification to ensuring correct functionality across the boundaries of the IP interfaces. With less exposure to implementation variability and more consistency, both verification time and functional risk are reduced.

An IP subsystem is far more complex than one realizes at first. For example, a subsystem may contain more than a controller and a PHY: it also needs unique logic to address clocking, reset, interrupts, DFT, security, and boot sequences, to name a few. Addressing all of this takes more than an RTL designer; you also need expertise in layout, backend and test.

In addition, system-level issues must be addressed, such as interface customization, interoperability, test and FPGA bring-up, and interface simulation debug. Additional challenges include adequate throughput and latency, and a timely schedule for tape-out and silicon bring-up.

This is where your IP provider can increase the chances of success, allowing fast integration of IP into your SoC, accelerating IP software development, and bringing the experience to support comprehensive silicon bring-up.

Starting with the initial critical decisions in architecting the SoC, where low-power strategies, performance and area can be gauged and optimized, and continuing through to leveraging the protocol expertise of the Synopsys IP R&D team, you are able to define the SoC correctly from the start and implement it with the least delay and the highest confidence. Custom IP subsystems tailor functionality to your requirements and accelerate your time-to-market with reduced design risk.

A comprehensive set of resources, test suites, monitors, synthesis scripts and integration documentation combines to make integration of the IP subsystem as seamless as it can be. Case studies ranged from a high-end mobile application processor design, with a high number of IPs and complex power-domain shutoff, to IP hardening with performance and size optimization, involving floorplanning, clock balancing and timing analysis. Hardening services covering signal and power integrity on low-power, high-performance mixed-signal designs are another example of the breadth and depth of available assistance. Five days of SoC integration support come with the typical IP hardening effort.

IP Hardening Benefits

• Integration: Enables faster PHY and/or Subsystem integration

• Floorplan: Explore high-performance design to find an optimal solution for a given PHY

• Placement: Deal with a high power density in reduced core power areas

• CTS: Leverage access to custom cell development to meet performance targets

• Routing: Experience to allocate higher metal layers for critical signals for higher performance

DesignWare DDR PHY hardening projects in Wireless, Networking, Video, and Automotive applications have been supported in a wide range of process technology nodes.

Accelerating IP software development is another important aspect of support: DesignWare prototyping kits enable a reference design and reference software stack, delivered as out-of-the-box hardware or software-only kits. Expert support in silicon bring-up is another aspect of the comprehensive Synopsys offerings.

The Synopsys Global IP Development and Support Organization includes 2400+ R&D engineers, 220+ Application engineers, and 30 Program Managers. These all accomplish the task of getting more from the IP Vendor, combining the customer vision and Synopsys expertise from Architecture to Silicon.

Register to view the webinar replay

Peering Over the Timing Edge

I wrote recently about a yield problem which mobile vendors have been finding for devices built in advanced technologies. This was a performance issue (the devices worked fine at lower clock speeds), pointing to a discrepancy in some devices between predicted and observed timing. These were experienced design teams, using state of the art timing flows and libraries and yet performance yield was 10% lower than expected. This should raise a concern – are we approaching a point where current approximations for timing are becoming insufficient for signoff?

When we started analyzing timing, back in the days of really small circuits, we used SPICE. Designs grew, SPICE was no longer practical for full-circuit analysis, so we switched to cell-based design, with cells characterized for timing (ultimately in the Liberty format), with which we could do static timing analysis (STA) and drive timing-based synthesis and physical design. Those timing models have evolved through multiple versions as processes have shrunk and manufacturing variabilities have become more important, but we’ve still managed to retain the concept of cell-based timing models in some form, albeit more complex and requiring more corners to fully capture process variations. Factors which cannot be captured at the cell-level, such as power noise, have been managed by setting global margins and ensuring that IR-drop (in the power-noise case) does not stray outside those margins.

But the non-cell-based factors are becoming more troublesome at advanced processes where nominal voltages are much closer to threshold. Now noise, both in power and ground, can squeeze actual potential differences below threshold, pushing delays and slew rates well outside the nominal characterization range. Compensating by further increasing global margins becomes prohibitively expensive in power routing, decaps and ultimately total die cost. Which means we now must deal with instance-based and use-case-sensitive modeling; cell-based timing models, complemented by margins, are not sufficiently accurate for cost-effective design.
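To get a feel for why supply noise hurts so much more near threshold, here is a rough numerical sketch. It uses the textbook alpha-power delay approximation (delay proportional to V / (V - Vt)^alpha), which is my choice for illustration and not something taken from any vendor flow. The threshold voltage, the alpha exponent and the nominal supplies are all made-up values.

```python
def relative_delay(vdd, vt=0.30, alpha=1.3, vdd_nominal=0.50):
    """Gate delay at vdd, relative to the delay at vdd_nominal.

    Uses the alpha-power approximation delay ~ V / (V - Vt)**alpha.
    vt, alpha and vdd_nominal are illustrative assumptions, not process data.
    The approximation only applies above threshold (V > Vt).
    """
    if vdd <= vt or vdd_nominal <= vt:
        raise ValueError("alpha-power model only applies above threshold")

    def delay(v):
        return v / (v - vt) ** alpha

    return delay(vdd) / delay(vdd_nominal)


# Same supply droop, applied to a near-threshold supply and to a 1.0V supply.
for droop_mv in (0, 25, 50, 100):
    low = relative_delay(0.50 - droop_mv / 1000)
    high = relative_delay(1.00 - droop_mv / 1000, vdd_nominal=1.00)
    print(f"{droop_mv:3d} mV droop: {low:.2f}x at 0.5V nominal, {high:.2f}x at 1.0V nominal")
```

With these assumed numbers, the same droop costs far more delay at the low-voltage operating point than at the higher one, which is the reason a single global margin becomes so expensive.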

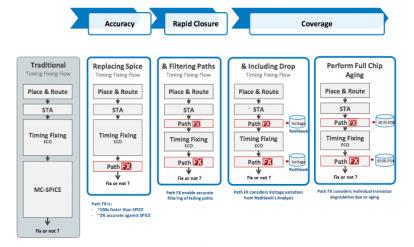

I doubt this is a cliff-edge in timing. The Liberty style of modeling (with many corners) still works well for huge designs on the most advanced processes. But we’re starting to see evidence, as I mentioned in that earlier blog, that accuracy is deteriorating noticeably (a 10% performance yield hit) at the fringes. Over time the library solution will hopefully improve further, but that’s not a near-term fix. Monte-Carlo SPICE (MC-SPICE) can be used today but, even with massive parallelism, is limited to hundreds of paths, which is not very compelling on today’s mega-designs with potentially tens of thousands of near-critical paths.

Of course, there is another solution, one I’ve written about before – the FX platform from ANSYS. FX doesn’t look at Liberty models or faked waveforms. It does transistor-level modeling of the circuit with analog waveforms, Miller capacitances and the like. It’s not SPICE but it’s close – within 2% accuracy when compared with SPICE on one 7nm example operating at 435mV (sub-threshold). And it runs much faster than MC-SPICE, able to analyze up to 10K paths per hour.

Better yet, FX can model both voltage and ground noise accurately with real use-case waveforms. It’s integrated with the RedHawk product family – RedHawk and RedHawk-SC – for detailed IR-drop analysis and DvD-aware clock-jitter analysis. It can also do aging analysis, looking at per-transistor degradation (increase in Vt) as a result of use-case-dependent electrical stress.

Clearly, even at this performance, FX doesn’t replace STA for bulk timing on SoCs. But when it comes to signoff for paths close to critical, higher accuracy will be necessary, with higher throughput than you can get with MC-SPICE. Why not just stick to running SPICE on a few hundred paths? If STA+Liberty were sufficiently accurate you could, provided you knew for certain that no other paths were near-critical. But as outlined above, the standard flow isn’t sufficiently accurate near-critical. So you need to check a larger selection of paths. Remember that what should be checked can’t be judged solely by how close to critical STA says the path is; you must also consider use-cases which may promote big IR-drops and ground bounces. ANSYS mentions a customer example: a path thought to be just fine, which proved to be a real problem in a realistic use-case.
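The point about not selecting paths by STA slack alone can be made concrete with a toy filter. This is only a sketch of the idea, not how FX or any signoff tool picks paths. The `Path` fields, the linear picoseconds-per-millivolt derate and all the numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Path:
    name: str
    sta_slack_ps: float       # slack reported by STA (hypothetical field)
    worst_ir_drop_mv: float   # worst use-case IR-drop along the path (hypothetical field)


def select_for_signoff(paths, slack_limit_ps=50.0, ps_per_mv=1.0):
    """Return names of paths worth a transistor-level re-check, worst first.

    Each path's STA slack is reduced by a crude linear penalty for IR-drop
    (ps_per_mv is an assumed derate, not a characterized value). Paths whose
    derated slack falls below slack_limit_ps are selected.
    """
    derated = [(p.sta_slack_ps - ps_per_mv * p.worst_ir_drop_mv, p.name) for p in paths]
    return [name for slack, name in sorted(derated) if slack < slack_limit_ps]


paths = [
    Path("cpu/alu_0", sta_slack_ps=20.0, worst_ir_drop_mv=10.0),
    Path("gpu/shader_3", sta_slack_ps=120.0, worst_ir_drop_mv=90.0),
    Path("io/uart_1", sta_slack_ps=200.0, worst_ir_drop_mv=5.0),
]
print(select_for_signoff(paths))
```

In this made-up data set, `gpu/shader_3` looks comfortable on STA slack alone but is still selected once its large use-case IR-drop is taken into account, which is the kind of surprise the customer example describes.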

Over the long-term, where might this go? No doubt the STA/library model approach will continue to improve but there has to be some upper bound to acceptable model complexity given bounded return in accuracy for those models. That said, there is too big an investment in cell-based design and STA-based signoff methods to abandon this methodology. Perhaps there will be a shift over time to using Liberty-style models and STA for the bulk of the timing heavy-lifting, complemented by SPICE or FX-like methods for signoff near the margins.

You can watch a detailed webinar on the FX platform HERE.

ISO 26262: Automotive electronics safety gets an update in 2018

In the field of automotive electronics, the year 2011 was a long time ago. So, it is about time that the initial ISO 26262 specification that was adopted back then gets an update. The latest version will be known as ISO26262:2018 and will expand the scope of the original to cover more types of vehicles. It will add an entire section on semiconductors. This is good because with the existing standard there were many questions about best practices for semiconductor design.

The original specification only applied to passenger vehicles with a maximum gross weight of 3,500 kilograms. The weight limit is being eliminated and the spec adds other vehicles such as trucks, buses, and even motorcycles. The 2011 version applied to automotive electronic systems, but had little to say about semiconductors or the tools used to develop them. Very often this created confusion for chip developers and even more so for IP providers. ISO 26262 was really a system level specification, and sub-components could not be certified. Individual IC’s became what is known as a Safety Element Out of Context (SEooC). I have attended many technical panels where the participants tried to parse the implications of the 2011 standard in this regard. Hopefully the 2018 standard will add clarity here.

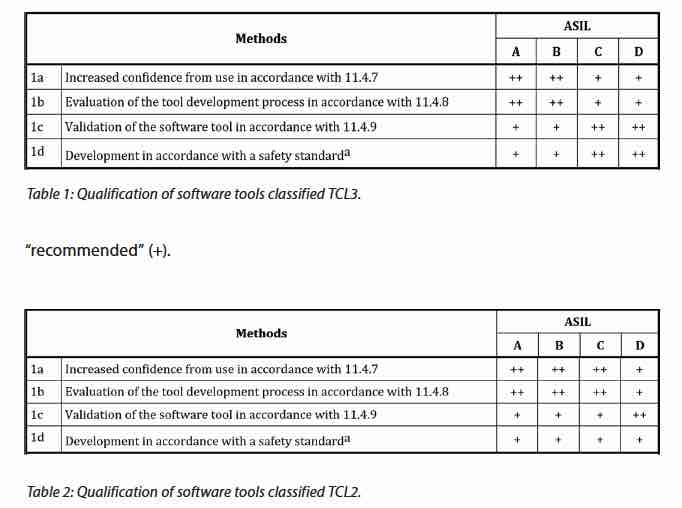

It is impossible to talk about semiconductor quality if we do not include the tools used to design and verify them. The 2018 version enumerates four different methods that can be applied to help qualify tools. The level needed depends on the characteristics of the tool as used in the flow. So called Tool Confidence Levels come from a determination of two inputs. The first is Tool Error Detection (TD1-TD3), with 1 being a high degree of confidence that the tool can detect an error. The second is Tool Impact (TI1-2), with TI1 being no chance that a tool malfunction can lead to a design failure. There is a matrix that combines these two factors into the Tool Confidence Level (TCL1-TCL3). When looking at the precautions necessary for a given automotive function, the ASIL level of the function has to be factored in.
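The matrix is small enough to write down as a lookup. The sketch below is a minimal illustration, assuming the commonly cited mapping from the tool-qualification part of the standard: TI1 always yields TCL1, and with TI2 the TCL number follows the TD number. The function name is made up for illustration, and the mapping should be checked against the published standard before relying on it.

```python
def tool_confidence_level(ti: int, td: int) -> int:
    """Return the TCL (1-3) for a Tool Impact class and a Tool error Detection class.

    Assumed mapping (verify against the standard):
      TI1, any TD -> TCL1
      TI2, TD1    -> TCL1
      TI2, TD2    -> TCL2
      TI2, TD3    -> TCL3
    """
    if ti not in (1, 2):
        raise ValueError("Tool Impact must be 1 or 2")
    if td not in (1, 2, 3):
        raise ValueError("Tool error Detection must be 1, 2 or 3")
    if ti == 1:
        # A tool whose malfunction cannot affect the design needs no extra confidence.
        return 1
    return td


# Print the whole matrix.
for ti in (1, 2):
    for td in (1, 2, 3):
        print(f"TI{ti} / TD{td} -> TCL{tool_confidence_level(ti, td)}")
```

The resulting TCL is then combined with the ASIL of the function to decide how much qualification effort is recommended.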

Mentor has put together a useful white paper on the updates in ISO26262:2018. Included in this are some tables that help map out the effort recommended on tool qualification, given the inputs of TCL and ASIL. See the figure below from their white paper.

It’s important to acknowledge that the tools used to design electronics for automotive use are an essential link in the quality chain. Over the decades that I have been involved with design tools, there has been an evolution from what you might call the early frontier days, to what is now a fairly sophisticated approach to tool and tool result quality. This has largely happened through pressure from customers. Of course tool vendors have also been willing participants in this activity; witness the ISO 9000 push years back. Through all of this, tool customers have learned and adapted in order to get their job done – namely to produce reliable chips.

Better tool qualification helps everyone, so having ISO26262 with its automotive origins providing the catalyst is not a bad thing. Maybe now that chips are being used in applications where failure could be life threatening, it is more than just a good thing. Tool vendors like Mentor have and will continue to invest in improving their tools to meet the new higher standards. It’s good to see them engaging in the public conversation around the ISO 26262 standard, and the upcoming related standards for fully autonomous vehicles. If you want to give their white paper a full read through it can be found on their website.

2018 Women in Engineering Achievement Award

Having spent my entire thirty-plus-year career in semiconductors and design enablement, I have seen quite a change in diversity. When I first started, I remember thinking that height and weight were the only diversity here in Silicon Valley. My wife really noticed it when she attended her first Design Automation Conference in 1985 and was shocked to see that she was one of the only women in a sea of men.

That changed quite quickly during the fabless semiconductor transformation when the foundries and the design enablement ecosystem became an integral part of the semiconductor industry. This brought all sorts of diversity and this diversity is clearly responsible for the amazing success we have experienced over the last 30 years, absolutely.

The Marie R. Pistilli Women in Electronic Design Award (named after the late Marie R. Pistilli, organizer of DAC) is an annual honor that recognizes individuals who have contributed to the advancement of women in EDA. I am quite honored to say that I have worked with quite a few of the recipients including this year’s winner Anne Cirkel:

Past Recipients of the Marie R. Pistilli Award

- 54th DAC, 2017 – Janet Olson, Synopsys, Inc.

- 53rd DAC, 2016 – Soha Hassoun, Tufts University

- 52nd DAC, 2015 – Margaret Martonosi, Princeton University

- 51st DAC, 2014 – Diana Marculescu, Carnegie Mellon University

- 50th DAC, 2013 – Nanette Collins, Nanette V. Collins Marketing, and Public Relations

- 49th DAC, 2012 – Dr. Belle W. Y. Wei, San Jose State University

- 48th DAC, 2011 – Limor Fix, Intel Corp.

- 47th DAC, 2010 – Mar Hershenson, Magma Design Automation, Inc.

- 46th DAC, 2009 – Telle Whitney, Anita Borg Institute

- 45th DAC, 2008 – Louise Trevillyan, IBM Research Center

- 44th DAC, 2007 – Jan Willis, Cadence

- 43rd DAC, 2006 – Ellen Yoffa, IBM

- 42nd DAC, 2005 – Kathryn Kranen, Jasper Design Automation, Inc.

- 41st DAC, 2004 – Mary Jane Irwin, Penn State Univ.

- 40th DAC, 2003 – Karen Bartleson, Synopsys, Inc.

- 39th DAC, 2002 – Ann Rincon, AMI Semiconductor

- 38th DAC, 2001 – Deidre Hanford, Synopsys, Inc.

- 37th DAC, 2000 – Penny Herscher, Previously Cadence

Anne Cirkel to Receive Marie R. Pistilli Women in Engineering Achievement Award

Past DAC General Chair and Technology Marketing Executive honored for her contributions to the EDA industry

In response to the award, Dr. Wally Rhines, CEO and President of Mentor, a Siemens Business, said, “I’m delighted that Anne’s contributions to our industry are being honored with this prestigious award. She has been a tireless advocate within Mentor for the design automation community and its flagship conference. As one of our most senior female executives, she is a superb role model for the leadership generations to come.”

Exactly!

Before joining Mentor 18+ years ago, Anne started her career in EDA with Viewlogic and Analogy. The Award will be presented during the 55th DAC General Session Awards on Monday, June 25, 2018 at Moscone West, San Francisco, CA. I hope to see you there.

Congratulations Anne!

About DAC

The Design Automation Conference (DAC) is recognized as the premier event for the design of electronic circuits and systems, and for electronic design automation (EDA) and silicon solutions. A diverse worldwide community representing more than 1,000 organizations attends each year, ranging from system designers and architects, logic and circuit designers, validation engineers, CAD managers, senior managers and executives to researchers and academicians from leading universities. Close to 60 technical sessions selected by a committee of electronic design experts offer information on recent developments and trends, management practices and new products, methodologies and technologies. A highlight of DAC is its exhibition and suite area with approximately 200 of the leading and emerging EDA, silicon, intellectual property (IP) and design services providers. The conference is sponsored by the Association for Computing Machinery’s Special Interest Group on Design Automation (ACM SIGDA), the Electronic Systems Design Alliance (ESDA), and the Institute of Electrical and Electronics Engineers’ Council on Electronic Design Automation (IEEE CEDA).

Virtuoso at CDNLive – A Press Briefing With Yuval Shay

At CDNLive Silicon Valley 2018, I talked with Yuval Shay, Director of Product Management of Cadence Custom IC & PCB Group to scope out some more details on the recent Virtuoso product refresh announced earlier in the morning by Cadence Sr. VP & GM of the same group, Tom Beckley.

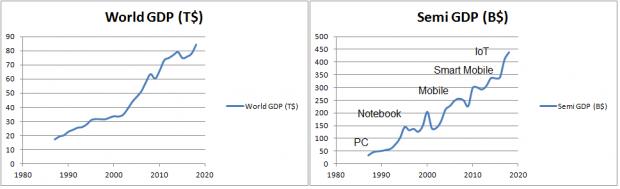

Tom shared his view on enabling the fourth industrial revolution (4IR). He illustrated the challenges faced by both system and semiconductor companies, such as the growing need for complex integration across chip, package and board; the use of advanced technologies; designing systems that are constantly connected to the cloud through the emerging 5G standard; and the changes in the automotive industry with autonomous cars.

Elaborating further, Tom talked about System Design Enablement (SDE), the effort his organization is putting into it, and how Virtuoso has been enhanced to help address the challenges of designing complex systems.

Yuval provided some Virtuoso press-release materials, which I also used as a reference for my interview. In this release, dubbed ICADVM18.1, Cadence revamped the Virtuoso design platform with updates that deliver productivity improvements across multiple segments.

Commenting on the announced Virtuoso upgrades, which include features supporting the 5nm process, Yuval first described the increasing complexity of system integration and the effort to drive down system costs. One example is an ADAS application with LIDAR technology, combining laser diodes, MEMS, photonics, analog sensors and advanced-node SoCs, all integrated into a single system to provide a sub-$100 LIDAR system. Not only do leading providers need state-of-the-art IC tools and solutions across multiple technologies; customers also need system solutions.

Tell me about Virtuoso System Design Platform, and what’s new in ICADVM18.1?

We first introduced Virtuoso System Design Platform (VSDP) in May 2017. It was an immediate hit; the rate of adoption is amazing. Only a few months after the announcement, customers are already using it in production. It showed us how strong the need is.

What VSDP really does is allow a “golden” schematic, captured in Virtuoso Schematic Editor, to describe the system design (SIP) and drive downstream applications. From the Virtuoso schematic, the environment can drive the Cadence SIP tool for layout implementation, automating the layout-versus-schematic (LVS) verification flow. At the same time, analysis done on the SIP layout, using Sigrity 3D-EM extraction to generate parasitic models, can be back-annotated into Virtuoso. Virtuoso automatically manages the simulation environment, eliminating the highly manual and error-prone process of integrating system-level layout parasitic models back into the IC designer’s flow. All of this is done while maintaining a single “golden” schematic for both LVS and verification.

New in Virtuoso ICADVM18.1 is the ability to edit the SIP layout using Virtuoso Layout Suite, editing each die in the SIP layout and managing multiple process design kits (PDKs) simultaneously.

Could you comment about concurrent layout access which was mentioned in the keynote?

Custom IC design depends on a hierarchical design methodology, where each layout hierarchy (cellview) can be edited by only a single user at a time. For example, if a sign-off review turns up thousands of DRC violations that need to be fixed at the top level, the ability to distribute the job across multiple users is limited. We developed a new technology which allows multiple users to edit the same cellview/hierarchy, with selective integration of edits back into the master cellview. The cellview owner can accept or reject edits from other users, and add notes in case edits are rejected. This new technology allows non-destructive editing of the layout artwork, with no original data being touched, just like editing a picture on your smartphone.

Could you share a recent example of an ML application in the custom design space?

Design integrity and robustness need to be guaranteed for custom analog IP, while the design is completed in a timely manner. Virtuoso’s Electrically Aware Design (EAD) environment enables users to perform parasitic extraction and EM analysis on a partial layout. We use machine learning (ML) to generate a unique technology database with a highly trained model for fast, highly accurate parasitic extraction. Incremental RC extraction can be done within seconds as the design is being developed. For example, we have a customer using this on a top-level design with thousands of nets, to check coupling between noisy and sensitive nets to prevent crosstalk. This can be done in-design within seconds. In this new release of Virtuoso, we enhanced our layout environment to be driven by simulation data, introducing for the first time “Simulation Driven Layout” to mitigate EM problems in-design.

What are the top three challenges in the custom design space?

First, in the analog design space, there is a need for increased automation in order to shorten turn-around time. For example, people aspire to do top-down design and floorplanning, and to explore ways to reduce die size. In reality, for a given project, there is no time to explore multiple floorplans and run multiple routing jobs to see if a new plan will converge. So designers usually go with prior design knowledge and an existing floorplan, or make a best educated guess. We developed a new capability in Virtuoso to efficiently plan the design hierarchies, floorplan the design and analyze it for congestion and convergence, enabling multiple floorplanning exercises in a short amount of time. This allows designers to find opportunities for die-size reduction.

Second, custom design gets extremely challenging at advanced process nodes. Layout effort keeps increasing, to the point where there are not enough physical designers (PDs) to complete the jobs. We advocate a smarter methodology to better manage the sheer number of design rules, EM, layer density requirements and design variation. Virtuoso has the environment to address all of these. The 3X speed-up cited in the keynote is conservative; in reality it could be even higher.

Third, design is no longer an isolated effort. Designers need to care about packaging and its effects on their design, and need the ability to manage multiple ICs and the complexity of advanced packages, including the associated critical effects (for example, parasitic and magnetic effects on the PCB that affect the IC design). The question is how to capture those effects early, taking the SiP design into the analysis. We enhanced our Virtuoso System Design Platform to help with those challenges, and we are collaborating with EDA connection partners to help customers with the solution.

Can you highlight a recent customer engagement experience?

At this CDNLive we had Bosch presenting the results of our collaboration on Simulation-Driven Layout. As you can imagine, Bosch cares greatly about design reliability and wanted a solution to mitigate electromigration problems without dragging down overall productivity. We worked together on this and came up with a solution we are proud of. The new environment allows users to examine and plan their layout based on both current flow and current density. In addition, when using Virtuoso Wire Editor, wires will be sized automatically to address current density requirements.
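As an aside, the arithmetic behind sizing a wire for current density is simple to sketch. The snippet below is only an illustration of the general idea and says nothing about how Virtuoso actually implements it. It assumes the electromigration limit is given as a maximum current per micron of wire width for a layer, and the function name, limit, minimum width and grid values are all hypothetical.

```python
def min_wire_width_nm(current_ua: int, limit_ua_per_um: int,
                      min_width_nm: int = 50, grid_nm: int = 5) -> int:
    """Smallest legal wire width (nm) that keeps current density within the limit.

    current_ua:       current the wire must carry, in microamps
    limit_ua_per_um:  assumed EM limit, in microamps per micron of width
    min_width_nm:     assumed minimum drawable width for the layer
    grid_nm:          assumed manufacturing grid the width must snap to
    Integer arithmetic is used throughout to avoid floating-point rounding.
    """
    if current_ua < 0 or limit_ua_per_um <= 0:
        raise ValueError("current must be >= 0 and limit must be > 0")
    # Width needed for the current alone, rounded up to a whole nanometer.
    required_nm = -(-(current_ua * 1000) // limit_ua_per_um)
    # Never go below the minimum width rule for the layer.
    width_nm = max(required_nm, min_width_nm)
    # Snap up to the next manufacturing grid point.
    return -(-width_nm // grid_nm) * grid_nm


for current in (100, 1011, 3000):
    print(f"{current} uA -> {min_wire_width_nm(current, limit_ua_per_um=2000)} nm")
```

A real flow would take the currents from simulation and the limits from the PDK, per layer and temperature.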

At the end of the interview, I asked whether cloud availability is expected to expand, similar to the OrCAD Entrepreneur case. He tactfully mentioned that Cadence currently offers hosted design solutions through its (design) services, and that cloud readiness is being considered across Cadence.

ARM IoT Mbed Update

Normally press release events with ARM tend to be somewhat arms-length – a canned pitch followed by limited time for Q&A. Through a still unexplained calendar glitch I missed a scheduled call for a recent announcement. To make up I had the pleasure of a 1-on-1 with Hima Mukkamala, GM of IoT cloud services at ARM. Hima is a heavy hitter, previously head of engineering and a senior exec for GE Digital’s Predix platform. So while the rest of us pronounce on the IoT, he’s actually lived it, particularly the IIoT.

The topic was an announcement ARM made recently at the Hanover Messe, on their Mbed solutions for the IoT, from edge devices to the cloud. Hima walked me through the standard pitch of course but with much more opportunity for me to ask questions. Thanks to which I now have (I think) a better understanding of the strategy and advantages of the solution.

This starts with the very rapid growth ARM is seeing– they’re anticipating 100B devices shipped from 2017 to 2021, equal to the number shipped in the preceding 26 years. Since they should have better insight than most into what’s coming out over the coming 3 years, this clearly supports exponential growth. Which makes management and security a big potential headache. I’ve talked before about security and the advantages of diversity, but at this scale I can also see the appeal of putting all your eggs in one basket and watching that basket very carefully.

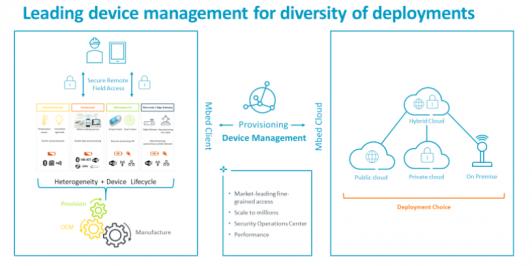

You should know the essentials of the solution by now – Mbed OS running on the edge device and at the other end, Mbed cloud providing the platform for management, provisioning and authorizations. ARM naturally points to the ubiquity of their hardware footprint and their strength in building partnerships as essential in delivering solutions to a very wide variety of needs in the inevitably diverse IoT space.

That said, I was encouraged to hear that this isn’t a monopolistic play, not just because we need healthy competition or because it would anyway be impractical but also because I’m a big believer that focused targets lead more quickly to tangible results. So Mbed OS is free and open (at least to run on Cortex-M class cores) and provides for the security and communication features you would build around such designs. Meanwhile in Mbed cloud, Hima acknowledged that the customer landscape is already very mixed; they’re already running multiple apps at this level so Mbed cloud has to coexist, bridging gaps where needed but otherwise letting customer solutions call the shots. He noted a partnership they have established with IBM Watson IoT as an example where they are collaborating in a broad solution.

On the focus topic, Hima’s pitch opened with what I had assumed was a generic IoT applications slide, but he clarified these are ARM’s IoT solution focus areas. As one example he cited work they have done with Alphatronics to support building a solution for waste recycling management in Belgium. You’ve probably heard of a similar concept before; trash containers at centralized disposal sites signal when they are empty, near full or full, enabling trash disposal trucks to optimize pickup. This reduces transportation costs and staffing at these trash facilities and also checks that only approved customers are using the facility.

Another example Hima offered was in utilities. This area bristles with regulations. One aspect of those restrictions, at least today, is that management cannot be in a public cloud (I can imagine this being a concern in many IoT applications, whether or not constrained by regulations). To support this kind of need, ARM is now offering Mbed on Premises, a way to support Mbed Cloud, with all the connectivity, security, certification etc. management, in your private cloud.

ARM also announced an out-of-the-box Mbed solution they call Mbed Client Lite, for constrained applications such as pallet management (in the logistics focus area). Part of the advantage of this being a canned solution is that it comes pre-packaged with channel security and remote secure update. Solutions for pallet management and other high-volume, low-cost uses are exactly where providers will be tempted to cut security corners unless the right solutions are handed to them on a plate. ARM have also partnered with a couple of established certificate authority/identity service providers, so there’s hope that eventually IoT security may be wrested under control (and that day can’t come too soon).

It’s a good story – device-to-cloud integration allowing for private as well as public clouds, out-of-the-box security for low-end devices, broad partnerships as always with ARM and application examples already in deployment. How well competitive solutions (e.g. RISC-V-based devices) can work in this environment is less clear to me, but I guess we’ll find out. You can learn more about the Mbed solutions HERE.

Hard IP for an embedded FPGA

As Moore’s Law enables increased integration, the diversity of functionality in SoC designs has grown. Design teams are seeking to utilize outside technical expertise in key functional areas, and to accelerate their productivity by re-using existing designs that others have developed. The Intellectual Property (IP) industry has emerged, where IP vendors offer design cores to SoC teams for integration. Specifically, “hard IP” refers to a core with a physical implementation, which has been optimized for performance, power, and area in a specific target process technology. Correspondingly, Moore’s Law also implies that the amount of programmable logic available in an embedded FPGA has expanded. It would make sense that SoC design teams leveraging the unique capabilities of an eFPGA would likewise be seeking to incorporate/re-use existing IP designs.

I’ve been having coffee periodically with the team at Flex Logix, to try to stay current on the rapidly expanding eFPGA industry segment. I asked Geoff Tate, CEO, and Cheng Wang, Senior VP Engineering, whether there is indeed a confluence of eFPGA customers and IP providers.

“Absolutely,” Geoff replied. “Our customers are seeking the expertise of outside sources of IP, such as for functional accelerator cores.” (Refer to an earlier article on how eFPGA technology can provide a significant speedup on specific algorithms, compared to a software implementation on a microprocessor core – link.)

I asked Cheng, “How does the IP provider develop the implementation? What models are delivered to the end customer?” He replied, “The IP for an embedded FPGA is developed using the same model compilation flow as the customer, with only a single switch setting to differentiate the physical implementation.”

Figure 1. Illustration of the connectivity of a “hard IP” design in a customer eFPGA design.

“Like the customer design, the IP is based on a set of eFPGA tiles,” Cheng highlighted. “The physical distinction for the IP design is that the input and output pins of the IP are designed for internal connections, not to the top-level drivers/receivers.”

(For more on tile design, and the allocation of internal connections and receiver/driver circuits at the tile edge for primary inputs/outputs, here’s a link to an earlier article.)

“Other than the floorplan pin location assignment restriction, the compilation of the IP design is the same,” Cheng said. “This is indeed a ‘hard IP’ approach. The end customer receives the eFPGA bitstream for the IP to merge with the personalization of their design. PPA optimizations for the IP have been completed by the IP provider, as reflected in the delivered personalization. Within the compilation environment, the customer has no visibility into the black box physical model.”

Figure 2. eFPGA compilation platform — the IP model is delivered as a physical black box, with no internal visibility.

“What IP models are delivered?” I asked. “In addition to the final bitstream, the IP compilation flow automatically generates the physical abstract and the timing abstract for the customer. We collaborate with the IP provider on a power model,” Cheng replied.

“Although we’ve discussed an IP vendor delivering models to an end customer, this same methodology certainly applies to any customer seeking the productivity benefits of re-using an existing optimized design block,” Geoff added. “They would create an IP library for internal use in the same manner.”

The Flex Logix team refers to their hard IP flow as the design of an eFPGA “virtual array”. The figure below highlights a recent test chip of a 7×7 tile configuration incorporating a 4×4 IP virtual array.

Figure 3. Illustration of a 4×4 virtual array integrated into a 7×7 tile eFPGA design.
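
To get a feel for the tile budget in that example, here is a minimal Python sketch that reserves a 4×4 block inside a 7×7 tile grid and counts what is left for the customer's own logic. This is only an illustration of the arithmetic: the function names, the "I"/"C" labels, and the corner placement of the virtual array are assumptions made for this sketch, not part of the Flex Logix compilation flow, and the article does not say where the virtual array sits in the test chip.

```python
def place_virtual_array(grid_rows, grid_cols, ip_rows, ip_cols, origin=(0, 0)):
    """Return a tile grid with 'I' for tiles reserved by the hard IP
    (the virtual array) and 'C' for tiles left to the customer design.

    The origin (top-left tile of the IP block) is a hypothetical choice.
    """
    r0, c0 = origin
    fits = (
        r0 >= 0 and c0 >= 0
        and r0 + ip_rows <= grid_rows
        and c0 + ip_cols <= grid_cols
    )
    if not fits:
        raise ValueError("virtual array does not fit inside the tile grid")
    return [
        [
            "I" if r0 <= r < r0 + ip_rows and c0 <= c < c0 + ip_cols else "C"
            for c in range(grid_cols)
        ]
        for r in range(grid_rows)
    ]


def count_tiles(grid, label):
    """Count the tiles in the grid carrying the given label."""
    return sum(row.count(label) for row in grid)


# 7x7 tile eFPGA with a 4x4 virtual array placed (by assumption) in a corner.
grid = place_virtual_array(7, 7, 4, 4, origin=(0, 0))
ip_tiles = count_tiles(grid, "I")
customer_tiles = count_tiles(grid, "C")

for row in grid:
    print(" ".join(row))
print(f"IP tiles: {ip_tiles}, customer tiles: {customer_tiles}")
```

With these numbers the IP occupies 16 of the 49 tiles, leaving 33 for the rest of the design, regardless of where the block is placed.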

The IC industry has rapidly adopted the technical (and economic) model of hard IP integration and re-use, to augment the expertise of the design team and boost their productivity. Thus, it is a natural extension that this same model is also being adopted as part of the increasing capacities of embedded programmable logic.

For more information on the Flex Logix virtual array methodology for hard IP design, please follow this link.

-chipguy

imec and Cadence on 3nm

One of the more frequent questions I get, “What is next after FinFETs?”, is finally getting answered. Thankfully I am surrounded by experts in the process technology field, including Scotten Jones of IC Knowledge. I am also surrounded by design enablement experts, so I really am the man in the middle. That brings us to a discussion between Rod Metcalfe, product management group director in the Digital & Signoff Group at Cadence; Peter Debacker, R&D team leader at imec; and SemiWiki on the 3nm test chip announcement.

Continue reading “imec and Cadence on 3nm”