My usual practice when investing is to look at startup companies and try to understand if the market they are looking to serve has a significant opportunity for a new and disruptive technology. This piece compiles the ideas that I used to form an investment thesis in Portable Stimulus. Once collected, I often share ideas to get feedback. Please feel free to offer up suggestions and critique. Thanks – Jim

Continue reading “Portable Stimulus enables new design and verification methodologies”

Honey I Shrunk the EDA TAM

The “20 Questions with Wally Rhines” series continues

Throughout the history of the EDA industry, pricing models have caused discontinuities in the way the industry operates. For a variety of competitive reasons, individual companies have developed ways to change the pricing model in an attempt to secure competitive advantage. Following are some of the most memorable:

- Valid Logic (1988) – Remove the premium for “global float” and allow all licenses to “float” around the world. This one sounds pretty reasonable in today’s computing server environment, but in 1988 software licenses were “node locked”. You purchased a design software license for one workstation and it could “float” only within a reasonable distance, say around a single corporate site. Valid Logic offered its customers free float of the license to any of the customer’s worldwide locations through a program called “ACCESS”. It was a big hit. It also destroyed a significant portion of the total available market for EDA software, more than half by some estimates, as other EDA companies followed suit.

- AVANTI Subscription Licensing – In the mid-1990s, AVANTI introduced a three-year time-based licensing model. I am told by Daniel Nenni it was driven by AVANTI’s observation that customers purchased perpetual licenses that lasted for about three years (two Moore’s Law process nodes) before they had to upgrade and buy new perpetual licenses (although Red Herring magazine reported that Gerry told them he got the idea from car leasing plans). At this time, the industry model was a combination of perpetual licenses plus ongoing maintenance. The annual maintenance fee was 15-20% of the cost of the perpetual license, similar to what most of the non-EDA software industry offers today, except for the more recent introduction of SaaS (software as a service) models. The perpetual license cost was high and the revenue was all recognized “up front” because the customer now owned the software. The entire EDA industry followed AVANTI’s three-year subscription example like lemmings because of pressure from customers. It also had an attraction for the EDA companies since it offered a continuing revenue stream, and EDA companies were worried about what would happen when perpetual license sales slowed to a smaller percentage of their revenue and maintenance revenue became the primary ongoing revenue source. The problem with the three-year subscription model was that competitive discounting quickly drove the subscription price down to about the same level as the previous annual maintenance cost. Now the customers were receiving product plus maintenance for the same cost as they previously paid just for maintenance. A good deal for the customers but questionable for the EDA companies.

- Cadence FAM (Flexible Access Model) – This was introduced in the late 1990s. It was essentially a three-year “all you can eat” approach to software from a single EDA company. It was a hit with the Cadence sales force and the customers, but it caused lots of disruption in the industry, although I don’t think other companies offered anything similar. It led to internal management disruption at Cadence. At the Cadence earnings call on April 20, 1999, the company announced that “the company has run into a ‘one to two quarter delay in absorption of 0.18 micron design tools’ among semiconductor makers.” Many in the EDA industry translated this as: “A large number of our best customers have purchased three-year FAM licenses so we can’t collect additional revenue from them for a while”.

- Cadence Re-Mix – Once again, Cadence set the pace of innovation in pricing with the introduction of “Re-Mix”. A customer specifies the mix of software products desired on the date of contract renewal but, if the customer chooses to change the relative mix of one product versus another, he can do so within the limits of the original contract value. Up until this time, customers had to guess what their mix of product needs would be for the next three years. Typically, they had to buy twice as much software as they would use on an ongoing basis because they couldn’t predict the mix of products they would need. The result: by some estimates, this re-mix approach eliminated as much as half of the EDA TAM because customers no longer had to predict their future mix of needs or buy licenses sufficient for peak usage.

- Foundry IP libraries – Until the late 1990s, silicon foundries like TSMC left the entire design process to their customers. TSMC received a verified GDSII file from the customer and they checked it and then generated photomasks, fabricated wafers and shipped parts to the customer. Companies like Artisan were in the business of creating physical libraries of standard cell blocks that were checked for correctness and modeling by being fabricated on a test wafer by the foundry. They were then sold to customers doing the designs to speed design of the standard, undifferentiated parts of their chips. Wouldn’t it be great if customers could have access to the entire Artisan library during the design phase and then only be charged based upon the number of cells that were actually used in their designs multiplied by the number of chips produced?

Artisan thought so. And they convinced TSMC to adopt the model, providing software to trace the usage of Artisan cells. Artisan consequently developed a stable stream of royalty revenue from TSMC, making them an attractive acquisition for ARM. I’m told that the deal was not so good for TSMC. High volume customers negotiated discounts to wafer pricing with TSMC and the standard cell libraries became part of those negotiations. As a result, the additional money that TSMC expected to receive from their customers by charging them for the use of standard cells turned out to be elusive. The bundled price of wafers plus photomasks plus IP, etc. was included in the wafer price and any incremental revenue for the cell libraries was hard to find.

How can I be so cavalier about this whole topic when, during the last twenty-five years as CEO of Mentor, my company was subjected to so much cost and revenue pressure by these model changes? The reason can be seen in my previous blog EDA Cost and Pricing on October 12th. The revenue of the EDA industry has continued to be 2% of semiconductor revenue for more than twenty years. These model changes were simply part of the way that discounts were provided to customers so that the EDA companies could stay on the learning curve and give semiconductor companies a reduced cost per transistor for design software. If the pricing models hadn’t changed, we would have had to provide those discounts in some other form because the EDA industry had to reduce its software price per transistor at the same rate that the semiconductor industry reduced its revenue per transistor.
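The arithmetic behind that last point can be sketched in a few lines of Python. Only the 2% share comes from the text; the revenue, transistor count and growth rates below are hypothetical placeholders chosen to make the trend visible, not industry data.

```python
# Illustrative sketch: if EDA revenue stays a fixed share of semiconductor
# revenue, EDA revenue per transistor must fall at exactly the same rate as
# semiconductor revenue per transistor.

EDA_SHARE = 0.02  # from the text: EDA revenue is about 2% of semiconductor revenue


def per_transistor(semi_revenue, transistors_shipped, eda_share=EDA_SHARE):
    """Return (semiconductor, EDA) revenue per transistor."""
    semi_per_t = semi_revenue / transistors_shipped
    eda_per_t = eda_share * semi_revenue / transistors_shipped
    return semi_per_t, eda_per_t


# Hypothetical inputs: revenue grows 5% a year, transistors shipped grow 40%.
revenue, transistors = 100e9, 1e18
history = []
for _ in range(4):
    history.append(per_transistor(revenue, transistors))
    revenue *= 1.05
    transistors *= 1.40

for year, (semi_per_t, eda_per_t) in enumerate(history):
    print(f"year {year}: semi ${semi_per_t:.3e}/transistor, "
          f"EDA ${eda_per_t:.3e}/transistor, "
          f"ratio {eda_per_t / semi_per_t:.2%}")
```

Both per-transistor figures shrink every year while their ratio stays at 2%, which is the sense in which the pricing-model changes acted as a discount mechanism rather than a shift in the industry's share.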

Musk the Magician and Data Monetizer

Tesla Motors CEO Elon Musk pulled a rabbit out of his hat last month making thousands of cars vanish and converting reports of production and delivery hell into market leadership for premium sedan deliveries. Following SEC legal action and a podcast where he appeared to be drinking scotch and smoking marijuana and after surrendering – temporarily – the chairmanship of the company, Musk stands vindicated by empty factory parking lots and a spiking stock price.

But that spiking (upward) stock price soon took another nose dive as Musk mocked the SEC’s decision and investors fled again. What endured, though, was the report from Tesla’s subsequent shareholders’ meeting showing that the Model 3 is now the best-selling mid-sized premium sedan in the U.S.

As amazing and impressive as this achievement was, it does not obscure the deeper issue of Tesla’s pursuit of automated driving. While it appears, now, that Musk misled investors and the public generally regarding the orchestration of a move to take the company private, he continues to play fast and loose with the automated driving capabilities of his cars.

According to a report in Bloomberg News, Musk sent an email indicating that Tesla needed 100 more employees to join an internal testing program linked to rolling out the full self-driving capability. Bloomberg reported that Musk wrote: “any worker who buys a Tesla and agrees to share 300-400 hours of driving feedback with the company’s Autopilot team by the end of next year won’t have to pay for full self-driving – an $8,000 saving – or for a premium interior, normally costing $5,000.”

“This is being offered on a first come, first served basis,” Bloomberg reports Musk writing in his email. “Given the excitement around this, I expect it will probably be fully subscribed by noon or 1 p.m. tomorrow.”

The problem is that Musk is still referring to Autopilot as full self-driving capability when it, in fact, remains at best Level 2 with enhancements only likely to shift it to a Level 3 still requiring driver re-engagement with the driving task. This matters because the growing range of Level 2 systems – steadily advancing the capabilities of adaptive cruise control with lane keeping etc. – all appear to perform slightly differently and consumer confusion can be fatal or at least dangerous.

There is the now-infamous video of the Volvo customer at a dealership being run over by an XC60 with City Safety because a) the driver accelerated and b) the system did not have pedestrian detection. Subaru with EyeSight and Nissan’s ProPILOT are similar enhancements but with slight variations in performance. Daimler and Audi are also in the game.

GM’s Super Cruise goes all out with enhanced vehicle positioning technology from Ushr, Swift and Trimble; location data updates via wireless connections; and a driver monitor from Seeing Machines. All of these systems are Level 2, but only the GM system monitors the driver while also integrating enhanced localization.

Musk has shown he can make cars disappear and yo-yo his stock price to the dismay of short sellers and regulators. What is missing from the Tesla high wire act, though, is some candor regarding the current capabilities and long-term expectations for Autopilot.

It is worth noting the value of automated driving data imputed by Musk’s email. Musk has put a marker on data monetization at $20/hour of automated driving – but that’s probably a lowball estimate. That data is priceless to an organization that has none. Investors, meanwhile, can run that against the millions of hours of such data gathered by Cruise Automation, Waymo and others as a validation of their high-flying valuations. Thanks, Elon, but it’s still not “Autopilot.”
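For readers who want to check the $20/hour figure, here is a minimal sketch using only the numbers Bloomberg reported. Reading the offer as waiving both the self-driving fee and the interior upgrade is an assumption on my part, and it is what produces the higher bound.

```python
# Implied value of one hour of driving feedback, from the reported email.

FSD_PRICE = 8_000         # full self-driving option, USD (from the report)
INTERIOR_PRICE = 5_000    # premium interior, USD (from the report)
HOURS_RANGE = (300, 400)  # hours of driving feedback requested


def dollars_per_hour(benefit, hours):
    """Benefit received divided by hours of feedback supplied."""
    return benefit / hours


# The $20/hour marker: the self-driving saving alone over the full 400 hours.
low = dollars_per_hour(FSD_PRICE, max(HOURS_RANGE))

# Upper bound, assuming both perks are waived and only 300 hours are logged.
high = dollars_per_hour(FSD_PRICE + INTERIOR_PRICE, min(HOURS_RANGE))

print(f"${low:.2f} to ${high:.2f} per hour")
```

On these inputs the implied price ranges from $20 to a little over $43 per hour, which supports the view that $20 is a floor rather than a fair value.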

ARM Turns up the Heat in Infrastructure

I don’t know if it was just me but I left TechCon 2017 feeling, well, uninspired. Not that they didn’t put on a good show with lots of announcements, but it felt workman-like. From anyone else it would have been a great show, but this is TechCon. I expect to leave with my mind blown in some manner and it wasn’t. I wondered if the SoftBank acquisition had knocked them a little off their game.

This year they seem to have got their mojo back, at least judging by a press announcement I joined before the show. ARM is again swinging for the fences, this time in announcing a major initiative for cloud to edge infrastructure support and a new processor roadmap to support that direction. Of course ARM is already well-known in the edge but they’re also deeply embedded in base-stations, top of rack switches, gateways and WAN routers. In fact ARM claims the largest market share in units for processor IP in infrastructure (I’m sure intended as a reminder that RISC-V is still a toddler in markets ARM already dominates).

They’re also starting to have some impact in the server space (the cloud in its various manifestations), though another person on the call asked if that initiative is struggling given e.g. Qualcomm’s exit. Drew Henry, the speaker and VP/GM for the Infrastructure BU, acknowledged the QCOM change but said there will be announcements at TechCon on new server entrants which apparently will demonstrate ample continuing momentum.

So what’s the big deal with infrastructure? ARM anticipates significant growth in this area given their expectation of a trillion devices in the IoT. Smart parking and city lighting, retail, transportation and many other applications will create many wireless edge nodes needing to communicate ultimately with the cloud. And that will require layers of intelligent data reduction and traffic management between those levels; mega-servers and 5G alone won’t be enough to manage the data volume.

So ARM has announced NEOVERSE, which diverges from the Cortex world. NEOVERSE is a brand, we were told, and it includes technologies and services that partners will bring to the space. ARM’s contribution under this brand starts with high-performance secure IP and architectures. Drew showed us a roadmap for this IP, starting with the Cosmos platform, related to the A72 and A75 and available today in 16nm. The next step up will be the Ares platform, available in 2019 at 7nm. Following Ares, in 2020 we’ll get the Zeus platform in 7nm+, and in 2021 we’ll see the Poseidon platform at 5nm. Drew expects about 30% improvement per generation in performance and features.
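To get a feel for what 30% per generation adds up to across this roadmap, here is a minimal sketch. Treating the 30% as a pure performance multiplier that compounds from one platform to the next is my simplifying assumption; the announcement bundled performance and features together.

```python
# Compounding the quoted ~30% per-generation gain across the roadmap.

GAIN_PER_GENERATION = 0.30  # rough expectation quoted in the briefing

# (platform, availability, process node) as listed in the roadmap
ROADMAP = [
    ("Cosmos", "today", "16nm"),
    ("Ares", "2019", "7nm"),
    ("Zeus", "2020", "7nm+"),
    ("Poseidon", "2021", "5nm"),
]


def relative_performance(generations, gain=GAIN_PER_GENERATION):
    """Performance relative to the starting platform after N generations."""
    return (1 + gain) ** generations


for step, (name, when, node) in enumerate(ROADMAP):
    print(f"{name:<9} {when:<6} {node:<5} "
          f"{relative_performance(step):.2f}x Cosmos")
```

Under that assumption, three generations of 30% gains put Poseidon at roughly 2.2 times Cosmos.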

The next component of NEOVERSE is a wide range of solutions and, of course, an extensive ecosystem. The solutions don’t look too dissimilar from what you know around standard ARM offerings, though perhaps with more networking options and multiple accelerators, from ML and embedded FPGA to video. In the ecosystem they already have endorsements from multiple silicon and EDA providers, most of the US and Chinese cloud providers (I didn’t notice Google), big systems names like Ericsson, Nokia, Huawei and Cisco, and Sprint, Orange and Vodafone among others in operators.

In OS they include Red Hat, SUSE and Oracle; in container/virtualization they have Docker, OpenStack, VMware, etc. In language and library support they have endorsements from OpenJDK, Python, Node.js and Go; in devtools they listed Codefresh, Shippable and more. Finally, in open source projects for networking and server they include Linaro (naturally), LF Networking and Cloud Native Computing Foundation. Apparently some of this work is already underway, e.g. Ares is starting to appear in Linux builds. Overall not a bad starting point.

The third component of NEOVERSE is scalability. Naturally the level of solutions you want to see in the cloud will be different from what you would expect in connectivity, or the fog, or near the edge. In the cloud you want TBps and lots of cache, handling datacenter workloads. In networking, storage and security you still need performance but not at the same scale and you need the hardware features required to support workloads like NFV, SDN, IPSec and compression. At the edge, or close, demands are not nearly as challenging, though still possibly requiring support for virtualization and certainly support for wireless and/or wired upload.

This feels like more than just another arrow in ARM’s quiver. It’s an additional quiver with arrows crafted for a very distinct target, again with strong ecosystem support, which should help them further expand the gap between ARM and alternative solutions. At least I’m sure that’s how ARM sees it.

Advanced Materials and New Architectures for AI Applications

Over the past 50 years in our industry, there have been three invariant principles:

- Moore’s Law drives the pace of Si technology scaling

- system memory utilizes MOS devices (for SRAM and DRAM)

- computation relies upon the “von Neumann” architecture


Does the G in GDDR6 stand for Goldilocks?

In the wake of TSMC’s recent Open Innovation Platform event, I spoke to Frank Ferro, Senior Director of Product Management at Rambus. His presentation on advanced memory interfaces for high-performance systems helped to shed some light on the evolution of system memory for leading edge applications. System implementers now have to choose between a variety of memory options, each with their own pros and cons. These include HBM, DDR and GDDR. Finding the right choices for a given design depends on many factors.

There is a trend away from classic von Neumann computing, where a central processing unit sequentially processes instructions that act on data fetched and returned to memory. While bandwidth is always an issue, for this kind of computing latency became the biggest bottleneck. The frequent non-sequential nature of the data access exacerbated this problem. That said, caching schemes helped this issue significantly. The evolution of DDR memory was driven by these needs – low latency, decent bandwidth, low cost.

Concurrent with this, the world of GPUs faced different requirements and developed their own flavor of memory that had much higher bandwidth – to accommodate the intensive needs of GPUs to move massive amounts of data for parallel operations.

HBM came along as an elegant way to gain even higher bandwidth, but with a more complex physical implementation. HBM uses an interposer to include memory stacks in the same package as the SOC. HBM wins in bandwidth and low power. However, this comes at a higher cost and more difficult implementation, which are serious constraints for system designers.

Let’s dive into the forces driving leading edge system design. According to Frank, with the exponential explosion of data creation, data centers are being pushed harder than ever to keep up with processing needs. Rambus also sees AI and automotive as big factors changing the requirements for systems. Traditional process scaling is slowing down and cannot be depended on to deliver performance improvements. Architectural changes are needed. One example that came up in my discussion with Frank was how moving processing closer to the edge, to aggregate and reduce the volume of data, is helping. It is estimated that ADAS applications will demand 512 GB/s to 1000 GB/s to support Level 3 and 4 autonomous driving.

One of the major thrusts of his TSMC OIP talk is that GDDR is in a Goldilocks zone for meeting these new needs. It is a proven, cost-effective technology and has very good bandwidth – which is especially important for the kinds of parallel processing needed by AI applications. It also has good power efficiency. He cited the performance of the Rambus GDDR6 PHY, which delivers 64 GB/s of bandwidth at pin rates of 16 Gb/s. Their PHY supports 2 independent channels and presents a DFI style interface to the memory controller.
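The quoted PHY figure and the ADAS requirement mentioned earlier can be tied together with some quick arithmetic. The pin rate and channel count come from the text; the 16-bit channel width is an assumption based on the standard GDDR6 device organization, not something Frank stated, and the device counts ignore real-world efficiency losses.

```python
import math

# From the text
PIN_RATE_GBPS = 16             # Gb/s per data pin
CHANNELS = 2                   # independent channels per PHY
ADAS_TARGETS_GBS = (512, 1000) # GB/s estimated for Level 3 and 4 driving

# Assumption (not from the text): each GDDR6 channel is 16 bits wide.
BITS_PER_CHANNEL = 16


def bandwidth_gbytes_per_s(pin_rate_gbps, channels, bits_per_channel):
    """Peak bandwidth in GB/s: pin rate x total data pins / 8 bits per byte."""
    return pin_rate_gbps * channels * bits_per_channel / 8


def devices_needed(target_gbs, per_device_gbs):
    """Smallest whole number of devices whose peak bandwidth meets the target."""
    return math.ceil(target_gbs / per_device_gbs)


per_device = bandwidth_gbytes_per_s(PIN_RATE_GBPS, CHANNELS, BITS_PER_CHANNEL)
print(f"Peak bandwidth per device: {per_device:.0f} GB/s")

for target in ADAS_TARGETS_GBS:
    count = devices_needed(target, per_device)
    print(f"{target} GB/s needs at least {count} devices")
```

With a 32-bit total interface the numbers reproduce the 64 GB/s figure, and the ADAS range works out to somewhere between 8 and 16 such interfaces at peak rates.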

With their long experience in high speed interface design, Rambus is able to offer unique tools to their customers to help determine the optimal memory configurations for their designs. The Rambus tools also can help with design, bring up and validation of interface IP, such as the GDDR6 PHY.

Frank mentioned that they have an e-book available online that talks about how GDDR is evolving to meet the needs of autonomous vehicles and data centers. It has some great background information on these applications and also offers insights into how GDDR is advancing in performance in 2018 with GDDR6. The e-book is available for download on their site.

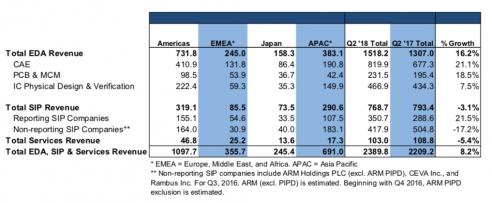

Who is Responsible for SIP Revenue Decline in Q2 2018?

According to ESDA, EDA revenues grew 16.2% YoY in Q2 2018, and this is the good news for our industry. The bad news is that SIP (Design IP) revenues declined by 3.1% over the same period. As far as I am concerned, this figure looks weird, so I will try to understand how the SIP category can go wrong in a healthy EDA market, which indicates a growing number of design starts.


Avionics and Embedded FPGA IP

The design of electronic systems for aerospace applications shares many of the same constraints as apply to consumer products – e.g., cost (including NRE), power dissipation, size, time-to-market. Both market segments are driven to leverage the integration benefits of process scaling.


The Shape of Semiconductor Things to Come

Given that the semiconductor industry is clearly in the midst of a down cycle (even though there are cycle deniers, also members of the flat earth society…), most investors and industry participants want to know the timing of the down cycle and the shape of the recovery, as we want to know when it’s safe to buy the stocks again. We also want to try to predict the ramp rate of the recovery so as to value the growth of the industry and the stocks.


Technology Behind the Chip

Tom Dillinger and I attended the Silvaco SURGE 2018 event in Silicon Valley last week with several hundred of our semiconductor brethren. Tom has a couple of blogs ready to go but first let’s talk about the keynote by Silvaco CEO David Dutton. David isn’t your average EDA CEO: he spent the first 8 years of his career at Intel, then more than 18 years at wafer processing equipment company Mattson Technology, including 11 years as their president and CEO.

David started his keynote with semiconductor market drivers (PC, Mobile, IoT, and Automotive) and moved quickly into Artificial Intelligence and Machine Learning, which in my opinion will drive the semiconductor industry across most market segments for years to come.

It is a little frightening if you think about it and obviously I do. Not only will our devices be smarter than we are, they will outnumber us by a very large magnitude. Privacy as we once knew it will be gone in favor of security, automation, and social media obsessions.

Automotive is an easy example. Future cars will be very much silicon based with limited human interaction. I remember waiting impatiently for my 16th birthday so I could drive legally. I got my pilot’s license when I turned 20, which was an even greater thrill. My grandchildren will have thought-controlled flying cars, so what will they look forward to when they turn 16?

David did a nice review of the technical challenges for the semiconductor industry then moved to more detail about Silvaco and the transformation they have made over the last four years. We have worked with Silvaco since 2013 starting with the blog A Brief History of Silvaco. Since then we have published 62 Silvaco blogs that have been read close to 300,000 times so we have had a front row seat for this amazing transformation.

The slide deck is located HERE. It is 38 slides (24.8MB) but it is a very quick read and does a very nice job summing up Silvaco and the chip challenges they address. Here is the summary slide for those who need more motivation to download the presentation or are on a mobile device:

- Global EDA Leader driving growth to provide value to our customers in Advanced IC, Display, Power and AMS

- Provider delivering complete smart silicon solutions for predictive and comprehensive design work before applying $$$ to Silicon

- Utilizing acquisitions combined with organic development to drive high growth rate

- Custom CAD supports fabless community across many foundries

- Strong IP division with leading automotive IP, Processor Platforms and unique Fingerprint tools

- Financially strong driving double digit growth from the balance sheet

- Supporting the industry ecosystem

I attend more conferences than most and have organized a few, so for what it is worth here are a couple of suggestions. The conference itself was pretty well done, but I would definitely serve lunch. And if you want to attract the engineering crowd, have tables in the lunchroom for all of your products or market segments and have them staffed with your field support people (AE/FAEs). Keynotes and breakout sessions are nice, marketing people are entertaining, but in my opinion the face-to-face interactions with current and future customers should be left to the people who do the real work.