The second keynote at Mentor’s U2U this year was given by Hooman Moshar, VP of Engineering at Broadcom, on the always (these days) important topic of design for power. This is one of my favorite areas. I have, I think, a decent theoretical background in the topic, but I definitely need a periodic refresh on the ground reality from the people who are actually designing these devices. Hooman provided a lot of insight in this keynote.

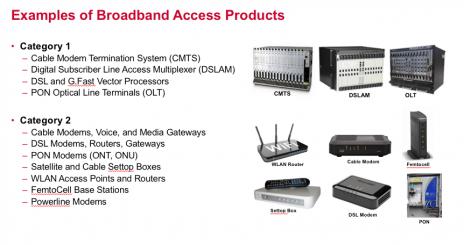

He set the stage by defining categories for power. Category 1 is high end, high performance, high margin where power is managed as needed at any cost. Category 2 is mid-range, good performance but under margin pressure, where power is critical to system cost. Category 3 is low end, lower performance and low margin where power is critical to system operation (e.g. battery/system lifetime). In his talk, Hooman focused mostly on Category 2 devices. He covered a lot of background on power and the challenges, which I won’t try to cover in depth here; just a few areas that particularly struck me.

First, some general system observations. He said that for Broadcom, thermal design at the system level is obviously not under their control and customers are often not very sophisticated in managing thermal, so Broadcom winds up shouldering much of the burden of making solutions work in spite of the customers. Given this, integration is not a panacea in part because of power. Multiple smaller packages can be cheaper both in manufacturing and in total thermal management cost.

In power estimation at initial planning/architecture, it was interesting to hear that this is still very much dominated by Spice modeling, spreadsheets and scaling. He said they have evaluated various proposed solutions over the years but have never found enough accuracy to justify switching from their existing flows, which I don’t find very surprising. If you can carry across use-cases and measure power stats from an earlier generation device and scale parasitics, you ought to get better estimates from a spreadsheet than you could when estimating parasitics, use-cases and power scaling in a tool. At RTL they use PowerPro (from Mentor, not a surprise) and have determined that this, like competing tools, typically can get to within 20% of gate-level signoff power estimates.
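To make the spreadsheet-and-scaling idea concrete, here is a minimal sketch in Python. It is not Broadcom's flow (the keynote gave no such detail). It only assumes the textbook relation that dynamic power is proportional to activity × switched capacitance × V² × f, and every name and number in it (`Block`, `scale_dynamic_power`, the block figures) is hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Block:
    """One row of a hypothetical planning spreadsheet."""
    name: str
    measured_dynamic_mw: float   # measured on the earlier-generation device
    cap_scale: float             # assumed new/old switched-capacitance ratio
    activity_scale: float = 1.0  # assumed new/old toggle-activity ratio


def scale_dynamic_power(block, v_old, v_new, f_old, f_new):
    """Scale measured dynamic power using P ~ activity * C * V^2 * f."""
    return (block.measured_dynamic_mw
            * block.activity_scale
            * block.cap_scale
            * (v_new / v_old) ** 2
            * (f_new / f_old))


if __name__ == "__main__":
    # Illustrative numbers only, not taken from the talk.
    blocks = [
        Block("datapath", measured_dynamic_mw=120.0, cap_scale=0.6),
        Block("clock_tree", measured_dynamic_mw=80.0, cap_scale=0.7),
    ]
    total = sum(
        scale_dynamic_power(b, v_old=1.0, v_new=0.9, f_old=1.0e9, f_new=1.2e9)
        for b in blocks
    )
    print(f"Estimated dynamic power on the new node: {total:.1f} mW")
```

The appeal of this style of estimate is that the activity and measured power come from real silicon running real use-cases, so only the scaling ratios are guesses.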

For power management, they use a lot of the standard techniques: mixed Vt libraries, clock gating both at the leaf-level and in the tree (since clock tree power is significant), frequency scaling and power gating. He also mentioned keeping junction temperatures down to 110°C (he didn’t say how, perhaps through adaptive voltage scaling?), which lowers leakage power and also improves timing, EM and long-term reliability. They also like AVS as a way to reduce power in the FF corner. Hooman touched on FinFET technologies; I have the impression they are more cautious than the mobile guys, since for them the area/cost tradeoff is not always a win, though reduced (leakage) power can still provide an advantage in integration.

He talked about some additional techniques for power reduction, such as use of min-power designware, e.g. in a datapath with many multipliers or in a block instantiated many times in an SoC. Another technique he likes is XOR self-gating – stopping the clock on a flop in absence of a toggle on the data input (need to be careful with this one at clock domain crossings).
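As a rough behavioral illustration of XOR self-gating (a sketch of the general idea, not of any particular tool's implementation), the flop only receives a clock pulse when its data input differs from its current output. The function name and the sample bit stream below are my own, purely for illustration.

```python
def simulate_self_gated_flop(data, q_init=0):
    """Model a flop whose clock is enabled only when D != Q.

    `data` is a sequence of 0/1 values sampled once per clock cycle.
    Returns (q_trace, clock_pulses), where clock_pulses counts the cycles
    in which the gated clock actually reached the flop.
    """
    q = q_init
    q_trace = []
    clock_pulses = 0
    for d in data:
        enable = d ^ q          # XOR of data input and current output
        if enable:
            q = d               # flop captures the new value
            clock_pulses += 1   # clock toggles only on this cycle
        q_trace.append(q)
    return q_trace, clock_pulses


if __name__ == "__main__":
    stream = [0, 0, 1, 1, 1, 0, 1, 1]
    q_trace, pulses = simulate_self_gated_flop(stream)
    print(f"output: {q_trace}")
    print(f"clock pulses: {pulses} of {len(stream)} cycles")
```

The output sequence is identical to what an ungated flop would produce; the saving is in the clock pulses suppressed on cycles where the data does not change, so the benefit depends on how infrequently the data toggles.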

Looking at challenges they still face, he said that active power management is still a bigger concern for them than leakage. The challenge (at RTL) is in estimation, particularly in estimating the power in the synthesized clock tree since the detailed tree obviously doesn’t exist yet at this stage. He acknowledged that all tools have methods to manage this error and to tune estimates in general (e.g. by leveraging parasitic estimates from legacy designs).

Hooman talked about challenges in getting useful toggle coverage early for reasonably accurate dynamic power estimation. RTL power estimates lack absolute accuracy (they’re better for relative estimates), while gate-level sims are more accurate but take too long and are available too late in the schedule. He said they should be using emulation more but still see challenges with that flow. One is differences between the way combinatorial logic and memories are modeled in the emulator and in design logic – this he feels requires some improvisation in estimation. Another is that you still need to connect all that other scaling/estimate stuff (parasitics etc) with emulation-based power estimation. I would guess this part is primarily a product limitation which will be fixed in time (?).

Overall, good insights, especially on the state of the art in mid-range power management. No big surprises and, perhaps as usual, design reality may not be quite as far along as the marketing pitches. I’m sure it will eventually catch up.
