The idea of a digital twin is simple enough. You use a digital model of a car, an aircraft or whatever else to test design ideas and prove your design will be robust across a wide range of scenarios before you commit millions of dollars, and lives, to proving out the real thing, as Siemens have done in their PAVE360 platform. There are a couple of challenges in building such a twin. One is how much effort you want to put into faithfully modeling all aspects of the design, bearing in mind that it’s already hard to model whatever you consider to be the most complex aspect of your design. That leaves you to abstract other features with simpler, less accurate approximations.

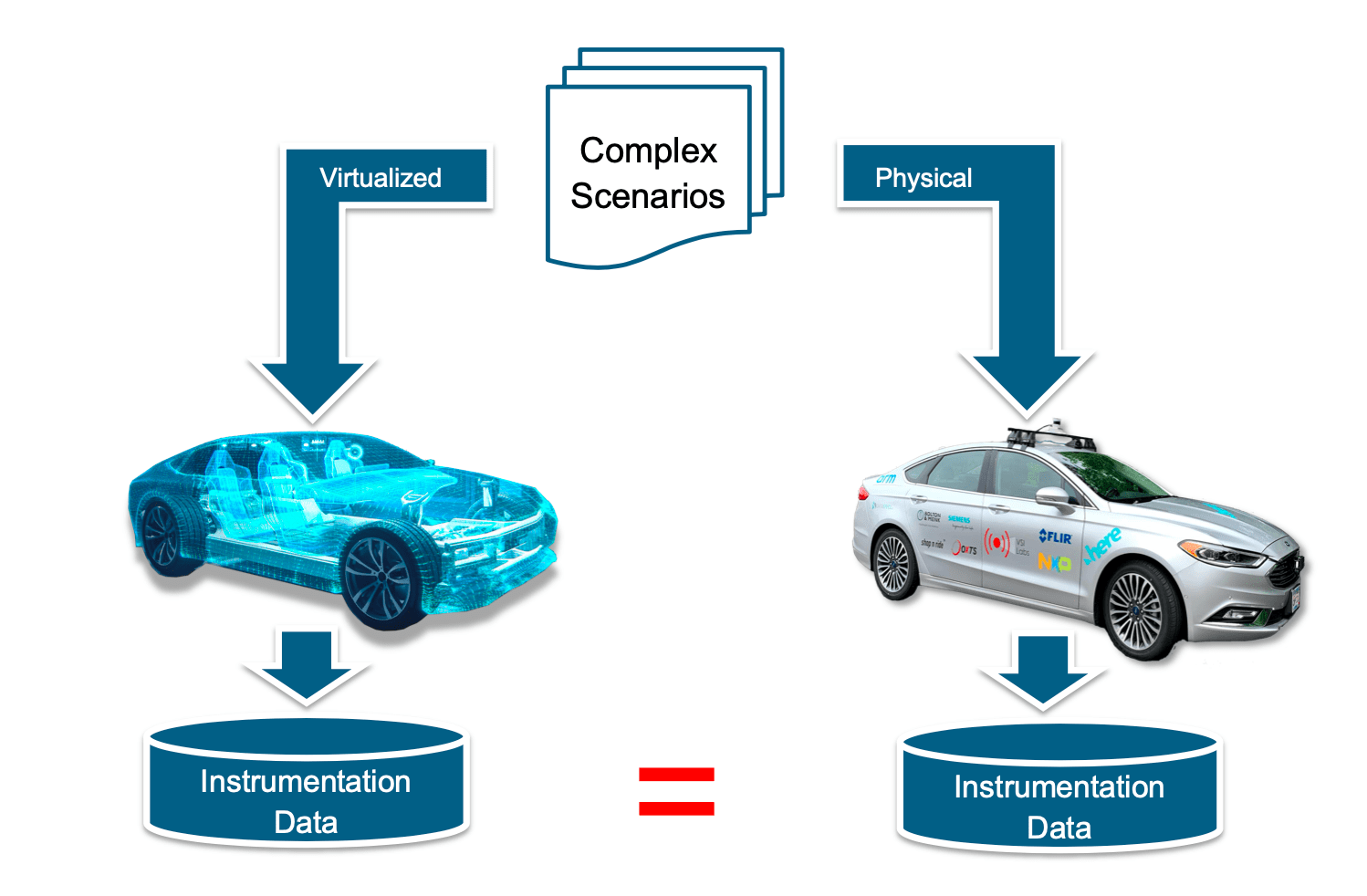

The second problem is calibrating your model to reality. Without that feedback to fine-tune the digital twin, predicted behaviors can be wildly wrong. Behavior you thought you had proved safe in your model may in fact not be safe at all.

Getting to scenario coverage

All of which is very relevant to modeling self-driving cars and advanced ADAS. The range of scenarios these have to cover is mind-boggling. RAND Corporation talk of cars needing to be trained over billions of miles of travel, equivalent to more than 100 years of driving. That is of course impractical, but it also raises the question of how good the coverage from all that driving really is. Was that really 100 years of widely varied experience or one year of experience repeated 100 times? Simulating a digital twin, carefully calibrated to a real car, is a much better approach. You can run an arbitrary number of experiments in parallel, and you can plan for comprehensive coverage, including dangerous scenarios you wouldn’t attempt in a real car.
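To make "planning for comprehensive coverage" a little more concrete, here is a minimal sketch of a scenario sweep. Everything in it is an assumption for illustration: the three scenario axes, their values, and the idealized stopping-distance formula are hypothetical stand-ins, not anything taken from PAVE360. The point is only that a simulated campaign can enumerate every combination once, rather than hoping that road miles happen to hit them.

```python
from itertools import product

G = 9.81  # gravitational acceleration, m/s^2

# Hypothetical scenario axes; a real campaign would have many more dimensions.
SPEEDS_MPS = [15.0, 25.0, 35.0]
FRICTION = [0.3, 0.5, 0.8]        # illustrative wet-to-dry road coefficients
OBSTACLE_M = [30.0, 60.0, 120.0]  # distance to a stopped obstacle


def stopping_distance(speed_mps, mu):
    """Idealized braking distance v^2 / (2 * mu * g), ignoring reaction time."""
    return speed_mps ** 2 / (2.0 * mu * G)


def run_scenario(speed_mps, mu, obstacle_m):
    """Return True if the idealized car stops before reaching the obstacle."""
    return stopping_distance(speed_mps, mu) <= obstacle_m


def sweep():
    """Evaluate every combination exactly once, so no grid cell is skipped."""
    results = {}
    for scenario in product(SPEEDS_MPS, FRICTION, OBSTACLE_M):
        results[scenario] = run_scenario(*scenario)
    return results


results = sweep()
failures = [s for s, ok in results.items() if not ok]
print(f"{len(results)} scenarios, {len(failures)} end in a collision")
```

Because each scenario is independent of the others, a sweep like this could be split across as many machines as are available, which is the practical advantage over accumulating miles in a physical car.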

Calibrating the model

That addresses the volume of testing and coverage questions, but what about calibration? For that you need an autonomous car, tricked out with all the sensors, actuators and software necessary for that purpose. VSI Labs has developed an autonomous Ford Fusion testbed with extensive capability: Ouster LIDAR, FLIR thermal imaging and OxTS inertial navigation; HERE HD maps and Dataspeed by-wire control (throttle, steering, brake); NXP and Arm for localization, detection/recognition and planning; Nira Dynamics for road friction modeling and Aptiv for radar detection and tracking; and Bolton and Menk for signal phase and timing, plus Leopard Imaging cameras.

Siemens PAVE360

And finally, the testbed uses Siemens for digital twin modeling through their PAVE360 platform. Siemens and VSI can correlate instrumentation data from the real car with what they see in the digital twin. This provides the calibration they need to ensure the digital twin remains faithful over a subset of scenarios, while still allowing the twin to explore a much wider range of scenarios in a realistic time.
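As a rough illustration of what calibrating a twin against instrumentation data can look like, the sketch below fits a single model parameter (a road friction coefficient) so that an idealized braking model reproduces a set of stopping distances. This is not the Siemens or VSI procedure, which the source does not describe. The model, the function names and the data are all hypothetical, and the "track" data is generated synthetically inside the example rather than measured.

```python
G = 9.81  # gravitational acceleration, m/s^2


def twin_stop_distance(speed_mps, mu):
    """Idealized twin model: braking distance v^2 / (2 * mu * g)."""
    return speed_mps ** 2 / (2.0 * mu * G)


def fit_friction(speeds_mps, measured_stop_m):
    """Least-squares fit of mu in d = v^2 / (2 * mu * g).

    The model is linear in k = 1 / (2 * mu * g), so the best k has the
    closed form sum(d * v^2) / sum(v^4); mu is then recovered from k.
    """
    num = sum(d * v ** 2 for v, d in zip(speeds_mps, measured_stop_m))
    den = sum(v ** 4 for v in speeds_mps)
    k = num / den
    return 1.0 / (2.0 * k * G)


# Synthetic "track" data generated here with mu = 0.6; not real measurements.
speeds = [10.0, 20.0, 30.0]
measured = [twin_stop_distance(v, 0.6) for v in speeds]

mu_hat = fit_friction(speeds, measured)
residuals = [d - twin_stop_distance(v, mu_hat) for v, d in zip(speeds, measured)]
print(f"fitted mu = {mu_hat:.3f}")
```

Once the fitted parameter reproduces the measured subset of scenarios, the same model can be run over scenarios that were never driven, which is the trade the paragraph above describes.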

David Fritz (Sr Dir for autonomous and ADAS SoCs at Siemens PLM) told me about a demo they ran at CES which attracted a lot of attention. The twin was modeling an autonomous car driving itself around a curve, at which point it detected a semi-truck stopped immediately ahead, calling for some fairly aggressive braking. It hit the brakes, but there was a water puddle on the left side of the road. With the model accounting for the difference in friction between right and left and for the weight distribution in the car, the car fishtailed.

Naturally at the same time another car came from behind, planning to drive past the first car (which should have turned safely out of the way). The cars collided. You can’t model that sort of scenario in an idealized digital twin. That takes comprehensive modeling, careful calibration and the ability to model across scenarios you wouldn’t want to try in the real world.
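The physics behind the fishtail in that demo can be sketched with a back-of-the-envelope calculation. When the wheels on one side have less grip than the other, the two sides cannot brake equally hard, and the imbalance twists the car about its vertical axis. The numbers below (mass, track width, yaw inertia, friction coefficients) are hypothetical, and the sketch assumes an equal left/right load split with both sides braking at their friction limit, so it ignores the weight distribution effects the real demo accounted for.

```python
G = 9.81  # gravitational acceleration, m/s^2

# Hypothetical vehicle and road parameters, for illustration only.
MASS_KG = 1600.0
TRACK_WIDTH_M = 1.6
YAW_INERTIA_KGM2 = 2500.0
MU_LEFT = 0.3   # left wheels in the puddle
MU_RIGHT = 0.8  # right wheels on dry road


def max_brake_force(mu, normal_load_n):
    """Largest braking force the tires on one side can transmit."""
    return mu * normal_load_n


def split_mu_yaw_moment(mass_kg, track_m, mu_left, mu_right):
    """Yaw moment from unequal left/right braking at the friction limit.

    Assumes half the vehicle weight rests on each side. A positive result
    means the car is twisted toward the high-friction (right) side.
    """
    side_load = 0.5 * mass_kg * G
    f_left = max_brake_force(mu_left, side_load)
    f_right = max_brake_force(mu_right, side_load)
    return (f_right - f_left) * (track_m / 2.0)


moment = split_mu_yaw_moment(MASS_KG, TRACK_WIDTH_M, MU_LEFT, MU_RIGHT)
yaw_accel = moment / YAW_INERTIA_KGM2
print(f"yaw moment = {moment:.1f} N*m, yaw acceleration = {yaw_accel:.2f} rad/s^2")
```

An idealized twin that assumes uniform friction would compute a yaw moment of zero here and the car would brake in a straight line, which is why the scenario only shows up once road friction is modeled per side.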

You can learn more about the PAVE360 platform HERE.
