There is a rumor making the rounds that Altera will leave Intel and return to TSMC. Rumors are just rumors, but this one certainly has legs. I will tell you why, and what I would have done if I were Altera CEO John Daane. Altera is a great company, one that I have enjoyed working with over the years, but I really think they made a serious mistake at 14nm. Moving to Intel was not necessarily the mistake; in my opinion, it was how they went about it.

The rumor started here:

“Altera’s recent move [contacting TSMC] is probably due to its worry of the recent Intel’s 14nm process delay causing delay in its new product will let Xilinx win”

China Economic Daily News 12/2/13

It became more real when Rick Whittington, Senior Vice President of Drexel Hamilton, released a downgrade of Intel stock (INTC), from buy to hold, titled “A Business Model in Flux”. There are more than a dozen bullet points, but this one hit home:

While Altera’s use of 14nm manufacturing late this year wasn’t to ramp until mid-late 2015, it has been a trophy win against other foundries

A trophy win indeed. The question is, why did Altera allow itself to be an Intel trophy? After 25 years of working with TSMC and perfecting a design ecosystem and an early-access manufacturing partnership, walking away was like cutting off your legs before a marathon.

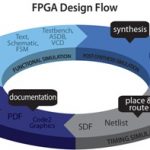

The EDA tools, IP, and methodology for FPGA design and manufacturing are not mainstream, to say the least. FPGAs are a unique application that requires a custom ecosystem, and ecosystems are not built in a day or even a year. They develop over years of experience and partnerships with vendors. Foundries also use FPGAs to ramp new process nodes, which is what TSMC has done with Altera for as long as I can remember. This early access not only gave Altera a head start on design, it also helped tune the TSMC manufacturing process for FPGAs. Will Intel allow this type of FPGA optimization partnership for their “Intel Inside” centric processes? That would be like a flea partnering with a dog, seriously.

What would I have done? Rather than be paraded around like a little girl in a beauty pageant, Altera should have been stealthy and designed for both Intel and TSMC FinFET processes. Seriously, what did Altera REALLY gain from all of the attention of moving to Intel? Remember, TSMC 16nm is in effect 20nm with FinFETs. How hard would it have been to move their 20nm product to TSMC 16nm while developing the required Intel design and IP ecosystem? Xilinx will tape out 16nm exactly one year after 20nm and exactly one year before Altera tapes out Intel 14nm. Remember, Altera gained market share when they beat Xilinx to 40nm by a year or so.

Correct me if I’m wrong here, but this seems to be a major ego fail for Altera. And if the rumor is true, which I hope it is for Altera’s sake, how is Intel going to spin Altera going back to TSMC for a quick FinFET fix?

More Articles by Daniel Nenni…..
