Most analog cells have a power-off mode intended to reduce power consumption. In this mode, all the circuit branches between the supply lines are put in a high-impedance state by driving MOS gates to a blocking voltage. The situation is somewhat similar to that in tri-state digital circuits.

When a branch is put in this high-impedance state, all the nodes in that branch are high impedance too. The concern is that the voltages on these nodes are undefined. More precisely, they are set by leakage currents: some pull the voltage up while others pull it down. Depending on the actual leakage current values, these high-impedance nodes, or weak nodes, can settle at any voltage, normally somewhere between one diode drop below the lower supply line and one diode drop above the upper one.

If such a weak node drives a CMOS inverter input, the inverter can draw a significant current when the weak node voltage happens to be close to the inverter threshold.

Detecting such a situation is important because it may impair production yield or, even worse, show up in the field later on, once some leakage current has drifted. It is not a simple task, however, since leakage currents are not always realistically modeled and, in any case, vary from wafer to wafer and from device to device. Monte Carlo analysis might help, but leakage currents are not always statistically modeled properly.

A feature of most analog simulators such as SPICE derivatives, Eldo, Spectre, and probably others can be used to reveal weak nodes.

This common feature is that the conductance of a circuit branch cannot be lower than a simulator parameter called “gmin”. This parameter often defaults to 1E-12 S but can be changed by the user. The effect is that the simulated circuit has a 1/gmin resistance in parallel with each branch. It is normally intended to ease the first iterations, but these added resistances are not removed once the solution is reached. This is why gmin must be set appropriately with respect to the circuit operating currents in order to limit its impact on the result. We can use this feature to detect weak nodes.
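To get a feel for the orders of magnitude, here is a minimal sketch of the current drawn by one of these added resistances. The 1.8 V supply and the function name are assumptions chosen for illustration; they do not come from any simulator.

```python
# Current through the 1/gmin resistance that the simulator adds across a branch.
def gmin_branch_current(gmin_siemens: float, branch_voltage: float) -> float:
    """Ohm's law for the added shunt: I = gmin * V."""
    return gmin_siemens * branch_voltage


VDD = 1.8  # assumed supply voltage, in volts

for gmin in (1e-15, 1e-12, 1e-9):
    r_shunt = 1.0 / gmin                      # equivalent resistance, in ohms
    i_shunt = gmin_branch_current(gmin, VDD)  # current with VDD across the branch
    print(f"gmin={gmin:.0e} S -> R={r_shunt:.0e} ohm, I={i_shunt:.1e} A")
```

With the usual default, a branch with the full supply across it carries a couple of picoamperes through its shunt, which may be comparable to or larger than the leakage currents of interest in power-off mode.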

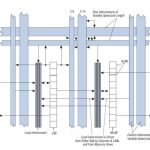

Let’s analyze the following potentially faulty circuit:

To analyze it, let’s simulate the operating point and measure the supply current for various gmin values over a wide range. The following graph was obtained with Eldo for a 180 nm CMOS process:

The curve shows four regions from left to right: a horizontal branch, a steep positive slope, a plateau, and a positive slope. The rightmost positive slope, at high gmin values, results from the current flowing through the 1/gmin resistances in the branches. The leftmost horizontal branch results from leakage currents. The steep slope and the plateau in between, however, result from the weak node effect: when 1/gmin gets low enough, the two branches driving the weak node pull it towards VDD/2, causing simultaneous conduction in both inverter transistors.
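The pull towards VDD/2 can be illustrated with a deliberately crude model. It assumes that the weak node sees one gmin conductance to each supply line, that the net leakage is a constant current, and that the node is clamped exactly at the supply lines (ignoring the diode drop). The leakage value and the function name are made up for illustration.

```python
def weak_node_voltage(gmin: float, i_net: float, vdd: float) -> float:
    """Toy model of a weak node with a gmin shunt to each supply line.

    KCL at the node: gmin*(vdd - v) - gmin*v + i_net = 0
    which gives:     v = vdd/2 + i_net / (2*gmin)
    The result is clamped to [0, vdd] as a crude stand-in for junction clamping.
    """
    v = vdd / 2 + i_net / (2 * gmin)
    return min(max(v, 0.0), vdd)


VDD = 1.8       # assumed supply voltage, in volts
I_NET = -1e-13  # assumed net leakage into the node (pull-up minus pull-down), in amperes

for gmin in (1e-15, 1e-14, 1e-13, 1e-12, 1e-11):
    v = weak_node_voltage(gmin, I_NET, VDD)
    print(f"gmin={gmin:.0e} S -> V(weak node)={v:.3f} V")
```

In this model the node stays at a supply line while gmin is well below |I_NET|/VDD, then moves quickly towards VDD/2 as gmin grows past that value, which is where an inverter driven by the node would start to conduct.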

Now, let’s fix that circuit by driving the weak node to 0 (1 would work too) and run the same analysis again:

Now the curve is normal, with a nearly constant slope of about one decade per decade and a horizontal branch on the left. Note that the default gmin value of 1e-12 is high enough in this case to hide the actual leakage current.

The gmin sweeping method can detect weak nodes through their signature. If a plateau exists for intermediate gmin values and, even more significantly, if a steep slope exists, chances are that weak nodes affect the circuit power-off current. Tracing the currents through the circuit hierarchy then leads you to the location of the issue.
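Looking for this signature can be automated once the supply current has been extracted for each gmin value. The sketch below is only one possible heuristic: the `steep` and `flat` thresholds are arbitrary assumptions, and the two data sets are synthetic numbers invented to mimic the curve shapes described above, not simulation results.

```python
import math


def loglog_slopes(gmin_values, supply_currents):
    """Slope, in decades of current per decade of gmin, between successive points."""
    points = list(zip(gmin_values, supply_currents))
    slopes = []
    for (g0, i0), (g1, i1) in zip(points, points[1:]):
        slopes.append((math.log10(i1) - math.log10(i0))
                      / (math.log10(g1) - math.log10(g0)))
    return slopes


def has_weak_node_signature(gmin_values, supply_currents, steep=1.5, flat=0.2):
    """Return True if the curve has a steep slope, or a plateau after a rise.

    `steep` and `flat` are illustrative thresholds, not calibrated values.
    """
    slopes = loglog_slopes(gmin_values, supply_currents)
    steep_seen = any(s > steep for s in slopes)
    plateau_seen = any(
        s > flat and any(abs(t) < flat for t in slopes[k + 1:])
        for k, s in enumerate(slopes)
    )
    return steep_seen or plateau_seen


# Synthetic curves, one point per decade of gmin (illustration only).
gmins = [10.0 ** e for e in range(-16, -8)]
faulty = [1e-12, 1e-12, 1e-9, 1e-6, 1e-6, 1e-6, 1e-5, 1e-4]
fixed = [1e-12, 1e-12, 1e-12, 1e-11, 1e-10, 1e-9, 1e-8, 1e-7]

print(has_weak_node_signature(gmins, faulty))
print(has_weak_node_signature(gmins, fixed))
```

A check like this only points at a suspicious block; the currents still have to be traced through the hierarchy to find the node itself.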

To check that this method works with your simulator, you can use the faulty circuit above and its fixed version. The method has proved useful in many cases, but there is no guarantee that it will always detect weak nodes, especially in large circuits, where the faulty current can be hidden by the sum of normal leakage currents. This is why I suggest applying it incrementally, to every individual block and then upwards to the circuit top.
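For such a check, the list of gmin values can be generated by a small script. This is a sketch under stated assumptions: the sweep range is arbitrary, the helper names are hypothetical, and the `.option gmin=...` line is the SPICE-style form, which may need adapting to your simulator's own syntax.

```python
def gmin_sweep(start_exp=-16, stop_exp=-6, points_per_decade=2):
    """Log-spaced gmin values from 10**start_exp to 10**stop_exp, inclusive."""
    n = (stop_exp - start_exp) * points_per_decade
    return [10.0 ** (start_exp + k / points_per_decade) for k in range(n + 1)]


def option_line(gmin):
    """SPICE-style option statement (assumed syntax; check your simulator's manual)."""
    return f".option gmin={gmin:.3e}"


# One operating-point run per line; the supply current is read back from each run.
for g in gmin_sweep(-16, -6, 1):
    print(option_line(g))
```

Running the operating point once per generated value on both the faulty and the fixed circuit should show whether your simulator reproduces the two curve shapes.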